AWS VPC 101: What is a VPC, topology, VPC access, and packet flow

AWS VPC 101

Amazon Virtual Private Cloud (Amazon VPC) enables you to launch AWS resources into a virtual network that you’ve defined. This virtual network closely resembles a traditional network that you’d operate in your own data center, with the benefits of using the scalable infrastructure of AWS. In this blog we will learn what a VPC is, how its topology works, how to access a VPC, and how packets flow inside it.

What is an AWS VPC (Virtual Private Cloud)?

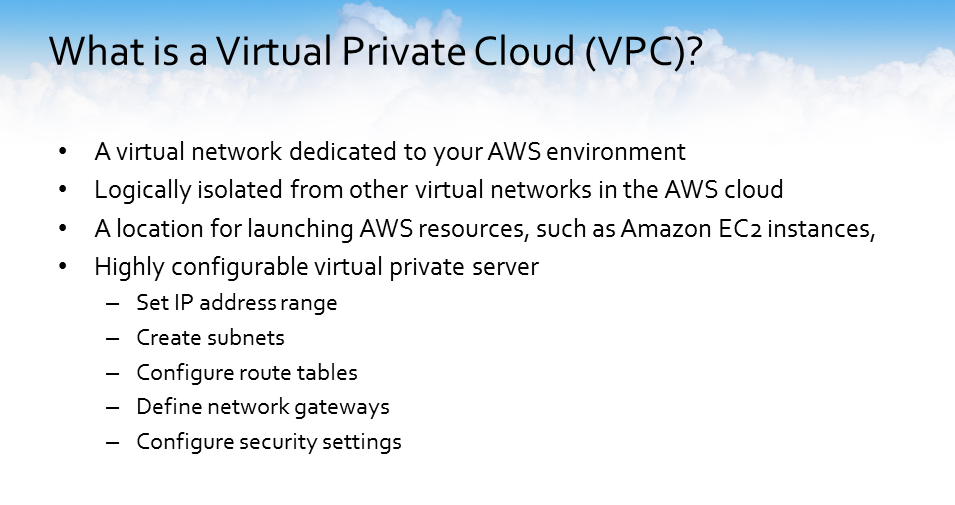

A VPC (Virtual Private Cloud) is a virtual network that’s specific to your environment. An AWS VPC provides you with your own virtual private data center and private network within your AWS account. Once you have created a VPC, you can create resources, such as EC2 instances, in your VPC to keep them logically isolated from other resources in AWS.

A VPC gives you configuration options that allow you to tune your VPC environment. These options include configuring your private IP address ranges, setting up private subnets, controlling routing via route tables, setting up different networking gateways, and applying granular security settings through both network ACLs and security groups.

AWS Virtual Private Cloud Flexibility

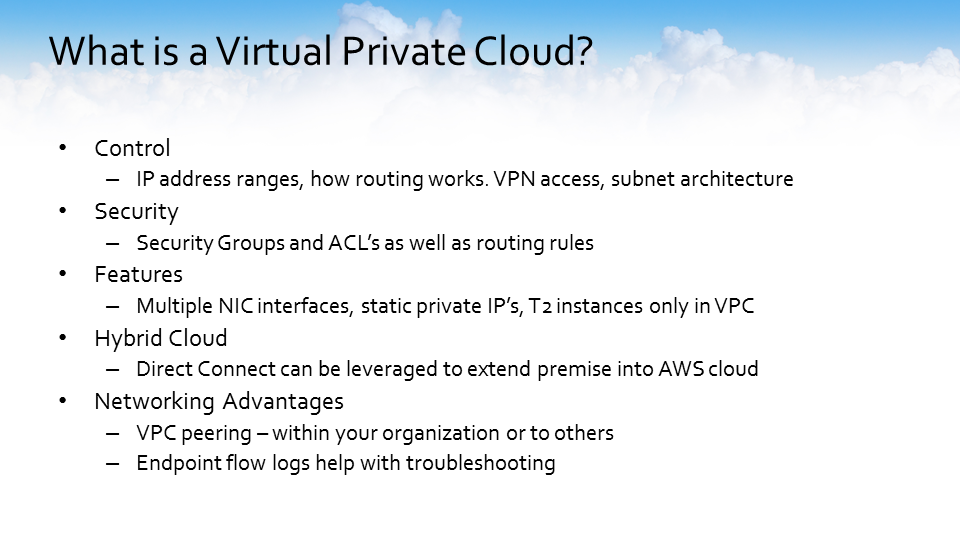

One of the greatest features of the AWS VPC is flexibility. You decide what your IP address range will be, how the VPC routing works, whether or not to allow VPN access, and how the different VPC subnets are architected. You have security options such as security groups and network ACLs, and you can configure specific routing rules and features such as multiple network interfaces per instance, static private IP addresses, and VPC peering.

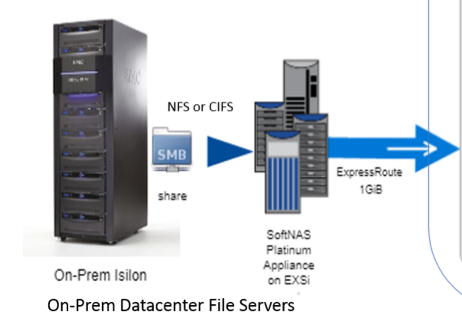

VPCs can be used to create an AWS hybrid cloud through the AWS Direct Connect service. AWS Direct Connect allows you to connect your on-prem resources to the AWS cloud over a high-bandwidth, low-latency connection.

It is possible to connect one VPC to another VPC. Both VPCs may be in the same organization, or you can connect to VPCs in other organizations for specific services. VPC Flow Logs can be enabled to help you troubleshoot connectivity issues to specific services within the VPC itself.

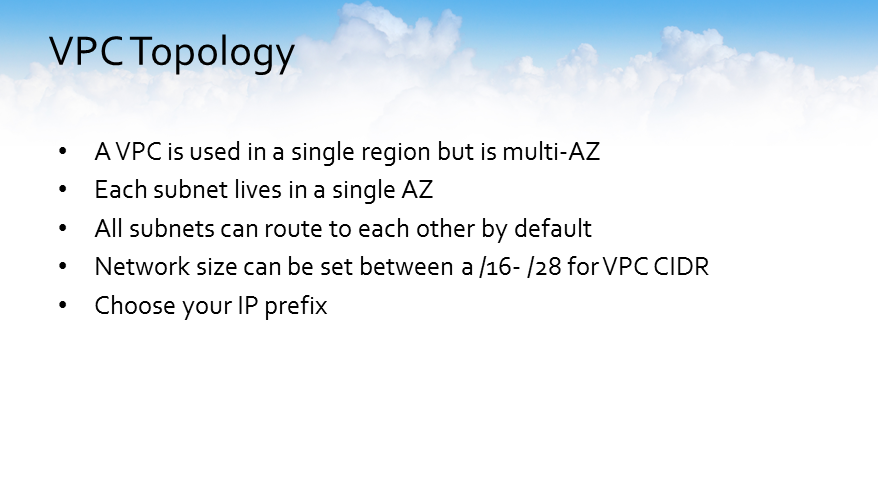

AWS VPC Topology

A VPC lives in a single region, but it spans multiple availability zones. Each subnet you create lives in exactly one availability zone, and different subnets can be placed in different availability zones or all in the same one. All of the subnets that you create within a VPC can route to each other by default.

The overall CIDR (Classless Inter-Domain Routing) block of a single VPC can be anywhere between a /16 and a /28 netmask, and the CIDR of each subnet that you create within the AWS VPC is configurable as well.
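To make the size limits concrete, here is a minimal sketch that uses Python's standard `ipaddress` module to check a candidate VPC CIDR against the /16 to /28 range and report how many addresses it contains. The function name `vpc_cidr_size` and the sample CIDRs are illustrative assumptions, not part of any AWS API.

```python
import ipaddress

# AWS accepts IPv4 VPC CIDR blocks with a prefix length from /16 to /28.
MIN_PREFIX, MAX_PREFIX = 16, 28


def vpc_cidr_size(cidr: str) -> int:
    """Return the number of addresses in a VPC CIDR, or raise if out of range.

    Hypothetical helper for illustration only.
    """
    network = ipaddress.ip_network(cidr, strict=True)
    if not MIN_PREFIX <= network.prefixlen <= MAX_PREFIX:
        raise ValueError(
            f"{cidr}: prefix must be between /{MIN_PREFIX} and /{MAX_PREFIX}"
        )
    return network.num_addresses


print(vpc_cidr_size("10.0.0.0/16"))  # 65536
print(vpc_cidr_size("10.0.0.0/28"))  # 16
```

These are raw address counts. AWS reserves five addresses in every subnet, so the number of addresses you can actually assign to instances is somewhat lower.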

You have the ability to choose your own IP prefix. Whether you want a 10.x network, a 50.x network, or whatever you’d like your private IP addresses to be, the IP prefix is configurable within your AWS VPC network topology.

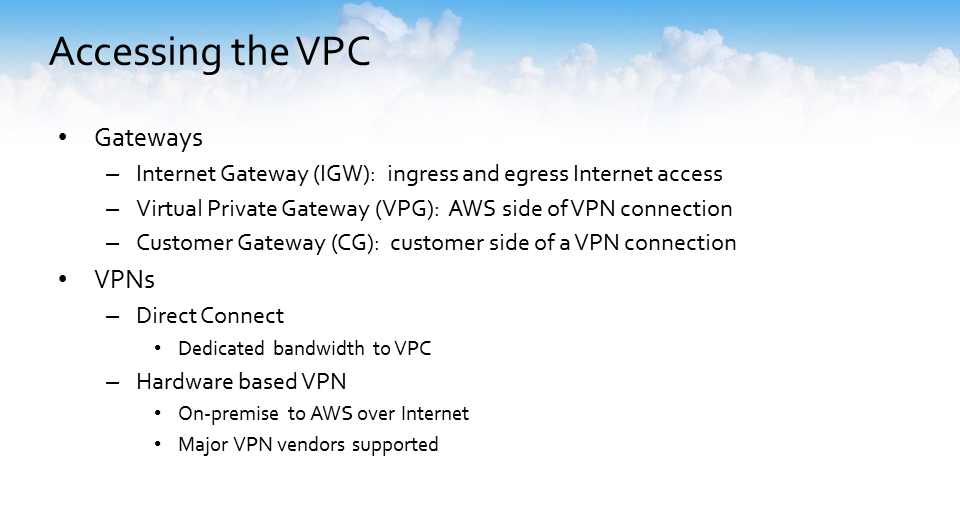

Accessing the AWS VPC

There are several types of gateways to connect into and out of a VPC. One of the questions often asked is: what does each of these gateways do, and how do they work?

The internet gateway lets specific resources within your VPC reach the outside world when you point them at it via route tables. Alternatively, you can route outbound traffic through a NAT (Network Address Translation) instance. You can also enable private access to resources inside the VPC by using a VPN connection or AWS Direct Connect.

There are two parts involved in setting up a VPN to your VPC:

- The VPG (Virtual Private Gateway), which is the AWS side of a VPN connection.

- The customer gateway, which is the customer side of a VPN connection. Most of the major VPN hardware vendors have supported template configurations that can be downloaded directly from the virtual private gateway interface within your VPC via the AWS console.

AWS VPC Packet Flow

How do the packets flow within a Virtual Private Cloud?

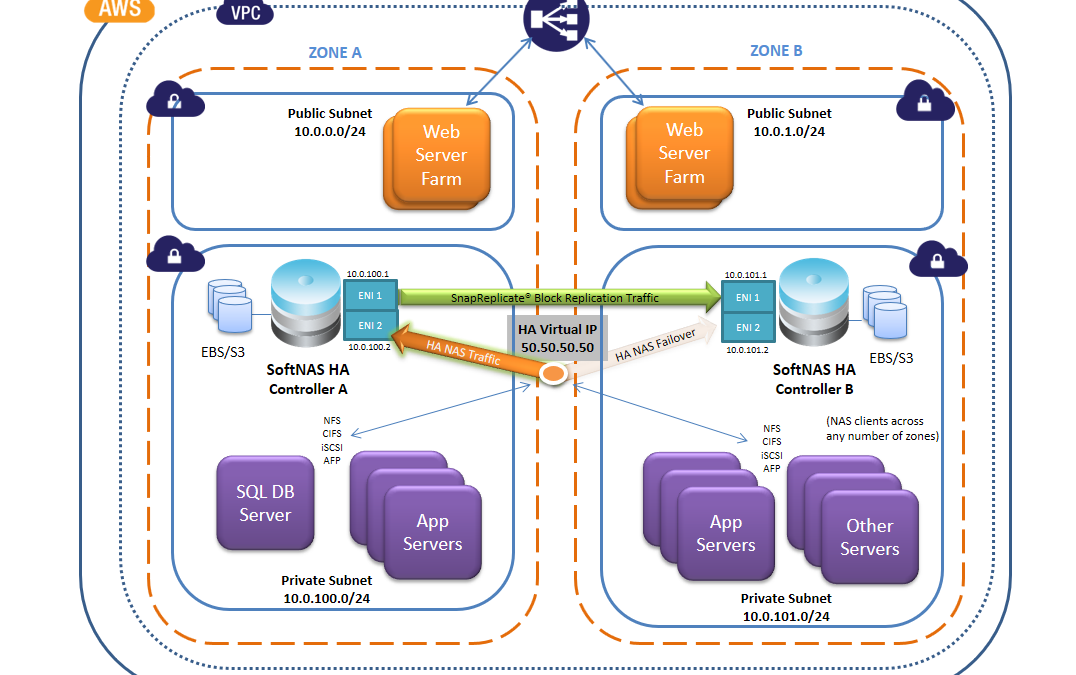

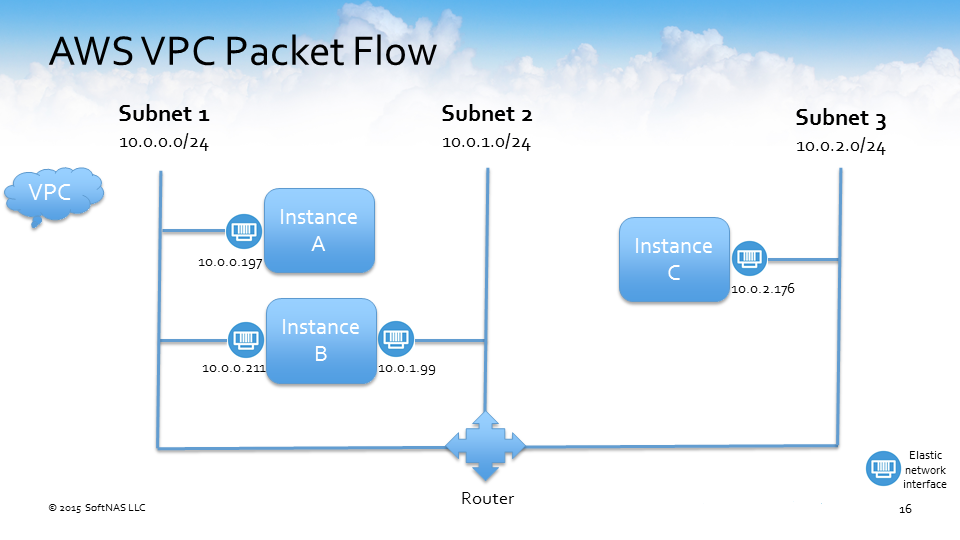

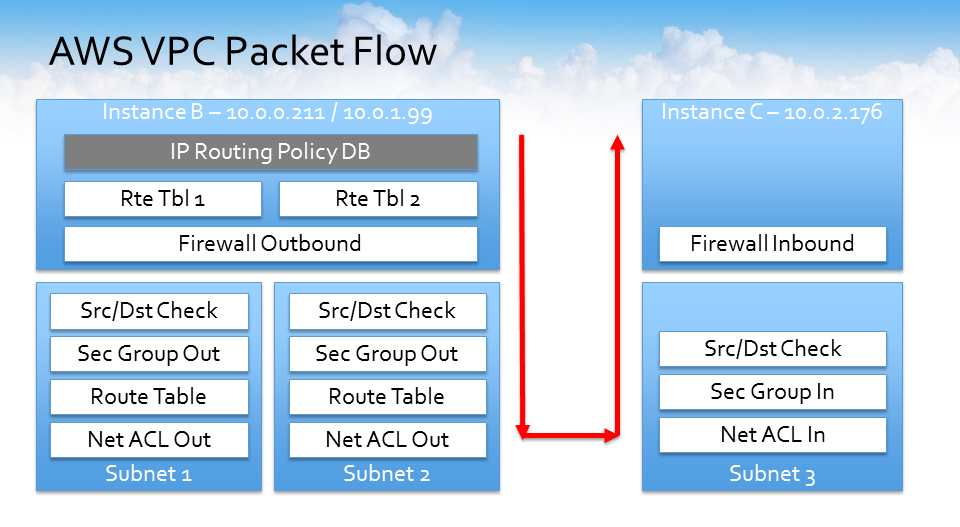

Let’s use the above example setup of an AWS VPC and discuss how the packets will flow.

In this example, we have three subnets:

- 10.0.0.0

- 10.0.1.0

- 10.0.2.0
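The article lists only the base addresses of the three subnets, not their masks. As a sketch, assuming the VPC CIDR is 10.0.0.0/16 and each subnet is a /24 (both are assumptions, not stated in the source), the same three subnets can be derived with Python's standard `ipaddress` module:

```python
import ipaddress

# Assumption: the VPC uses 10.0.0.0/16 and every subnet is a /24.
# The article gives only the subnet base addresses, not the prefix lengths.
vpc = ipaddress.ip_network("10.0.0.0/16")

# Take the first three /24 blocks carved out of the VPC range.
subnets = list(vpc.subnets(new_prefix=24))[:3]

for index, subnet in enumerate(subnets, start=1):
    print(f"subnet {index}: {subnet}")
```

Under those assumptions this prints 10.0.0.0/24, 10.0.1.0/24, and 10.0.2.0/24, matching subnets 1 through 3 in the example.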

We have three instances in the AWS VPC:

- instance A connected to subnet 1 (10.0.0.0)

- instance C connected to subnet 3 (10.0.2.0)

- instance B has two elastic network interfaces, or ENIs, that are connected to two subnets:

  - subnet 1 (10.0.0.0)

  - subnet 2 (10.0.1.0)

AWS VPC Packet Flow (Instance A and Instance B)

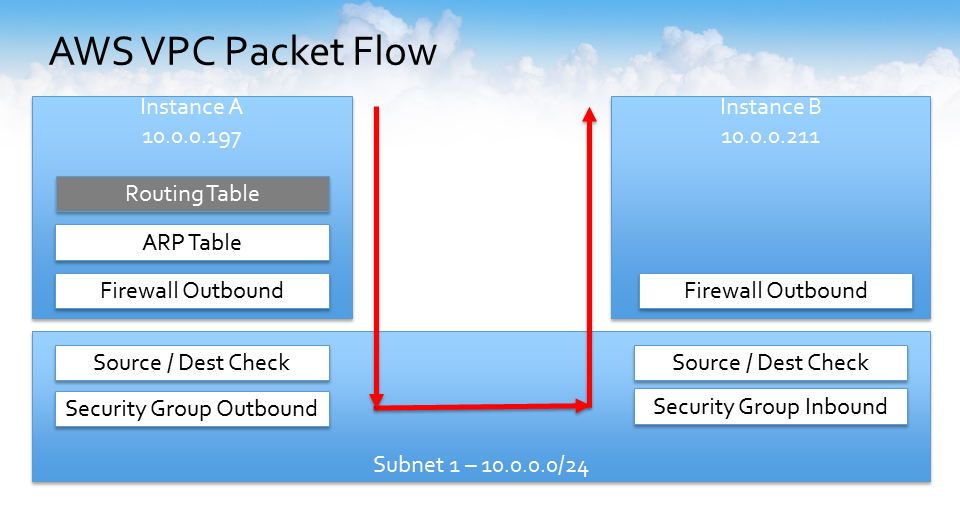

How do instance A and instance B connect to each other over subnet 1?

- Instance A and instance B both live in the same subnet. The routing table is the first check, and it automatically contains a local route that covers all traffic within the overall CIDR of the VPC.

- Next, the packet hits the ARP (Address Resolution Protocol) table and the outbound portion of the firewall, and a source/destination check occurs, which is a configurable option within AWS.

- Then it hits the outbound security group. By default, the outbound security group is wide open: all traffic is allowed out.

- It then crosses to the other instance, where the inbound security group is checked, followed by a second source/destination check, and then it hits the firewall before the packet flows into instance B.
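The route table check in the first step can be sketched as a most-specific-match lookup. This is a simplified model, not AWS code: the VPC CIDR `10.0.0.0/16`, the target name `igw-example`, and the helper `lookup_route` are all hypothetical values chosen for illustration.

```python
import ipaddress
from typing import Dict, Optional

# Hypothetical route table: destination CIDR -> target.
# "local" covers the assumed VPC CIDR; "igw-example" is a placeholder name.
ROUTES: Dict[str, str] = {
    "10.0.0.0/16": "local",
    "0.0.0.0/0": "igw-example",
}


def lookup_route(destination_ip: str, routes: Dict[str, str]) -> Optional[str]:
    """Return the target of the most specific route that matches the address."""
    address = ipaddress.ip_address(destination_ip)
    matches = [
        (ipaddress.ip_network(cidr), target)
        for cidr, target in routes.items()
        if address in ipaddress.ip_network(cidr)
    ]
    if not matches:
        return None
    # The route with the longest prefix (most specific match) wins.
    return max(matches, key=lambda item: item[0].prefixlen)[1]


print(lookup_route("10.0.0.25", ROUTES))  # local
print(lookup_route("8.8.8.8", ROUTES))    # igw-example
```

Because the local route is more specific than the default route, traffic between instance A and instance B stays inside the VPC even when a default route to an internet gateway is present.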

When people run into VPC networking issues, they say things like “I can’t SSH” or “I can’t ping.” Most of these connectivity problems come down to the security groups on the inbound side, because the outbound side is open by default. The inbound side is usually the first place to check when you have a connectivity issue within the AWS VPC: make sure the security group is not blocking the traffic based on, for example, source or destination IP or port number.
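When debugging an inbound rule set, it can help to reason about it as a list of allow rules with an implicit deny. The sketch below is a deliberately simplified model under stated assumptions: it handles only TCP and UDP rules with CIDR sources, ignores ICMP and rules that reference other security groups, and the names `InboundRule` and `is_allowed` are hypothetical.

```python
import ipaddress
from dataclasses import dataclass
from typing import Iterable


@dataclass(frozen=True)
class InboundRule:
    """Simplified inbound allow rule (hypothetical model, TCP/UDP only)."""
    protocol: str     # "tcp" or "udp"
    from_port: int    # first port in the allowed range
    to_port: int      # last port in the allowed range
    source_cidr: str  # allowed source range, e.g. "10.0.0.0/24"


def is_allowed(rules: Iterable[InboundRule], protocol: str,
               port: int, source_ip: str) -> bool:
    """Security groups are allow-only: traffic passes if any rule matches."""
    source = ipaddress.ip_address(source_ip)
    return any(
        rule.protocol == protocol
        and rule.from_port <= port <= rule.to_port
        and source in ipaddress.ip_network(rule.source_cidr)
        for rule in rules
    )


# Assumed example: allow SSH only from subnet 1 (taken here as 10.0.0.0/24).
rules = [InboundRule("tcp", 22, 22, "10.0.0.0/24")]

print(is_allowed(rules, "tcp", 22, "10.0.0.15"))  # True
print(is_allowed(rules, "tcp", 22, "10.0.2.7"))   # False
```

In this model, the second call fails because the source address falls outside the allowed CIDR, which is the kind of mismatch that typically shows up as "I can't SSH."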

AWS VPC Packet Flow (Instance B and Instance C)

How would the packets flow between instances B and C?

Remember, instance B lives in two subnets (subnet 1 and subnet 2), and instance C lives in subnet 3.

If instance B wants to talk to instance C, it can go one of two ways: out of subnet 1 or out of subnet 2. Regardless of which subnet it goes out on, the same rules apply. The packet hits the routing table, then the firewall, the source/destination check, and the outbound security group.

The route table is checked to make sure there is a route to the destination network. Then, because the packet is going to a different subnet, the outbound network ACL is checked. On the receiving side, the inbound network ACL is checked before the inbound security group. In other words, additional checks apply when the instances live in different subnets.

Understanding the above flow can be very useful. Once you understand how the packets flow, where they go, and how everything is checked, you can better troubleshoot VPC network connectivity issues.
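The difference between the two paths can be summarized in a small sketch. It only restates the order of checks described in this post as an ordered list. It is not a model of AWS internals, and the function name `checks_on_path` is hypothetical.

```python
from typing import List


def checks_on_path(source_subnet: str, destination_subnet: str) -> List[str]:
    """List the checks a packet passes, in the order this post describes."""
    outbound = [
        "route table",
        "source/destination check (sender)",
        "security group (outbound)",
    ]
    inbound = [
        "security group (inbound)",
        "source/destination check (receiver)",
    ]
    if source_subnet == destination_subnet:
        # Same subnet: no network ACL is consulted in the flow described above.
        return outbound + inbound
    # Different subnets: network ACLs are checked on the way out and on the
    # way in, and the inbound ACL comes before the inbound security group.
    return outbound + ["network ACL (outbound)", "network ACL (inbound)"] + inbound


for step in checks_on_path("subnet 2", "subnet 3"):
    print(step)
```

Calling `checks_on_path("subnet 1", "subnet 1")` returns the shorter list without the two network ACL entries, which mirrors the instance A to instance B case.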

Once you grasp the VPC networking concepts in this blog, we suggest you have a look at our “AWS VPC Best Practices” blog post. In it, we share a detailed look at best practices for the configuration of an AWS VPC and common VPC configuration errors.

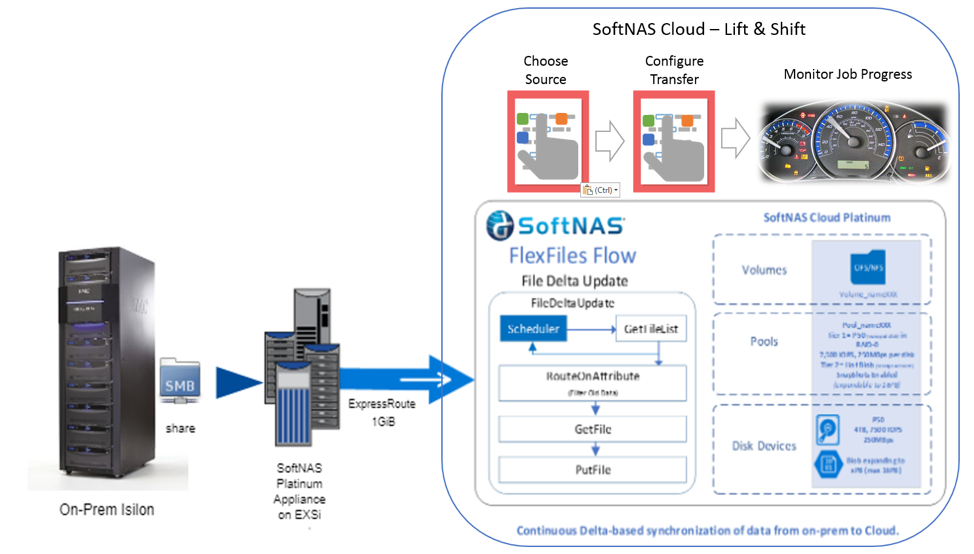

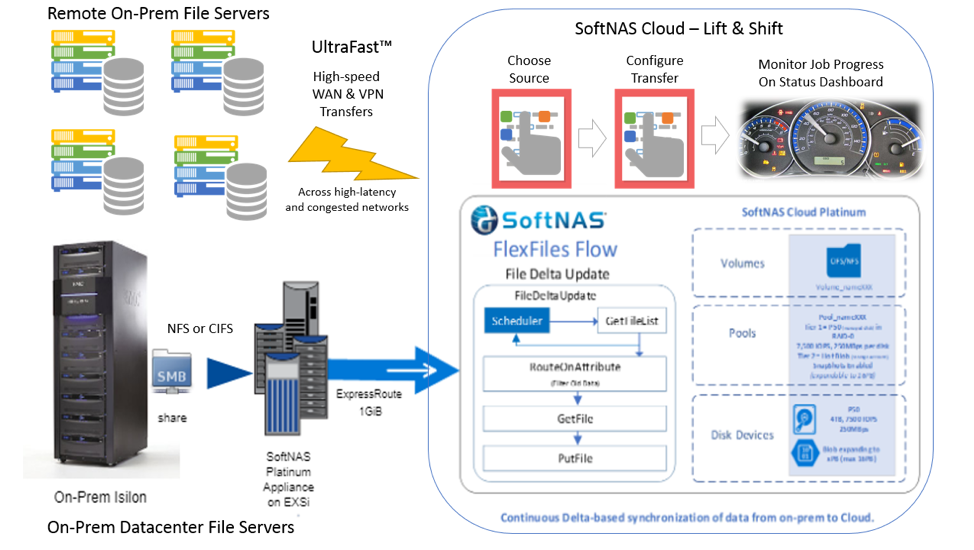

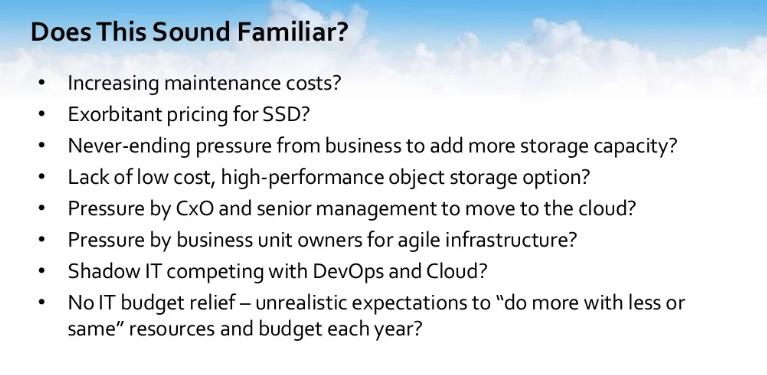

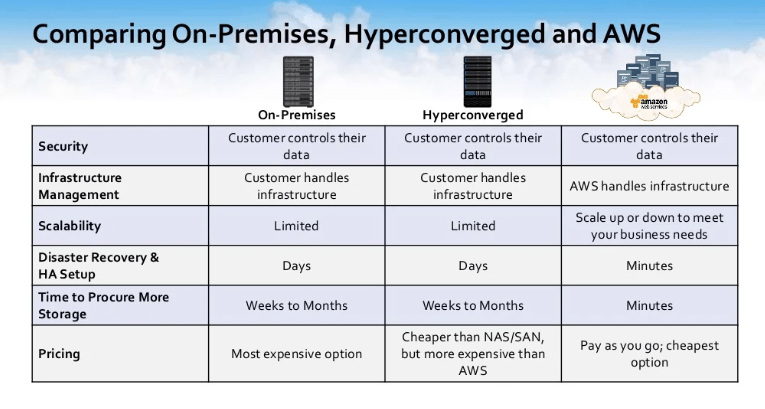

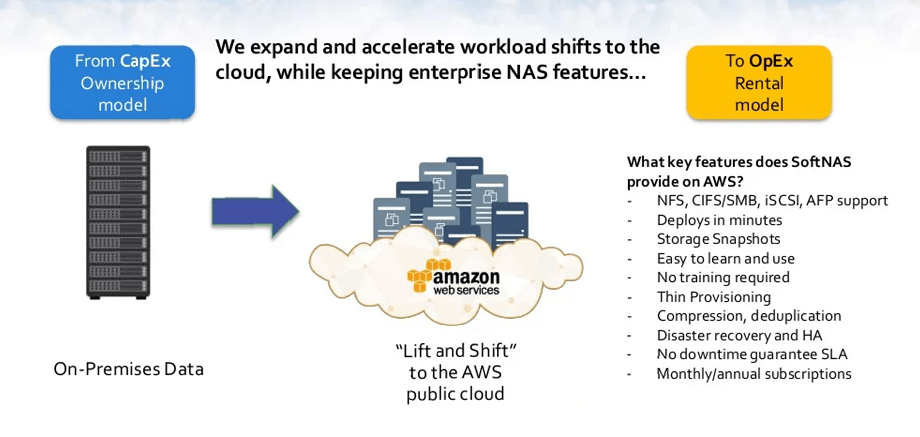

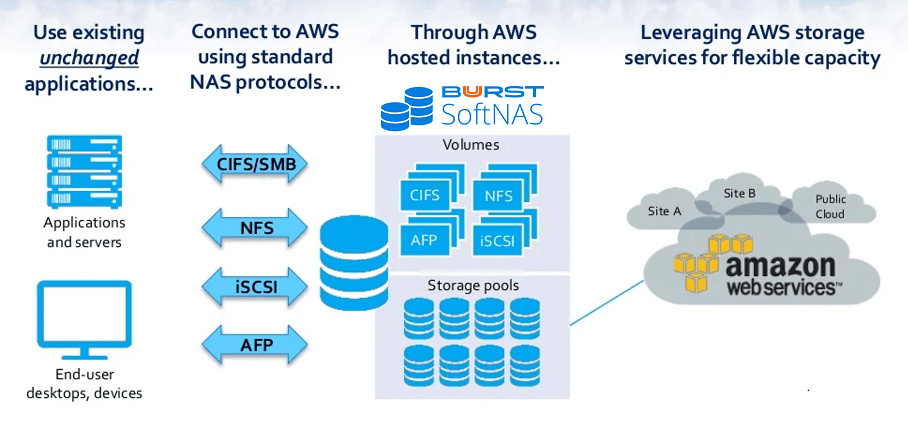

SoftNAS AWS NAS Storage Solution

SoftNAS offers AWS customers an enterprise-ready NAS capable of managing your fast-growing data storage challenges, including AWS Outposts availability. Dedicated features from SoftNAS deliver significant cost savings, high availability, lift-and-shift data migration, and a variety of security protections.

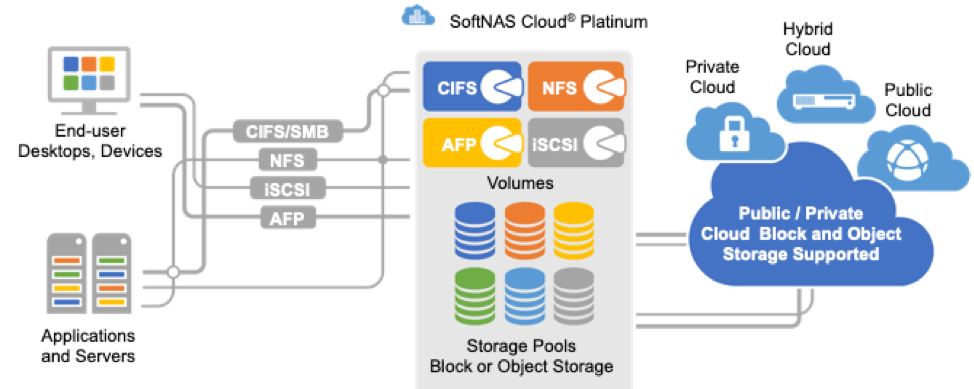

SoftNAS AWS NAS Storage Solution is designed to support a variety of market verticals, use cases, and workload types. Increasingly, SoftNAS is deployed on the AWS platform to enable block and file storage services through Common Internet File System (CIFS), Network File System (NFS), Apple Filing Protocol (AFP), and Internet Small Computer System Interface (iSCSI). Watch the SoftNAS Demo.

SoftNAS leverages the cloud providers’ block and object storage
