AWS VPC Best Practices with SoftNAS

Buurst SoftNAS has been available on AWS for the past eight years providing cloud storage management for thousands of petabytes of data. Our experience in design, configuration, and operation on AWS provides customers with immediate benefits.

Best Practice Topics

- Organize Your AWS Environment

- Create AWS VPC Subnets

- SoftNAS Cloud NAS and AWS VPCs

- Common AWS VPC Mistakes

- SoftNAS Cloud NAS Overview

- AWS VPC FAQs

Organize Your AWS Environment

Organizing your AWS environment is a critical step in maximizing your SoftNAS capability. Buurst recommends the use of tags: as you add instances, route tables, and subnets, the environment can quickly become confusing, and consistent tags make it much easier to identify issues during troubleshooting.

When planning your CIDR (Classless Inter-Domain Routing) block, Buurst recommends making it larger than you expect to need. AWS reserves five IP addresses in every VPC subnet, so each newly created subnet carries a five-address overhead.
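To make the overhead concrete, here is a minimal sketch using Python's standard `ipaddress` module. The function names and the example CIDR blocks are illustrative assumptions, not part of any SoftNAS or AWS tooling.

```python
import ipaddress

# Five addresses per subnet are unavailable to instances (the overhead described above).
RESERVED_PER_SUBNET = 5


def usable_addresses(subnet_cidr: str) -> int:
    """Return how many addresses remain for instances in a single subnet."""
    network = ipaddress.ip_network(subnet_cidr)
    return max(network.num_addresses - RESERVED_PER_SUBNET, 0)


def total_usable(vpc_cidr: str, subnet_prefix: int) -> int:
    """Split a VPC block into equal subnets and sum the usable addresses."""
    vpc = ipaddress.ip_network(vpc_cidr)
    return sum(usable_addresses(str(s)) for s in vpc.subnets(new_prefix=subnet_prefix))


if __name__ == "__main__":
    print(usable_addresses("10.0.1.0/24"))   # one /24 subnet
    print(total_usable("10.0.0.0/16", 24))   # a /16 carved into /24 subnets
```

Smaller subnets lose a larger share of their space to the overhead: a /28 keeps only 11 of its 16 addresses, which is one reason to plan a generous block upfront.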

Additionally, avoid using overlapping CIDR blocks, because any future peering of the VPC with another VPC will not function correctly if their address ranges overlap. Finally, there is no cost associated with a larger CIDR block, so simplify your scaling plans by choosing a larger block size upfront.
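A quick way to catch overlapping blocks before they cause trouble is to compare every pair of planned ranges. The sketch below uses only the standard library; `find_overlaps` and the sample CIDR blocks are hypothetical names and values chosen for illustration.

```python
import ipaddress
from itertools import combinations


def find_overlaps(cidrs):
    """Return every pair of CIDR blocks in the list that overlap each other."""
    networks = [ipaddress.ip_network(c) for c in cidrs]
    return [
        (str(a), str(b))
        for a, b in combinations(networks, 2)
        if a.overlaps(b)
    ]


if __name__ == "__main__":
    planned = ["10.0.0.0/16", "10.0.128.0/20", "172.16.0.0/16"]
    for a, b in find_overlaps(planned):
        print(f"{a} overlaps {b}")
```

Running a check like this against the CIDR blocks of every VPC you might connect later is cheap compared with re-addressing a live environment.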

Create AWS VPC Subnets

A best practice for AWS subnets is to align VPC subnets to as many different tiers as possible: for example, the DMZ/proxy layer, the ELB layer (if you use load balancers), the application layer, and the database layer. If a subnet is not explicitly associated with a specific route table, it uses the main route table by default. Missing subnet associations are a common reason that packets do not flow correctly.
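One way to spot missing associations is to list the subnets that have no explicit route table and therefore fall back to the main one. The sketch below is an assumption-laden illustration: it works on plain dictionaries shaped like the `RouteTables` list returned by the EC2 `DescribeRouteTables` API, and the function name and resource IDs are hypothetical.

```python
def subnets_on_main_route_table(subnet_ids, route_tables):
    """Return the subnets that have no explicit route table association.

    `route_tables` is assumed to look like the "RouteTables" list from
    DescribeRouteTables: each table has an "Associations" list, and an
    explicit association carries a "SubnetId" key.
    """
    explicitly_associated = {
        association["SubnetId"]
        for table in route_tables
        for association in table.get("Associations", [])
        if "SubnetId" in association
    }
    return sorted(set(subnet_ids) - explicitly_associated)


if __name__ == "__main__":
    # Hypothetical IDs for illustration only.
    tables = [
        {"RouteTableId": "rtb-main", "Associations": [{"Main": True}]},
        {"RouteTableId": "rtb-private",
         "Associations": [{"Main": False, "SubnetId": "subnet-app"}]},
    ]
    print(subnets_on_main_route_table(["subnet-app", "subnet-db"], tables))
```

Any subnet this reports is using the main route table, which may or may not be what you intended.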

Buurst recommends putting everything in a private subnet by default and using either ELB filtering or monitoring services in your public subnet. A NAT is the preferred way to reach the public network, ideally as part of a dual-NAT configuration for redundancy. CloudFormation templates are available to set up highly available NAT instances, which must be sized properly for the amount of traffic going through the network.

Set up VPC peering to access other VPCs within the environment or in a customer or partner environment. Buurst recommends using VPC endpoints for access to services like S3, instead of going out over a NAT instance or an internet gateway to reach services that don’t live within the VPC. An endpoint is more efficient and has lower latency than an external link.

Control Your Access

Control access within the AWS VPC, and do not cut corners by using a default route to the internet gateway. This shortcut is a common problem that many customers spend time on with our Support organization. Again, we encourage redundant NAT instances, built either from the CloudFormation templates available from Amazon or by creating your own highly available NAT instances.

The default NAT instance size is an m1.small, which may or may not suit your needs depending on the traffic volume in your environment. Buurst highly recommends using IAM (Identity and Access Management) for access control, especially assigning IAM roles to instances. Remember that IAM roles cannot be assigned to running instances and are set up at instance creation time. With IAM roles, you no longer have to populate AWS keys in each product that needs access to AWS API services.

How Does SoftNAS Fit Into AWS VPCs?

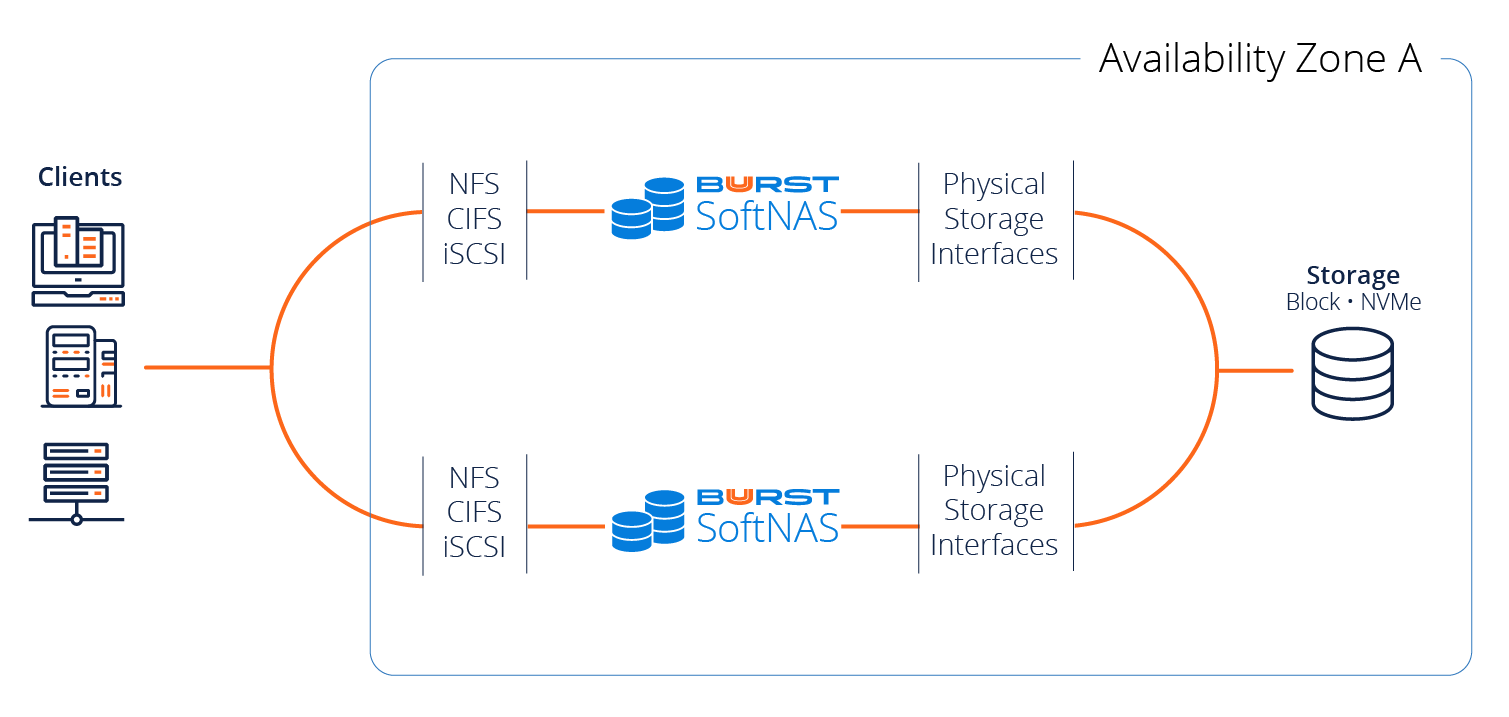

Buurst SoftNAS offers a highly available architecture from a storage perspective, leveraging our SNAP HA capability, allowing us to provide high availability across multiple availability zones. SNAP HA offers 99.999% HA with two SoftNAS controllers replicating the data into block storage in both availability zones. Buurst customers who run in this environment qualify for our Buurst No Downtime Guarantee.

Additionally, AWS provides no SLA (Service Level Agreement) unless your solution runs in a multi-zone deployment.

SoftNAS uses a private virtual IP address. Both SoftNAS instances live within a private subnet and are not accessible externally unless configured with an external NAT or AWS Direct Connect.

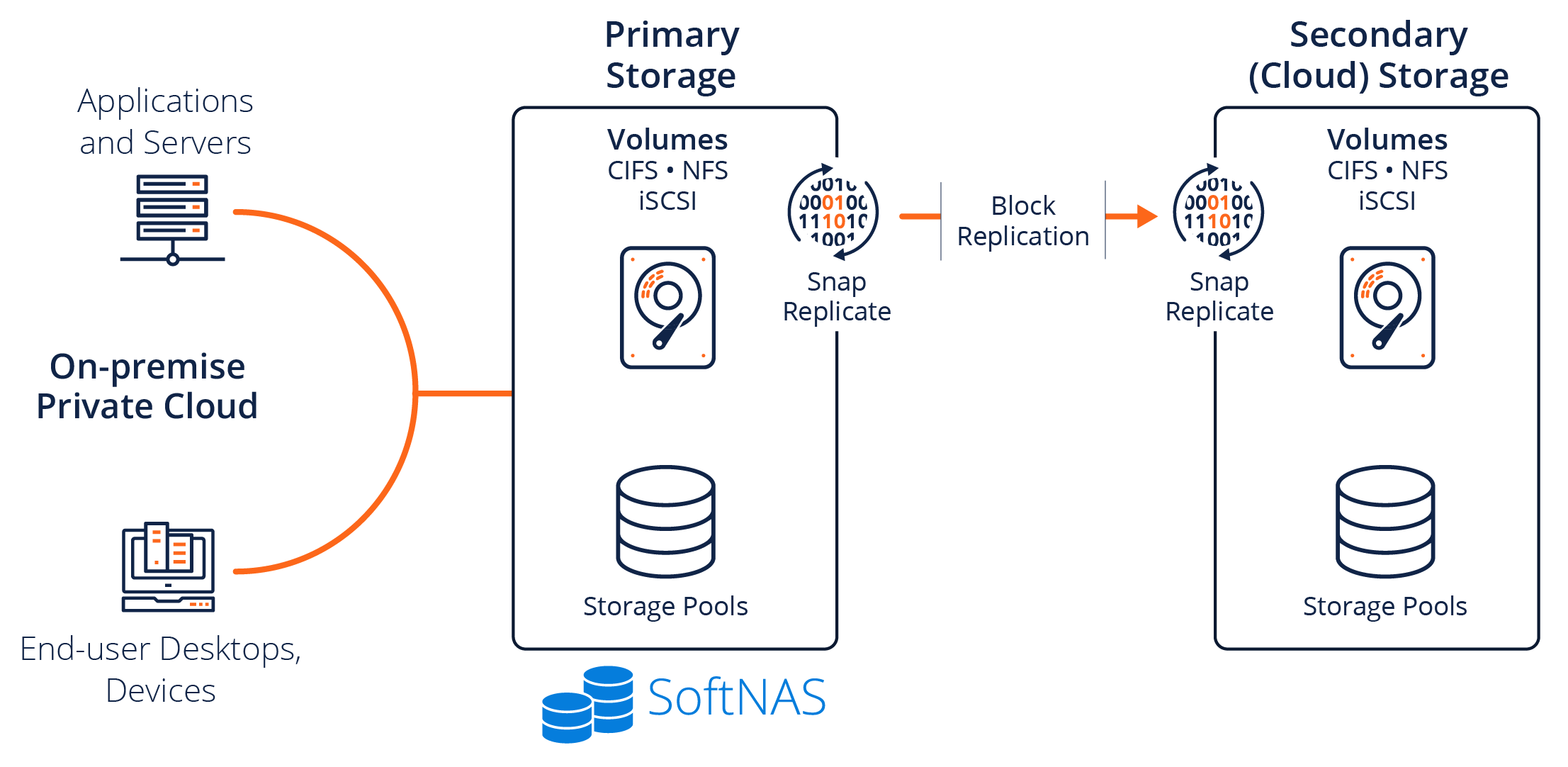

SoftNAS SNAP HA™ provides NFS, CIFS and iSCSI services via redundant storage controllers. One controller is active, while another is a standby controller. Block replication transmits only the changed data blocks from the source (primary) controller node to the target (secondary) controller. Data is maintained in a consistent state on both controllers using the ZFS copy-on-write filesystem, which ensures data integrity is maintained. In effect, this provides a near real-time backup of all production data (kept current within 1 to 2 minutes).

A key component of SNAP HA™ is the HA Monitor. The HA Monitor runs on both nodes that are participating in SNAP HA™. On the secondary node, HA Monitor checks network connectivity, as well as the primary controller’s health and its ability to continue serving storage. Faults in network connectivity or storage services are detected within 10 seconds or less, and an automatic failover occurs, enabling the secondary controller to pick up and continue serving NAS storage requests, preventing any downtime.

Once the failover process is triggered, either due to the HA Monitor (automatic failover) or as a result of a manual takeover action initiated by the admin user, NAS client requests for NFS, CIFS and iSCSI storage are quickly re-routed over the network to the secondary controller, which takes over as the new primary storage controller. Takeover on AWS can take up to 30 seconds, due to the time required for network routing configuration changes to take place.

Common AWS VPC Mistakes

These are the most common support issues in AWS VPC configuration:

- Deployments require two NICs per instance, with both NICs in the same subnet. Double-check this during configuration.

- SoftNAS health checks ping between the two instances, so the security group must allow that traffic at all times.

- A virtual IP address must not be within the CIDR block of the AWS VPC. So, if the CIDR is 10.0.0.0/16, select a virtual IP address outside that range.
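The virtual IP rule in the last item is easy to verify before deployment. This is a minimal sketch with the standard `ipaddress` module; `vip_is_valid` is a hypothetical helper name, and the addresses are examples only.

```python
import ipaddress


def vip_is_valid(vip: str, vpc_cidr: str) -> bool:
    """Return True when the virtual IP lies outside the VPC's CIDR block."""
    return ipaddress.ip_address(vip) not in ipaddress.ip_network(vpc_cidr)


if __name__ == "__main__":
    vpc = "10.0.0.0/16"
    for candidate in ("10.0.5.10", "10.1.0.10"):
        verdict = "ok" if vip_is_valid(candidate, vpc) else "inside the VPC CIDR, pick another"
        print(f"{candidate}: {verdict}")
```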

SoftNAS Overview

Buurst SoftNAS is an enterprise virtual software NAS available for AWS, Azure, and VMware with industry-leading performance and availability at an affordable cost.

SoftNAS is purpose-built to support SaaS applications and other performance-intensive solutions requiring more than standard cloud storage offerings.

- Performance – Tune performance for exceptional data usage

- High Availability – From 3-9’s to 5-9’s HA with our No Downtime Guarantee

- Data Migration – Built-in “Lift and Shift” file transfer from on-premises to the cloud

- Platform Independent – SoftNAS operates on AWS, Azure, and VMware

Learn: SoftNAS on AWS Design & Configuration Guide

AWS VPC FAQs

Common questions related to SoftNAS and AWS VPC:

We use VLANs in our data centers for isolation purposes today. What VPC construct do you recommend to replace VLANs in AWS?

That would be subnets. You could either use subnets or, if you really wanted a different isolation mechanism, create another VPC to isolate those resources further and then connect the two via VPC peering.

You said to use IAM for access control, so what do you see in terms of IAM best practices for AWS VPC security?

The most significant case is when you deal with either third-party products or custom software that you run on your web server. Anything that requires the use of AWS API resources needs a secret key and an access key. So you can either (a) store that secret key and access key in some type of text file and have the software reference it, or (b), the easier way, set the minimum level of permissions that you need in an IAM role, create this role, and attach it to your instance at start time. Now, the role itself cannot be assigned, except during start time. However, the permissions of the role can be modified on the fly, so you can add or subtract permissions should the need arise.

So when you’re troubleshooting complex VPC networks, what approaches and tools have you found to be the most effective?

We love to use traceroute. I love to use ICMP when it’s available, but I also like to use the AWS Flow Logs, which allow me to see what’s going on at a much more granular level. I also use tools like CloudTrail to know which API calls were made by which user, so I can understand what has gone on.
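As an illustration of "the minimum level of permissions", the sketch below builds a small IAM policy document for a role. The bucket name and the chosen S3 actions are assumptions for the example; they are not the permission set that SoftNAS or any specific product requires.

```python
import json


def minimal_s3_policy(bucket_name: str) -> dict:
    """Build a policy document limited to one bucket (hypothetical example)."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                # Listing applies to the bucket itself.
                "Effect": "Allow",
                "Action": ["s3:ListBucket"],
                "Resource": f"arn:aws:s3:::{bucket_name}",
            },
            {
                # Reading and writing apply to objects inside the bucket.
                "Effect": "Allow",
                "Action": ["s3:GetObject", "s3:PutObject"],
                "Resource": f"arn:aws:s3:::{bucket_name}/*",
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(minimal_s3_policy("example-app-bucket"), indent=2))
```

Because the policy is attached to the role rather than baked into the software, statements can be added or removed later without storing keys on the instance.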

What do you recommend for VPN and intrusion detection?

There are a lot of them available. We’ve got some experience with Cisco, Juniper, and Fortinet for things like VPN, or whoever you have. As far as IDS goes, Alert Logic is a popular solution. I see a lot of customers that use that particular product. Some people like open-source tools such as Snort as well.

Any recommendations around secure jump box configurations within AWS VPC?

If you’re going to deploy a lot of your resources within a private subnet and you’re not going to use a VPN, one of the ways that a lot of people do this is to configure a quick jump box. What I mean by that is to take a server, whether Windows or Linux, depending upon your preference, put it in the public subnet, and only allow access from a limited set of IP addresses, over SSH for Linux or RDP for Windows. It puts you inside the network and allows you to gain access to the resources within the private subnet.

Are people sometimes also using VPNs to access the jump box for added security?

Some people do that. Sometimes they’ll put a jump box behind the VPN, and you VPN in to reach it. It’s just a matter of your organization’s security policies.
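The "only allow access from specific IP addresses" rule can be sketched as a security group ingress entry. The function below only builds a dictionary in the shape used for the `IpPermissions` parameter of the EC2 `AuthorizeSecurityGroupIngress` API and makes no AWS calls. The function name, the refusal of `0.0.0.0/0`, and the sample addresses (documentation ranges) are assumptions for illustration.

```python
import ipaddress

# SSH for Linux hosts, RDP for Windows hosts, as described above.
REMOTE_ACCESS_PORTS = {"linux": 22, "windows": 3389}


def remote_access_ingress(os_family: str, allowed_cidrs):
    """Build one ingress rule that admits only the listed source ranges."""
    port = REMOTE_ACCESS_PORTS[os_family]
    ranges = []
    for cidr in allowed_cidrs:
        network = ipaddress.ip_network(cidr)
        if network.prefixlen == 0:
            # A /0 source would expose the host to the entire internet.
            raise ValueError("refusing to allow access from every address")
        ranges.append({"CidrIp": str(network)})
    return {
        "IpProtocol": "tcp",
        "FromPort": port,
        "ToPort": port,
        "IpRanges": ranges,
    }


if __name__ == "__main__":
    print(remote_access_ingress("linux", ["203.0.113.0/24", "198.51.100.7/32"]))
```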

Any performance or further considerations when designing the VPC?

It’s important to understand that each instance has its own available amount of resources, not only from a network IO perspective but from a storage IO perspective as well. It’s also important to understand what a 10 Gb instance means. Take the c3.8xlarge, which is a 10 Gb instance. That’s not 10 Gb worth of network bandwidth plus 10 Gb worth of storage bandwidth. That’s 10 Gb for the instance, right? So if you’re pushing a high amount of IO from both a network and a storage perspective, that 10 Gb is shared between the network and access to the underlying EBS storage network. This confuses a lot of people: it’s 10 Gb for the instance, not just a 10 Gb network pipe.

Why would you use an elastic IP instead of the virtual IP?

What if you had some people that wanted to access this from outside of AWS? We do have some customers whose servers are primarily within AWS, but who want access to files from systems that are not inside the AWS VPC. So you could leverage it that way. This was also the first way that we created HA, to be honest, because it was the only method at first that allowed us to share an IP address and work around some of the public cloud limitations, like having no layer 2 broadcast.
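The arithmetic behind that answer can be sketched in a few lines. This is a deliberately simplified model, assuming the instance figure is 10 gigabits per second and that network and storage traffic draw from one shared budget; real instance limits vary by type, and the function names are hypothetical.

```python
def remaining_for_storage_gbps(instance_gbps: float, network_gbps: float) -> float:
    """Return the bandwidth left for storage traffic under a shared budget."""
    if network_gbps > instance_gbps:
        raise ValueError("network traffic cannot exceed the instance budget")
    return instance_gbps - network_gbps


def gbps_to_gigabytes_per_second(gbps: float) -> float:
    """Convert gigabits per second to gigabytes per second (8 bits per byte)."""
    return gbps / 8


if __name__ == "__main__":
    budget = 10.0          # instance-wide figure, in gigabits per second
    client_traffic = 6.0   # hypothetical network load
    left = remaining_for_storage_gbps(budget, client_traffic)
    print(f"{left} Gb/s left for storage, about {gbps_to_gigabytes_per_second(left)} GB/s")
```

Note the unit as well: 10 gigabits per second is 1.25 gigabytes per second, shared across both kinds of traffic.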

Looks like this next question is around AWS VPC tagging. Any best practices for example?

Yeah, so I see people that basically take different services, like web and database or application, and they tag everything within the security groups and everything with that particular tag. For people that are deploying SoftNAS, I would recommend just using the name SoftNAS as my tag. It’s really up to you, but I do suggest that you use them. It will make your life a lot easier.

Is storage level encryption a feature of SoftNAS Cloud NAS or does the customer need to implement that on their own?

So as of our version that’s available today which is 3.3.3, on AWS you can leverage the underlying EBS encryption. We provide encryption for Amazon S3 as well, and coming in our next release which is due out at the end of the month we actually do offer encryption, so you can actually create encrypted storage pools which encrypt the underlying disk devices.

Virtual IP for HA: does the VIP need to be added to the AWS VPC routing table?

It’s automatic. When you select that VIP address in the private subnet, it will automatically add a host route into the routing table, which allows clients to route that traffic.

Can you clarify the requirement on an HA pair with two NICs, that both have to be in the same subnet?

Each instance needs two ENIs, and both of those ENIs need to be in the same subnet.
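For readers unfamiliar with host routes: a host route is a route whose destination is a single address, written as a /32 block for IPv4. The sketch below builds such an entry as a dictionary using the parameter names of the EC2 `CreateRoute` API. It makes no AWS calls, and targeting a network interface is an assumption for illustration; the source does not describe how SoftNAS actually creates the route. The IDs are hypothetical.

```python
import ipaddress


def host_route_for_vip(vip: str, route_table_id: str, eni_id: str) -> dict:
    """Describe a /32 route that sends traffic for one IPv4 address to an ENI."""
    address = ipaddress.IPv4Address(vip)  # raises ValueError on a malformed address
    return {
        "RouteTableId": route_table_id,
        "DestinationCidrBlock": f"{address}/32",
        "NetworkInterfaceId": eni_id,
    }


if __name__ == "__main__":
    print(host_route_for_vip("10.1.0.10", "rtb-example", "eni-example"))
```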

Do you have HA capability across regions? What options are available if you need to replicate data across regions? Is the data encrypted at rest, in flight, etc.?

We cannot do HA with automatic failover across regions. However, we can do SnapReplicate across regions. Then you can do a manual failover should the need arise. The data you transfer via SnapReplicate is sent over SSH across regions. You could replicate across data centers. You could even replicate across different cloud providers.

Can AWS VPC peering span regions?

The answer is no, it cannot.

Can we create an HA endpoint to AWS for use with AWS Direct Connect?

Absolutely. You could go ahead and create an HA pair of SoftNAS Cloud NAS, leverage Direct Connect from your data center, and access that highly available storage.

When using S3 as a backend and a write cache, is it possible to read the file while it’s still in cache?

The answer is, yes, it is. I’m assuming that you’re speaking about the eventual consistency challenges of the AWS standard region; with the manner in which we deal with S3 where we treat each bucket as its own hard drive, we do not have to deal with the S3 consistency challenges.

Regarding subnets, the example where a host lives in two subnets, can you clarify both these subnets are in the same AZ?

In the examples that I’ve used, each of these subnets is in its own separate availability zone. If you want to discuss this further, please feel free to reach out.

Is there a white paper on the website dealing with the proper engineering for SoftNAS Cloud NAS for our storage pools, EBS vs. S3, etc.?

Yes. There is a SoftNAS architectural white paper, co-written by SoftNAS and Amazon Web Services, that covers proper configuration settings, options, etc. We also have a pre-sales architectural team that can help you with best practices, configurations, and those types of things from an AWS perspective. Please contact sales@softnas.com and someone will be in touch.

How do you solve the HA and failover problem?

We actually do a couple of different things here. When we have an automatic failover, one of the things that we do when we set up HA is we create an S3 bucket that has to act as a third-party witness. Before anything takes over as the master controller, it queries the S3 bucket and makes sure that it’s able to take over. The other thing that we do is after a take-over, the old source node is actually shut down. You don’t want to have a situation where the node is flapping up and down and it’s kind of up but kind of not and it keeps trying to take over, so if there’s a take-over that occurs, whether it’s manual or automatic, the old source node in that particular configuration is shut down. That information is logged, and we’re assuming that you’ll go out and investigate why the failover took place. If there are questions about that in a production scenario, support@softnas.com is always available.
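The two safeguards in that answer, a witness check before takeover and shutting down the old source node afterwards, can be modeled with a toy example. Everything here is hypothetical: the `Witness` class is an in-memory stand-in for the S3 bucket, and the compare-then-swap rule is an assumed way to express "makes sure that it's able to take over". It is not the SoftNAS implementation.

```python
class Witness:
    """In-memory stand-in for the third-party witness (an S3 bucket in the source)."""

    def __init__(self, primary: str):
        self.primary = primary

    def try_takeover(self, candidate: str, expected_primary: str) -> bool:
        """Grant the takeover only if the witness still names the expected primary."""
        if self.primary != expected_primary:
            return False
        self.primary = candidate
        return True


def fail_over(witness: Witness, old_primary: str, standby: str, running: set) -> bool:
    """Promote the standby if the witness agrees, then stop the old primary."""
    if not witness.try_takeover(standby, old_primary):
        return False
    # Shutting down the old source node prevents it from flapping back.
    running.discard(old_primary)
    return True


if __name__ == "__main__":
    witness = Witness("node-a")
    running = {"node-a", "node-b"}
    print(fail_over(witness, "node-a", "node-b", running), witness.primary, running)
```

A second takeover attempt that still names `node-a` as the primary is refused, which is the kind of repeated takeover the witness is meant to prevent.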