SoftNAS High Availability and Disaster Recovery-Oriented Solutions Safeguard A Business

Buurst deploys its Hybrid Cloud solution with High-Availability and Disaster Recovery to EMEA customers that can minimize adverse impacts from IT Outages.

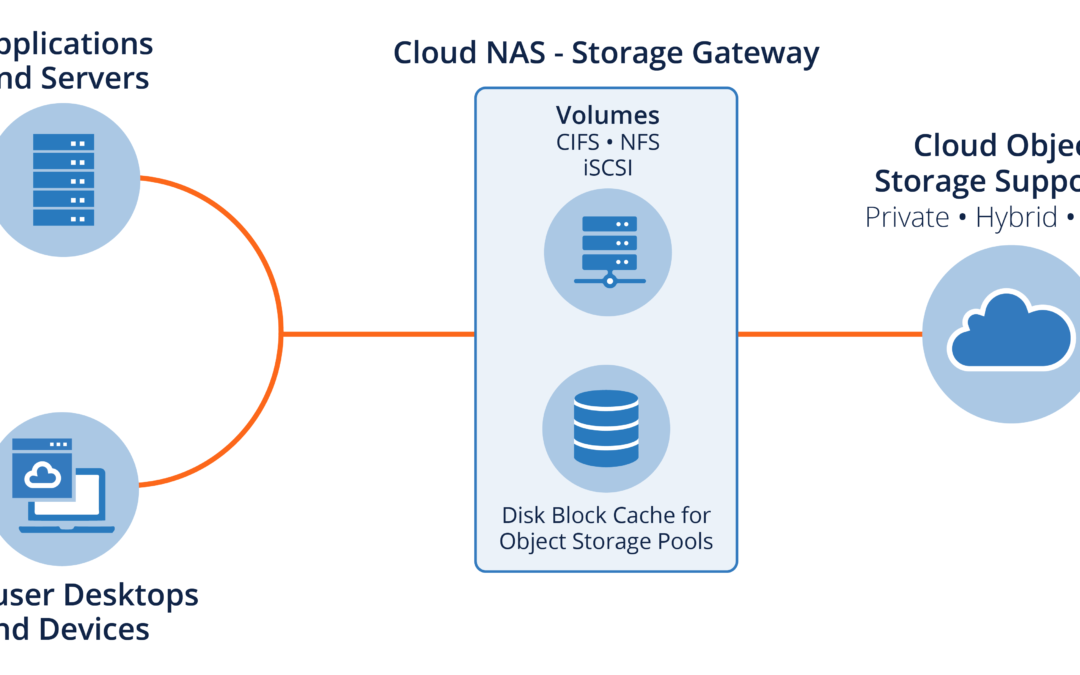

HOUSTON, TEXAS, UNITED STATES, August 1, 2024 /EINPresswire.com/ — In the wake of the recent IT outage that crippled many organizations worldwide, businesses are re-evaluating their data protection strategies. Downtime can lead to lost revenue, productivity, and customer trust. SoftNAS, a software-defined Networked Attached Storage (NAS) solution, empowers businesses to avoid or minimize the impact of outages with its robust High Availability (HA) and Disaster Recovery (DR) capabilities.

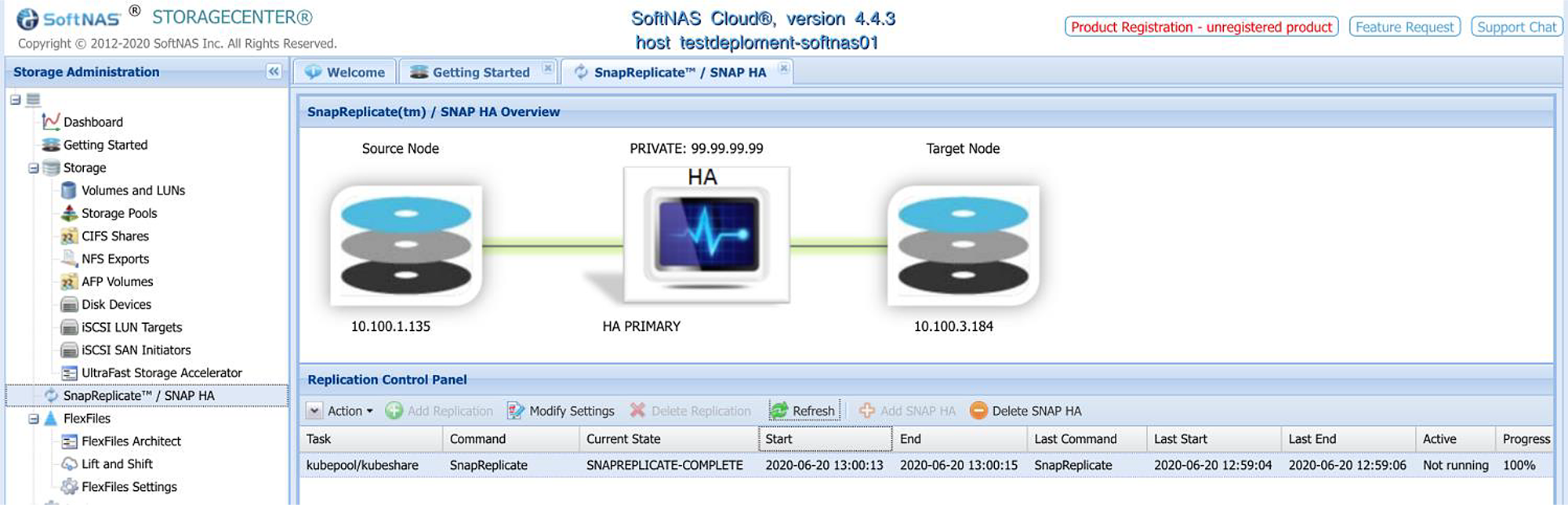

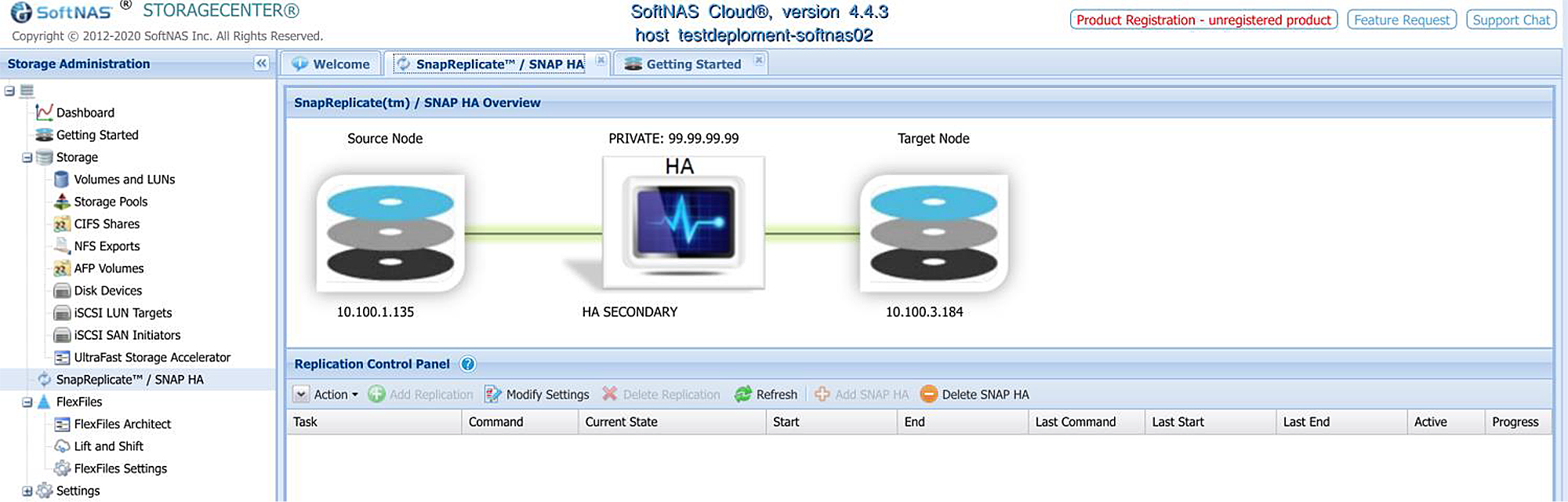

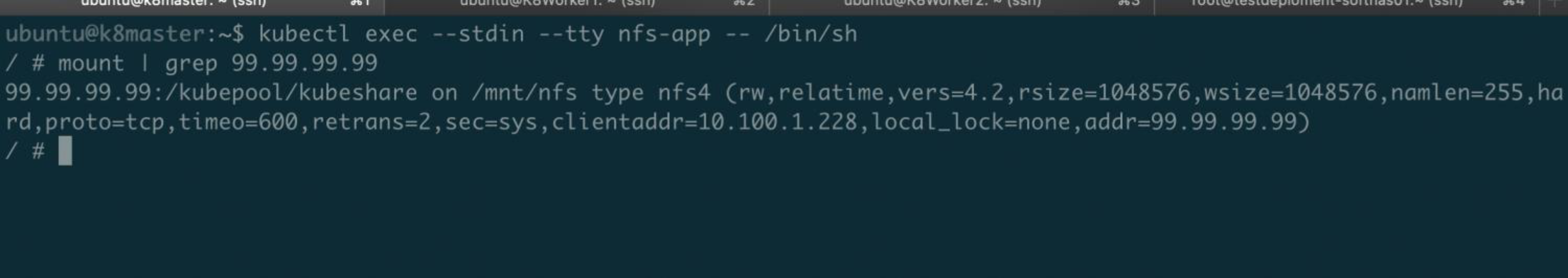

SoftNAS delivers a cost-effective, low-complexity solution for high availability storage clustering. It automatically detects and recovers from hardware failures, software crashes, and network disruptions, ensuring continuous access to data for business-critical applications.

SoftNAS goes beyond HA by offering DR solutions as well. SoftNAS can enable continuous data replication to a secondary site or Cloud, allowing for rapid recovery in the event of a disaster.

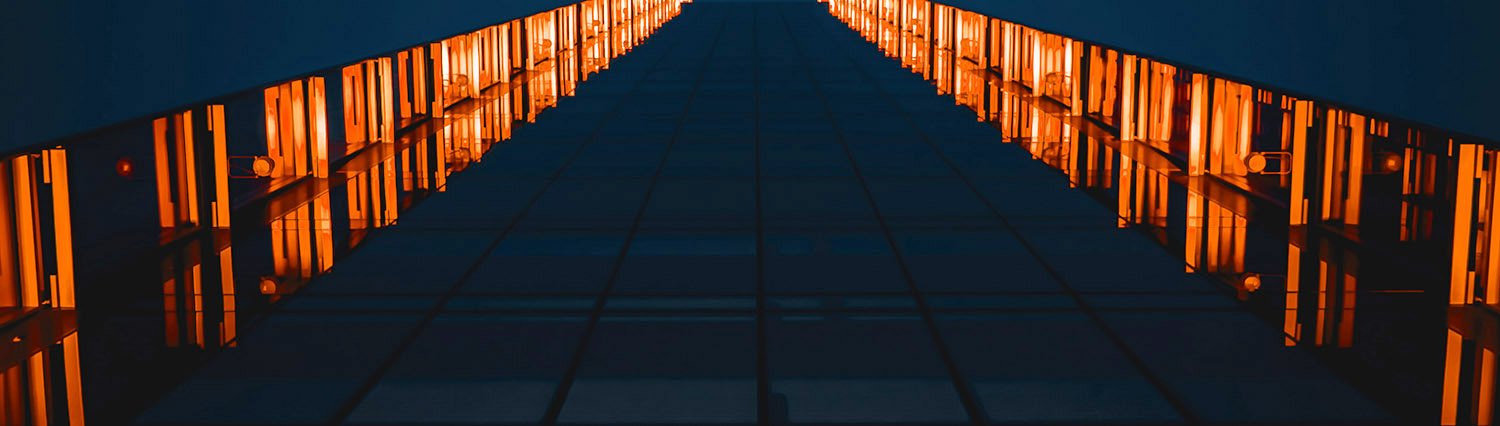

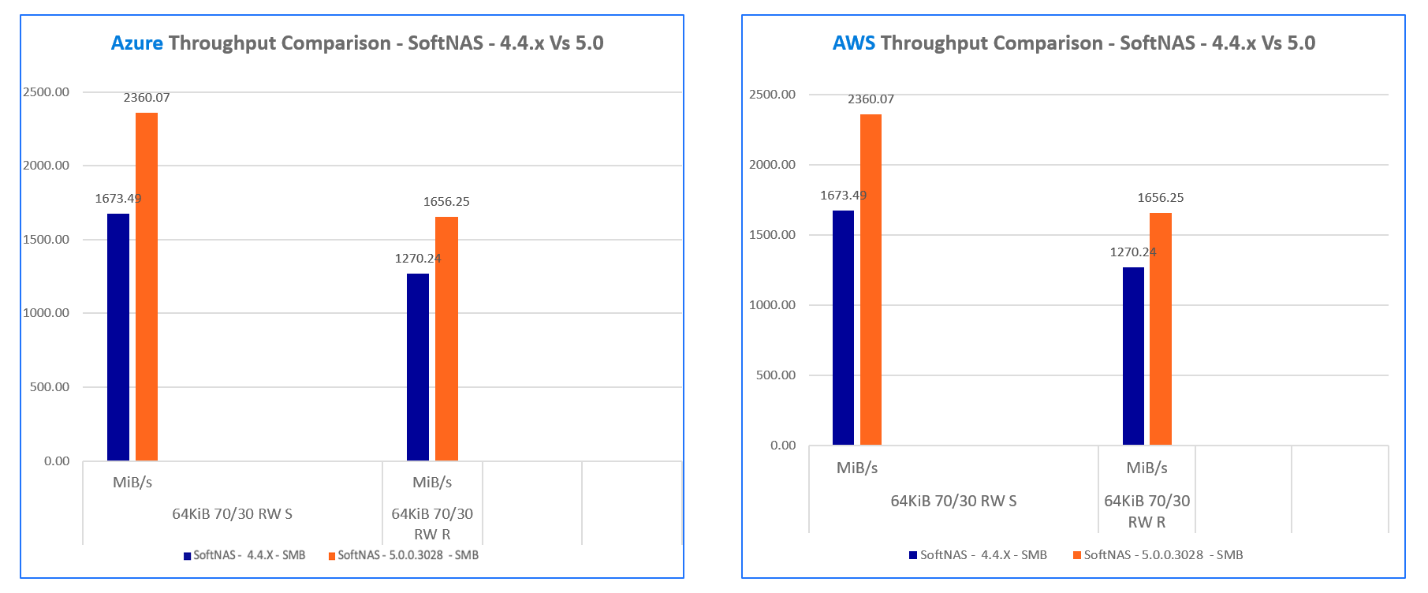

“Recent customer deployments showcasing various combinations of Hybrid Cloud, HA, and DR demonstrate the power of SoftNAS,” says Vic Mahadeven, CEO of Buurst. “Our customers are leveraging our solutions to achieve high-performance, low-cost data storage, ensuring their business-critical applications and workloads remain operational or quickly recoverable.”

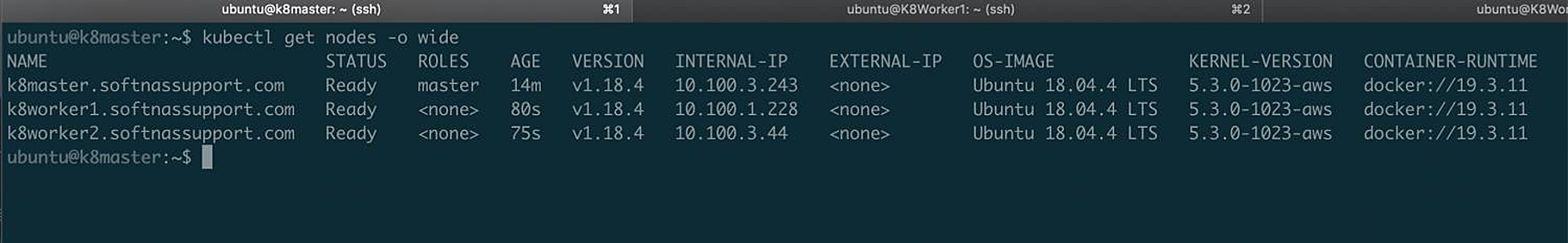

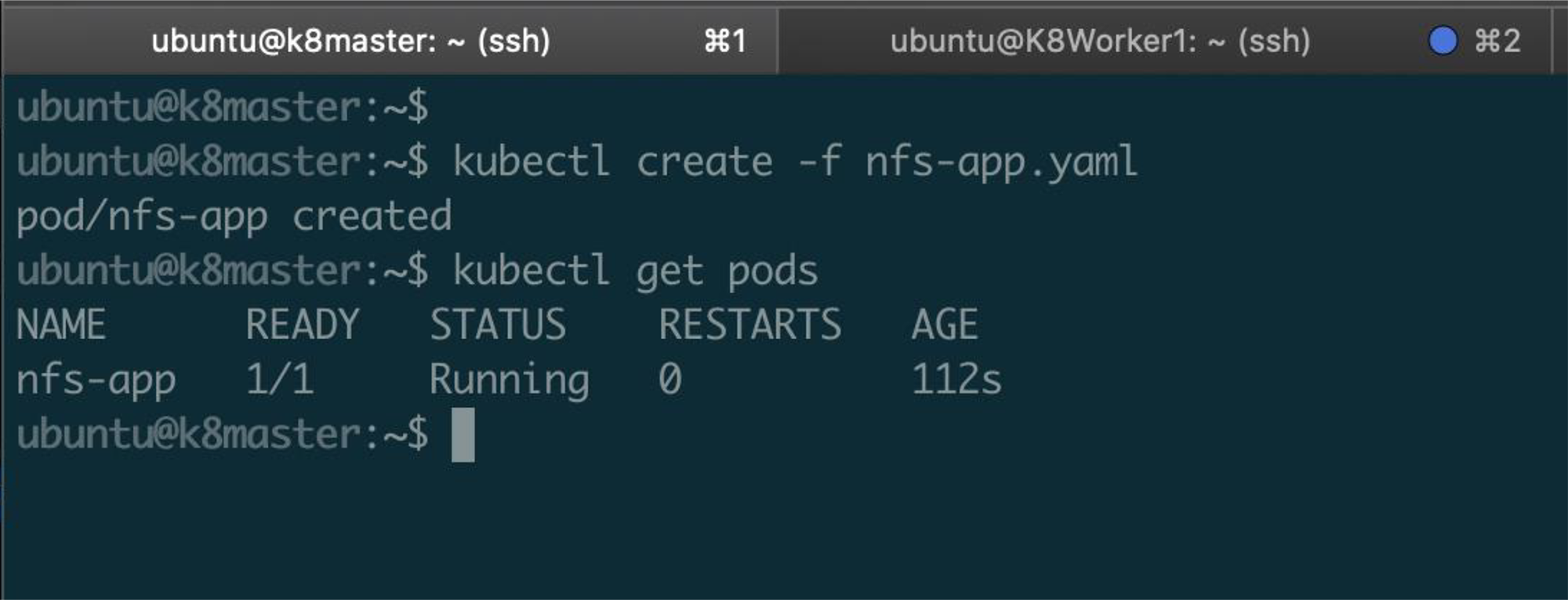

A proof point for this type of solution is seen by one or Buurst’s customers in the Middle East, which relied on a storage solution which encountered replication issues within their on-premise storage infrastructure. Buurst solved these issues by providing engineering services to install SoftNAS in an HA set-up in a primary Cloud environment, enabled a DR instance into a secondary Cloud environment, then provided migration assistance. This resilient architecture now supports the customer’s business-critical applications with both outage safeguards, secure connections, and lower latency.

“Just in the past month, we have deployed Installation and Migration services for a key EMEA customer for their Hybrid Cloud environment, along with licensing SoftNAS instances across their HA and DR Cloud environments”, stated Andy Bowden, Head of Product and Marketing.

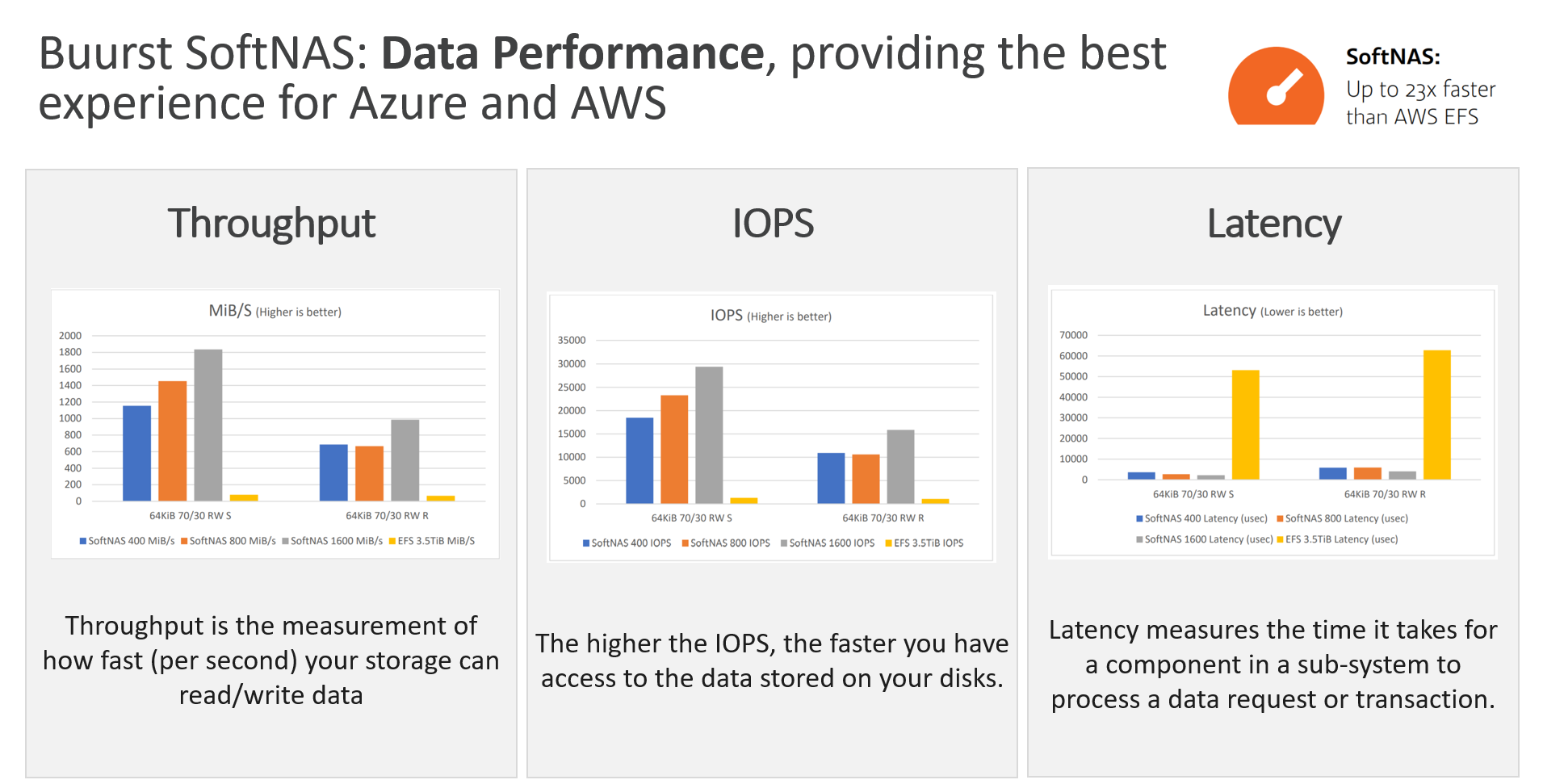

Buurst’s results provide significant benefits for its customers by enhancing business continuity strategies, reduce operational Storage costs, improving performance, and lastly, increasing scalability. The solutions provided by SoftNAS provides a highly scalable platform that can adapt to the customer’s evolving data storage requirements.

About SoftNAS

SoftNAS provides High-Performance software-defined storage solutions that deliver exceptional performance, scalability, and ease of management for virtualized, on-premises, and cloud environments. SoftNAS empowers businesses to consolidate storage resources, optimize storage utilization, and ensure business continuity with its HA and DR capabilities.

Contact:

Buurst Public Relations team, Buurst, Inc, pr@buurst.com

###

Andy Bowden

Buurst, Inc

+1 346-410-0643

email us here

Visit us on social media:

X

LinkedIn