NVMe (Non-Volatile Memory Express) technology is available as a service in the AWS cloud. Coupled with 100 Gbps networking, NVMe SSDs open new frontiers for running HPC and transactional workloads in the cloud. And because it’s available “as a service,” robust high-performance computing (HPC) storage and compute clusters can be spun up on demand, without the capital investments, time delays, and long-term commitments usually associated with HPC.

How to leverage AWS i3en instances for better performance

In this post, we look at how to leverage AWS i3en instances for performance-sensitive workloads that previously would only run on specialized, expensive hardware in on-premises datacenters. This technology enables both commercial HPC and research HPC, where capital budgets have limited an organization’s ability to leverage traditional HPC. It also bodes well for demanding AI and machine learning workloads, which can benefit from HPC storage coupled with 100 Gbps networking directly to GPU clusters.

According to AWS, “i3en instances can deliver up to 2 million random IOPS at 4 KB block sizes and up to 16 GB/s of sequential disk throughput.” These instances come outfitted with up to 768 GB of memory, 96 vCPUs, and 60 TB of NVMe SSD, and they support up to 100 Gbps low-latency networking within cluster placement groups.

AWS NVMe SSD Performance

NVMe SSDs are directly attached to Amazon EC2 instances and provide maximum IOPS and throughput, but they suffer from being pseudo-ephemeral; that is, whenever the EC2 instance is stopped and started again, a new set of empty NVMe disks is attached, possibly on a different host. The data is lost because the original NVMe devices are no longer accessible. Unfortunately, this behavior limits the applicability of these powerful instances.

Customers need a way to bring demanding enterprise SQL database workloads for SAP, OLAP, data warehousing, and other applications to the cloud. These business-critical workloads require both high availability (HA) and high transactional throughput, along with storage persistence and disaster recovery capabilities.

Customers also want to run commercial HPC and research HPC solutions in the cloud, along with AI/deep learning, 3D modeling, and simulation workloads.

Unfortunately, most EC2 instances top out at 10 Gbps (1.25 GB/sec) network bandwidth. Moderate HPC workloads require 5 GB/sec or more read and write throughput. Higher-end HPC workloads need 10 GB/sec or more throughput. Even the fastest class of EBS, provisioned IOPS, can’t keep up.
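The unit conversion behind these figures is simple arithmetic. The sketch below converts network line rates to GB/sec and compares them with the workload targets quoted above. It ignores protocol overhead (an assumption, so real payload rates will be somewhat lower), and the function and constant names are illustrative only.

```python
def gbps_to_gbytes_per_sec(gbps: float) -> float:
    """Convert a line rate in gigabits per second to gigabytes per second."""
    return gbps / 8.0


# Workload targets quoted in the text, in GB/sec.
MODERATE_HPC_GBS = 5.0
HIGH_END_HPC_GBS = 10.0

for link_gbps in (10, 25, 100):
    gbs = gbps_to_gbytes_per_sec(link_gbps)
    print(
        f"{link_gbps:>3} Gbps = {gbs:.3f} GB/sec | "
        f"meets moderate HPC target: {gbs >= MODERATE_HPC_GBS} | "
        f"meets high-end HPC target: {gbs >= HIGH_END_HPC_GBS}"
    )
```

Under these assumptions, only the 100 Gbps link clears both targets, which is why the rest of this post focuses on 100 Gbps placement group networking.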

Mission-critical application and transactional database workloads also require non-stop operation, data persistence, and high-performance storage throughput of several GB/sec or more.

NVMe solves the IOPS and throughput problems, but its non-persistence is a showstopper for most enterprise workloads.

So, the ideal solution must address NVMe data persistence without slowing down the HPC workloads. Mission-critical applications must also deliver high availability with at least a 99.9% uptime SLA.
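To make the 99.9% figure concrete, the short sketch below converts an uptime percentage into the downtime it permits over a period. The function name is illustrative, and the year length ignores leap years.

```python
HOURS_PER_YEAR = 365 * 24  # 8760 hours, ignoring leap years


def allowed_downtime_hours(uptime_percent: float,
                           period_hours: float = HOURS_PER_YEAR) -> float:
    """Return the downtime, in hours, that an uptime SLA permits over a period."""
    return period_hours * (1.0 - uptime_percent / 100.0)


yearly = allowed_downtime_hours(99.9)
monthly = allowed_downtime_hours(99.9, period_hours=30 * 24)
print(f"99.9% uptime allows about {yearly:.2f} hours of downtime per year")
print(f"99.9% uptime allows about {monthly * 60:.1f} minutes per 30-day month")
```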

High availability with high performance for Windows Server and SQL Server workloads

Buurst recently decided to put these AWS hotrod NVMe-backed instances to the test to solve the data persistence and HA issues without degrading their high-performance benefits.

Solving the persistence problem paves the way for many compelling use cases to run in the cloud, including:

- Commercial HPC workloads

- Deep learning workloads based upon Python-based ML frameworks like TensorFlow, PyTorch, Keras, MxNet, Sonnet, and others that require feeding massive amounts of data to GPU compute instances

- 3D modeling and simulation workloads

A secondary objective was to add high availability with high performance for Windows Server and SQL Server workloads, SAP HANA, OLTP, and data warehousing. HA makes it possible to deliver an SLA for HPC workloads in the cloud.

Buurst developed two solutions: one for Linux-based workloads and another for Windows Server and SQL Server use cases.

Buurst SoftNAS Solutions for HPC Linux Workloads

This solution leverages the Elastic Fabric Adapter (EFA) and AWS cluster placement groups with i3en family instances and 100 Gbps networking. Buurst testing measured up to 15 GB/second random read and 12.2 GB/second random write throughput. We also observed more than 1 million read IOPS and 876,000 write IOPS from a Linux client, all running FIO benchmarks.

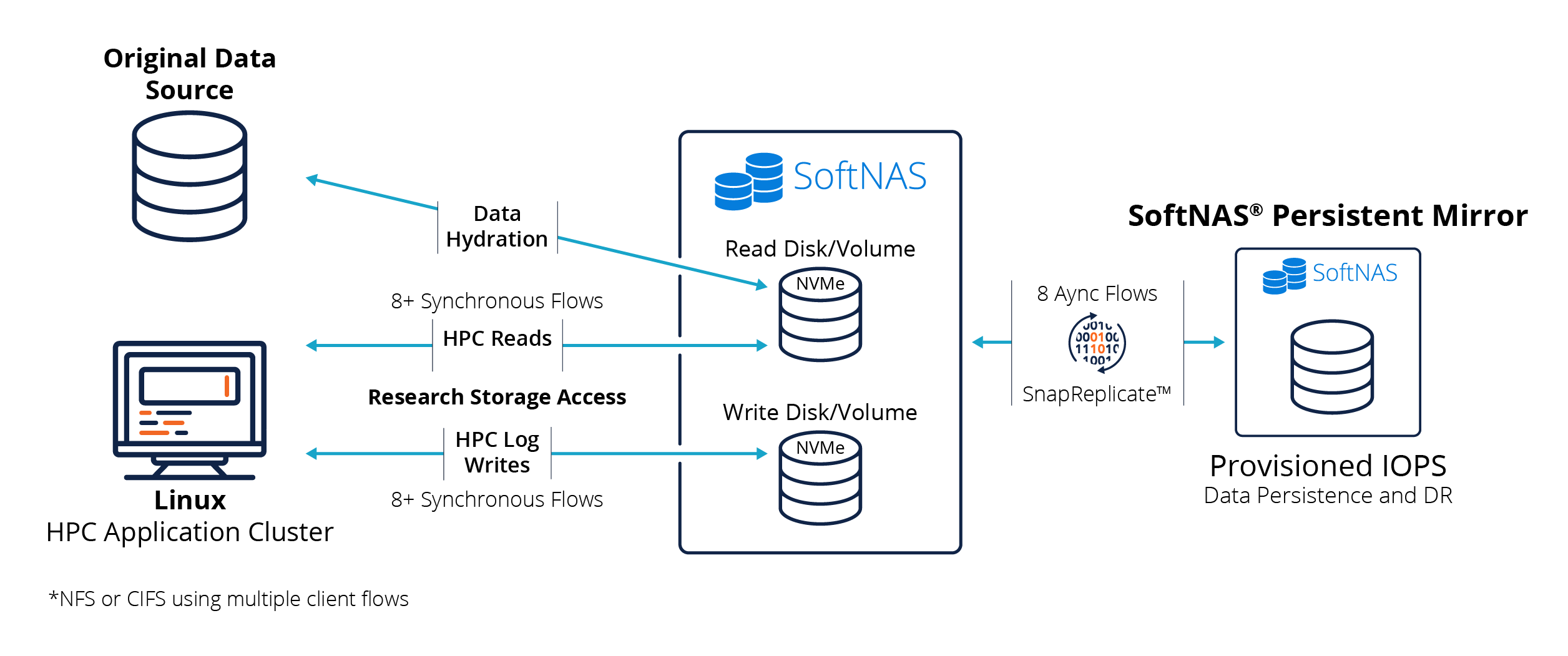

The following block diagram shows the system configuration used to attain these results.

The NVMe-backed instance contained storage pools and volumes dedicated to HPC read and write tasks. Another SoftNAS Persistent Mirror instance leveraged SoftNAS’ patented SnapReplicate® asynchronous block replication to EBS provisioned IOPS for data persistence and DR.

In real-world HPC use cases, one would likely deploy two separate NVMe-backed instances: one dedicated to high-performance read I/O traffic and the other to HPC log writes. In our testing, we used eight or more synchronous iSCSI data flows from a single HPC client node. It’s also possible to leverage NFS across a cluster of HPC client nodes, providing eight or more client threads, each accessing storage. Each “flow,” as it’s called in placement group networking, delivers up to 10 Gbps of throughput. Maximizing the available 100 Gbps network therefore requires 8 to 10 or more such parallel flows.
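The flow arithmetic can be sketched as follows. This is a simplified model that assumes every flow runs at the full 10 Gbps per-flow rate and that the link is the only bottleneck; the function names are illustrative.

```python
import math


def flows_needed(link_gbps: float, per_flow_gbps: float) -> int:
    """Smallest number of parallel flows whose combined rate fills the link."""
    return math.ceil(link_gbps / per_flow_gbps)


def aggregate_gbps(flows: int, per_flow_gbps: float, link_gbps: float) -> float:
    """Combined rate of several flows, capped at the link rate."""
    return min(flows * per_flow_gbps, link_gbps)


LINK_GBPS = 100.0
PER_FLOW_GBPS = 10.0

print("flows needed to fill the link:", flows_needed(LINK_GBPS, PER_FLOW_GBPS))
for flows in (1, 8, 10, 12):
    rate = aggregate_gbps(flows, PER_FLOW_GBPS, LINK_GBPS)
    print(f"{flows:>2} flows -> {rate:.0f} Gbps ({rate / 8:.2f} GB/sec)")
```

In practice, individual flows rarely sustain their full rate, which is one reason to run more flows than the bare minimum.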

Persisting the NVMe data runs in the background, asynchronously to the HPC job itself. Provisioned IOPS is the fastest EBS persistent storage on AWS. SoftNAS’ underlying OpenZFS filesystem takes storage snapshots once per minute to aggregate groups of I/O transactions occurring at 10 GB/second or faster across the NVMe devices. These snapshots are then persisted to EBS using eight parallel SnapReplicate streams, trailing the near real-time NVMe HPC I/O slightly. When the HPC job settles down, the asynchronous persistence writes to EBS catch up, ensuring data recoverability when the NVMe instance is powered down or has to move to a different host for maintenance or patching.
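To illustrate how the asynchronous persistence trails the job and then catches up, here is a coarse per-minute model. It is not SoftNAS code and does not reflect SnapReplicate internals. The 10 GB/second write rate comes from the text; the 2 GB/second aggregate drain rate to EBS and the 10-minute job length are hypothetical values chosen only for illustration.

```python
def simulate_backlog(write_gb_per_sec: float,
                     drain_gb_per_sec: float,
                     job_minutes: int,
                     max_minutes: int = 100_000) -> tuple[float, int]:
    """Return (peak backlog in GB, minutes until replication has caught up).

    Each loop step adds one minute of job writes (while the job is running),
    then drains one minute of replication capacity.
    """
    backlog_gb = 0.0
    peak_gb = 0.0
    for minute in range(1, max_minutes + 1):
        if minute <= job_minutes:
            backlog_gb += write_gb_per_sec * 60   # data captured by this minute's snapshot
        backlog_gb = max(0.0, backlog_gb - drain_gb_per_sec * 60)  # replicated to EBS
        peak_gb = max(peak_gb, backlog_gb)
        if minute >= job_minutes and backlog_gb == 0.0:
            return peak_gb, minute
    raise RuntimeError("replication did not catch up within max_minutes")


# Hypothetical scenario: 10 GB/sec of writes for 10 minutes, 2 GB/sec drain rate.
peak, caught_up = simulate_backlog(write_gb_per_sec=10.0,
                                   drain_gb_per_sec=2.0,
                                   job_minutes=10)
print(f"peak unreplicated data: {peak:.0f} GB, caught up after {caught_up} minutes")
```

The model shows why the time between the end of a job and powering down the NVMe instance matters: until the backlog reaches zero, the most recent writes exist only on the NVMe devices.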

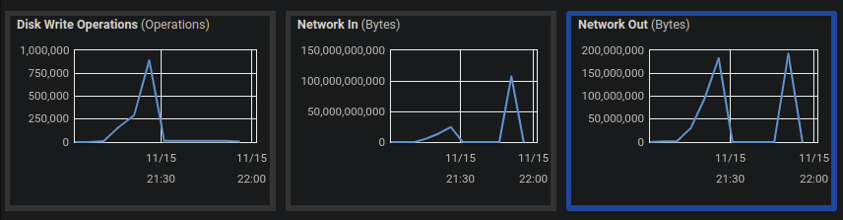

Here’s a sample CloudWatch screengrab taken off the SoftNAS instance after one of the FIO random write tests. We see more than 100 Gbps Network In (writes to SoftNAS) and approaching 900,000 random write IOPS. The reads (not shown here) clocked in at more than 1,000,000 IOPS (less than the 2 million IOPS AWS says the NVMe can deliver – it would take more than 100 Gbps networking to reach the full potential of the NVMe).

One thing that surprised us is there’s virtually no observable difference in random vs. sequential performance with NVMe. Because NVMe comprises high-speed memory that’s directly attached to the system bus, we don’t see the usual storage latency differences between random seek vs. sequential workloads – it all performs at the same speed over NVMe.

The level of performance delivered over EFA networking to and from NVMe for Linux workloads is impressive. It is the fastest SoftNAS Labs has ever observed running in the AWS cloud: more than 1 million read IOPS at 15 GB/second, and 876,000 write IOPS at 12.2 GB/second.
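Throughput and IOPS are linked by the average I/O size (throughput = IOPS × I/O size). The sketch below derives the I/O size implied by the figures above. It assumes decimal units (1 GB = 10^9 bytes) and that each IOPS figure and its paired throughput figure come from the same test run, which the results above do not state explicitly.

```python
def implied_io_size_kb(throughput_gb_per_sec: float, iops: float) -> float:
    """Average I/O size in KB (decimal) implied by a throughput and IOPS pair."""
    bytes_per_sec = throughput_gb_per_sec * 1e9
    return bytes_per_sec / iops / 1e3


read_kb = implied_io_size_kb(15.0, 1_000_000)
write_kb = implied_io_size_kb(12.2, 876_000)
print(f"implied average read I/O size:  {read_kb:.1f} KB")
print(f"implied average write I/O size: {write_kb:.1f} KB")
```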

This HPC storage configuration for Linux can be used to satisfy many use cases, including:

- Commercial HPC workloads

- Deep learning workloads based upon Python-based ML frameworks like TensorFlow, PyTorch, Keras, MxNet, Sonnet, and others that require feeding massive amounts of data to GPU compute clusters

- 3D modeling and simulation workloads

- HPC container workloads

Buurst SoftNAS Solutions for HPC Windows Server and SQL Server Workloads

This solution leverages the Elastic Network Adapter (ENA) and AWS cluster placement groups with i3en family instances and 25 Gbps networking. Buurst testing measured up to 2.7 GB/second read and 2.9 GB/second write throughput on Windows Server running CrystalDiskMark benchmarks. We did not have time to benchmark SQL Server in this mode, something we plan to do later.

Unfortunately, Windows Server on AWS does not support the 100 Gbps EFA driver, so at the time of these tests, placement group networking with Windows Server was limited to 25 Gbps via ENA only.
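For context, the measured Windows throughput can be compared with the theoretical ceiling of a 25 Gbps link. This is a rough sketch that ignores protocol overhead (an assumption); the function name is illustrative.

```python
def link_utilization(measured_gb_per_sec: float, link_gbps: float) -> float:
    """Fraction of the raw link rate used by a measured throughput."""
    ceiling_gb_per_sec = link_gbps / 8.0  # bits to bytes
    return measured_gb_per_sec / ceiling_gb_per_sec


ENA_LINK_GBPS = 25.0
for label, measured in (("read", 2.7), ("write", 2.9)):
    share = link_utilization(measured, ENA_LINK_GBPS)
    print(f"{label}: {measured} GB/sec is {share:.1%} of the 25 Gbps ceiling")
```

By this estimate, the Windows results are already close to what the 25 Gbps network can carry, so the network rather than the NVMe storage is the likely limit.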

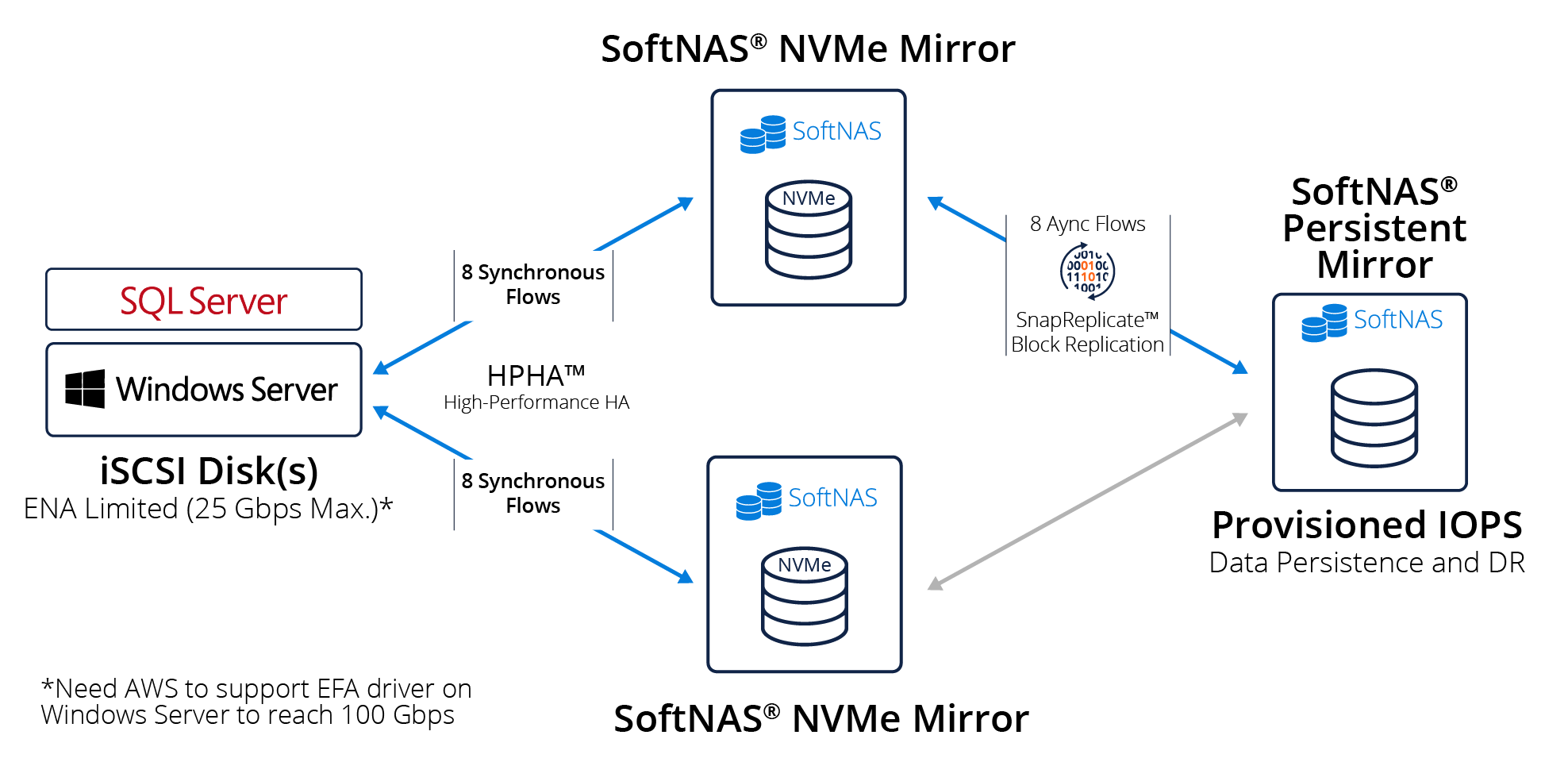

The following block diagram shows the system architecture used to attain these results.

To provide high availability and high performance, which Buurst calls High-Performance HA (HPHA), it’s necessary to combine two SoftNAS NVMe-backed instances deployed into an iSCSI mirror configuration. The mirrors use synchronous I/O to ensure transactional integrity and high availability.

SnapReplicate uses snapshot-based block replication to persist the NVMe data to provisioned IOPS EBS (or any EBS class or S3) for DR. The DR node can be in a different zone or region, as dictated by the DR requirements. We chose provisioned IOPS to minimize persistence latency.

Windows Server supports a broad range of applications and workloads. We increasingly see SQL Server, Postgres, and other SQL workloads being migrated into the cloud. It’s common to see various large-scale enterprise applications like SAP, SAP HANA, and other SQL Server and Windows Server workloads that require both high performance and high availability.

The above configuration leveraging NVMe-backed instances enables AWS to support more demanding enterprise workloads for data warehousing, OLAP, and OLTP use cases. Buurst SoftNAS HPHA allows high-performance synchronous mirroring across NVMe instances with high availability and the level of data persistence and DR required by many business-critical workloads.

Buurst SoftNAS for HPC solutions

AWS i3en instances deliver a massive amount of punch in CPU horsepower, cache memory, and up to 60 terabytes of NVMe storage. The EFA driver, coupled with cluster placement group networking, delivers high-performance 100 Gbps networking and HPC levels of IOPS and throughput. The addition of Buurst SoftNAS makes data persistence and high availability possible to more fully leverage the power these instances provide. This combination works well for Linux-based workloads today.

However, the lack of Elastic Fabric Adapter support for full 100 Gbps networking with Windows Server is undoubtedly a sore spot, one we hope that AWS and Microsoft teams are working to resolve.

The future for HPC in AWS looks bright.

We can imagine a day when more than 100 Gbps networking becomes available, enabling customers to take full advantage of the 2 million IOPS the NVMe SSDs remain poised to deliver.

Buurst SoftNAS for HPC solutions operates very cost-effectively on as few as a single node for workloads that do not require high availability or as few as two nodes with HA. Unlike other storage solutions that require a minimum of six (6) i3en nodes, the SoftNAS solution provides cost-effectiveness, HPC performance, high availability, and persistence with DR options across all AWS zones and regions.

Buurst SoftNAS and AWS are well-positioned today with commercial off-the-shelf products that, when combined, clear the way to move numerous HPC, Windows Server, and SQL Server workloads from on-premises data centers into the AWS cloud. And since SoftNAS is available on demand via the AWS Marketplace, customers with these types of demanding needs are just minutes away from achieving HPC in the cloud. SoftNAS is available to assist partners and customers in quickly configuring and performance-tuning these HPC solutions.

About Buurst™

Buurst is a data performance software company that provides solutions for enterprise data in the cloud or at the edge. The company offers high availability, secure access, and performance-based pricing for organizational data with the industry’s only No Downtime Guarantee for cloud storage. To learn more, follow the company on Twitter, LinkedIn, and Vimeo.

To learn more or discuss your HPC and SQL Server migration needs, please contact Buurst or an authorized partner.