High Performance Computing Solutions (HPC Storage Solutions) in the Cloud – Why We Need It, and How to Make It Work.

Novartis successfully completed a cancer drug project in AWS. The pharma giant leased 10,000 EC2 instances with about 87,000 compute cores for 9 hours at a disclosed cost of approximately $4,200. They estimated that purchasing the equivalent on-prem hardware, plus the associated expenses required to complete the same tasks, would have cost approximately $40M. Clearly, High-Performance Computing (HPC) in the cloud is a game-changer. It reduces CAPEX and computing time, and it provides a level playing field for all, because you don’t have to make a huge investment in infrastructure. Yet, after all these years, cloud HPC hasn’t taken off as one would expect. The reasons are many, but one big deterrent is storage.

Currently available AWS and Azure services have throughput, capacity, pricing, or cross-platform compatibility issues that make them less than adequate for cloud HPC workloads. For instance, AWS EFS requires a large minimum file system size to offer adequate throughput for HPC workloads. AWS EBS is a raw block device with a 16TB limit and requires an EC2 instance in front of it. AWS FSx for Lustre and FSx for Windows have issues similar to those of EBS and EFS.

The Azure Ultra SSD is still in preview. It currently supports only Windows Server and RHEL and is likely to be expensive as well. Azure Premium Files, also still in preview, has a 100TB share capacity that could be restrictive for some HPC workloads. Still, Microsoft promises throughput of 5GiB per second per share, with IOPS burstable to 100,000 per share.

Making Cloud High Performance Computing (HPC) storage work

Effective High Performance Computing in the cloud requires predictable performance. All components of the solution (compute, network, storage) have to be the fastest available to optimize the workload and leverage the massive parallel processing power available in the cloud. Burstable storage is not suitable, because the withdrawal of any resources will cause the process to fail.

With the SoftNAS Cloud NAS Filer, dedicated resources with predictable and reliable performance become available in a single comprehensive solution. There’s no need to purchase, integrate, and configure separate software. As a result, the solution can be deployed rapidly from the marketplace: you can have SoftNAS up and running in an hour.

The completeness of the solution also makes it easy to scale. As a business, you can select the compute and storage needed for your NAS and scale up the entire virtual cloud NAS as your needs increase.

You can customize the solution further to suit the specific needs of your business by choosing the type of drive you need and by choosing between CIFS and NFS sharing with high availability.

HPC Solutions in the cloud – A use case

SoftNAS has worked with clients to implement cloud HPC. In one case, a leading oil and gas corporation commissioned us to identify the fastest throughput performance achievable with a single SoftNAS instance in Azure, in order to facilitate migration of their internal E&P application suite.

The suite was running on-prem on NetApp SAN and current-gen HP ProLiant blade servers, with remote customers connecting to Hyper-V clusters running GPU-enabled virtual desktops.

Our team ascertained the required speeds for HPC in the cloud as:

- Sustained write speeds of 500MBps to a single CIFS share

- Sustained read speeds of 800MBps from a single CIFS share

High Performance Computing in the Cloud PoC – our learnings

- While the throughput performance criteria were achieved, the NVMe disks bundled with the LS64s_v2 are ephemeral, not persistent. In addition, the pool cannot be expanded with additional NVMe disks, only with SSDs. These factors eliminate this instance type from consideration.

- Enabling Accelerated Networking on all VMs within an Azure solution is critical to achieving the fastest performance possible.

- It appears that Azure Ultra SSDs could be the fastest storage product in any cloud. They are currently available only in beta in a single Azure region/AZ and cannot be tested with Marketplace VMs as of the time of publishing. On Windows 2016 VMs, we achieved 1.4GBps write throughput on a DS_v3 VM as part of the Ultra SSD preview program.

- When testing the performance of SoftNAS with client machines, it is important that the test machines have network throughput capacity equal to or greater than that of the SoftNAS VM and that accelerated networking is enabled.

- On pools composed of NVMe disks, adding a ZIL or read cache of mirrored premium SSD drives actually slows performance.

Achieving Cloud HPC Solutions/Success

SoftNAS is committed to leading the market as a provider of the fastest Cloud storage platform available. To meet this goal, our team has a game plan.

- Testing/benchmarking the fastest EC2 instances and Azure VMs (e.g., i3.16xlarge, i3.metal) with the fastest disks.

- Fast adoption of new cloud storage technologies (e.g., Azure Ultra SSD).

- For every POC, production deployment, or internal test of SoftNAS, measure the throughput and IOPS, and document the instance & pool configurations. This info needs to be accessible to our team so we can match configurations to required performance.
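As a minimal sketch of how such records might be kept and queried, the Python below stores the two AWS i3en results reported later in this article and filters them against throughput targets like those from the PoC above. This is not SoftNAS tooling: the class, field names, and labels are hypothetical. It assumes 1 GB = 1,000 MB and treats the "up to" figures as if they were sustained, which is optimistic. The PoC targets were set for a CIFS share on Azure, so pairing them with AWS results is purely illustrative.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class BenchmarkRecord:
    """One measured configuration (hypothetical schema)."""
    label: str
    platform: str
    instance_family: str
    read_mb_s: float
    write_mb_s: float
    read_iops: Optional[int] = None   # None when IOPS was not reported
    write_iops: Optional[int] = None


def matching_configs(records: List[BenchmarkRecord],
                     min_read_mb_s: float,
                     min_write_mb_s: float) -> List[BenchmarkRecord]:
    """Return the records that meet both throughput targets."""
    return [r for r in records
            if r.read_mb_s >= min_read_mb_s and r.write_mb_s >= min_write_mb_s]


# Figures taken from the Linux (EFA) and Windows (ENA) sections of this article.
RECORDS = [
    BenchmarkRecord("linux-efa", "AWS", "i3en", 15_000, 12_200,
                    read_iops=1_000_000, write_iops=876_000),
    BenchmarkRecord("windows-ena", "AWS", "i3en", 2_700, 2_900),
]

# PoC targets: 800 MB/s sustained read, 500 MB/s sustained write.
for record in matching_configs(RECORDS, min_read_mb_s=800, min_write_mb_s=500):
    print(record.label)
```

Keeping IOPS optional lets a record be stored even when a test reported only throughput, as with the Windows Server results.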

SoftNAS provides customers a unified, integrated way to aggregate, transform, accelerate, protect and store data and to easily create hybrid cloud solutions that bridge islands of data across SaaS, legacy systems, remote offices, factories, IoT, analytics, AI, and machine learning, web services, SQL, NoSQL and the cloud – any kind of data. SoftNAS works with the most popular public, private, hybrid, and premises-based virtual cloud operating systems, including Amazon Web Services, Microsoft Azure, and VMware vSphere.

SoftNAS Solutions for HPC Linux Workloads

This solution leverages the Elastic Fabric Adapter (EFA) and AWS clustered placement groups with i3en family instances and 100 Gbps networking. Buurst testing measured up to 15 GB/second random read and 12.2 GB/second random write throughput. We also observed more than 1 million read IOPS and 876,000 write IOPS from a Linux client, all measured with FIO benchmarks.
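The article does not list the FIO job parameters behind these numbers. For readers who want to run a comparable random-read or random-write test, here is a hedged Python sketch that only assembles an fio command line. The block size, queue depth, job count, runtime, file size, and target path are all assumptions chosen for illustration, not the settings Buurst used.

```python
import shlex

VALID_PATTERNS = {"read", "write", "randread", "randwrite"}


def fio_command(name, target, rw, block_size="16k", iodepth=32,
                numjobs=8, runtime_s=60, size="10g"):
    """Build an fio argument list. Every default value is an assumption."""
    if rw not in VALID_PATTERNS:
        raise ValueError(f"unsupported I/O pattern: {rw}")
    return [
        "fio",
        f"--name={name}",
        f"--filename={target}",   # hypothetical path on the mounted volume
        f"--rw={rw}",
        f"--bs={block_size}",
        f"--size={size}",
        "--ioengine=libaio",      # Linux asynchronous I/O engine
        "--direct=1",             # bypass the client page cache
        f"--iodepth={iodepth}",
        f"--numjobs={numjobs}",   # parallel workers
        f"--runtime={runtime_s}",
        "--time_based",
        "--group_reporting",      # one combined report for all workers
    ]


cmd = fio_command("randread-test", "/mnt/hpc-volume/fio.dat", "randread")
print(shlex.join(cmd))
```

The sketch prints the command rather than executing it, so it can be reviewed and adjusted before being pointed at real storage.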

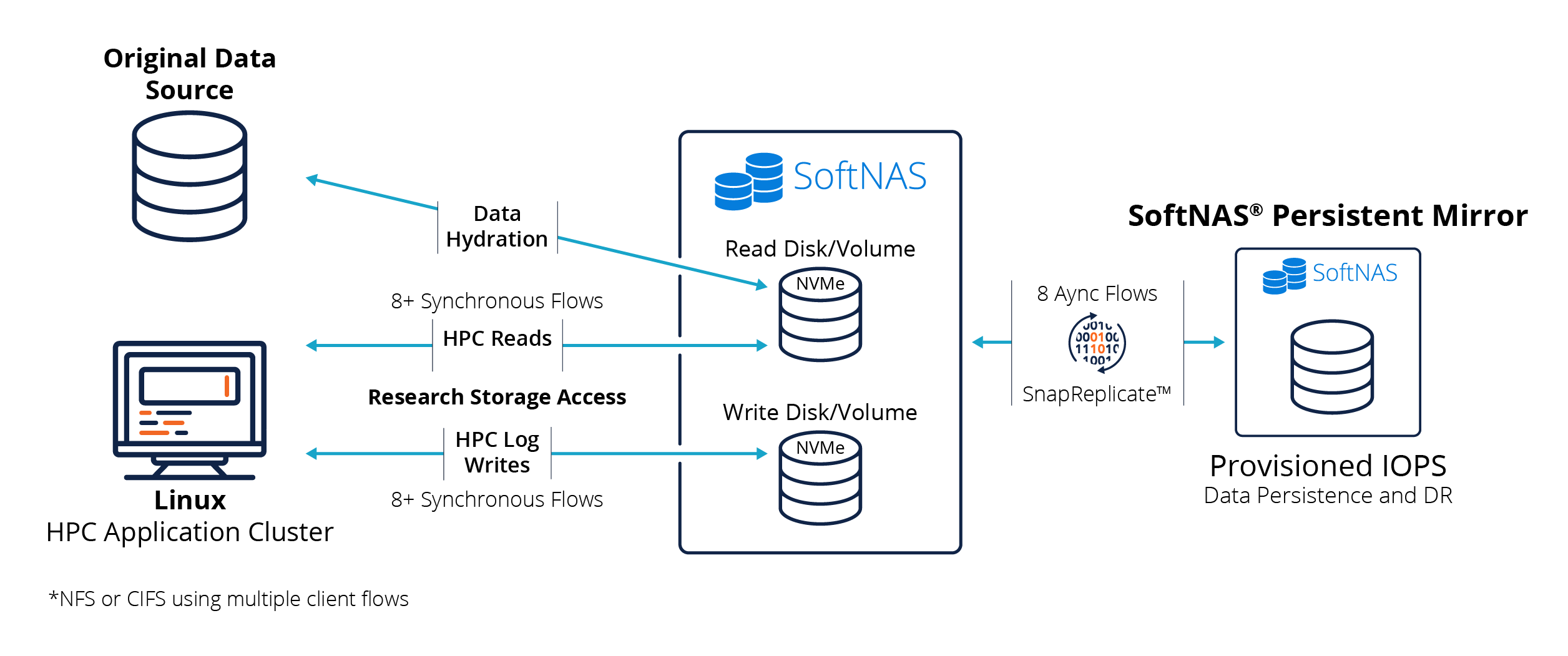

The following block diagram shows the system configuration used to attain these results.


The NVMe-backed instance contained storage pools and volumes dedicated to HPC read and write tasks. Another SoftNAS Persistent Mirror instance leveraged SoftNAS’ patented SnapReplicate® asynchronous block replication to EBS provisioned IOPS for data persistence and DR.

In real-world HPC use cases, one would likely deploy two separate NVMe-backed instances – one dedicated to high-performance read I/O traffic and the other for HPC log writes. We used eight or more synchronous iSCSI data flows from a single HPC client node in our testing. It’s also possible to leverage NFS across a cluster of HPC client nodes, providing eight or more client threads, each accessing storage. Each “flow,” as it’s called in placement group networking, delivers 10 Gbps of throughput. Maximizing the available 100 Gbps network requires leveraging 8 to 10 or more such parallel flows.
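The flow arithmetic above can be sketched in a few lines of Python. The function names are hypothetical, and the model is deliberately simple: it assumes each flow reaches its full 10 Gbps and ignores protocol overhead.

```python
import math


def flows_needed(link_gbps, per_flow_gbps):
    """Smallest number of parallel flows that can fill the link."""
    if per_flow_gbps <= 0:
        raise ValueError("per-flow throughput must be positive")
    return math.ceil(link_gbps / per_flow_gbps)


def aggregate_gbps(flows, per_flow_gbps, link_gbps):
    """Best-case combined throughput, capped at the link speed."""
    return min(flows * per_flow_gbps, link_gbps)


print(flows_needed(100, 10))        # 10
print(aggregate_gbps(8, 10, 100))   # 80
```

Under these assumptions, eight flows top out at 80 Gbps, and ten are needed before the 100 Gbps link itself becomes the limit.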

Persistence of the data on the NVMe SSDs runs in the background, asynchronously to the HPC job itself. Provisioned IOPS is the fastest EBS persistent storage on AWS. SoftNAS’ underlying OpenZFS filesystem takes storage snapshots once per minute to aggregate groups of I/O transactions occurring at 10 GB/second or faster across the NVMe devices. These snapshots are then persisted to EBS using eight parallel SnapReplicate streams, trailing the near real-time NVMe HPC I/O slightly. When the HPC job settles down, the asynchronous persistence writes to EBS catch up, ensuring the data can be recovered when the NVMe instance is powered down or has to move to a different host for maintenance or patching.
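To get a feel for the numbers involved, the following Python sketch estimates how much data one snapshot interval can accumulate and how long the EBS side would need to catch up. It is a back-of-the-envelope model, not a description of SnapReplicate internals. The accumulated volume is an upper bound, since blocks overwritten within the interval are only replicated once, and the 2 GB/second persistence rate is a hypothetical value because the article does not state one.

```python
def data_per_snapshot_gb(write_gb_s, interval_s=60):
    """Upper bound on new data captured by one snapshot interval."""
    return write_gb_s * interval_s


def catch_up_seconds(backlog_gb, persist_gb_s):
    """Time to drain a backlog once the HPC job stops writing."""
    if persist_gb_s <= 0:
        raise ValueError("persistence rate must be positive")
    return backlog_gb / persist_gb_s


backlog = data_per_snapshot_gb(10.0)    # 10 GB/second for one minute
print(backlog)                          # 600.0
print(catch_up_seconds(backlog, 2.0))   # 300.0, using the hypothetical rate
```

Whenever the persistence rate is lower than the NVMe write rate, the backlog grows while the job runs, which is why the catch-up happens after the job settles down.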

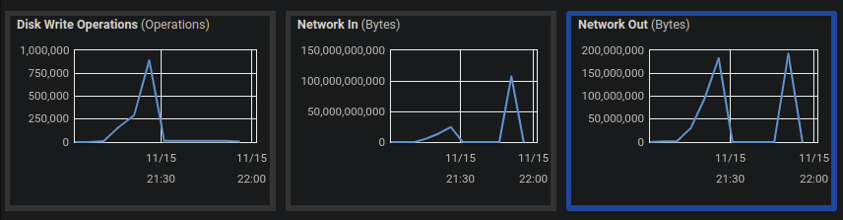

Here’s a sample CloudWatch screengrab taken off the SoftNAS instance after one of the FIO random write tests. We see more than 100 Gbps Network In (writes to SoftNAS) and approaching 900,000 random write IOPS. The reads (not shown here) clocked in at more than 1,000,000 IOPS (less than the 2 million IOPS AWS says the NVMe can deliver – it would take more than 100 Gbps networking to reach the full potential of the NVMe).


One thing that surprised us is that there’s virtually no observable difference between random and sequential performance with NVMe. Because NVMe devices are high-speed flash memory attached directly to the system bus, we don’t see the usual storage latency differences between random-seek and sequential workloads; it all performs at the same speed over NVMe.

The level of performance delivered over EFA networking to and from NVMe for Linux workloads is impressive – the fastest SoftNAS Labs has ever observed running in the AWS cloud – a million IOPS and 15 GB/second read performance and 876,000 write IOPS at 12.2 GB/second.
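Throughput and IOPS are linked by the average I/O size: throughput = IOPS × bytes per operation. As a rough sanity check, the Python sketch below derives the average I/O size implied by the figures above. It assumes decimal units (1 GB = 10^9 bytes) and that each throughput and IOPS pair came from the same test run, which the article does not confirm, so treat the results as indicative only.

```python
def implied_io_size_kb(throughput_gb_s, iops):
    """Average I/O size in KB implied by a throughput/IOPS pair."""
    if iops <= 0:
        raise ValueError("IOPS must be positive")
    bytes_per_io = throughput_gb_s * 1e9 / iops
    return bytes_per_io / 1e3


print(round(implied_io_size_kb(15.0, 1_000_000), 1))   # 15.0 (reads)
print(round(implied_io_size_kb(12.2, 876_000), 1))     # 13.9 (writes)
```

Both values land in the same range of roughly 14 to 15 KB per operation, so the read and write figures are at least mutually consistent under these assumptions.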

This HPC storage configuration for Linux can be used to satisfy many use cases, including:

- Commercial HPC workloads

- Deep learning workloads based upon Python-based ML frameworks like TensorFlow, PyTorch, Keras, MXNet, Sonnet, and others that require feeding massive amounts of data to GPU compute clusters

- 3D modeling and simulation workloads

- HPC container workloads.

SoftNAS Solutions for HPC Windows Server and SQL Server Workloads

This solution leverages the Elastic Network Adapter (ENA) and AWS clustered placement groups with i3en family instances and 25 Gbps networking. Buurst testing measured up to 2.7 GB/second read and 2.9 GB/second write throughput on Windows Server running CrystalDiskMark benchmarks. We did not have time to benchmark SQL Server in this mode, something we plan to do later.

Unfortunately, Windows Server on AWS does not support the 100 Gbps EFA driver, so at the time of these tests, placement group networking with Windows Server was limited to 25 Gbps via ENA only.
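To put the Windows Server results in context, this short Python sketch converts the 25 Gbps link speed to GB/second and expresses the measured throughput as a fraction of it. The function names are hypothetical, and the calculation uses the raw line rate (8 bits per byte, decimal units) without accounting for protocol overhead, so real headroom is somewhat smaller.

```python
def link_capacity_gb_s(link_gbps):
    """Raw line rate in GB/second (8 bits per byte)."""
    return link_gbps / 8


def utilization(measured_gb_s, link_gbps):
    """Fraction of the raw line rate that a measurement represents."""
    return measured_gb_s / link_capacity_gb_s(link_gbps)


print(link_capacity_gb_s(25))            # 3.125
print(round(utilization(2.7, 25), 3))    # 0.864 (reads)
print(round(utilization(2.9, 25), 3))    # 0.928 (writes)
```

On this simple model, the measured throughput sits at roughly 86% to 93% of the 25 Gbps line rate, which suggests the network, not the NVMe storage, is the limiting factor for Windows Server.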

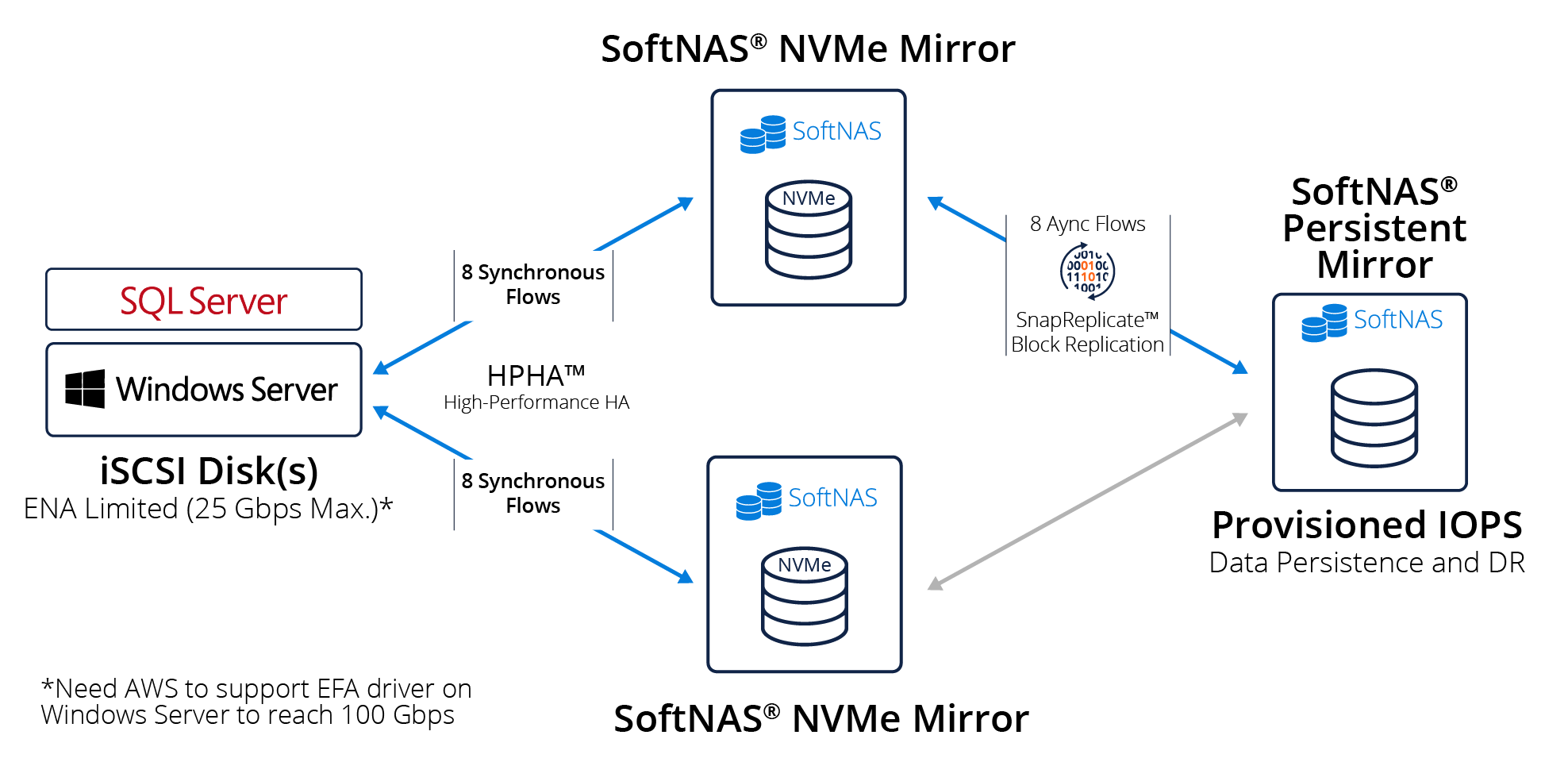

The following block diagram shows the system architecture used to attain these results.


To provide high availability and high performance, which Buurst calls High-Performance HA (HPHA), it’s necessary to combine two SoftNAS NVMe-backed instances deployed into an iSCSI mirror configuration. The mirrors use synchronous I/O to ensure transactional integrity and high availability.

SnapReplicate uses snapshot-based block replication to persist the NVMe data to provisioned IOPS EBS (or any EBS class or S3) for DR. The DR node can be in a different zone or region, as dictated by the DR requirements. We chose provisioned IOPS to minimize persistence latency.

Windows Server supports a broad range of applications and workloads. We increasingly see SQL Server, Postgres, and other SQL workloads being migrated into the cloud. It’s common to see large-scale enterprise applications like SAP, SAP HANA, and other SQL Server and Windows Server workloads that require both high performance and high availability.

The above configuration leveraging NVMe-backed instances enables AWS to support more demanding enterprise workloads for data warehousing, OLAP, and OLTP use cases. Buurst SoftNAS HPHA allows high-performance synchronous mirroring across NVMe instances, with high availability and the level of data persistence and DR required by many business-critical workloads.

Buurst SoftNAS for HPC solutions

AWS i3en instances deliver a massive amount of punch in CPU horsepower, cache memory, and up to 60 terabytes of NVMe storage. The EFA driver, coupled with clustered placement group networking, delivers high-performance 100 Gbps networking and HPC levels of IOPS and throughput. The addition of Buurst SoftNAS makes data persistence and high availability possible, so customers can more fully leverage the power these instances provide. This combination works well for Linux-based workloads today.

However, the lack of Elastic Fabric Adapter support for full 100 Gbps networking with Windows Server is undoubtedly a sore spot, one we hope the AWS and Microsoft teams are working to resolve.

The future for HPC in AWS looks bright. We can imagine a day when more than 100 Gbps networking becomes available, enabling customers to take full advantage of the 2 million IOPS the NVMe SSDs remain poised to deliver.

Buurst SoftNAS for HPC solutions operates very cost-effectively on as few as a single node for workloads that do not require high availability or as few as two nodes with HA. Unlike other storage solutions that require a minimum of six (6) i3en nodes, the SoftNAS solution provides cost-effectiveness, HPC performance, high availability, and persistence with DR options across all AWS zones and regions.

Buurst SoftNAS and AWS are well-positioned today with commercial off-the-shelf products that, when combined, clear the way to move numerous HPC, Windows Server, and SQL Server workloads from on-premises data centers into the AWS cloud. And since SoftNAS is available on-demand via the AWS Marketplace, customers with these types of demanding needs are just minutes away from achieving HPC in the cloud. SoftNAS is available to assist partners and customers in quickly configuring and performance-tuning these HPC solutions.