SoftNAS storage solutions for SAP HANA

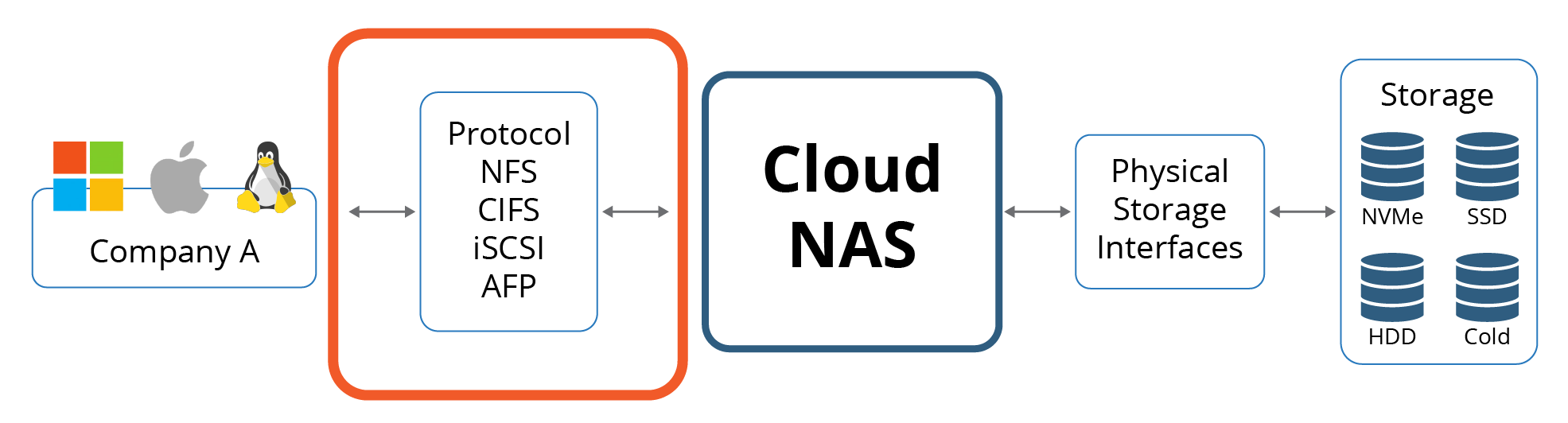

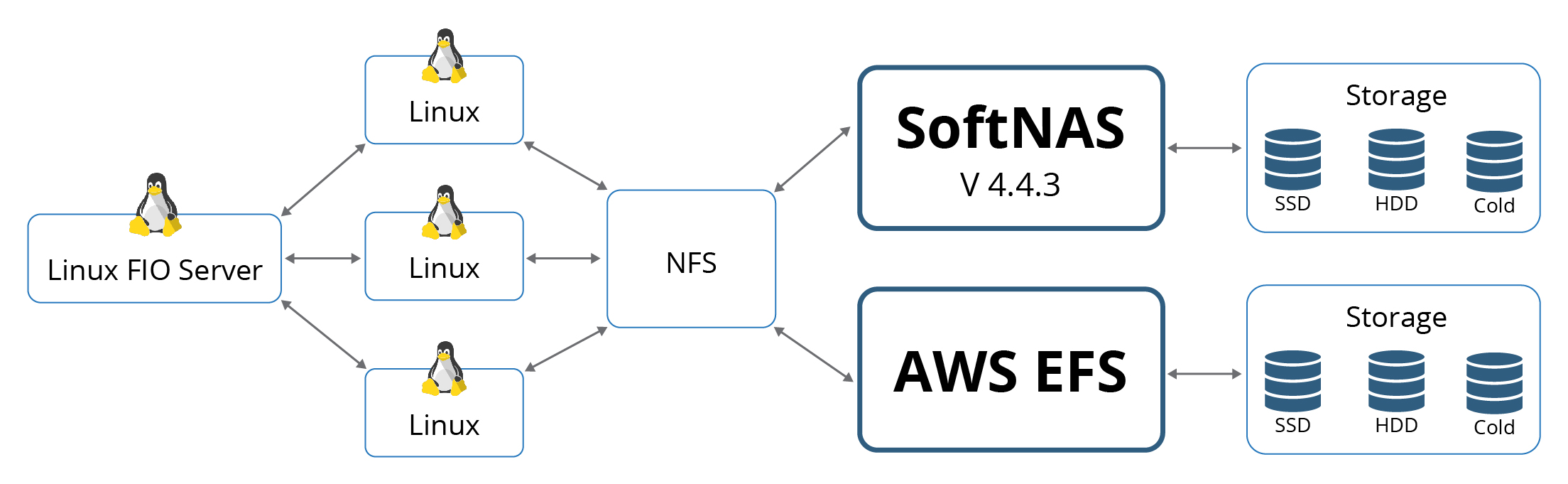

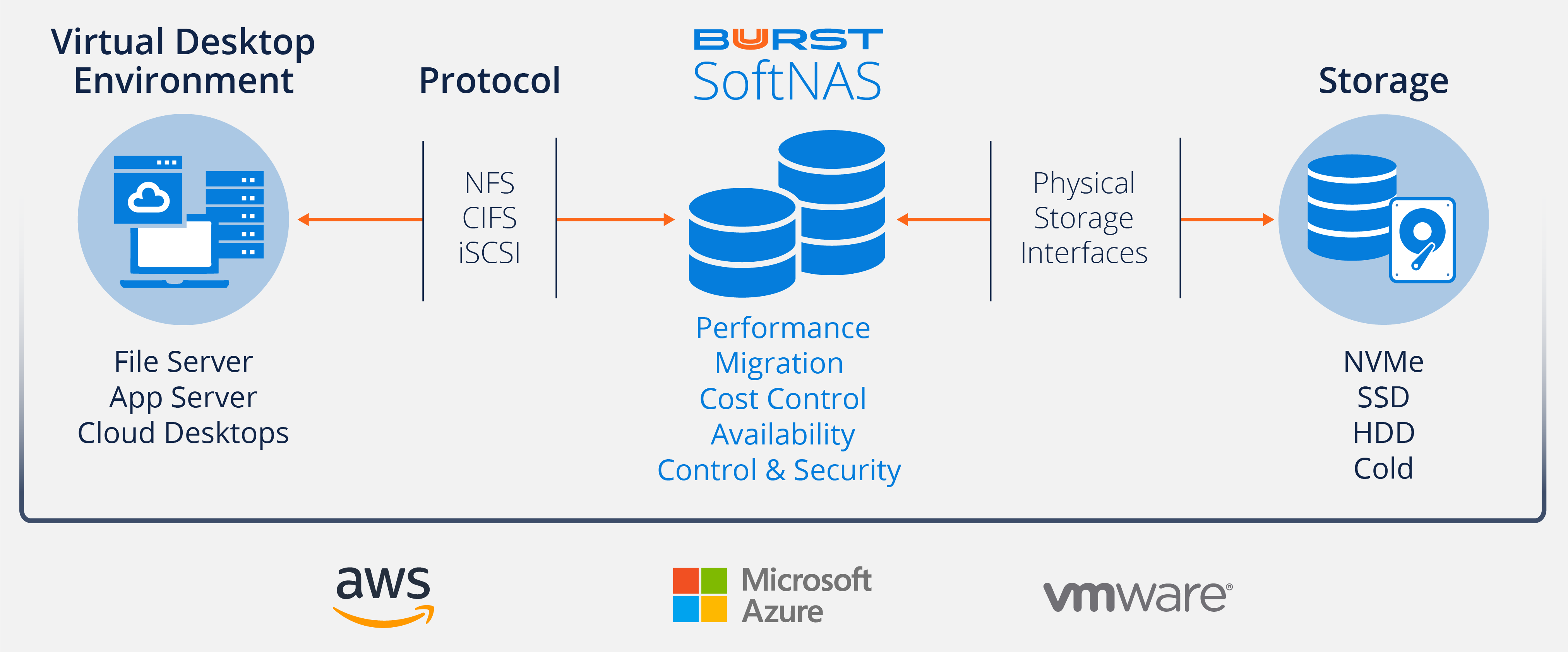

As we all know, one of the most critical parts of any system is storage. Buurst SoftNAS brings cloud storage performance to SAP HANA. This blog post explains the storage options available with SoftNAS for SAP HANA that can improve data performance and reduce your environment’s complexity. You will also learn how to choose specific storage options for your SAP HANA environment.

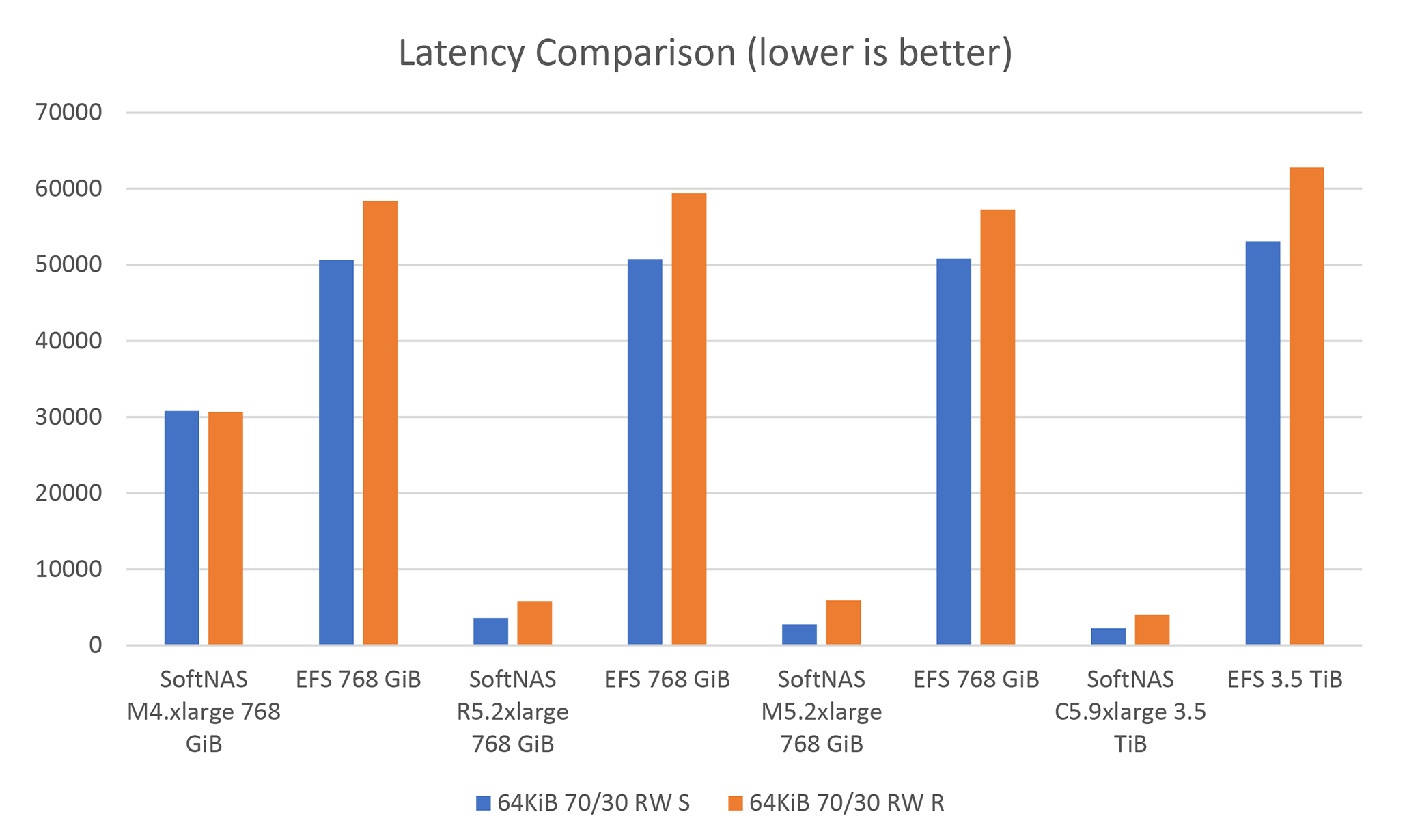

SAP HANA is a platform for data analysis and business analytics. HANA can provide insights from your data faster than traditional RDBMS systems. Because SAP HANA is optimized to work with real-time data, storage performance is a significant factor in how quickly it can deliver information.

Top 5 reasons to choose Buurst SoftNAS for SAP HANA

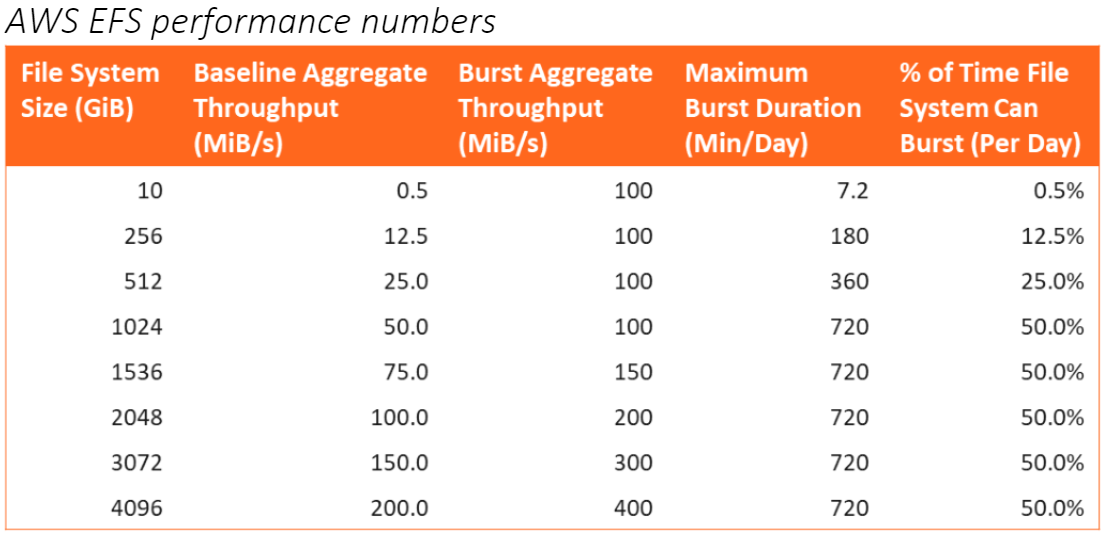

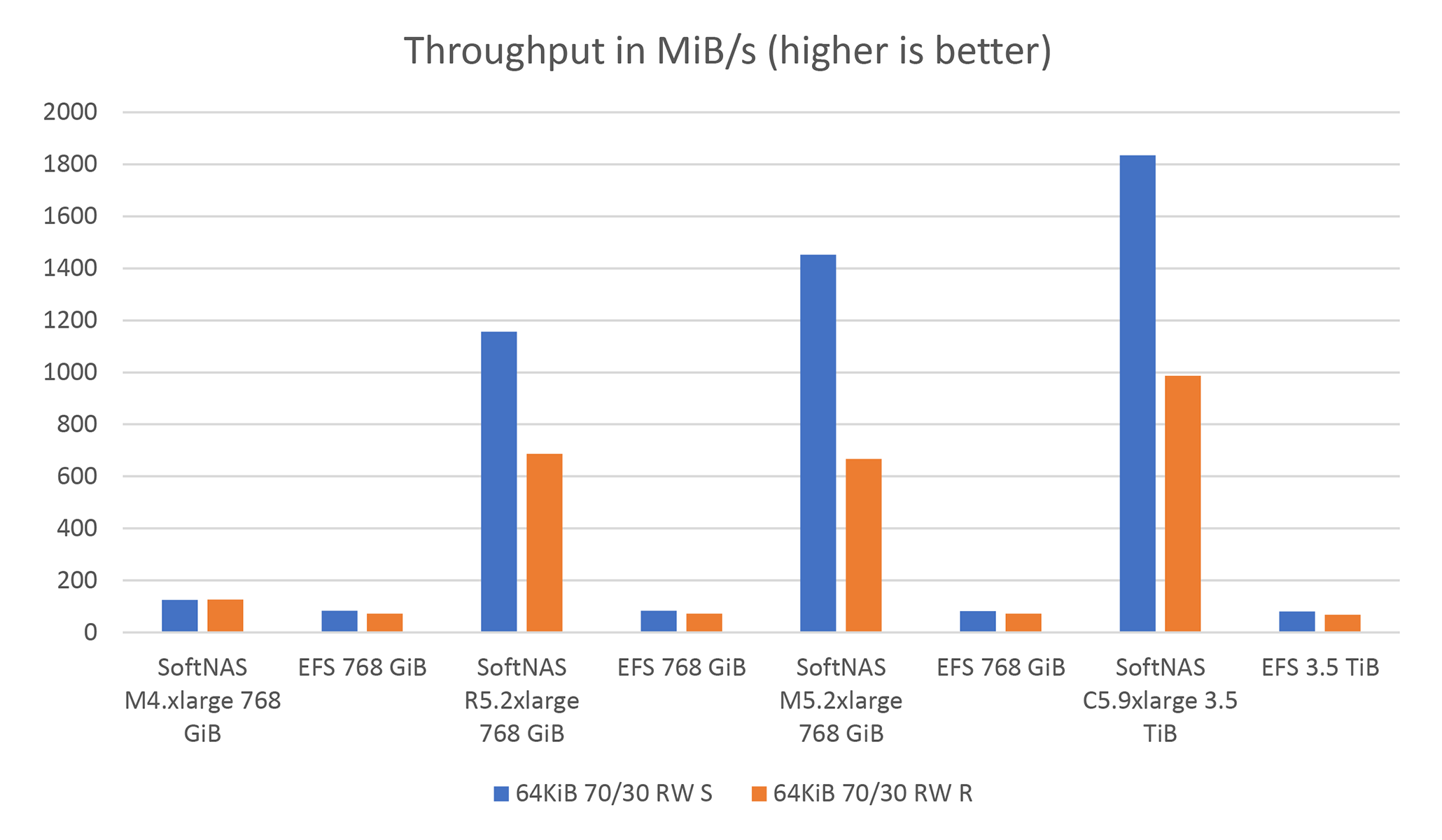

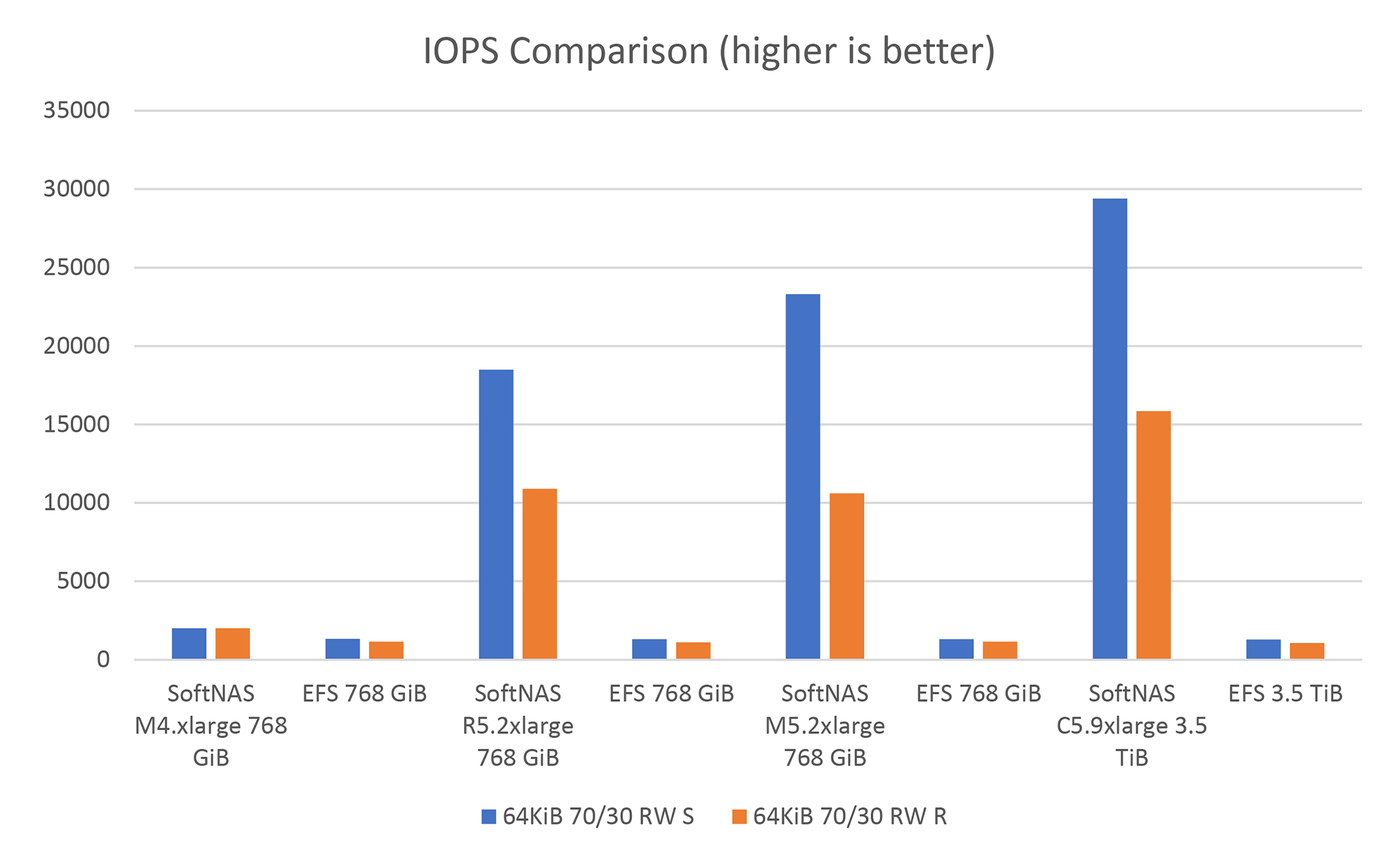

1. Superior performance for processing data

2. Maximum scale to process as much data as possible

3. High reliability

4. Low cost

5. Integration into existing infrastructure

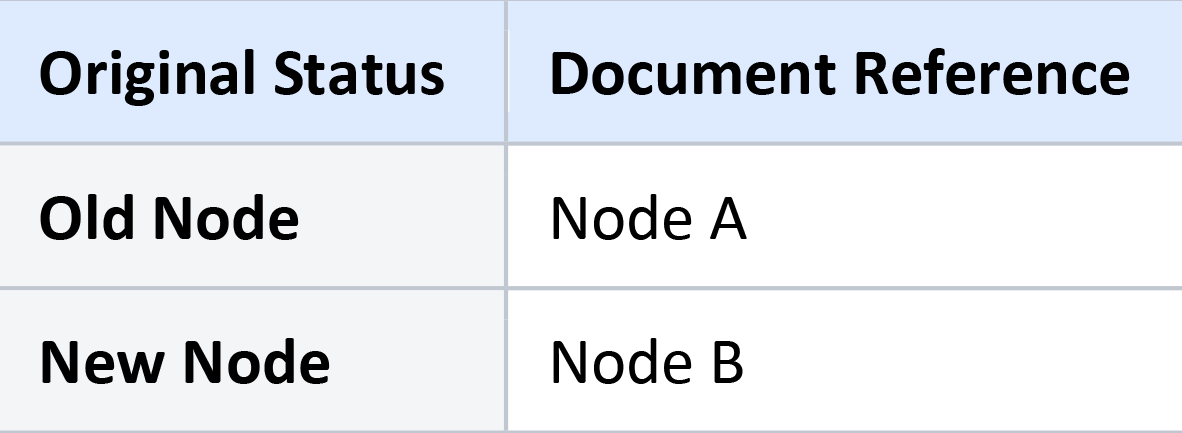

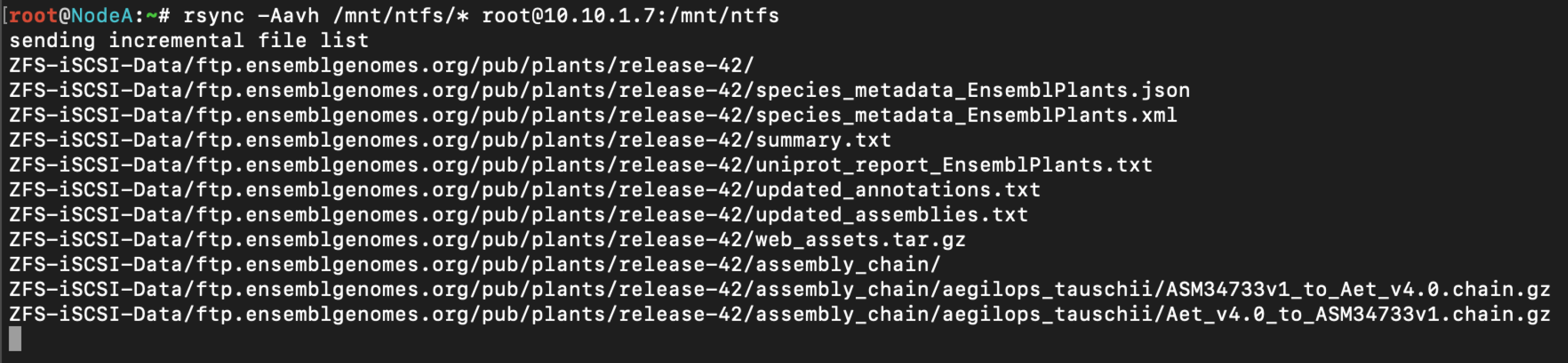

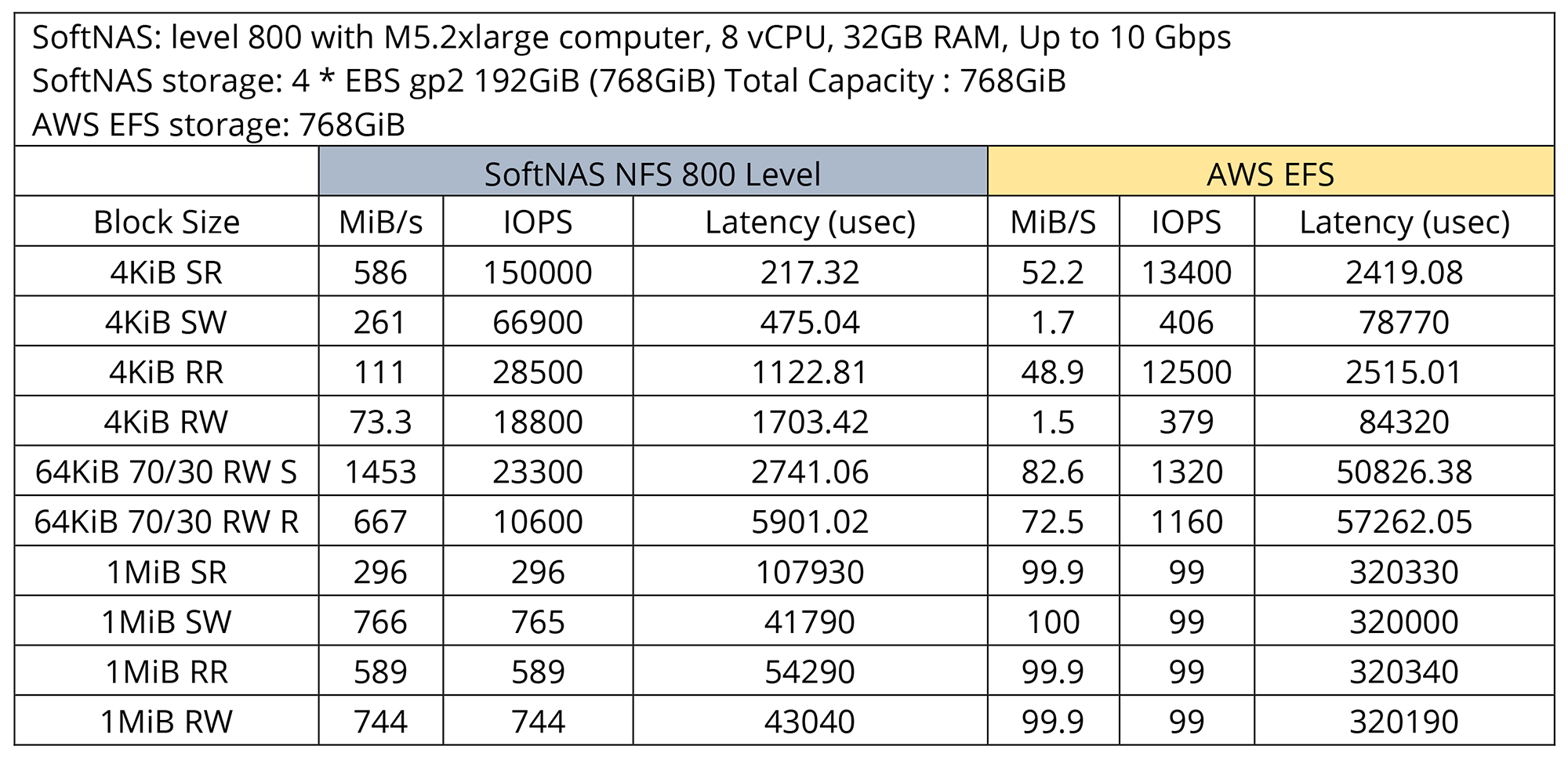

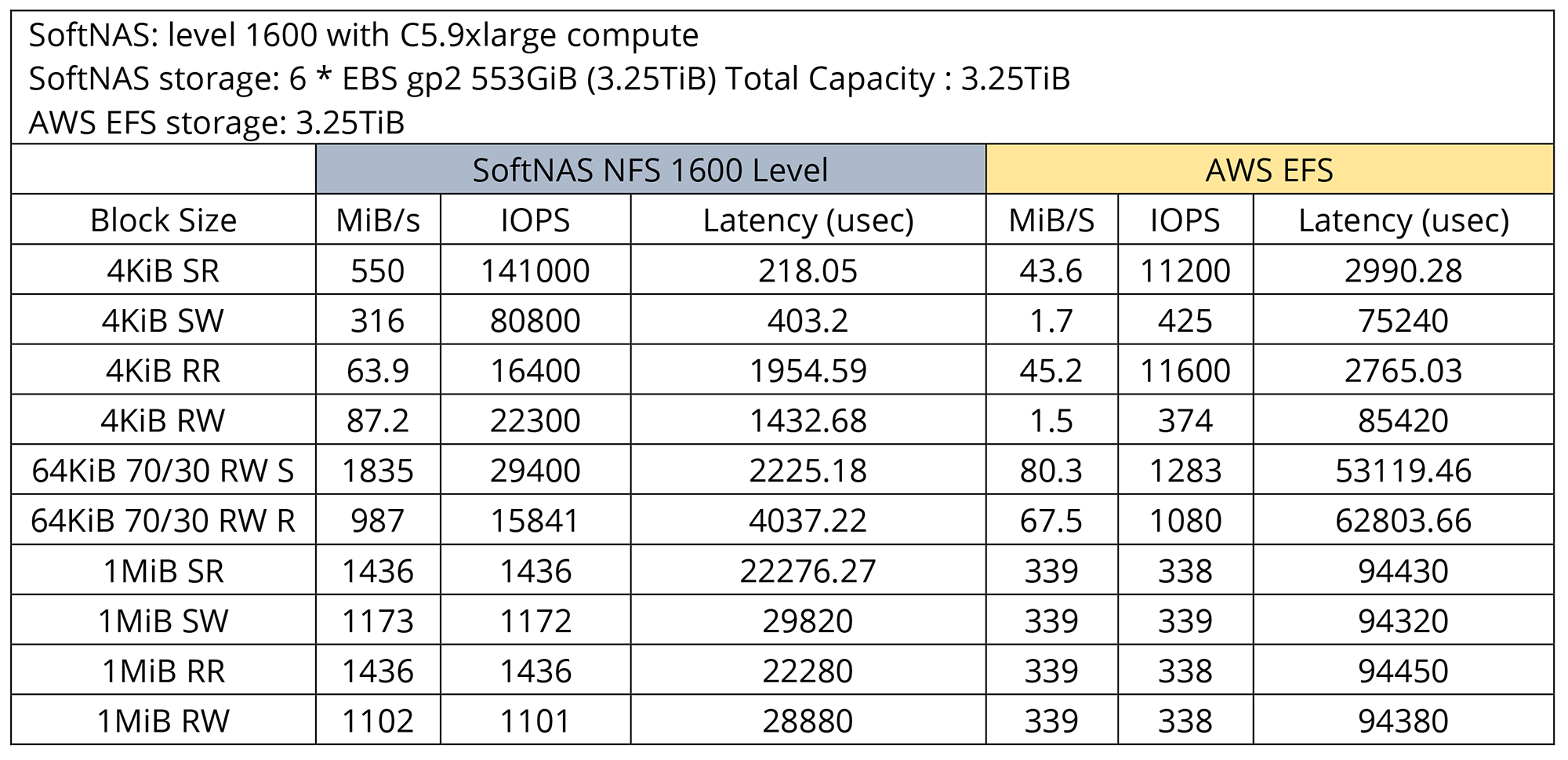

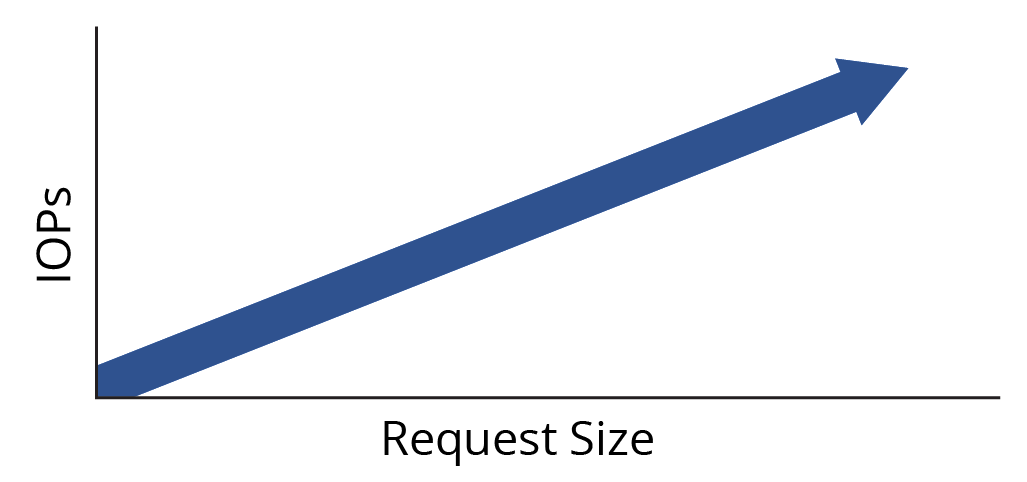

All data and metadata for the SAP HANA system are in shared objects. These objects are copied from data tables to logical tables and accessed by SAP HANA software. As this information grows, so does its impact on performance. SoftNAS addresses these performance bottlenecks and can accelerate operations that might otherwise be less efficient.

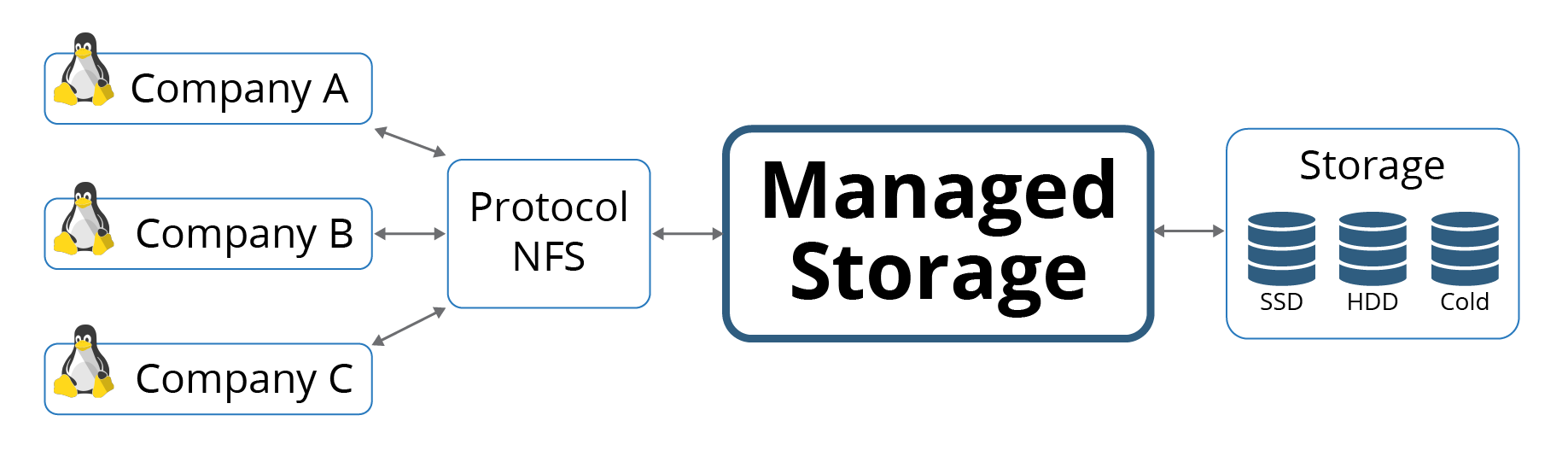

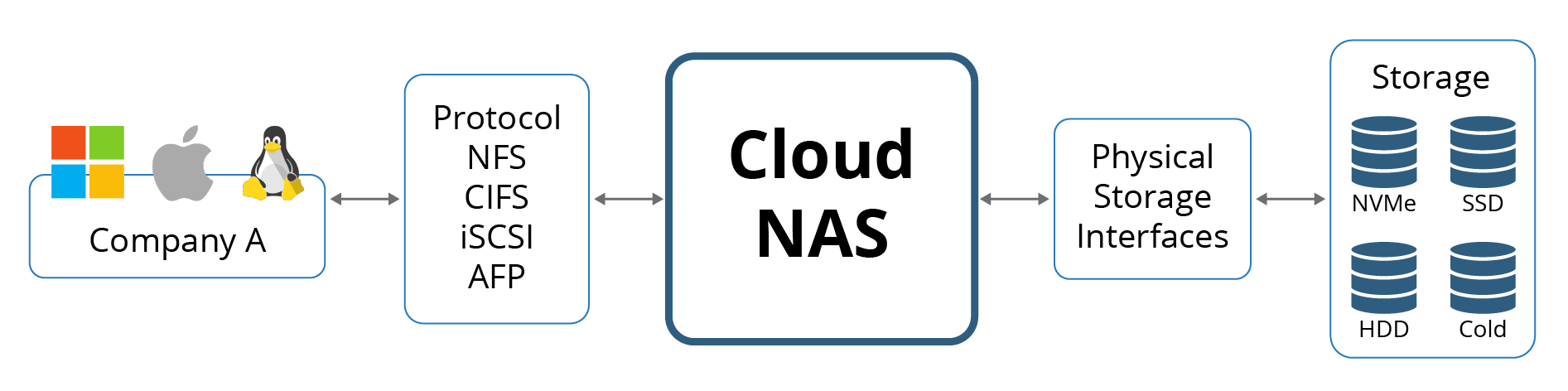

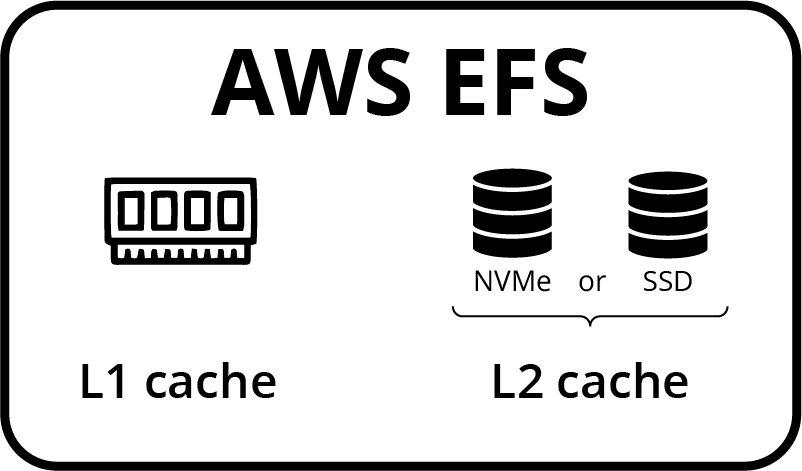

For example, the copy operation is likely to be faster if you deploy storage with a read cache. The read cache is implemented with NVMe or SSD drives and helps copy the parameters from source tables to specialization indexes. For tables that are written frequently, such as during ETL operations, storing data and logs in SoftNAS reduces complexity and improves resiliency, which lowers the overall risk of data loss. A cloud NAS can also improve response times for your data requests, along with other critical factors like resource management and disaster recovery.
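To make the read-cache idea concrete, here is a minimal sketch of a least-recently-used (LRU) cache sitting in front of a slower backing store. It is an illustration only, not how SoftNAS implements its cache: the `ReadCache` class, the dictionary standing in for disk, and the block counts are all assumptions made for this example.

```python
from collections import OrderedDict


class ReadCache:
    """Tiny LRU read cache in front of a slower backing store (illustrative only)."""

    def __init__(self, capacity, backing_store):
        self.capacity = capacity            # max number of blocks kept in the cache
        self.backing_store = backing_store  # a dict standing in for slow disk storage
        self.blocks = OrderedDict()         # cached blocks, least recently used first
        self.hits = 0
        self.misses = 0

    def read(self, block_id):
        if block_id in self.blocks:
            # Cache hit: mark the block as most recently used.
            self.blocks.move_to_end(block_id)
            self.hits += 1
            return self.blocks[block_id]
        # Cache miss: fetch from the backing store and keep a copy.
        self.misses += 1
        data = self.backing_store[block_id]
        self.blocks[block_id] = data
        if len(self.blocks) > self.capacity:
            # Evict the least recently used block.
            self.blocks.popitem(last=False)
        return data

    def hit_rate(self):
        total = self.hits + self.misses
        return self.hits / total if total else 0.0


# Hypothetical workload: a hot set of 5 blocks read 20 times each.
store = {i: f"block-{i}" for i in range(100)}
cache = ReadCache(capacity=10, backing_store=store)
for _ in range(20):
    for block_id in range(5):
        cache.read(block_id)

print(f"hits={cache.hits} misses={cache.misses} hit rate={cache.hit_rate():.2f}")
```

In this toy workload only the first read of each block goes to the backing store; every later read is served from the cache, which is the effect a fast NVMe or SSD read cache aims for on frequently read data.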

Storing your data and log volumes on a NAS can improve resilience. Using a NAS with RAID also adds redundancy if something goes wrong with one of your drives. RAID not only helps keep your data safe, it also helps you maintain a predictable level of performance when it comes time to scale up your software.
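As a rough guide to the redundancy trade-off, the sketch below computes usable capacity and the number of drive failures each standard RAID level is guaranteed to survive. The function name `raid_summary` and the example disk sizes are assumptions for illustration; which RAID levels are available depends on how your storage is configured.

```python
def raid_summary(level, disks, disk_tb):
    """Return usable capacity (TB) and guaranteed tolerated drive failures.

    Uses the textbook definitions of the standard RAID levels; real arrays
    reserve extra space for metadata, so treat the result as an upper bound.
    """
    if level == 0:
        minimum, usable, tolerated = 2, disks * disk_tb, 0
    elif level == 1:
        # All disks mirror each other.
        minimum, usable, tolerated = 2, disk_tb, disks - 1
    elif level == 5:
        # One disk's worth of capacity is used for parity.
        minimum, usable, tolerated = 3, (disks - 1) * disk_tb, 1
    elif level == 6:
        # Two disks' worth of capacity are used for parity.
        minimum, usable, tolerated = 4, (disks - 2) * disk_tb, 2
    elif level == 10:
        # Striped mirrors: half the capacity, at least one failure survivable.
        if disks % 2:
            raise ValueError("RAID 10 needs an even number of disks")
        minimum, usable, tolerated = 4, (disks // 2) * disk_tb, 1
    else:
        raise ValueError(f"unsupported RAID level: {level}")
    if disks < minimum:
        raise ValueError(f"RAID {level} needs at least {minimum} disks")
    return {"usable_tb": usable, "tolerated_failures": tolerated}


# Hypothetical pools built from 2 TB disks.
for level, disks in [(5, 4), (6, 6), (10, 4)]:
    print(f"RAID {level} with {disks} disks:", raid_summary(level, disks, 2.0))
```

The more failures a level tolerates, the more raw capacity it gives up, so the choice comes down to how much redundancy your data and log volumes need.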

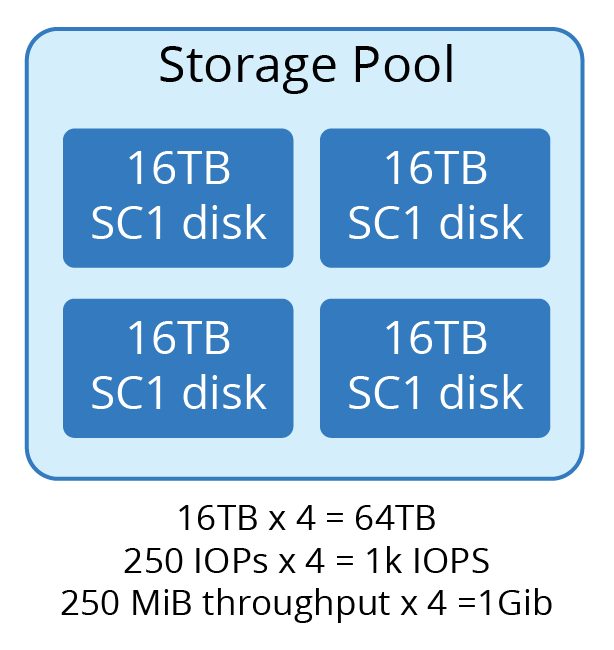

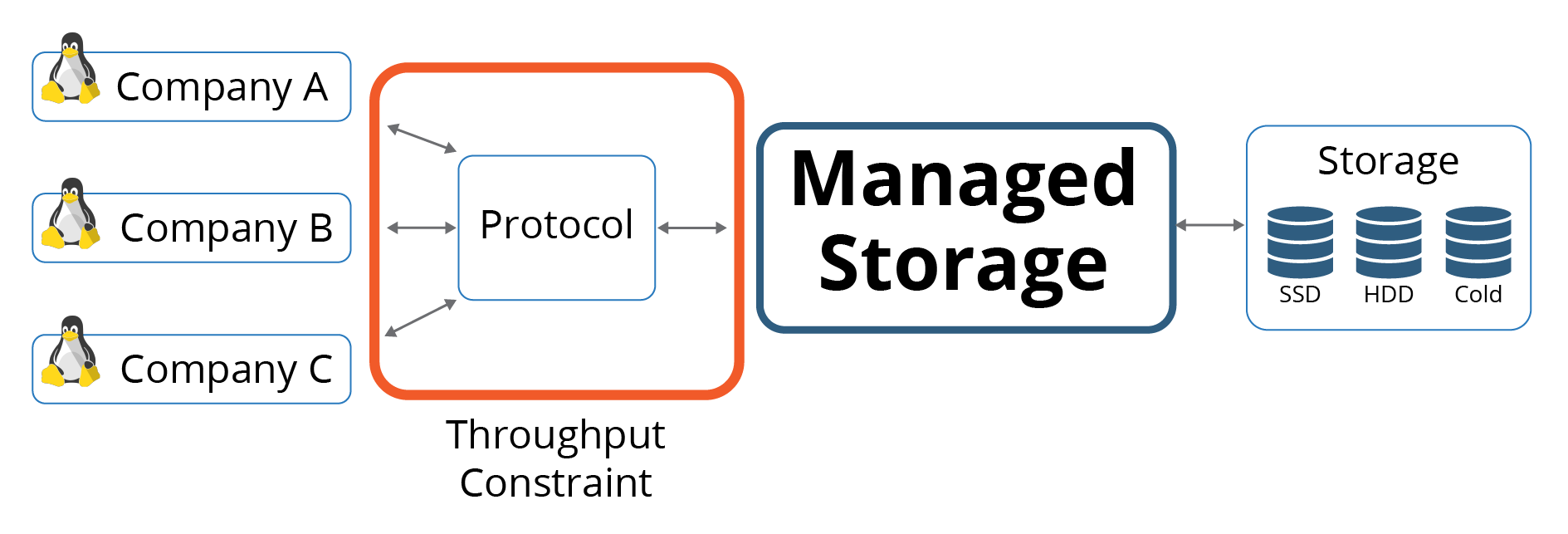

Partitioning of data volumes allows for efficient data distribution and high availability. Partitioning also helps you scale up your SAP HANA performance, which can be a challenge with only one large storage pool. It involves allocating more than one volume to each table and moving the information across volumes over time.

SAP HANA supports persistent memory for database tables. Persistent memory retains data in memory between server reboots. Normally, loading data after a restart takes time: the server must boot, load the data, and then refresh it. With SAP HANA deployed on SoftNAS storage, loading times are far less of a concern. Workloads that consume large amounts of data benefit significantly from persistent memory. While reading (that is, accessing) records from persistent memory takes a long time, writing to the memory works much better with SoftNAS.

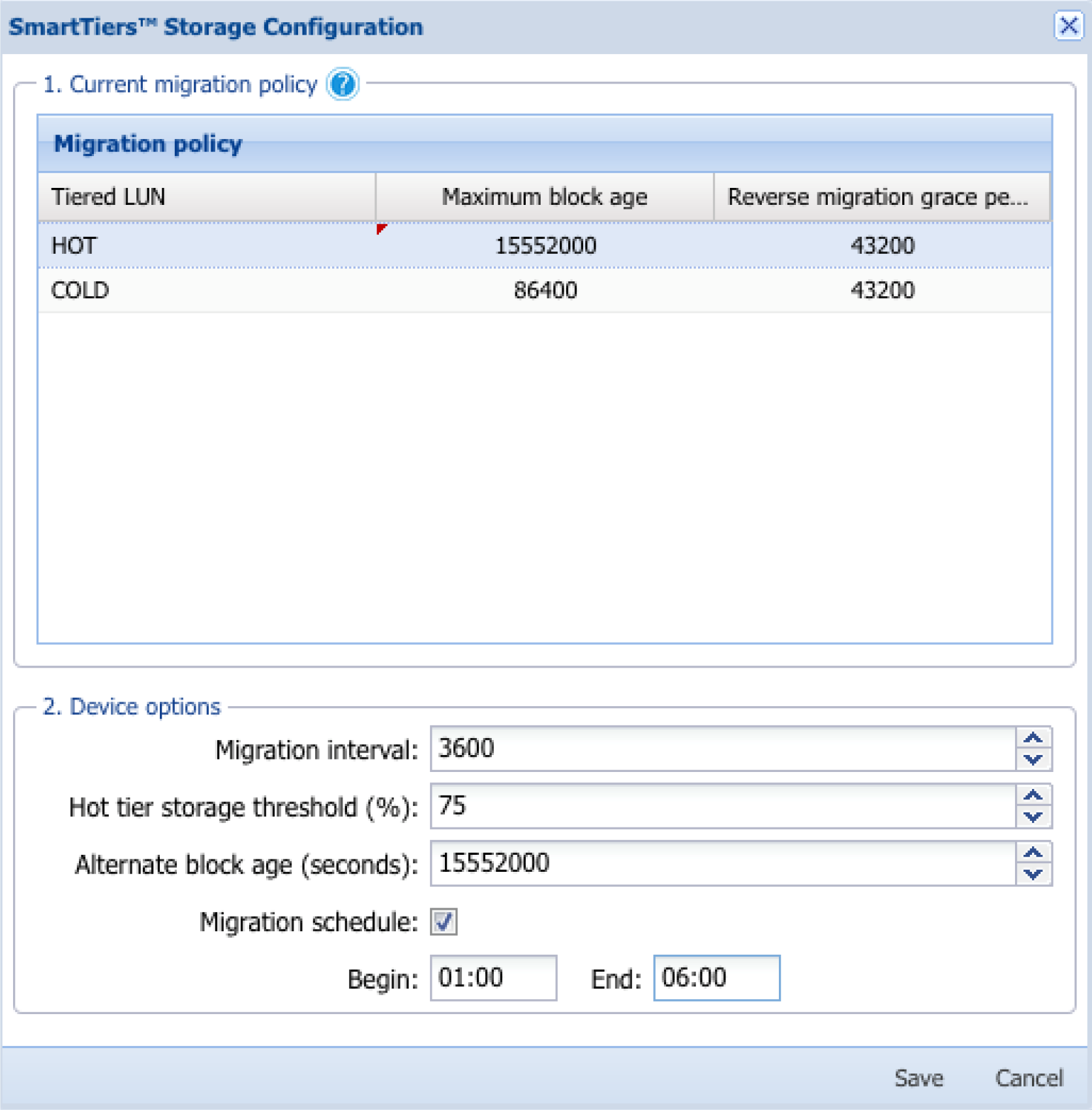

SoftNAS data snapshots let you back up SAP HANA multiple times a day without the overhead of file-based solutions. They eliminate lengthy consistency checks during backup and dramatically reduce the time needed to restore data. You can schedule multiple backups a day and complete restore and recovery operations in a matter of minutes.
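To see what "multiple times a day" means in practice, the sketch below spaces a chosen number of snapshots evenly across 24 hours and reports the interval between them, which is also the worst-case window of changes made since the last snapshot. The function `snapshot_schedule` and its parameters are hypothetical and only illustrate the arithmetic; they are not part of any SoftNAS or SAP HANA tooling.

```python
from datetime import datetime, timedelta


def snapshot_schedule(per_day, start="00:00"):
    """Return evenly spaced snapshot times (HH:MM) and the interval between them."""
    if per_day < 1:
        raise ValueError("per_day must be at least 1")
    interval = timedelta(hours=24) / per_day
    first = datetime.strptime(start, "%H:%M")
    times = [(first + i * interval).strftime("%H:%M") for i in range(per_day)]
    return times, interval


# Hypothetical policy: four snapshots a day, starting at midnight.
times, interval = snapshot_schedule(4)
print("snapshot times:", times)
print("worst-case window since last snapshot:", interval)
```

Doubling the number of snapshots halves that window, which is why low-overhead snapshots make a more frequent backup schedule practical.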

CPU and I/O offloading help to support high-performance data processing. Reducing CPU and I/O overhead increases database performance. By moving backup processes into the storage network, you free up server resources, enabling higher throughput and a lower Total Cost of Ownership (TCO).

You want to deploy SAP HANA because your business needs access to real-time information that allows you to make timely decisions with maximal value. SoftNAS is a cloud NAS that will enable you to develop real-time business applications, connecting your company with customers, partners, and employees in ways that you have never imagined before.