Scalability, virtualization, efficiency, and reliability are major Cloud Platform design goals of a cloud computing platform. Public cloud platforms like AWS and Azure offer a few different choices for persistent storage. Today I’m going to show you how to leverage a SoftNAS NAS storage appliance with these different storage types to scale your application to meet your specific performance and cost goals in the public cloud.

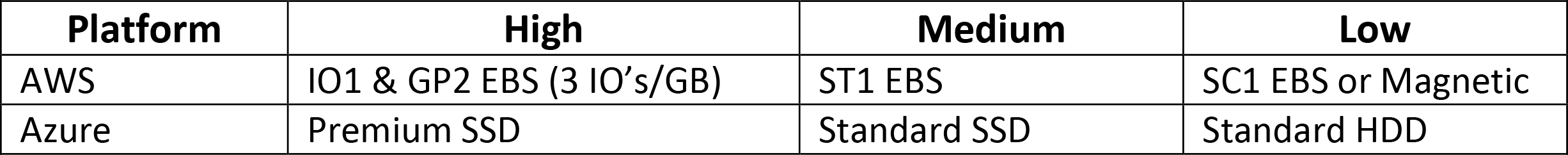

To get started, let’s take a quick look at these storage types in both AWS and Azure to understand their characteristics. The high-performance disk types are more expensive, and the cost decrease as the performance level decreases. Refer to the below table for a quick reference.

References below:

Because I’m going to use a SoftNAS as my primary storage controller in AWS or Azure, I can take advantage of all of the different disk types available on those platforms and design storage pools that meet each of my application performance and cost goals. I can create pools using high-performance devices along with pools that utilize magnetic media and object storage. I can even create tiered pools that utilize both SSD and HDD. Along with the flexibility of using different media types for my storage architecture, I can leverage the extra benefits of caching, snapshots, and file system replication that come along with using SoftNAS. There are tons of additional features that I could mention, but for this blog post, I’m only going to focus on the types of pools I can create and how to leverage the different disk types.

High-Performance Pools

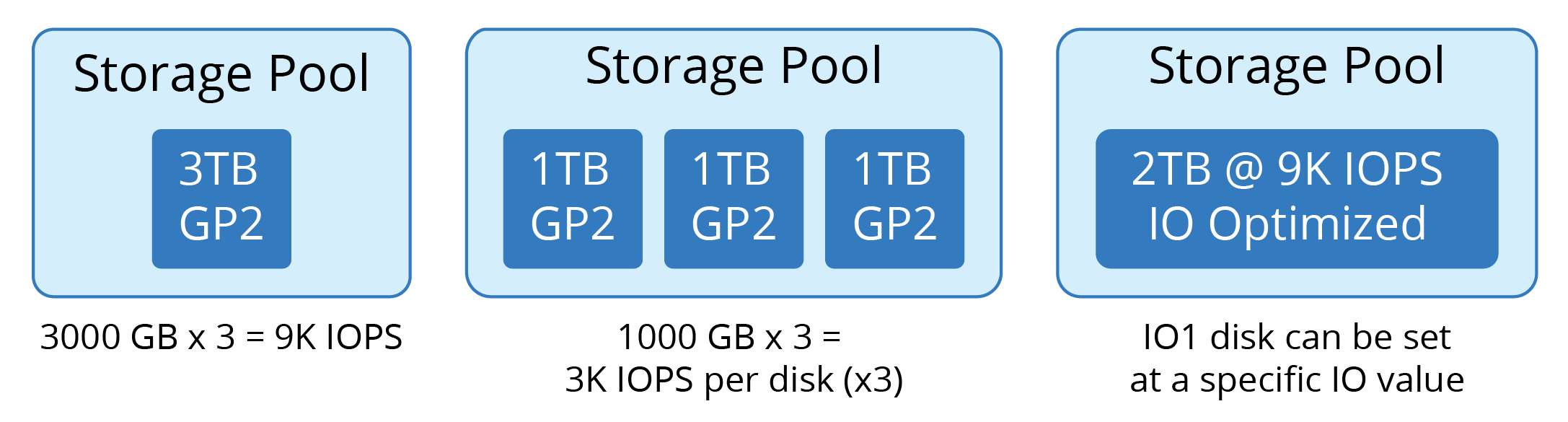

I’ll use AWS in this example. For an application that requires low latency and high IOPs, we would think about using SSDs like IO1 or GP2 as the underlying medium. Let’s say we need our application to have 9k available IOPs and at least 2TB of available storage. We can aggregate the devices in a pool to get the sum throughput and IO of all the devices combined, or we can provide a single IO Optimized volume to achieve the performance target. Let’s look at the underlying math and figure out what we should do.

We know that AWS GP2 EBS gives us 3 IOPs per GB of storage. With that in mind, 2TB would only give us 6k IOPs. That’s 3k short of our performance goal. To reach the 9k IOPs requirement, we would either need to provision 3TB of GP2 EBS disk or provision an IO Optimized (IO1) EBS disk and set the IOPS to 9k for that device.

Any of the below configurations would allow you to achieve this benchmark using Buurst’s SoftNAS.

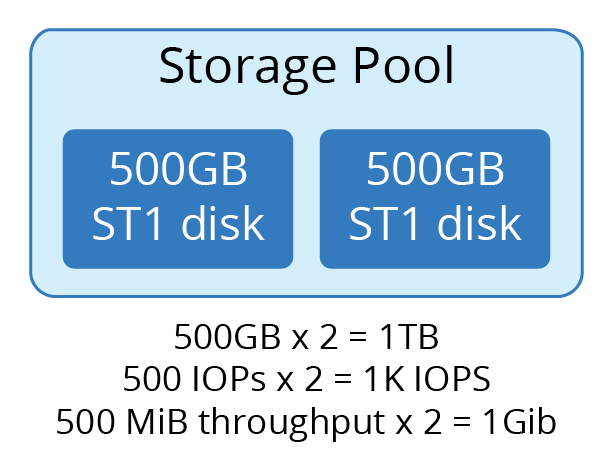

Throughput Optimized Pools

If your storage IO specification does not require low latency but does require a higher throughput level, then ST1 Type EBS may work well for you. ST1 disk types are going to be less expensive than the GP2 or IO1 type of devices. The same rules apply regarding aggregating the throughput of the devices to achieve your throughput requirements. If we look at the specs for ST1 devices (link above), we are allowed up to 500 IOP’s per device and a max of 500 MiB of throughput per device. If we require a 1TB volume to achieve 1GiB of throughput and 1000 IOPs, then we can design a pool with those requirements as well. It may look something like below:

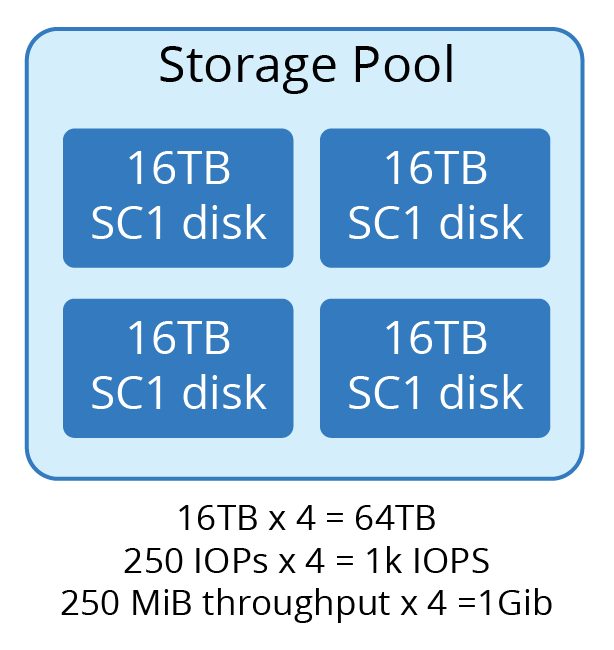

Pools for Archive and Less Frequently Accessed Data

If you require storing backups on disk or have a data set that is not frequently accessed, then you could save money by storing this data set on less expensive storage. Your options are going to be magnetic media or object storage. SoftNAS can also help you out with that. HDD in Azure or SC1 in AWS are good options for this. You can combine devices to achieve high capacity requirements for this infrequently accessed or archival data. The throughput limits on the HDD type devices are limited to 250MiB, but the capacity is higher, and the cost is much less when compared to SSD type devices. If we needed 64TB of cold storage in AWS, it might look like below. The largest device in AWS is 16TB, so that we will use four.

Tiered Pools

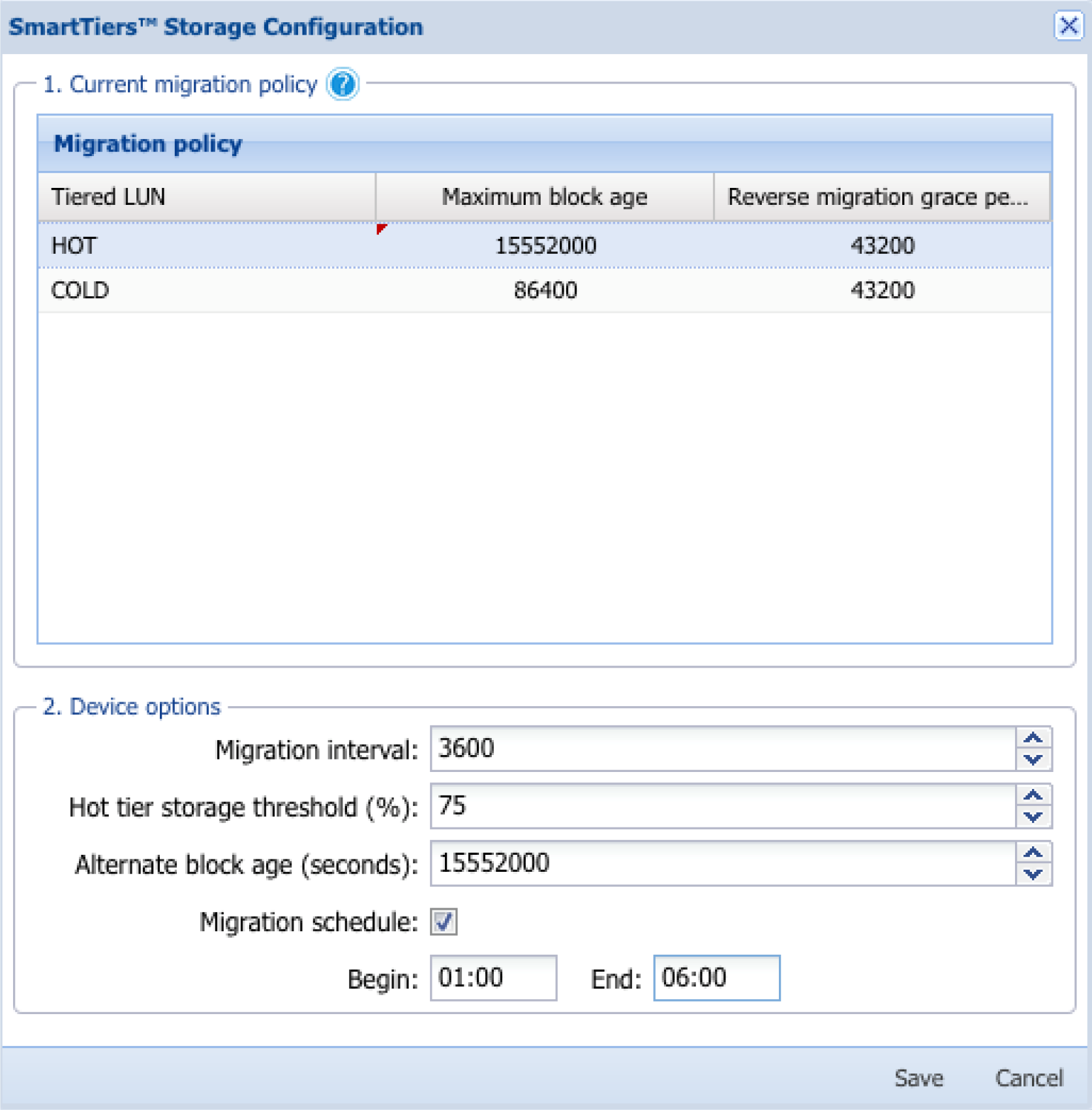

Finally, I will mention Tiered Pools. Tiered pools are a feature you can use in BUURST™ SoftNAS, whereby you can have different levels of performance all within the same pool. When you set up a tiered pool on SoftNAS, you can have a ‘hot’ tier made of fast SSD devices along with a ‘cold’ tier that is made of slower, less expensive HDD devices. You set block-level age policies that enable the less frequently accessed data to migrate down to the cold tier HDD devices while your frequently accessed data will remain in the hot tier on the SSD devices. Let’s say we want to provision 20TB of storage. We think that about 20% of our data would be active at any time, and the other 80% could be on cold storage. An example of what that tiered pool may look like is below.

The tier migration policy has the following configuration:

- Maximum block age: Age limit of blocks in seconds.

- Reverse migration grace period: If a block is requested from the lower tier within this period, it will be migrated back up.

- Migration interval: Time in seconds between checks.

- Hot tier storage threshold: If the hot tier fills to this level, data is migrated off.

- Alternate Block Age: Addition age to migrate blocks in the case of HOT tier becoming full.

Summary

If you are looking for a way to tune your storage pools based on your application requirements, then you should try SoftNAS. It gives you the flexibility to leverage and combine different storage mediums to achieve the cost, performance, and scalability that you are looking for. Feel free to reach out to BUURST™ sales team for more information.