Today I would like to show you how to create highly available NFS mounts for your Kubernetes pods using SoftNAS Cloud NAS. Sometimes the microservices we run on Kubernetes need to share common data, and a common NFS share is a natural way to do that. Since Kubernetes deployments are all about high availability, why not make our common NFS shares highly available as well? I’m going to show you a way to do that using a SoftNAS appliance to host the NFS mount points.

A Little Background on SoftNAS

SoftNAS is a software-defined storage appliance created by BUURST™ that you can deploy from the AWS Marketplace, from the Azure Cloud Marketplace, or as a VMware OVA. SoftNAS allows you to create aggregate storage pools from cloud disk devices. These pools are then shared over NFS, SMB, or iSCSI for your applications and users to consume.

SoftNAS can also run in HA mode, whereby you deploy two SoftNAS instances and fail over to the secondary node if the primary NFS server fails a health check. This means that my Kubernetes shared storage can fail over to another zone in AWS if my primary zone fails. This is accomplished with a floating VIP that moves between the primary and secondary nodes, depending on which one has the active file system.

The Basic SoftNAS Architecture

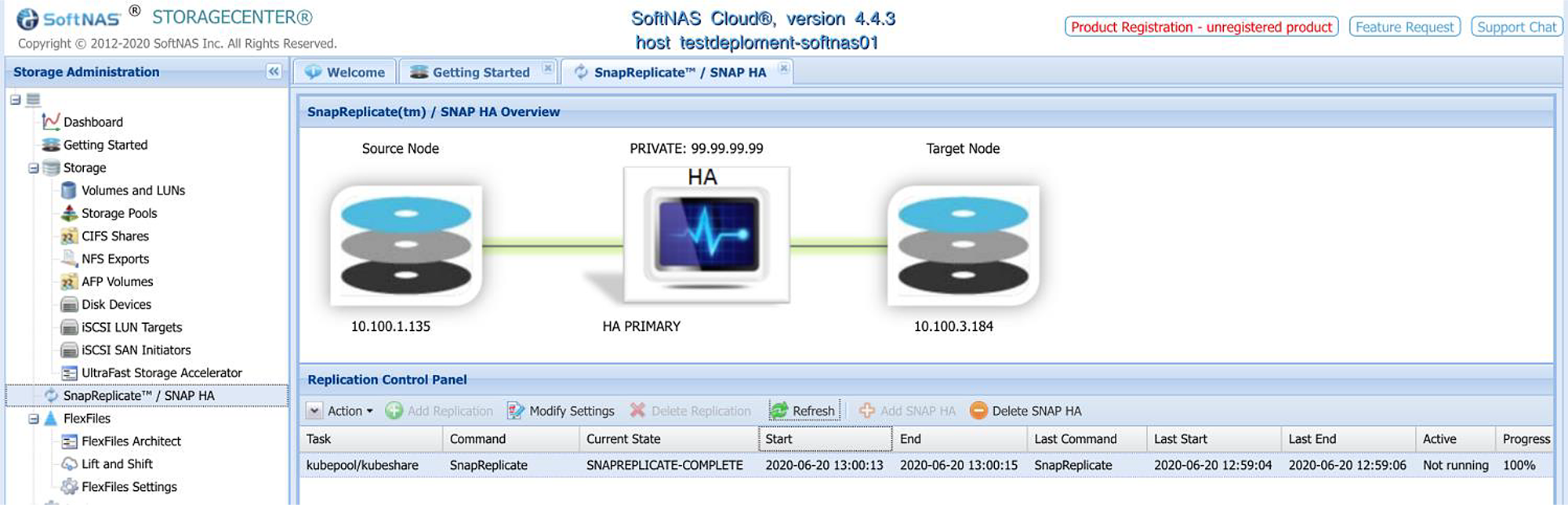

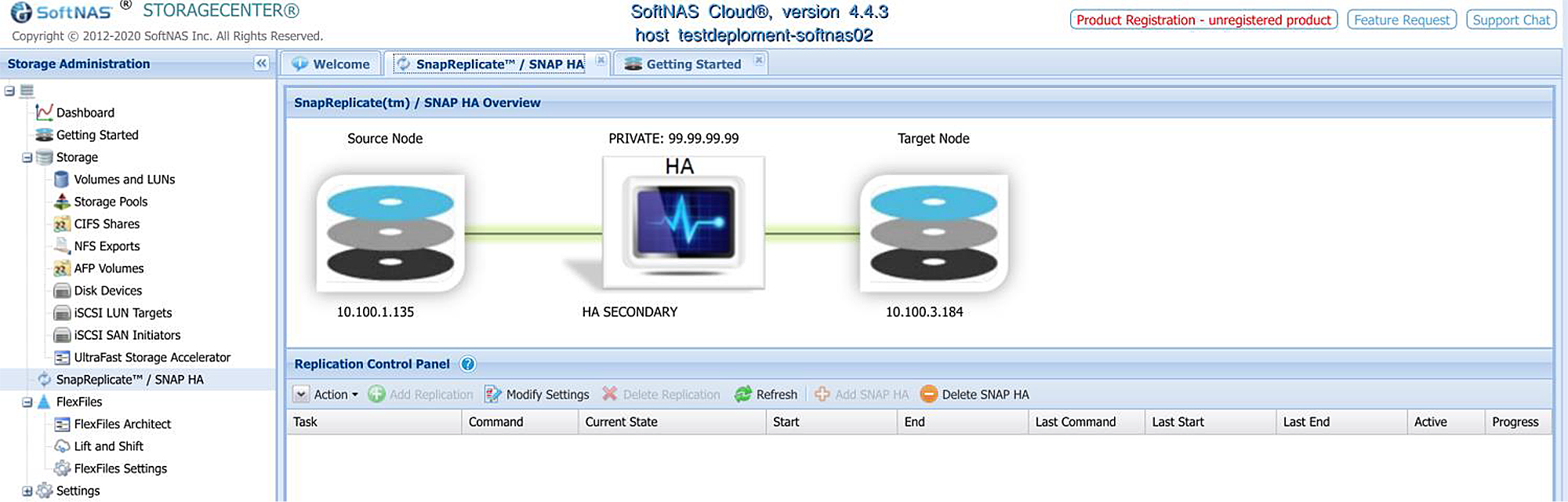

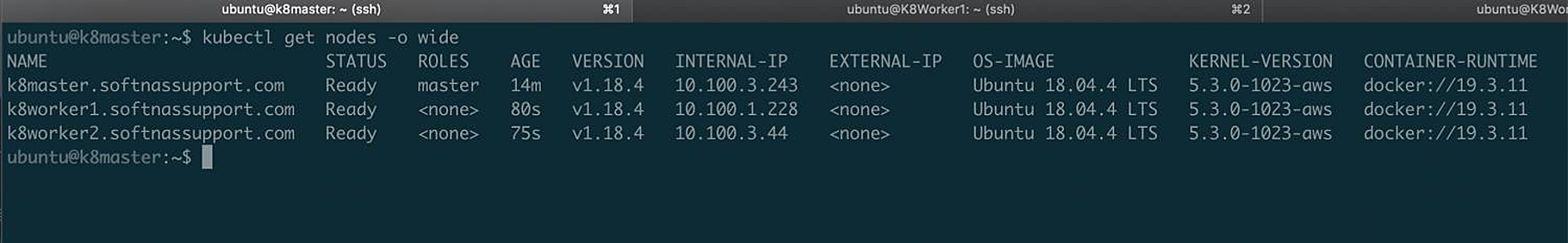

For this blog post, I’m going to use AWS. I’m using Ubuntu 18.04 for my Kubernetes nodes. I have deployed two SoftNAS nodes from AWS Marketplace. One node is deployed in US-WEST-2A, and the other is deployed in US-WEST-2B. I went through the easy HA setup wizard and chose a random VIP of 99.99.99.99. The VIP can be whatever IP address you like since it is only routed inside your private VPC. My KubeMaster is deployed in US-WEST-2B, and I have two KubeWorkers, one deployed in zone A and the other in zone B.

The below screenshot shows what HA looks like when you enable it. My primary node is on the left, my secondary node is on the right, and the VIP that my Kubernetes nodes are using for this NFS share is 99.99.99.99. The status messages below the nodes show that replication from primary to secondary is working as expected.

Primary Node

Secondary Node

The Kubernetes Pods

How To Mount The HA NFS Share from Kubernetes

Alright, enough background information. Let’s mount the NFS share behind the SoftNAS VIP from our Kubernetes pods. Since the SoftNAS exports this pool over NFS, you will mount it just like you would mount any other NFS share. In the below example, we will launch a pod from the Alpine Linux Docker image and have it mount the NFS share at the 99.99.99.99 VIP address. I’ll name the pod ‘nfs-app’.

The pod manifest will live in a file named nfs-app.yaml. Notice that the volumes section of the manifest uses the HA VIP of the SoftNAS (99.99.99.99) and the path of our NFS share, ‘/kubepool/kubeshare’.

Copy and paste the below configuration into a file called ‘nfs-app.yaml’ to use this example:

    kind: Pod
    apiVersion: v1
    metadata:
      name: nfs-app
    spec:
      containers:
        - name: app
          image: alpine
          volumeMounts:
            - name: nfs-volume
              mountPath: /mnt/nfs
          command: ["/bin/sh"]
          args: ["-c", "sleep 500000"]
      volumes:
        - name: nfs-volume
          nfs:
            server: 99.99.99.99
            path: /kubepool/kubeshare

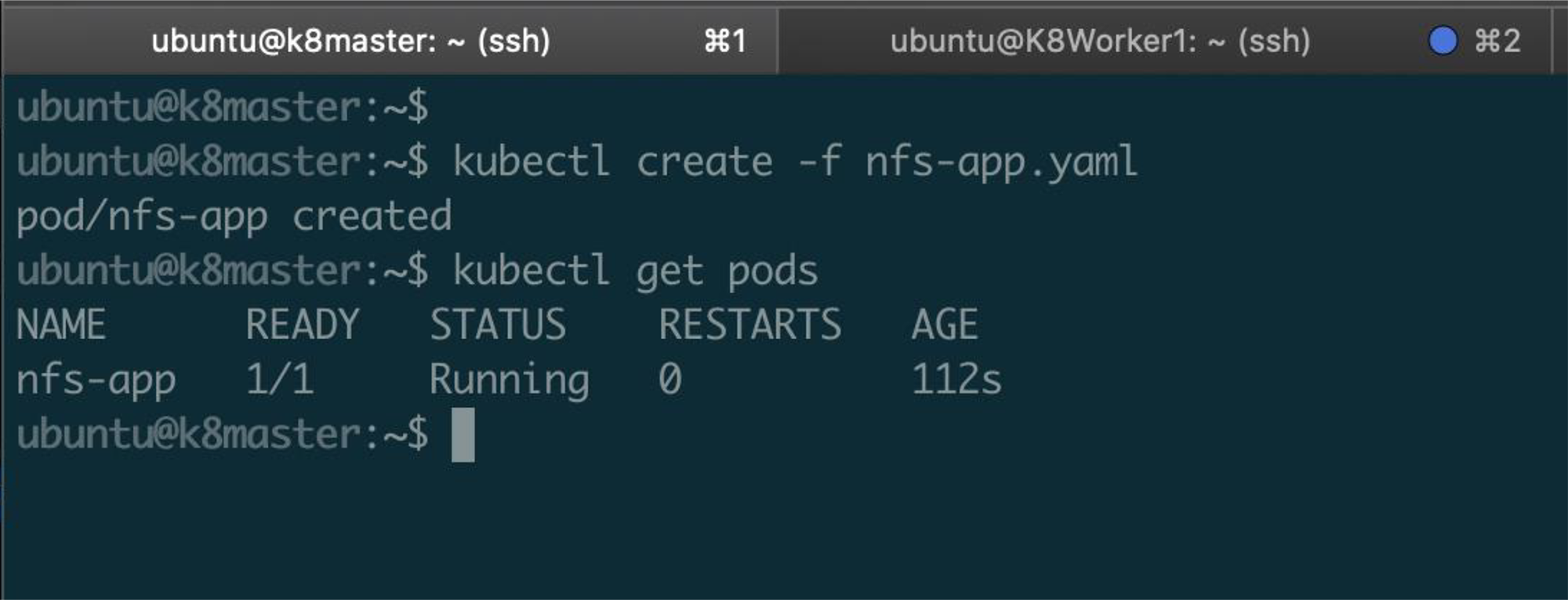
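The manifest above creates a single pod with the NFS volume defined inline. If several pods need to share the same mount, one common alternative is to describe the share once as a PersistentVolume, claim it, and reference the claim from a Deployment. The following is only a sketch under assumptions: the names `softnas-nfs-pv`, `softnas-nfs-pvc`, and `nfs-app-shared`, the `10Gi` size, and the mount options are illustrative choices of mine, not SoftNAS recommendations. Only the VIP and the export path come from this post.

```yaml
# Sketch only: every name, the size, and the mount options are assumptions.
apiVersion: v1
kind: PersistentVolume
metadata:
  name: softnas-nfs-pv            # hypothetical name
spec:
  capacity:
    storage: 10Gi                 # placeholder value, used to match the claim below
  accessModes:
    - ReadWriteMany               # pods on different nodes may mount read-write
  persistentVolumeReclaimPolicy: Retain
  storageClassName: ""            # empty string: bind statically, no dynamic provisioner
  mountOptions:
    - hard                        # assumption: retry I/O rather than error out while the VIP moves
    - nfsvers=4.1                 # assumption: adjust to the NFS version your export serves
  nfs:
    server: 99.99.99.99           # SoftNAS HA VIP from this post
    path: /kubepool/kubeshare     # NFS share path from this post
---
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: softnas-nfs-pvc           # hypothetical name
spec:
  accessModes:
    - ReadWriteMany
  storageClassName: ""
  volumeName: softnas-nfs-pv      # bind to the volume defined above
  resources:
    requests:
      storage: 10Gi
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: nfs-app-shared            # hypothetical name
spec:
  replicas: 2                     # two pods sharing the same NFS mount
  selector:
    matchLabels:
      app: nfs-app-shared
  template:
    metadata:
      labels:
        app: nfs-app-shared
    spec:
      containers:
        - name: app
          image: alpine
          command: ["/bin/sh"]
          args: ["-c", "sleep 500000"]
          volumeMounts:
            - name: nfs-volume
              mountPath: /mnt/nfs
      volumes:
        - name: nfs-volume
          persistentVolumeClaim:
            claimName: softnas-nfs-pvc
```

You would create these objects the same way as the single pod, by saving them to a file and passing it to 'kubectl create -f'. The rest of this post continues with the simpler single-pod manifest.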

So let’s launch this pod using Kubernetes.

From my KubeMaster, I run the below command:

'kubectl create -f nfs-app.yaml'

Now let’s make sure the pod launched:

'kubectl get pods'

See the below example of what the expected output should be:

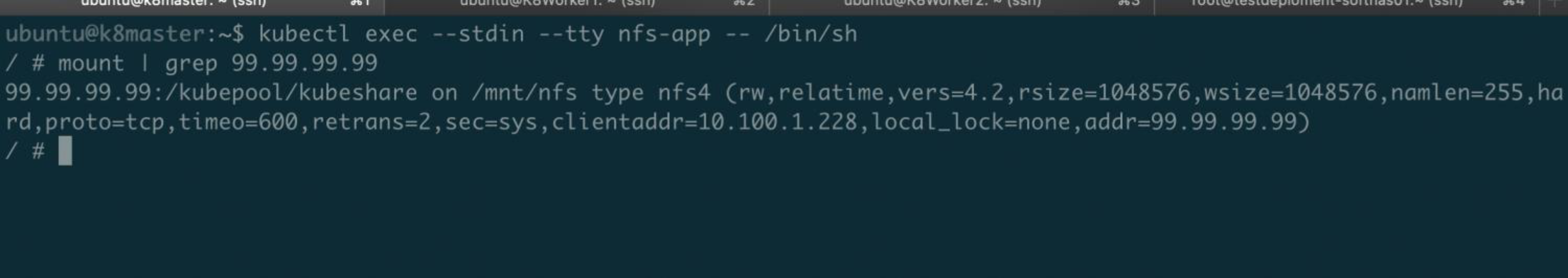

Let’s verify that our NFS share is mounted inside the Kubernetes pod.

To do that, we will connect to the pod and check the storage mounts.

We should see an NFS mount to SoftNAS at the VIP address 99.99.99.99.

Connect to the ‘nfs-app’ Kubernetes pod:

'kubectl exec --stdin --tty nfs-app -- /bin/sh'

Check for our mount to 99.99.99.99 like below:

'mount | grep 99.99.99.99'

The expected output should look like the below.

We see that the Kubernetes pod has mounted our SoftNAS NFS share at the VIP address using the NFS4 protocol:

Now you have configured highly available NFS mounts for your Kubernetes cluster. If you have any questions or need any more information regarding HA NFS for Kubernetes, please reach out to BUURST for sales or more technical information.