SoftNAS® Surpasses Industry Standards with 40 Concurrent Replications, Outperforming NetApp FSx and FSx for OpenZFS. HOUSTON, TEXAS – FOR IMMEDIATE...

Posts by Buurst Staff

SoftNAS® Sets New Benchmark in High-Availability Cloud Storage

SoftNAS® Sets New Benchmark in High-Availability Enterprise Cloud Storage with Real-World Customer Results. HOUSTON, TEXAS – FOR IMMEDIATE RELEASE...

Buurst’s SoftNAS Now Available on Oracle Cloud Marketplace

SoftNAS, a network-attached storage solution, is now integrated with Oracle Cloud Infrastructure. HOUSTON, TEXAS – October 8, 2024 - Buurst, a leading...

SoftNAS High Availability and Disaster Recovery-Oriented Solutions Safeguard A Business

Buurst deploys to EMEA customers its Hybrid Cloud solution with High Availability and Disaster Recovery, which can minimize adverse impacts from IT...

SoftNAS™ Expands to Oracle Cloud, Achieves VMware Partner Ready Certification, Releases SoftNAS 5.5

Buurst continues to broaden the reach of its Enterprise-grade, High-Performance SoftNAS virtual Storage product. HOUSTON, TEXAS, UNITED STATES,...

SoftNAS® Migration & Upgrade Advisory

June 28, 2022 - This Migration & Upgrade advisory is issued to inform customers on any version of SoftNAS® 3, 4, and up to 5.1. A migration or...

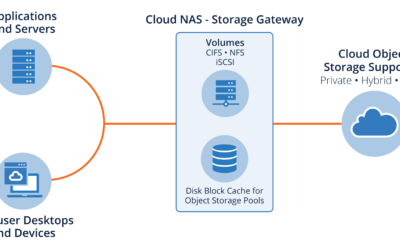

What is Cloud NAS?

Cloud NAS (Network Attached Storage) is a popular storage choice for people looking to use cloud storage for applications, user file systems,...

SoftNAS 5 Webinar Recap on Upgrade Process

To further promote the new SoftNAS 5 product release, Buurst’s engineering and support teams held a webinar to highlight new features and the...

SoftNAS 5: New Linux Version, New Kernel, Better Performance

We are excited to announce that our newest version of SoftNAS, SoftNAS 5, is now publicly available. In our latest update, we have made the...

SoftNAS Dual Zone High Availability

SoftNAS SNAP HA™ High Availability delivers a 99.999% uptime guarantee in a low-cost, low-complexity solution that is easy to deploy and...