CloudBerry Backup – Affordable & Recommended Cloud Backup Service on Azure & AWS

Let me tell you about a CIO I knew from my days as a consultant. He was even-keeled most of the time and could handle just about anything that was thrown at him. I saw him lose control only once – when someone told him the data backups had failed the previous night. He got so flustered, it was as if his career were flashing before his eyes, the ship was sinking, and there were no lifeboats left.

If You Don’t Back Up Data, What Are the Risks?

Backing up one’s data is a problem as old as computing itself. We’ve all experienced data loss at some point, along with the pain, time, and cost of recovering from its impact on our personal or business lives. A backup is insurance against these problems, the kind you hope you never have to use. But as Murphy’s Law goes, if anything can go wrong, it will.

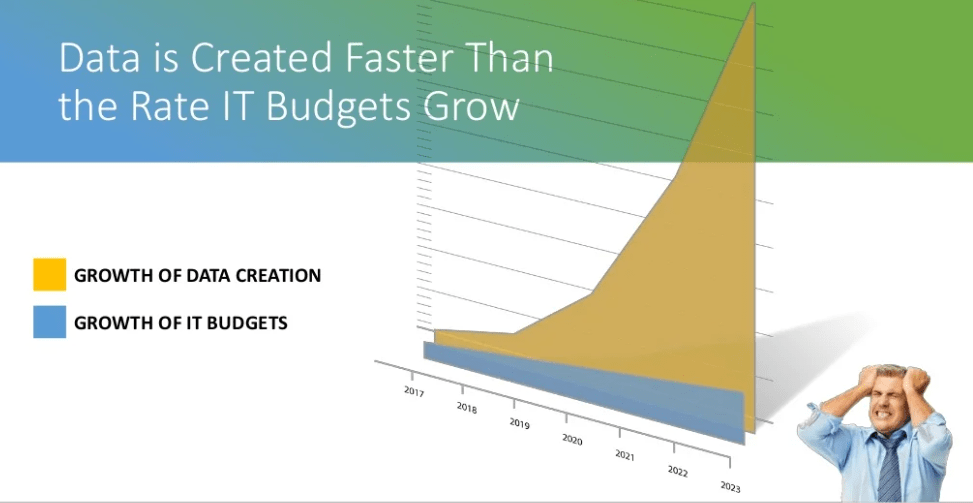

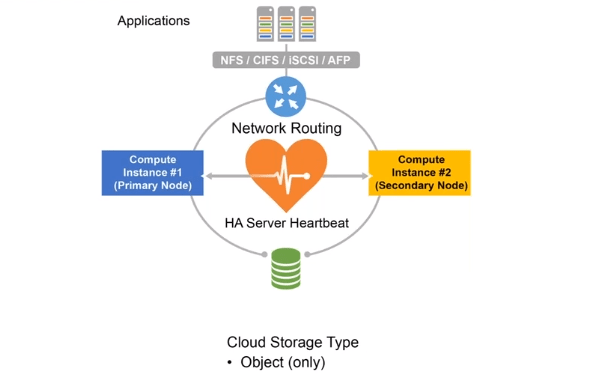

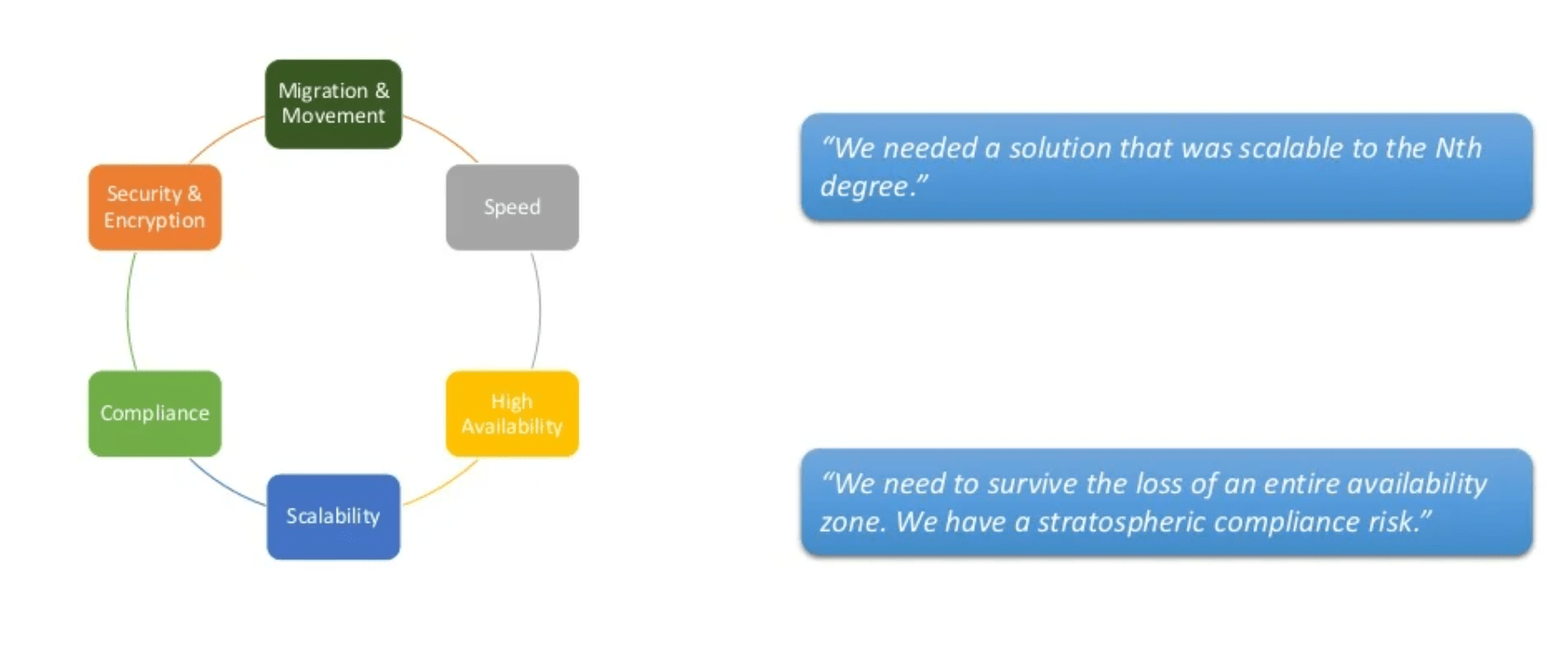

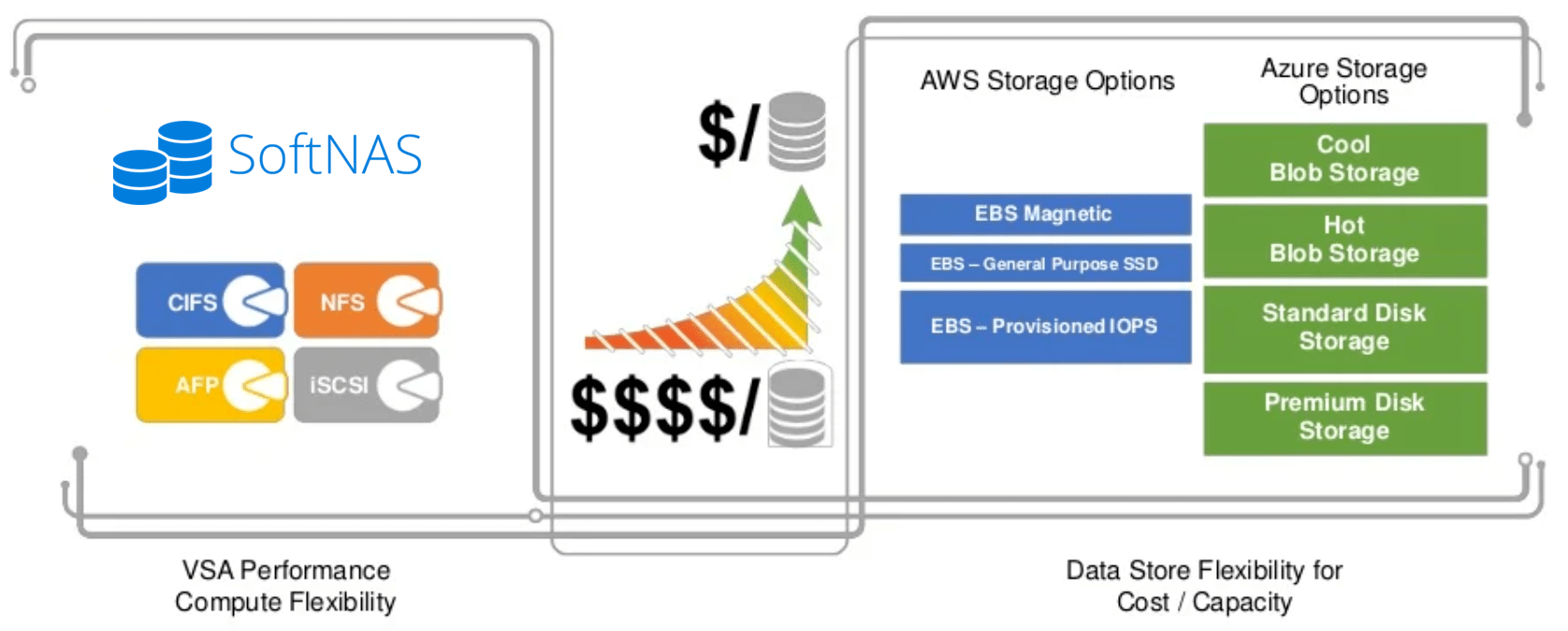

Data storage systems include various forms of redundancy and the cloud is no exception. Though there are multiple levels of data protection within cloud block and object storage subsystems, no amount of protection can cure all potential ills. Sometimes, the only cure is to recover from a backup copy that has not been corrupted, deleted, or otherwise tampered with.

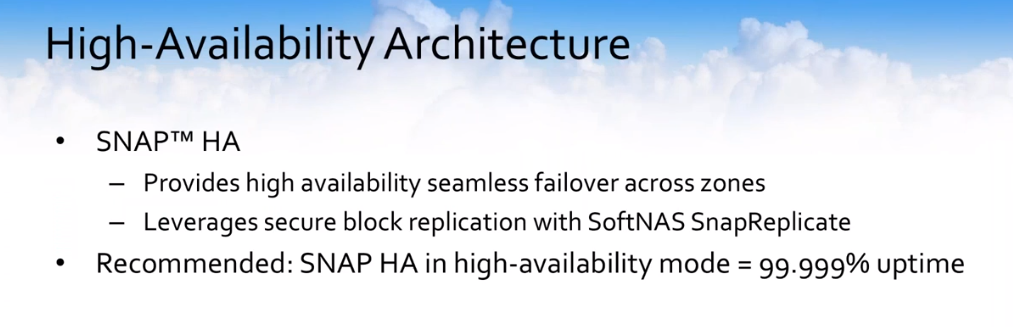

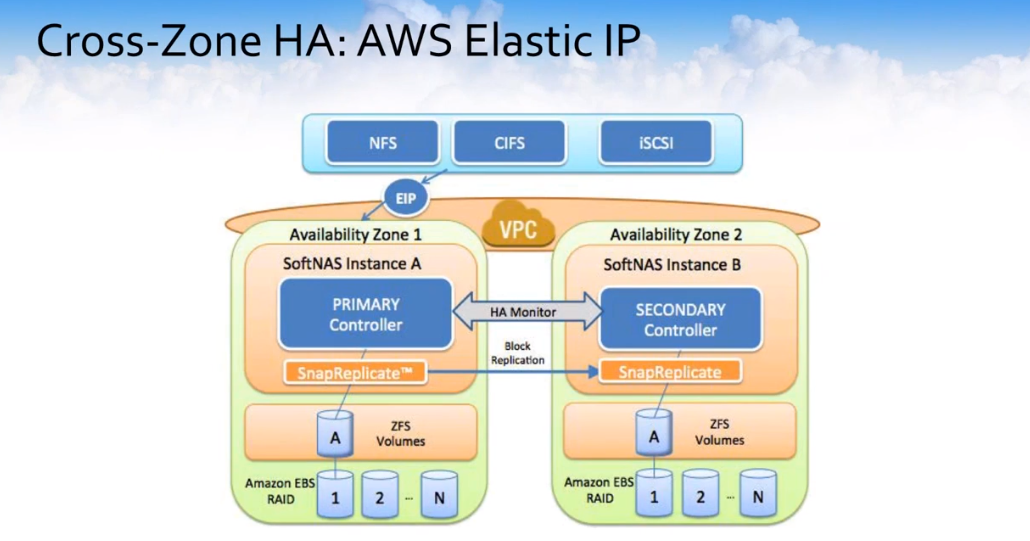

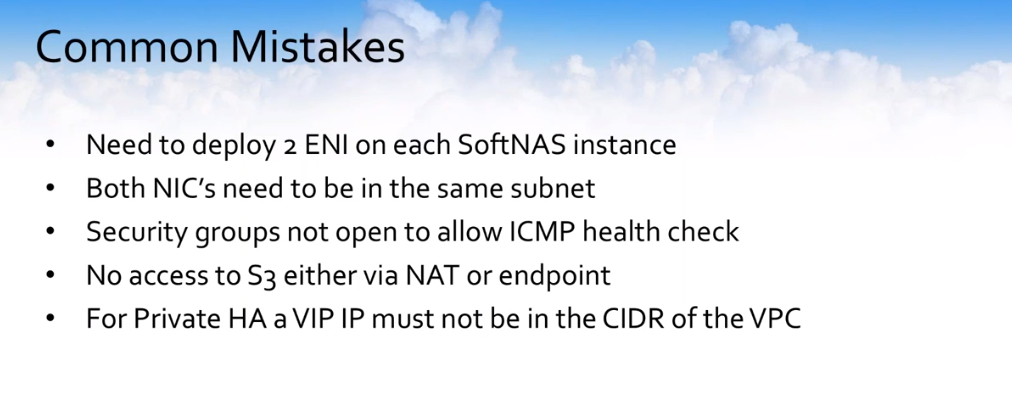

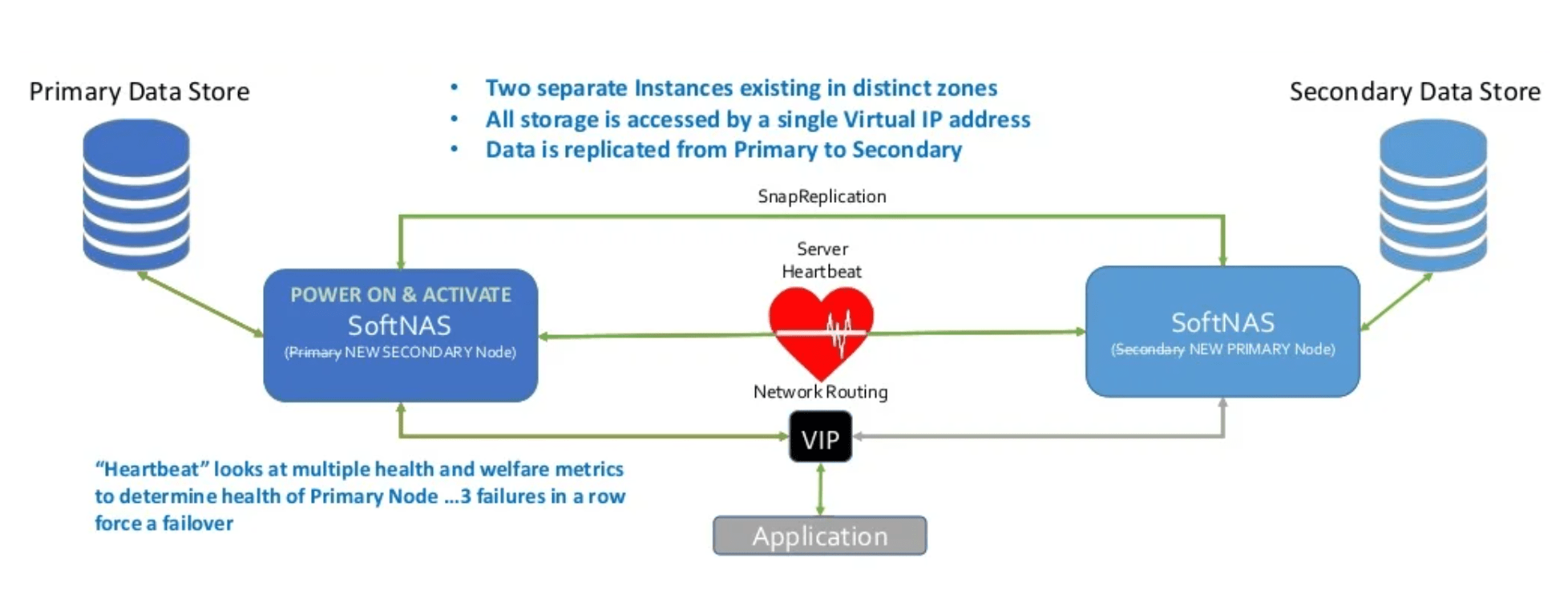

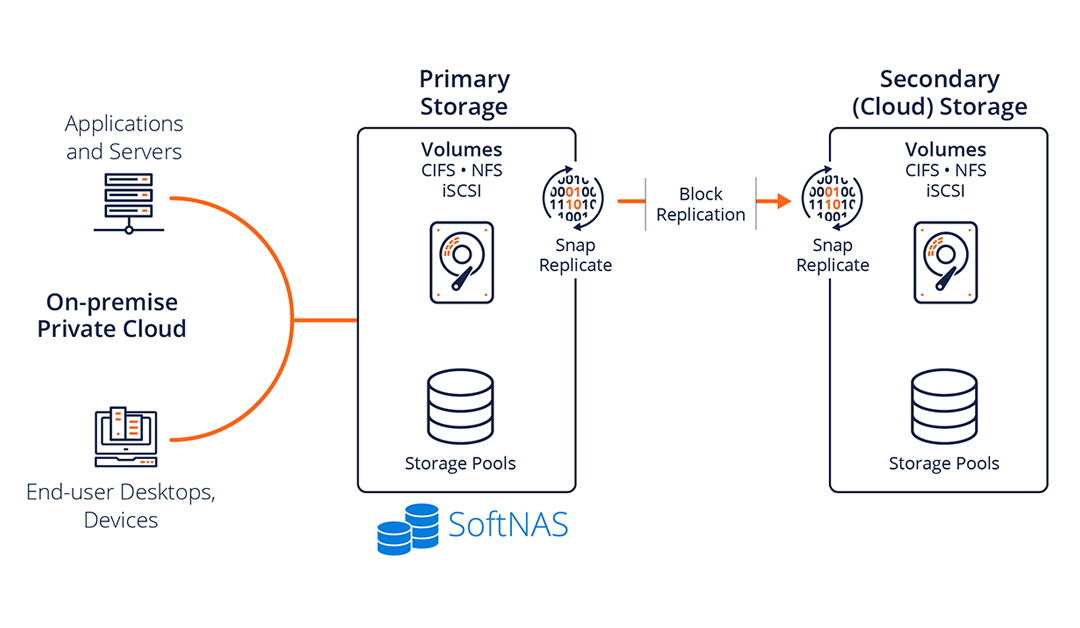

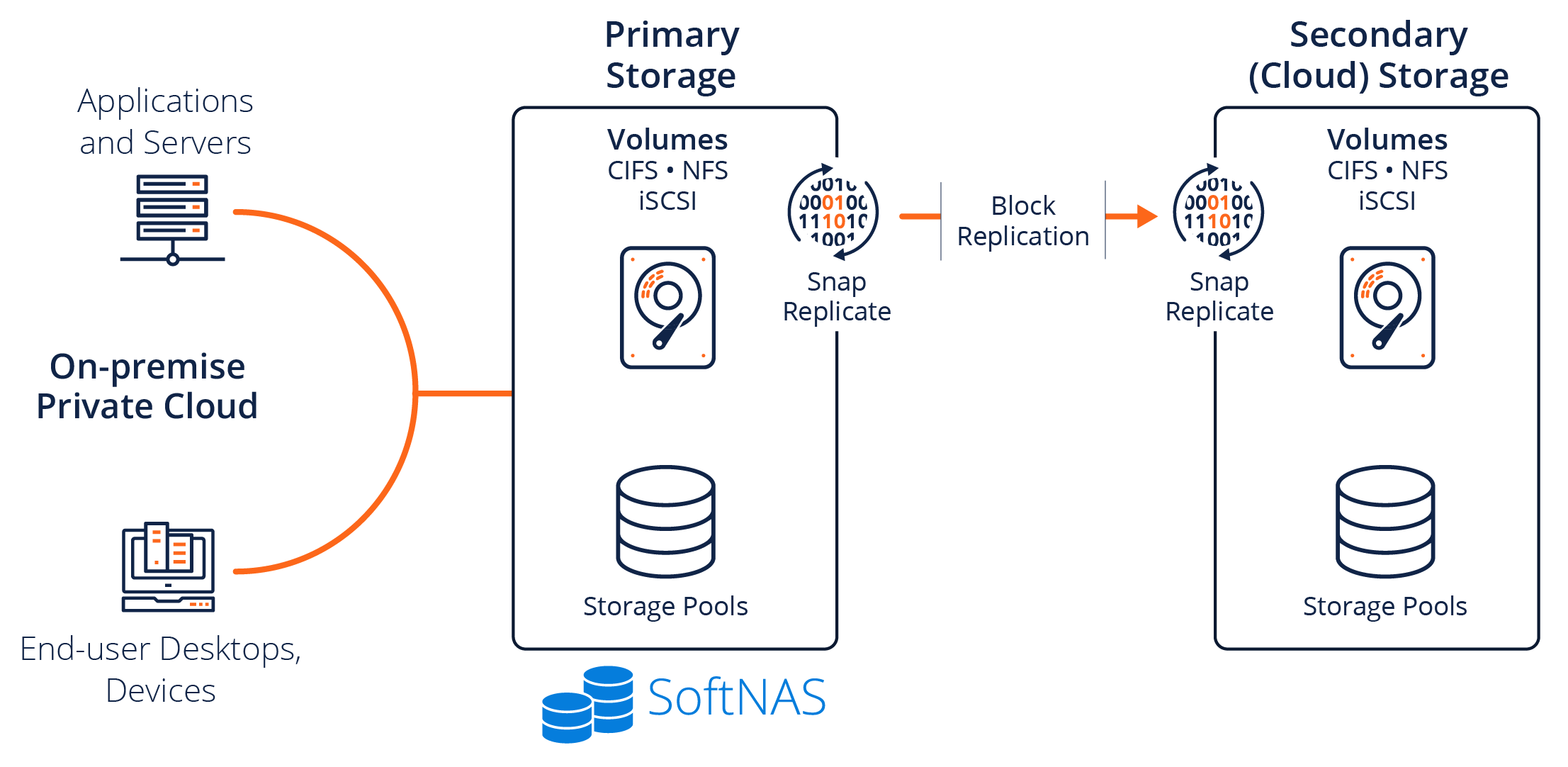

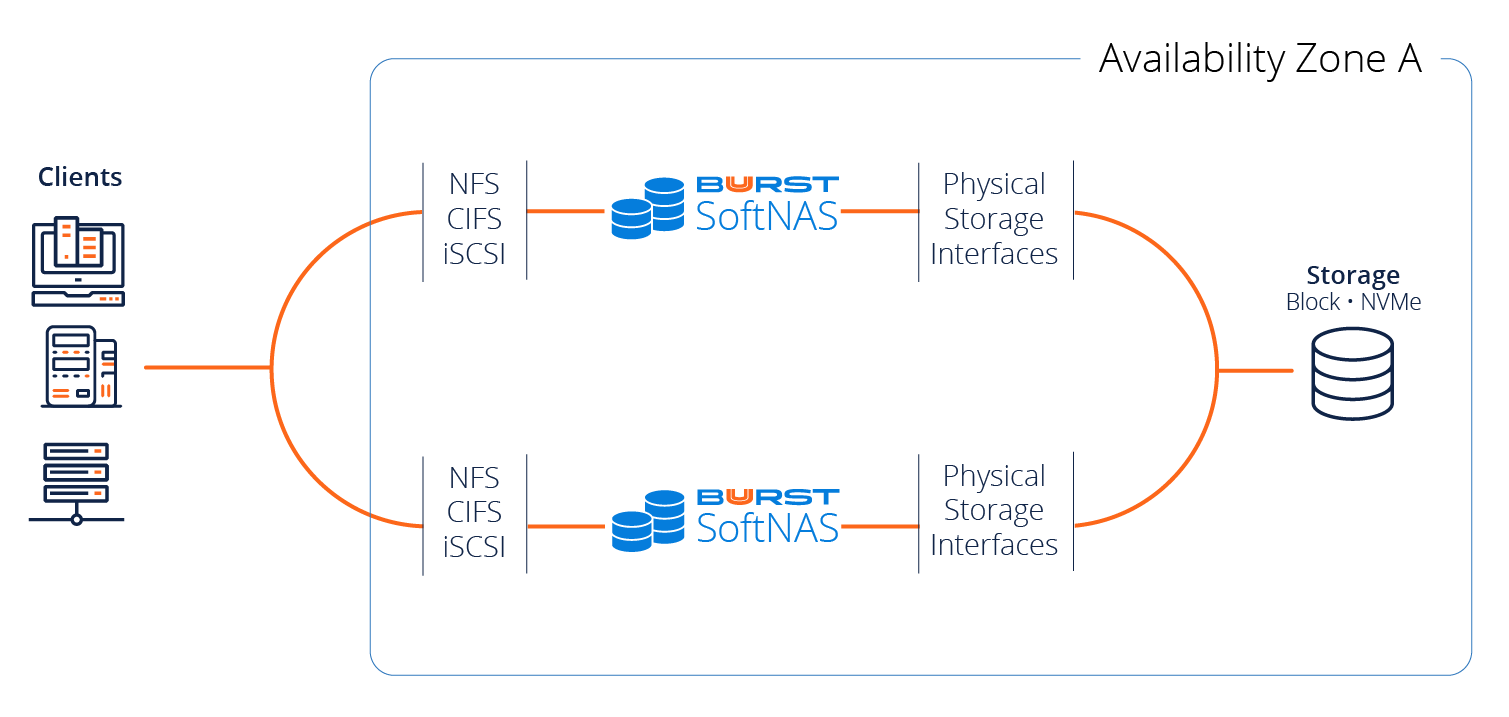

SoftNAS provides additional layers of data protection atop cloud block and object storage, including storage snapshots, checksum data integrity verification on each data block, block replication to other nodes for high availability, and file replication to a disaster recovery node. But even storage snapshots rely upon the underlying cloud block and object storage, which can and does fail occasionally.

These cloud-native storage systems tout anywhere from 99.9% up to 11 nines of data durability. What does this really mean? It means there’s a non-zero probability that your data could be lost – durability is never 100%. So, when things do go wrong, you’d do well to have at least one viable backup copy. Otherwise, in addition to recovering from the data loss event, you risk losing your job too.
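To put those durability figures in perspective, here is a rough back-of-the-envelope sketch. It assumes the quoted durability is an annual, per-object figure and that objects are lost independently of one another; both are simplifying assumptions of mine, not something a cloud provider guarantees. The function names are made up for illustration.

```python
import math


def loss_probability(nines: int) -> float:
    """Annual per-object loss probability for a durability of `nines` nines.

    For example, 3 nines (99.9%) gives 1e-3 and 11 nines gives 1e-11.
    """
    return 10.0 ** -nines


def expected_annual_losses(object_count: int, nines: int) -> float:
    """Expected number of objects lost per year (assumes independent losses)."""
    return object_count * loss_probability(nines)


def probability_of_any_loss(object_count: int, nines: int) -> float:
    """Probability of losing at least one object in a year.

    Computes 1 - (1 - p)^n with log1p/expm1 so that tiny values of p
    are not rounded away.
    """
    p = loss_probability(nines)
    return -math.expm1(object_count * math.log1p(-p))


if __name__ == "__main__":
    for nines in (3, 11):
        losses = expected_annual_losses(10_000_000, nines)
        any_loss = probability_of_any_loss(10_000_000, nines)
        print(f"{nines} nines: expected losses/year={losses:g}, P(any loss)={any_loss:g}")
```

Even at 11 nines the result is small but never zero, and the numbers say nothing about accidental deletion, corruption, or ransomware, which durability does not cover.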

Why Companies Must Have a Data Backup

Let me illustrate this through an in-house experience.

In 2013, when SoftNAS was a fledgling startup, we had to make every dollar count and it was hard to justify paying for backup software or the storage it requires.

Back then, we ran QuickBooks for accounting. We also had a build server running Jenkins (still do), domain controllers, and many other development and test VMs running atop VMware in our R&D lab. However, it was going to cost about $10,000 to license Veeam’s backup software and it just wasn’t a high enough priority to allocate the funds, so we skimped on our backups. Then, over one weekend, we upgraded our VSAN cluster.

Unfortunately, something went awry and we lost the entire VSAN cluster along with all our VMs and data. In addition, our makeshift backup strategy had not been working as expected and we hadn’t been paying close attention to it, so, in effect, we had no backup.

I describe the way we felt at the time as the “downtime tunnel”. It’s when your vision narrows and all you can see is the hole that you’re trying to dig yourself out of, and you’re overcome by the dread of having to give hourly updates to your boss, and their boss. It’s not a position you want to be in.

This is how we scrambled out of that hole. Fortunately, our accountant had a copy of the QuickBooks file, albeit one that was about 5 months old. And thankfully we still had an old-fashioned hardware-based Windows domain controller, so we didn’t lose our Windows domain. We had to recreate our entire lab environment, rebuild the QuickBooks file by re-entering the past 5 months of transactions, and recreate our Jenkins build server. After many weeks of painstaking recovery, we managed to put Humpty Dumpty back together again.

Lessons from Our Data Loss

We learned the hard way that proper data backups are much less expensive than the alternatives. The week after the data loss occurred, I placed the order for Veeam Backup & Replication. Our R&D lab has been fully backed up since that day. Our Jenkins build server configuration is now also versioned and safely tucked away in a Git repository so it’s quickly recoverable.

Of course, since then we have also outsourced accounting and no longer require QuickBooks. But with a significantly larger R&D operation now, we simply cannot afford to be caught without backups again. The backup software is the best $10K we’ve ever invested in our R&D lab. The cost of this protection is small next to the cost of data loss.

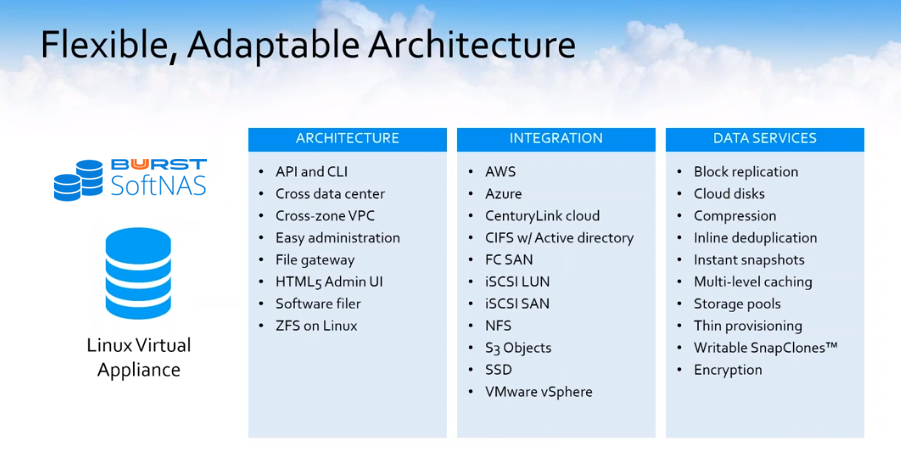

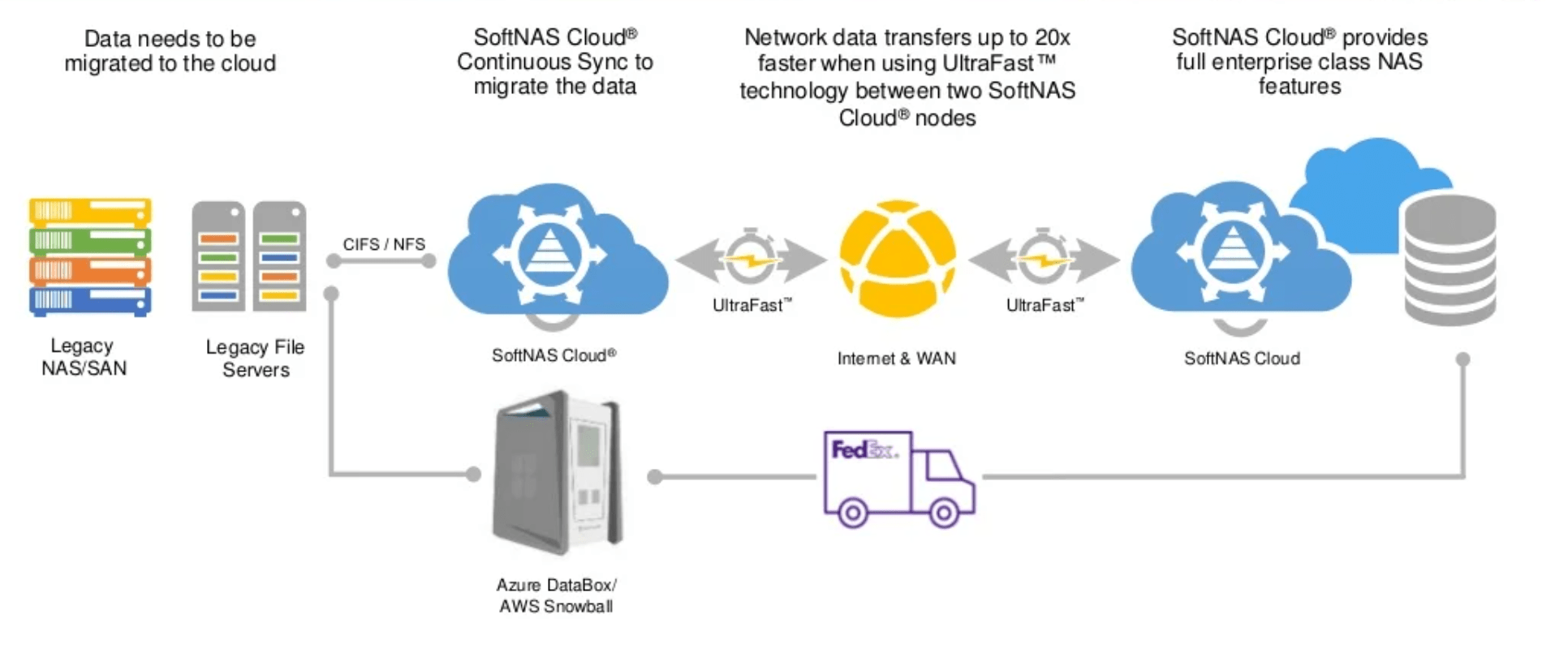

Cloud Backup as a Service

Fortunately, there are some great options available today to back up your data to the cloud, too. And they cost less to acquire and operate than you may realize. For example, SoftNAS has tested and certified the CloudBerry Backup product for use with SoftNAS. CloudBerry Backup (CBB) is a cloud backup solution available for both Linux and Windows. We tested CloudBerry Backup for Linux, Ultimate Edition, which installs and runs directly on SoftNAS. It can run on any SoftNAS Linux-based virtual appliance, atop AWS, Azure, and VMware. We also have customers who prefer to run CBB on Windows and perform the backups over a CIFS share. Did I forget to mention this cloud backup solution is affordable at just $150, and not $10K?

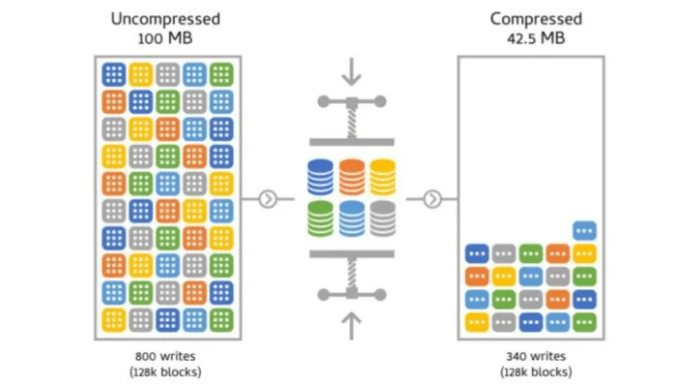

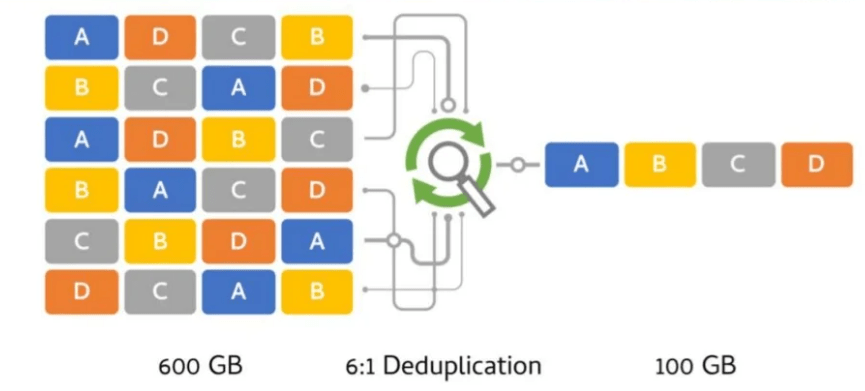

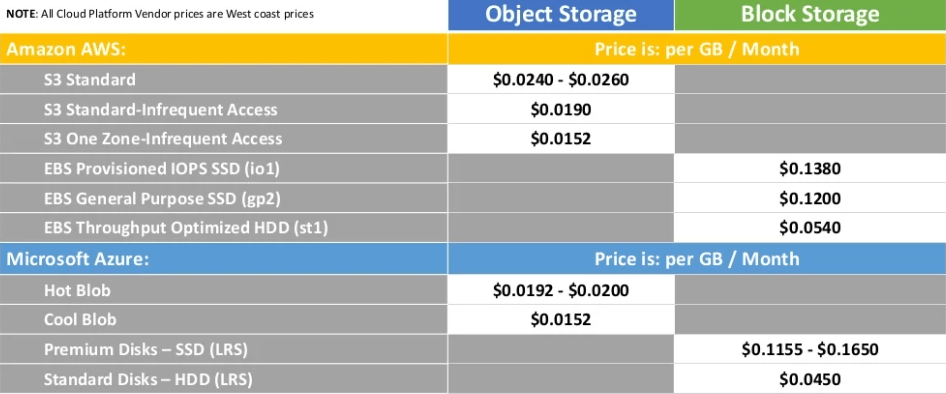

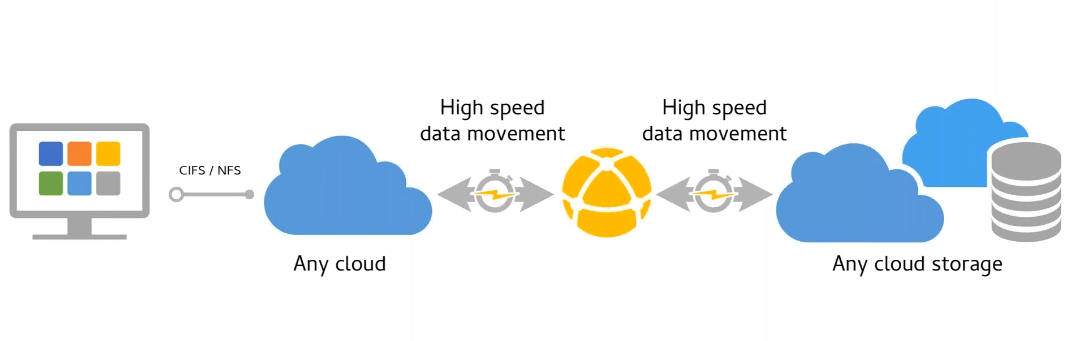

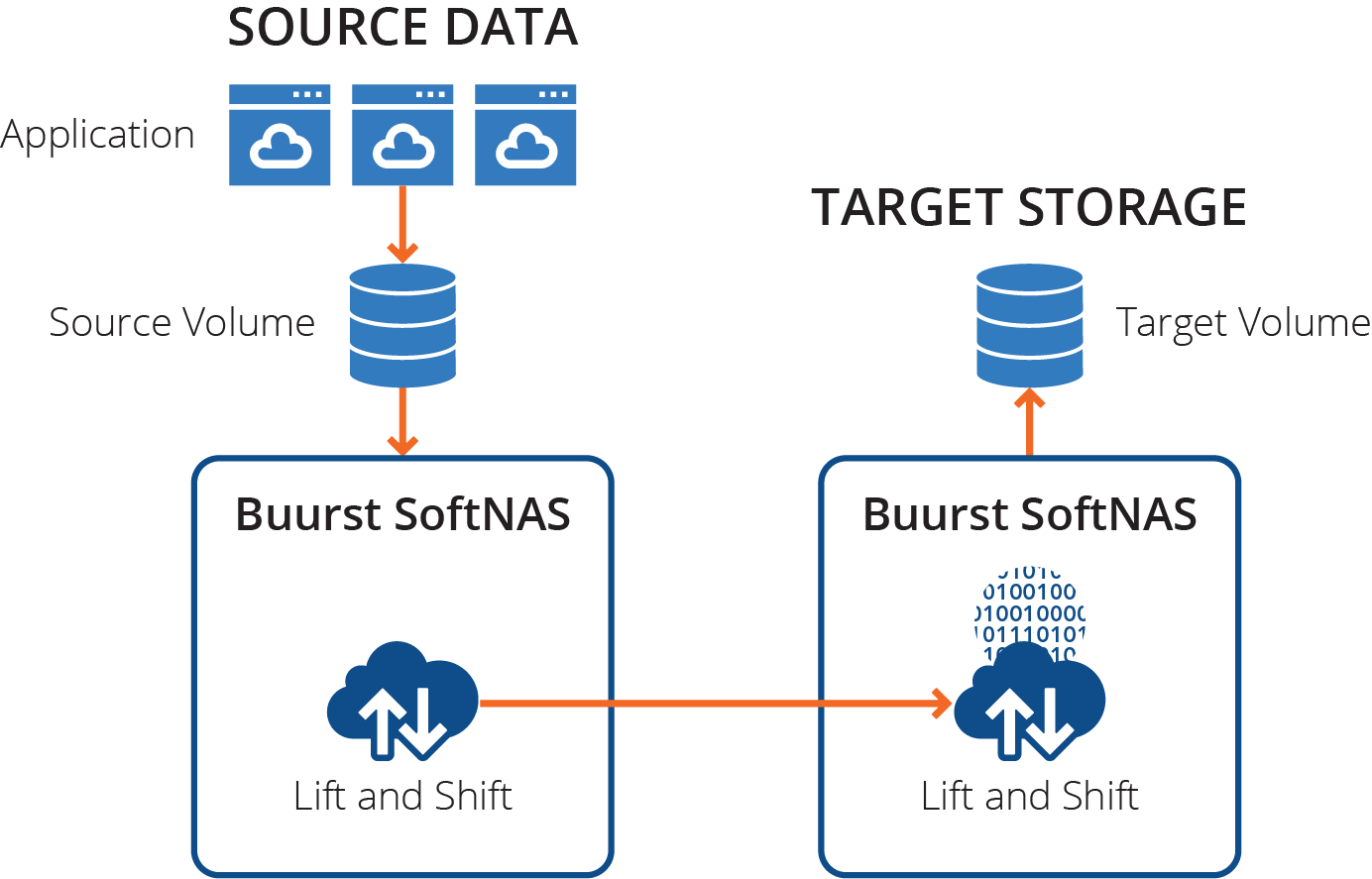

CBB performs full and incremental file backups from the SoftNAS ZFS filesystems and stores the data in low-cost, highly durable object storage – S3 on AWS, and Azure blobs on Azure.
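For readers new to the distinction, a full backup copies everything, while an incremental backup copies only what changed since the previous run. The sketch below illustrates the general idea by selecting files whose modification time is newer than the last backup. It is a generic illustration, not how CBB detects changes internally, and the function name and parameters are hypothetical.

```python
import os
from pathlib import Path


def files_changed_since(root: Path, last_backup_time: float) -> list[Path]:
    """Return regular files under `root` modified after `last_backup_time`.

    `last_backup_time` is a POSIX timestamp (seconds since the epoch).
    Passing 0 selects every file, which corresponds to a full backup.
    """
    changed = []
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            path = Path(dirpath) / name
            # is_file() is False for broken symlinks, so stat() is safe here.
            if path.is_file() and path.stat().st_mtime > last_backup_time:
                changed.append(path)
    return sorted(changed)
```

Real backup tools typically track more than timestamps (sizes, checksums, deletions), so treat this only as a mental model of why incremental runs are faster and cheaper than full ones.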

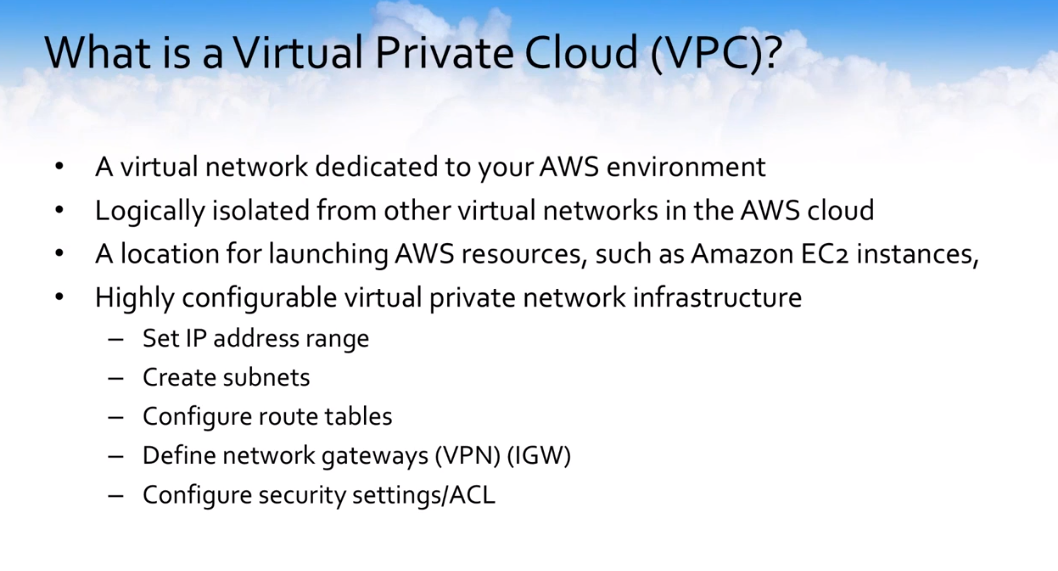

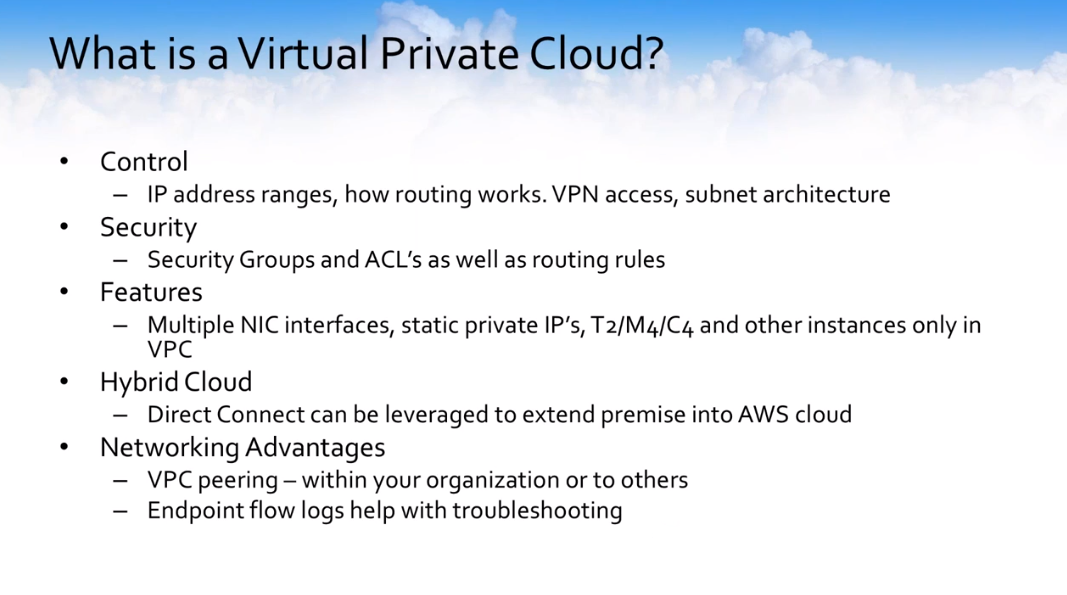

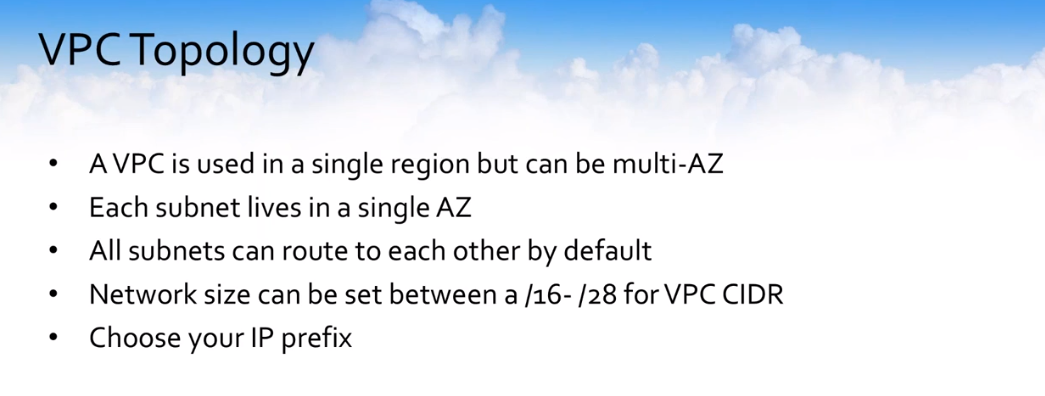

CBB supports a broad range of backup repositories, so you can choose to back up to one or more targets, within the same cloud or across different clouds as needed for additional redundancy. It is even possible to back up SoftNAS pool data deployed in Azure to AWS, and vice versa. Note that we generally recommend creating a VPC-to-S3 or VNET-to-Blob service endpoint in your respective public cloud architecture to optimize network storage traffic and shorten backup times.

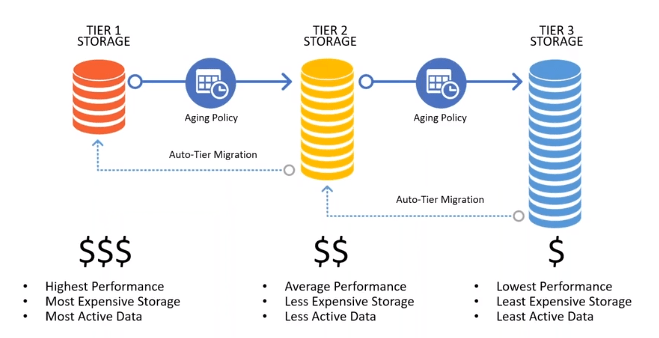

To reduce the costs of backup storage even further, you can define lifecycle policies within the CloudBerry UI that move the backups from object storage into archive storage. For example, on AWS, the initial backup is stored on S3, then a lifecycle policy (managed right in CBB) kicks in and moves the data out of S3 and into Glacier archive storage. This reduces the backup data costs to around $4/TB per month (or less in volume). You can optionally add a Glacier Deep Archive policy and reduce storage costs even further, down to about $1/TB per month. There is also an option to use AWS S3 Infrequent Access storage.
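As a quick illustration of what those rates mean for a budget, here is a small sketch that uses only the two figures quoted above (about $4/TB per month for Glacier and about $1/TB per month for Deep Archive). The function names are hypothetical, and the calculation deliberately ignores retrieval fees, request charges, and minimum storage durations, so check current AWS pricing before relying on it.

```python
# Approximate rates quoted in this article, in USD per TB per month.
ARTICLE_RATES_USD_PER_TB_MONTH = {
    "glacier": 4.0,
    "deep_archive": 1.0,
}


def monthly_cost(size_tb: float, tier: str, rates: dict = ARTICLE_RATES_USD_PER_TB_MONTH) -> float:
    """Storage-only monthly cost for `size_tb` terabytes in the given tier."""
    return size_tb * rates[tier]


def annual_cost(size_tb: float, tier: str, rates: dict = ARTICLE_RATES_USD_PER_TB_MONTH) -> float:
    """Storage-only annual cost, assuming the stored size stays constant."""
    return 12 * monthly_cost(size_tb, tier, rates)


if __name__ == "__main__":
    for tier in ARTICLE_RATES_USD_PER_TB_MONTH:
        print(f"50 TB in {tier}: ${monthly_cost(50, tier):.2f}/month, "
              f"${annual_cost(50, tier):.2f}/year")
```

Pass your own `rates` dictionary to compare other tiers or volume discounts.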

There are similar capabilities available on Microsoft Azure that can be used to drive your data backup costs down to affordable levels. Bear in mind that the current version of CloudBerry Backup for Linux has no native Azure Blob lifecycle management integration, so those functions need to be performed via the Azure Portal.
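For the Azure side, a lifecycle rule is expressed as a JSON policy on the storage account. The sketch below builds one such policy in the shape used by Azure Blob Storage lifecycle management, as I understand it. The rule name, the `backups/cbb` prefix, and the 30-day and 90-day thresholds are placeholders I made up for illustration; verify the schema against the current Azure documentation before applying anything.

```python
import json


def build_lifecycle_policy(prefix: str, cool_after_days: int, archive_after_days: int) -> dict:
    """Build a lifecycle policy that tiers block blobs under `prefix`.

    Blobs move to the cool tier, then to the archive tier, based on
    the number of days since they were last modified.
    """
    return {
        "rules": [
            {
                "enabled": True,
                "name": "tier-backups",  # hypothetical rule name
                "type": "Lifecycle",
                "definition": {
                    "filters": {
                        "blobTypes": ["blockBlob"],
                        "prefixMatch": [prefix],
                    },
                    "actions": {
                        "baseBlob": {
                            "tierToCool": {
                                "daysAfterModificationGreaterThan": cool_after_days
                            },
                            "tierToArchive": {
                                "daysAfterModificationGreaterThan": archive_after_days
                            },
                        }
                    },
                },
            }
        ]
    }


# Placeholder container/prefix and thresholds; adjust to your environment.
policy = build_lifecycle_policy("backups/cbb", cool_after_days=30, archive_after_days=90)
print(json.dumps(policy, indent=2))
```

The printed JSON is meant as a starting point to review and adapt, not a policy to apply as-is. Note that restoring from the archive tier takes time, which matters if you expect fast recovery from those copies.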

Personally, I prefer to keep the latest version in S3 or Azure hot blob storage for immediate access and faster recovery, along with several archived copies for posterity. In some industries, you may have regulatory or contractual obligations to keep archive data much longer than a typical archival policy would.

Today, we also use CBB to back up our R&D lab’s Veeam backup repositories into the cloud as an additional DR measure. We use CBB for this because there are no excessive I/O costs when backing up into the cloud (Veeam performs a lot of synthetic merge and other I/O, which drives up I/O costs based on our testing).

In my book, there’s no excuse for not having file-level backups of every piece of important business data, given the costs and business impacts of the alternatives: downtime, lost time, overtime, stressful calls with the bosses, lost productivity, lost revenue, lost customers, brand and reputation impacts, and sometimes, lost jobs, lost promotion opportunities – it’s just too painful to consider what having no backup can devolve into.

To summarize, there are 5 levels of data protection available to secure your SoftNAS deployment:

1. ZFS scheduled snapshots – “point-in-time” metadata recovery points on a per-volume basis

2. EBS / VM snapshots – snapshots of the block disks used in your SoftNAS pool

3. HA replicas – block-replicated mirror copies updated once per minute

4. DR replica – file replica kept in a different region, just in case something catastrophic happens in your primary cloud datacenter

5. File system backups – CloudBerry or equivalent file-level backups to Azure Blob or S3

So, whether you choose to use CloudBerry Backup, Veeam®, native cloud backup (e.g., Azure Backup), or other vendor backup solutions, do yourself a big favor. Use *something* to ensure your data is always fully backed up, at the file level, and always recoverable no matter what shenanigans Murphy comes up with next. Trust me, you’ll be glad you did!

Disclaimer:

SoftNAS is not affiliated in any way with CloudBerry Lab. As a CloudBerry customer, we trust our business data to CloudBerry. We also trust our VMware Lab and its data to Veeam. As a cloud NAS vendor, we have tested with and certified CloudBerry Backup as compatible with SoftNAS products. Your mileage may vary.

This post is authored by Rick Braddy, co-founder and CTO at Buurst SoftNAS. Rick has over 40 years of IT industry experience and contributed directly to the formation of the cloud NAS market.