How To Reduce Public Cloud Storage Costs. Download the full slide deck on Slideshare.

John Bedrick, Sr. Director of Product Marketing Management and Solution Marketing, discussed how SoftNAS Cloud NAS helps reduce public cloud storage costs. In this post, you will get a better understanding of data growth trends and of what to consider when moving to the public cloud.

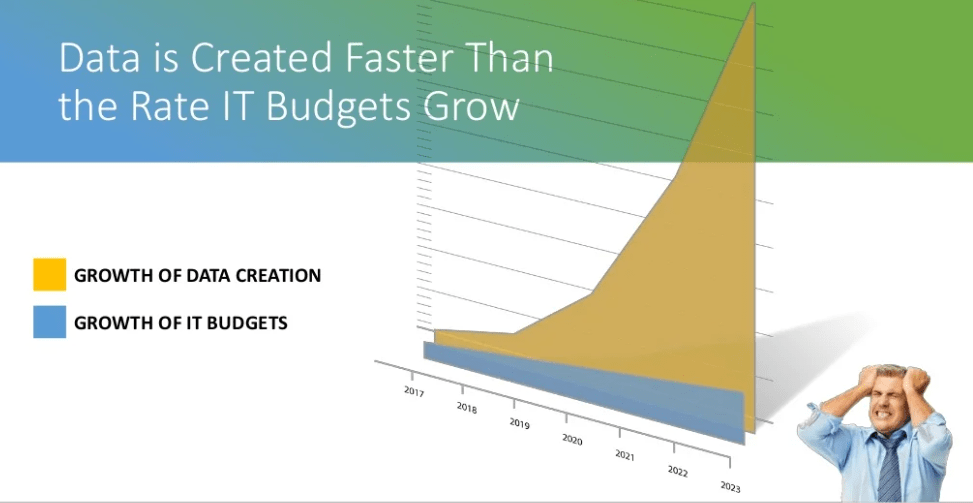

The amount of data created by businesses is staggering: it is doubling roughly every 18 months. That is unsustainable in the long term when you compare it with the rate at which IT budgets are growing.

IT budgets are growing on average by only about 2 to 3% annually. According to IDC, by 2020, which is not that far off, 80% of all corporate data growth is going to be unstructured (your emails, PDFs, Word documents, images, etc.), while only about 10% is going to come in the form of structured data like databases, for example. And that could be SQL databases, NoSQL, XML, JSON, etc. Meanwhile, we are heading toward 163 zettabytes of data by 2025 at a pretty rapid rate.

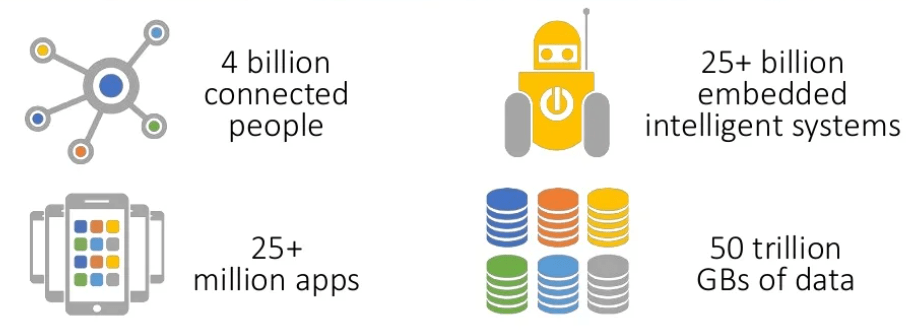

If you compound that with brand new sources of data that we haven’t really dealt with much in the past, such as the Internet of Things and big data analytics, it is going to be challenging for businesses to control and manage it. All of these create gaps between where the data is produced and where it is going to be consumed, analyzed, and backed up.

Really, if you look at things even from a consumer standpoint, almost everything we buy these days generates data that needs to be stored, controlled, and analyzed – from your smart home appliances, refrigerators, heating, and cooling, to the watch that you wear on your wrist, and other smart applications and devices.

If you look at 2020, the number of connected people will reach an all-time high of four billion, which is quite a bit. We are going to have over 25 million apps and over 25 billion embedded and intelligent systems, and we are going to reach 50 trillion gigabytes of data. Staggering.

In the meantime, data is no longer confined to traditional data centers, so there is a gap between where it is stored and where it is consumed, and the preferred place to store data is no longer going to be your traditional data center.

Businesses are really going to need a multi-cloud strategy for controlling and managing this growing amount of data.

According to IDC, 80% of IT organizations will be committed to hybrid architectures. Another study, the “Voice of Enterprise” by the 451 Research Group, found that 60% of companies will actually be operating in a multi-cloud environment by the end of this year.

Data is created faster than the IT budgets grow

Data is being created much faster than IT budgets are growing. You can see from the slide that there is a huge gap, which leads to frustration in the IT organization.
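To put rough numbers on that gap, here is a minimal sketch that compounds the two rates quoted above: data doubling every 18 months and budgets growing at about 3% a year. The function names are illustrative only, and the three- and six-year horizons are arbitrary choices, not figures from the talk.

```python
def growth_factor_doubling(years: float, doubling_months: float = 18.0) -> float:
    """Growth multiple for a quantity that doubles every `doubling_months`."""
    return 2.0 ** (years * 12.0 / doubling_months)


def growth_factor_annual(years: float, annual_rate: float) -> float:
    """Growth multiple for a quantity that compounds at `annual_rate` per year."""
    return (1.0 + annual_rate) ** years


if __name__ == "__main__":
    for years in (3, 6):
        data = growth_factor_doubling(years)
        budget = growth_factor_annual(years, 0.03)
        print(f"after {years} years: data x{data:.1f}, budget x{budget:.2f}")
```

Under these assumptions the data volume grows by a whole-number multiple while the budget grows by a fraction, which is the gap the slide illustrates.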

Let’s transition to how we address these huge data monsters that gobble up data as fast as it can be produced and create a huge need for storage.

What do we look for in a public cloud solution to address this problem?

Well, some of the answers have been around for a little while.

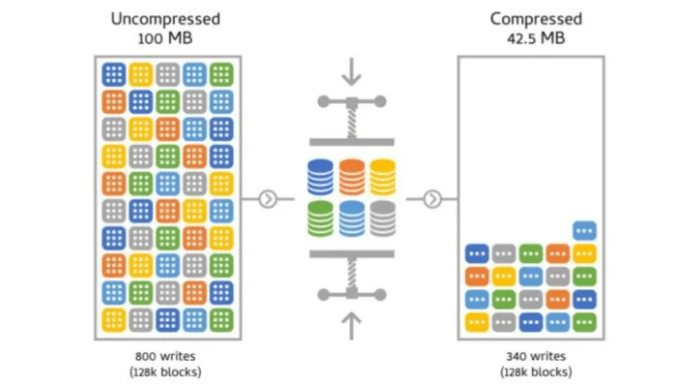

Data storage compression.

Now, for those of you who haven’t been around the industry for very long, data storage compression basically squeezes out the redundancy, the “whitespace,” between and within data so that it can be stored more efficiently.

If you compress the data that you’re storing, you get a net saving in storage space, and that immediately translates into cost savings. How much you save depends on the types of data that you are storing.
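The dependence on data type is easy to see with a small experiment. The sketch below uses Python’s standard `zlib` module as a stand-in for whatever compression a storage product applies (that substitution is an assumption), and compares repetitive, log-like text against pseudo-random bytes, which behave like data that is already compressed, such as JPEG images or ZIP archives.

```python
import random
import zlib


def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (lower means more savings)."""
    return len(zlib.compress(data, 9)) / len(data)


# Highly repetitive text, similar in spirit to logs or office documents.
text_like = b"2020-01-01 INFO request served in 12 ms\n" * 2000

# Pseudo-random bytes stand in for already-compressed content.
rng = random.Random(0)
random_like = bytes(rng.getrandbits(8) for _ in range(len(text_like)))

print(f"text-like ratio:   {compression_ratio(text_like):.3f}")
print(f"random-like ratio: {compression_ratio(random_like):.3f}")
```

The repetitive input shrinks to a small fraction of its size, while the random input does not shrink at all, so the savings you see in practice depend heavily on your mix of data.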

Not all cloud solutions, by the way, include the ability to compress data. One example that comes to mind is a very well-promoted offering from a cloud platform vendor: Amazon’s Elastic File System, or EFS for short. EFS does not offer compression. That means you either need a third-party compression utility to compress your data before storing it in the cloud on EFS or a similar solution, which can lead to all sorts of potential issues down the road, or you need to store your data uncompressed, in which case you are paying more than necessary for that cloud storage.

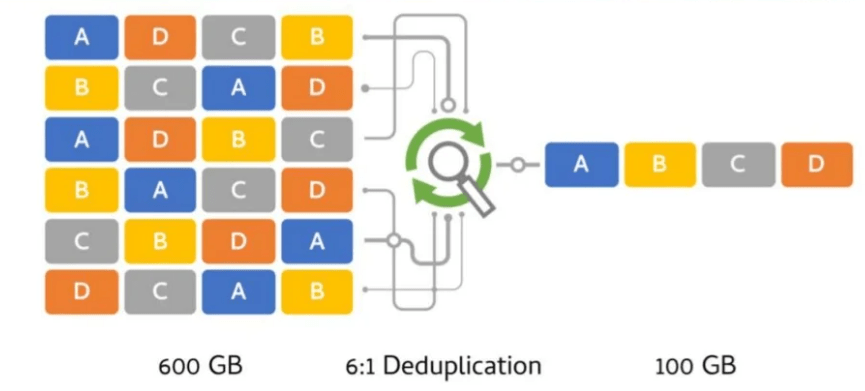

Deduplication

Another technology is deduplication. What is deduplication? It is exactly what it sounds like: the elimination or reduction of data redundancies.

If you look at all of the gigabytes, terabytes, and petabytes of data that you might have, there is going to be some level of duplication. Sometimes multiple people store the exact same file on a system that gets backed up into the cloud. All of that takes up additional space.

If you’re able to deduplicate the data that you’re storing, you can achieve significant storage-space savings, which translate into cost savings; how much, of course, depends on the amount of repetitive data being stored. As with compression, not all cloud solutions include the ability to deduplicate data. Amazon’s EFS, the example I mentioned previously, also does not include native deduplication.

Again, you either need a third-party dedupe utility to process your data before storing it in EFS or some similar solution, or you need to store all your data un-deduped in the cloud. That means, of course, paying more money than you need to.
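As a rough illustration of the idea, the sketch below deduplicates whole files by content hash, so that several users saving the identical file consume the space only once. This is a simplified file-level scheme for illustration, not a description of how SoftNAS or any other product implements deduplication (real systems often work on blocks rather than files), and the file names and sizes are made up.

```python
import hashlib
from typing import Dict, Tuple


def dedupe(files: Dict[str, bytes]) -> Tuple[Dict[str, bytes], Dict[str, str]]:
    """Store each distinct content once, keyed by its SHA-256 digest.

    Returns (store, index): `store` maps digest -> content, and `index`
    maps each file name to the digest of its content.
    """
    store: Dict[str, bytes] = {}
    index: Dict[str, str] = {}
    for name, content in files.items():
        digest = hashlib.sha256(content).hexdigest()
        store.setdefault(digest, content)  # keep only the first copy
        index[name] = digest
    return store, index


# Hypothetical example: three users keep the same report, one keeps notes.
report = b"quarterly report " * 50_000
notes = b"meeting notes " * 1_000
files = {
    "alice/report.pdf": report,
    "bob/report.pdf": report,
    "carol/report-copy.pdf": report,
    "dave/notes.txt": notes,
}

store, index = dedupe(files)
raw_bytes = sum(len(c) for c in files.values())
stored_bytes = sum(len(c) for c in store.values())
print(f"raw: {raw_bytes} bytes, after dedupe: {stored_bytes} bytes")
```

As the talk notes, the saving depends entirely on how much repetitive data you actually have; with no duplicates, the stored size equals the raw size.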

Object Storage

Much more cost-effective

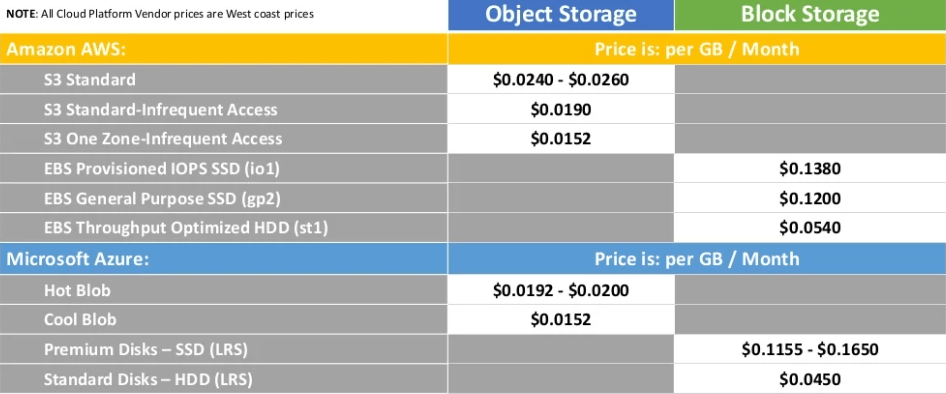

Let’s take a high-level look at an example of two different types of storage. What I hope you will take away from this image is that object storage is much more cost-effective, especially in the cloud.

Just a note: all the prices displayed in this table come from the respective cloud platform vendors and use their West Coast pricing, since they offer different prices for different locations and regions. What you will see is that higher-performing public cloud block storage is considerably more expensive than lower-performing public cloud object storage.

In the example, you can see ratios of five, six, or seven to one by which object storage is less expensive than block storage. In the past, people would typically use object storage for their less active data, as more of a long-term archive strategy. You can think of it as akin to the legacy tape drives that are still being used today.

Of course, people would put their more active data in block storage. If you follow that approach and can make use of object storage in a way that is easy for your applications and users to access, then that works out great.

If you can’t, you need help. Most solutions on the market today cannot access cloud-native object storage directly, so they need something in between to get the benefit of it. Similarly, getting cloud-native access to block storage requires some solution in between; there are a few on the market and, of course, SoftNAS is one of those.
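To make the five-to-seven-to-one ratios concrete, here is a small cost comparison. The two per-gigabyte prices are hypothetical placeholders chosen to give a six-to-one ratio; they are not taken from the table above or from any vendor’s price list, so substitute current prices for your region before drawing conclusions.

```python
def monthly_cost(size_gb: float, price_per_gb_month: float) -> float:
    """Monthly storage cost in USD for `size_gb` at a flat per-GB price."""
    return size_gb * price_per_gb_month


# Hypothetical prices in USD per GB-month (assumptions, not vendor quotes).
BLOCK_PRICE = 0.12
OBJECT_PRICE = 0.02

size_gb = 10_000  # 10 TB of data

block_cost = monthly_cost(size_gb, BLOCK_PRICE)
object_cost = monthly_cost(size_gb, OBJECT_PRICE)

print(f"block storage:  ${block_cost:,.2f} per month")
print(f"object storage: ${object_cost:,.2f} per month")
print(f"ratio: {block_cost / object_cost:.1f} to 1")
```

The sketch ignores request, retrieval, and data transfer charges, which object storage services commonly bill separately.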

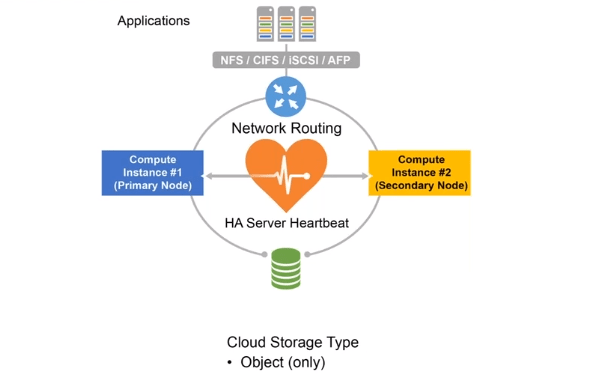

High Availability With Single Storage Pool

Relies on The Robust Nature of Cloud Object Storage

If you’re able to make use of object storage, what are some of the cool things you can do to save more money besides using object storage just by itself? A lot of applications require high availability. High availability (HA) is exactly what it sounds like. It is maintaining a maximum amount of up-time for applications and access to data.

The ability to have two compute instances share access to a single storage pool has existed in the past on legacy on-premises storage systems, but it hadn’t been fully brought over into the public cloud until recently.

If you’re able to do this as this diagram shows, with two compute instances accessing an object-storage pool, you’re relying on the robust nature of public cloud object storage. Public cloud object storage is typically designed for 10 or more 9s of durability. That would be 99.99999999% or better, which is pretty good.

The reason you would have two compute instances is that the SLAs for compute are not the same as the SLAs for storage. Your compute instance can go down in the cloud just as it could on an on-premises system, but at least your storage would remain up, using object storage. If you have two compute instances running in the cloud and one of them, which we’ll call the primary node, were to fail, it would fail over to the second compute instance, shown on this diagram as the secondary node, which would pick up the work.

There would be some delay in switching from the primary to the secondary. If you are actively writing to the storage at that moment, there will be a gap, but you would pick back up after a short period of time (call it less than five minutes, for example), which is certainly better than being down until the public cloud vendor gets everything back up. Just remember that not every vendor offers this solution, but it can reduce your overall public cloud storage cost by about half. If you don’t need twice the storage for a fully highly available system, and you can do it all with object storage and just two compute instances, you’re going to save roughly 50% of what the cost would normally be.
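The 50% figure follows from simple arithmetic, sketched below under the assumption that a conventional HA pair keeps one full copy of the data per node while the shared-pool design keeps a single copy. The price and capacity are hypothetical, and compute cost is left out because both designs run two instances.

```python
def ha_storage_cost(size_gb: float, price_per_gb_month: float, copies: int) -> float:
    """Monthly storage cost in USD when `copies` full copies of the data are kept."""
    return size_gb * price_per_gb_month * copies


# Hypothetical object storage price in USD per GB-month (an assumption).
PRICE = 0.02
SIZE_GB = 10_000

replicated = ha_storage_cost(SIZE_GB, PRICE, copies=2)  # one pool per node
shared = ha_storage_cost(SIZE_GB, PRICE, copies=1)      # single shared pool

saving = 1 - shared / replicated
print(f"replicated pools: ${replicated:,.2f} per month")
print(f"shared pool:      ${shared:,.2f} per month")
print(f"storage saving:   {saving:.0%}")
```

Note that the saving applies to the storage portion of the bill; the total saving on a full deployment is smaller once compute is included.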

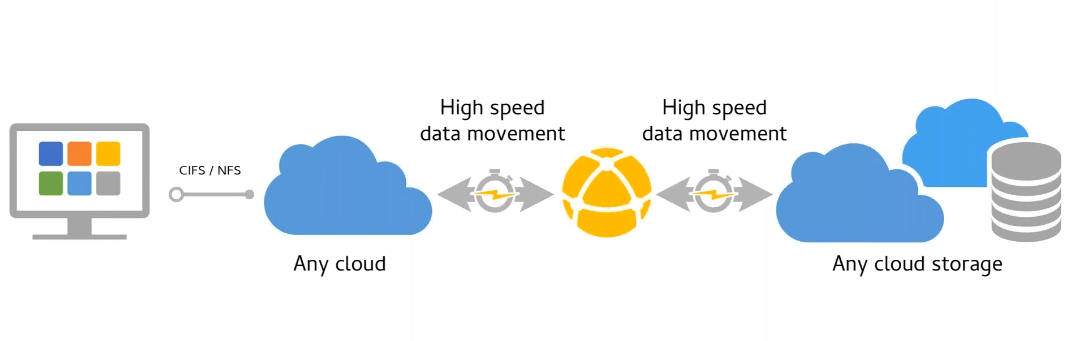

High-speed WAN optimization

Bulk data transfer acceleration

The next area of savings is one that a lot of people don’t necessarily think about when they are thinking about savings in the cloud and that is your network connection and how to optimize that high-speed connection to get your data moved from one point to another.

The traditional way is to fill lots of physical hard drives or storage systems, put them on a truck, and have that truck drive over to your cloud provider of choice, where the data is physically transferred from those storage devices into the cloud, or the devices are possibly mounted. This can be very expensive and filled with lots of hidden costs. Plus, you run the risk of your data getting out of sync between the originating source in your data center and the ultimate cloud destination, all of which can cost you money.

Another option, of course, is to lease high-speed network connections between your data center or data source and the cloud provider of your choice. That can also be very expensive: 1G or 10G network connections are pricey. If the data transfer takes longer than it needs to, you keep paying for those leased lines longer than you would want.

The last option is transferring your data over slower, noisier, more error-prone network connections. Especially in some parts of the world, that is going to take longer because of the quality of the connection and the inherent nature of the TCP/IP protocol. If data has to be retransmitted because of errors, drops, noise, or latency, the process becomes unreliable.

Sometimes the whole data transfer has to start over from the beginning, so all of the previous time is lost. All of that results in a lot of time-consuming effort that winds up costing your business money. All of those factors should also be considered.
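A back-of-the-envelope estimate shows why link speed and link quality matter so much. The sketch below computes the transfer time for a given data volume and line rate; the `efficiency` parameter is a made-up knob for the fraction of the line rate actually achieved once retransmissions and latency are accounted for, and the 100 TB volume is an arbitrary example.

```python
def transfer_days(size_tb: float, link_gbps: float, efficiency: float = 1.0) -> float:
    """Days needed to move `size_tb` terabytes over a `link_gbps` link.

    `efficiency` is the fraction of the nominal line rate actually achieved
    (1.0 means a perfect link; lower values model loss and retransmits).
    """
    bits = size_tb * 1e12 * 8
    seconds = bits / (link_gbps * 1e9 * efficiency)
    return seconds / 86_400


if __name__ == "__main__":
    for gbps in (1, 10):
        for eff in (1.0, 0.5):
            days = transfer_days(100, gbps, eff)
            print(f"100 TB over {gbps}G at {eff:.0%} efficiency: {days:.1f} days")
```

Halving the effective throughput doubles the time you pay for the leased line, which is the cost that transfer acceleration aims to cut.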

Automated Storage Tiering of Aged Data

Match Application Data to Most Cost-effective Cloud Storage

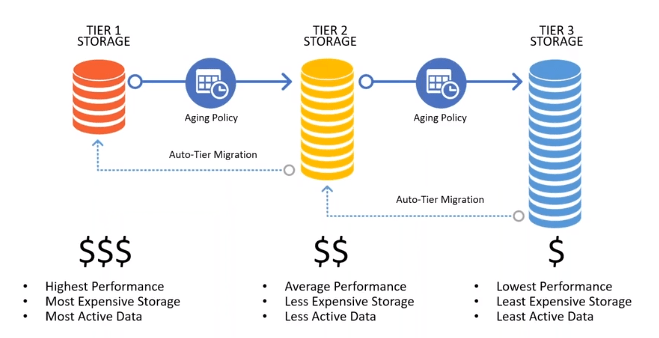

The next option I’m going to talk about is an interesting one. It assumes that you can make use of both object storage and block storage and use them together, creating tiers of storage: the higher-performing block storage on one tier, and other tiers that offer less performance and are less expensive.

If you have multiple tiers, and only your most active data lives in the most expensive, highest-performing tier, then you save money, provided you can move the data from tier to tier. A lot of solutions on the market today do this via a manual process, meaning that a person, typically somebody in IT, looks at the age of the data and moves it from one storage type to another.

If you have the ability to create aging policies that can move the data from one tier to another tier, to another tier, and back again, as it’s being requested, that can also save you considerable money in two ways.

One way is that you’re only storing the data on the tier that is appropriate at the given time, so you save money on your cloud storage. The other is that, if it can be automated and policy-driven, you save money on the labor otherwise needed to move the data manually from one tier to another.
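An aging policy of this kind can be expressed in a few lines. The sketch below is a minimal illustration, not the SoftNAS implementation: the tier names and the 30- and 90-day thresholds are hypothetical, and a real system would also handle the actual data movement.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass(frozen=True)
class TierRule:
    name: str
    max_age_days: float  # data accessed within this many days belongs here


# Hypothetical tiers, ordered from fastest and most expensive to cheapest.
POLICY: List[TierRule] = [
    TierRule("block-ssd", 30),
    TierRule("block-hdd", 90),
    TierRule("object", float("inf")),
]


def choose_tier(last_access: datetime, now: datetime,
                policy: List[TierRule] = POLICY) -> str:
    """Return the first tier whose age limit covers the time since last access."""
    age_days = (now - last_access).total_seconds() / 86_400
    for rule in policy:
        if age_days <= rule.max_age_days:
            return rule.name
    return policy[-1].name


if __name__ == "__main__":
    now = datetime(2020, 1, 1)
    for days in (1, 45, 400):
        tier = choose_tier(now - timedelta(days=days), now)
        print(f"last accessed {days} days ago -> {tier}")
```

Because the decision is keyed on the last access time, data that is requested again automatically qualifies for the fast tier on the next policy run, which covers the "and back again" case.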

These are all areas you should consider looking at to help reduce your overall public cloud storage expense.

What SoftNAS can offer to help you save money in the public cloud

Save 30-80% by reducing the amount of data to store

SoftNAS provides enterprise-level cloud NAS Storage featuring data performance, security, high availability (HA), and support for the most extensive set of storage protocols in the industry: NFS, CIFS/SMB-AD, iSCSI. It provides unified storage designed and optimized for high performance, higher than normal I/O per second (IOPS), and data reliability and recoverability. It also increases storage efficiency through thin-provisioning, compression, and deduplication.

SoftNAS runs as a virtual machine, providing a broad range of software-defined capabilities, including data performance, cost management, availability, control, backup, and security.