Migrating Existing Applications to AWS Without Reengineering

Migrate Existing Applications to AWS Cloud without Re-engineering... Download the full slide deck on Slideshare.

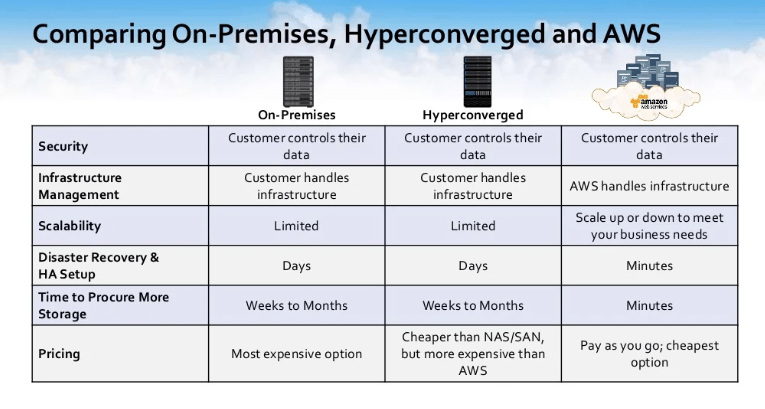

Designing a cloud data system architecture that protects your precious data when operating business-critical applications and workloads in the cloud is of paramount importance to cloud architects today.

Ensuring the high availability of your company’s applications and protecting business data is challenging and somewhat different than in traditional on-premise data centers. For most companies with hundreds to thousands of applications, it’s impractical to build all of these important capabilities into every application’s design architecture.

The cloud storage infrastructure typically provides only a subset of what’s required to protect business data and applications properly. So how do you ensure your business data and applications are architected correctly and protected in the cloud? In this webinar, we covered best practices for protecting business data in the cloud, how to design a protected and highly available cloud system architecture, and lessons learned from architecting thousands of cloud system architectures.

Migrate Applications to AWS Cloud without Re-engineering

For our agenda today, this is going to be a technical discussion. We’ll be talking about security and data concerns for migrating your applications to AWS Cloud. We will also talk a bit about the design and architectural considerations around security and access control, performance, backup and data protection, mobility and elasticity, and high availability.

Best practices for designing cloud storage for existing apps on AWS.

First, let’s talk about SoftNAS a little bit. We’ll share our story, why we have this information, and why we want to share it.

SoftNAS was born in the cloud in 2012. Our founder, Rick Braddy, went out to find a solution that would give him access to cloud storage. When he went out and looked for one, he couldn’t find anything that was out there. He took it upon himself to go through the process of creating the solution that we now know as SoftNAS.

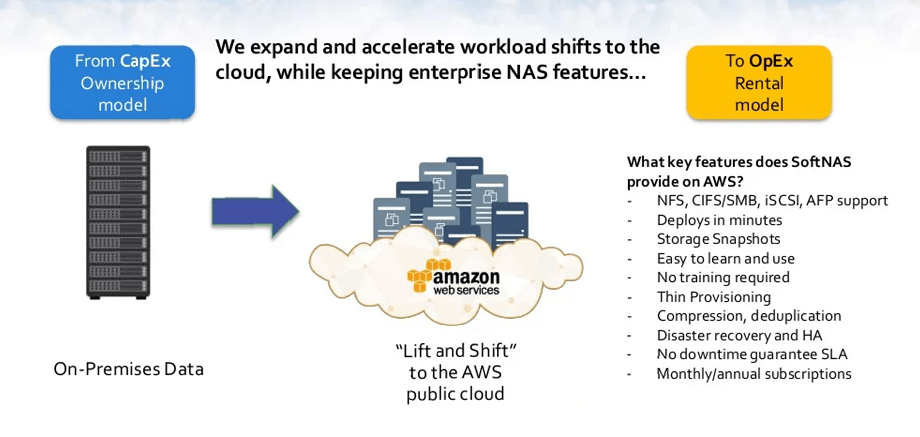

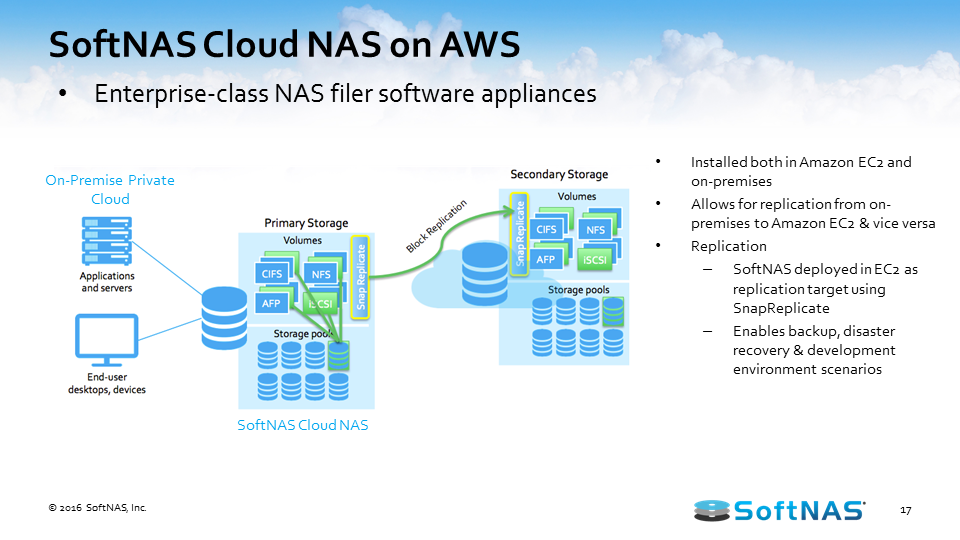

SoftNAS is a cloud NAS. It’s a virtual NAS storage appliance that exists on-premise or within the AWS Cloud, and we have over 2,000 VPC deployments. We focus on no app left behind. We give you the ability to migrate your apps into the cloud so that you don’t have to change your code at all. It’s a Lift and Shift: your applications can address cloud storage very easily and very quickly.

We work with Fortune 500, SMB companies, and thousands of AWS subscribers. SoftNAS also owns several patents including patents for HA in the cloud and data migration.

Security and data concerns compound design requirements

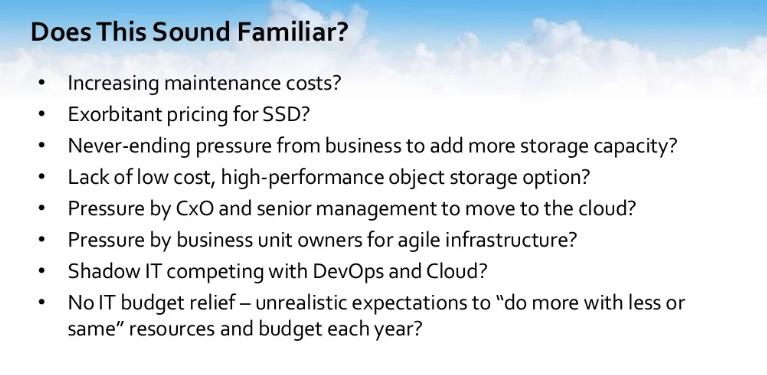

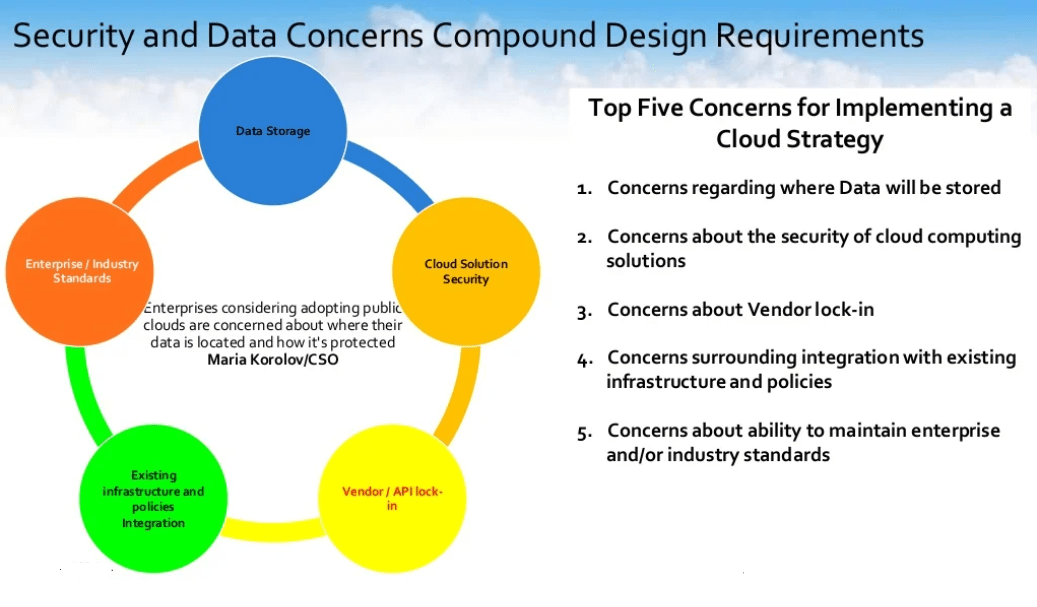

The first thing that we want to go through and we want to talk about is cloud strategy. Cloud strategy, what hinders it? What questions do we need to ask? What are we thinking about as we go through the process of moving our data to the cloud?

IDG is the number one tech media company in the world. You might know them for creating CIO.com, Computerworld, InfoWorld, ITworld, and Network World. Basically, if it has a technology term with “World” next to it, it’s probably owned by IDG.

IDG polls its customers every single year with the goal of measuring cloud computing trends among technology decision-makers: their uses and plans across various cloud services, deployment models, and investments, and the business strategies and plans that the rest of the IT world should focus on. From that, we took the top five concerns.

The top five concerns, believe it or not, have to do with our data.

Number one: concerns regarding where my data will be stored. Is my data going to be stored safely and reliably? Is it going to be stored in a data center? What type of storage is it going to be stored on? How can I figure that out? Especially when you’re thinking about moving to a public cloud, these are some of the questions that rack people’s minds.

Number two: concerns about the security of cloud computing solutions. Is there a risk of unauthorized access? What about data integrity protection? These are all concerns about the security of what I’m going to have in that environment.

Number three: concerns about vendor lock-in. What happens if my vendor of choice changes costs, changes offerings, or just goes away? Number four: concerns surrounding integration with existing infrastructure and policies. How do I make the information available outside the cloud while preserving the uniform set of access privileges that I have worked for the last 20 years to develop?

Number five: concerns about the ability to maintain enterprise or industry standards, such as ISO, PCI, SOC, or SAS. We will share some of the questions that our customers have asked, and asked early in the design process of moving their data to the cloud, so that this is beneficial to you.

Security and Access Control

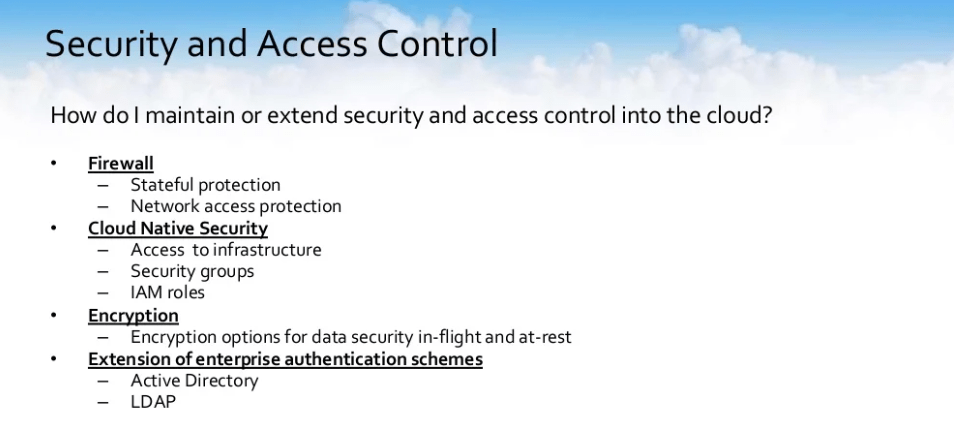

Question number one, based on the same IDG poll: how do I maintain or extend security and access control into the cloud? We often think outside in when we design for threats.

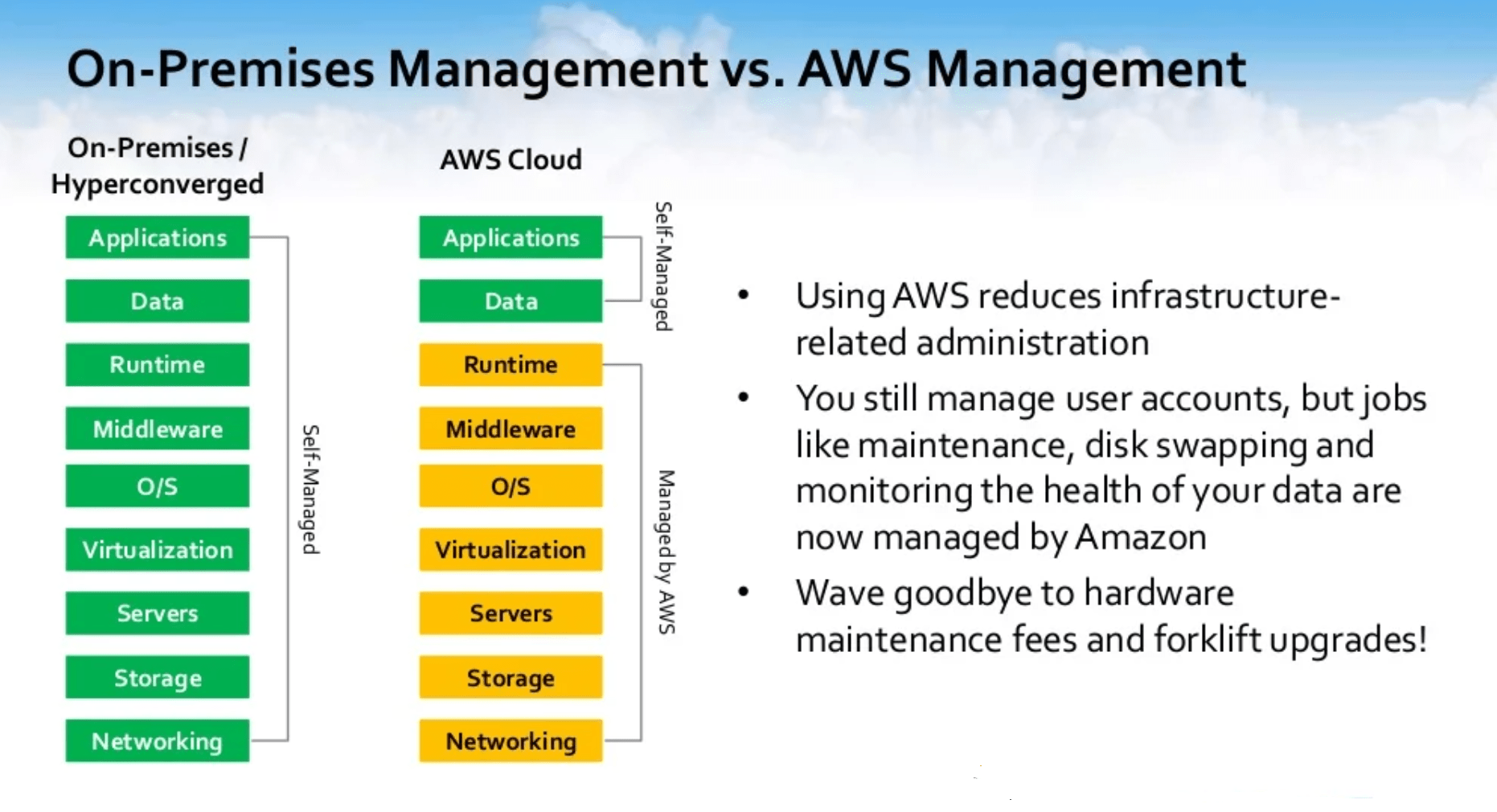

This is how our current on-prem environment is built. It was built with protection from external access. It then goes down to physical access to the infrastructure. Then it’s access to the files and directories. All of these protections need to be considered and extended to your cloud environment, and that’s where the setup of AWS’s infrastructure goes hand-in-hand with the SoftNAS NAS filer.

First is setting up a firewall. Amazon already gives you the ability to use firewalls through the access controls in your security groups. You set up stateful protection for your VPCs, you set up network access protection, and then you go into cloud-native security. Access to infrastructure: who has access to your infrastructure?

By setting up your IAM roles or your IAM users, along with your security groups, you have the ability to control that. Then there is encrypting that data. If everything else fails and users can touch my data, how do I make sure that even when they can see it, it’s encrypted and it’s something that they cannot use?
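
To make the security group idea concrete, here is a minimal sketch that builds ingress rules admitting only one client subnet on the storage ports. The dictionaries are shaped like the `IpPermissions` structure that the EC2 API accepts for security group ingress, but nothing here calls AWS. The function name, the subnet, and the choice of default ports (NFS 2049, SMB/CIFS 445, iSCSI 3260) are assumptions for illustration, not something from the webinar.

```python
import ipaddress

# Default TCP ports for common file and block protocols (assumed defaults).
STORAGE_PORTS = {"nfs": 2049, "cifs": 445, "iscsi": 3260}


def build_ingress_rules(protocols, client_cidr):
    """Return ingress rules that admit only client_cidr on each protocol's port."""
    # ip_network raises ValueError on a malformed CIDR, so typos fail early.
    network = ipaddress.ip_network(client_cidr)
    if network.prefixlen == 0:
        raise ValueError("refusing to open storage ports to every address")
    rules = []
    for name in protocols:
        port = STORAGE_PORTS[name]
        rules.append({
            "IpProtocol": "tcp",
            "FromPort": port,
            "ToPort": port,
            "IpRanges": [{"CidrIp": str(network), "Description": f"{name} clients"}],
        })
    return rules


# Hypothetical application subnet that is allowed to mount the storage.
rules = build_ingress_rules(["nfs", "cifs"], "10.0.1.0/24")
for rule in rules:
    print(rule["FromPort"], rule["IpRanges"][0]["CidrIp"])
```

The point of the guard on `0.0.0.0/0` is the outside-in thinking described above: storage ports should be reachable only from the networks that actually need them.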

We also talk about the extension of enterprise authentication schemes. How do I make sure that I am tying into Active Directory or tying into LDAP, which already exists in my environment?

Backup and Data Protection

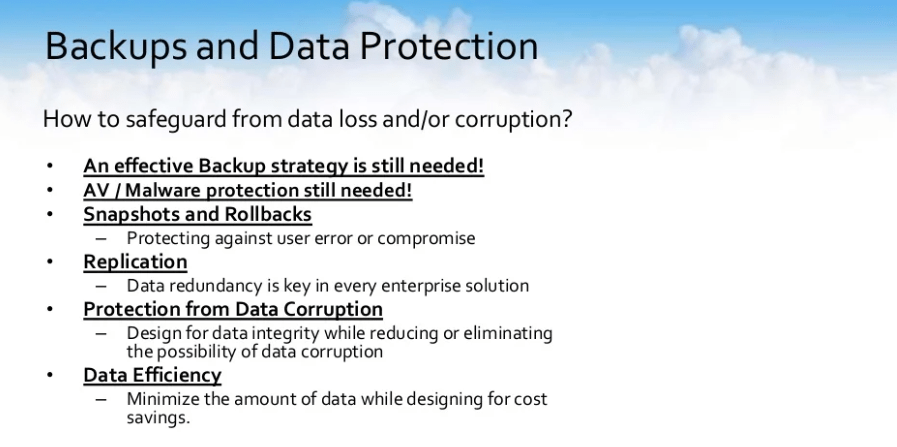

The next question that we want to ask is structured around backups and data protection: how do I safeguard against data loss or corruption? We get asked this question probably about 10-15 times a day. We get asked, “I’m moving everything to the cloud; do I still need a backup?” Yes, you still do need a backup. An effective backup strategy is still needed, even though your data is in the cloud and you have redundancy.

Everything has been enhanced, but you still need a point in time, or an extended period of time, that you can go back to and grab that data. Do I still need antivirus and malware protection? Yes, antivirus and malware protection is still needed. You also need the ability to have snapshots and rollbacks, and that’s one of the things that you want to design for as you decide to move your storage to the cloud.

How do I protect against user error or compromise? We live in a world of compromise. A couple of weeks ago, we saw companies all over Europe run into the problem of ransomware. Ransomware was basically hitting companies left and right. From a snapshot and rollback standpoint, you want to have a point in time that you can quickly roll back to so that your users will not experience a long period of downtime. You need to design your storage with that in mind.
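
As a rough sketch of what “a point in time that you can quickly roll back to” means in practice, the snippet below picks the newest snapshot taken before a compromise. The hourly schedule, the timestamps, and the function name are all hypothetical; a real system would read snapshot times from its storage layer.

```python
from datetime import datetime, timedelta


def latest_clean_snapshot(snapshot_times, compromised_at):
    """Return the newest snapshot taken strictly before compromised_at, or None."""
    clean = [t for t in snapshot_times if t < compromised_at]
    return max(clean) if clean else None


# Hypothetical schedule: one snapshot per hour over a single day.
start = datetime(2024, 1, 1, 0, 0)
snapshots = [start + timedelta(hours=h) for h in range(24)]

# Assume ransomware started encrypting files at 14:20.
compromised_at = datetime(2024, 1, 1, 14, 20)
rollback_to = latest_clean_snapshot(snapshots, compromised_at)
print("roll back to:", rollback_to)
print("work lost:", compromised_at - rollback_to)
```

The gap between the compromise and the chosen snapshot is the work your users lose, so the snapshot frequency you design for directly sets that exposure.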

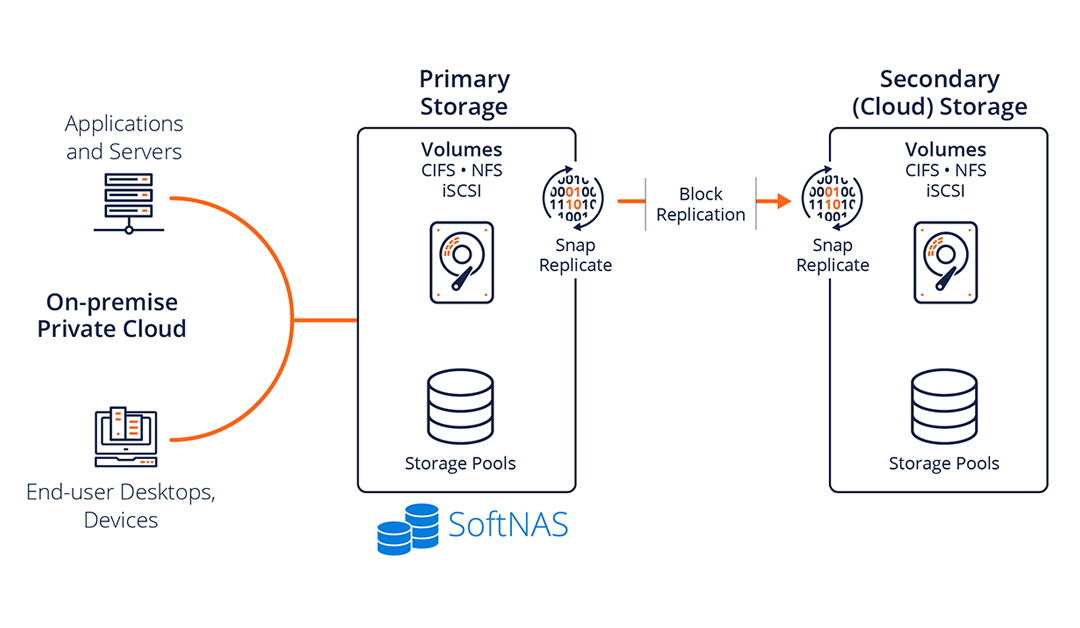

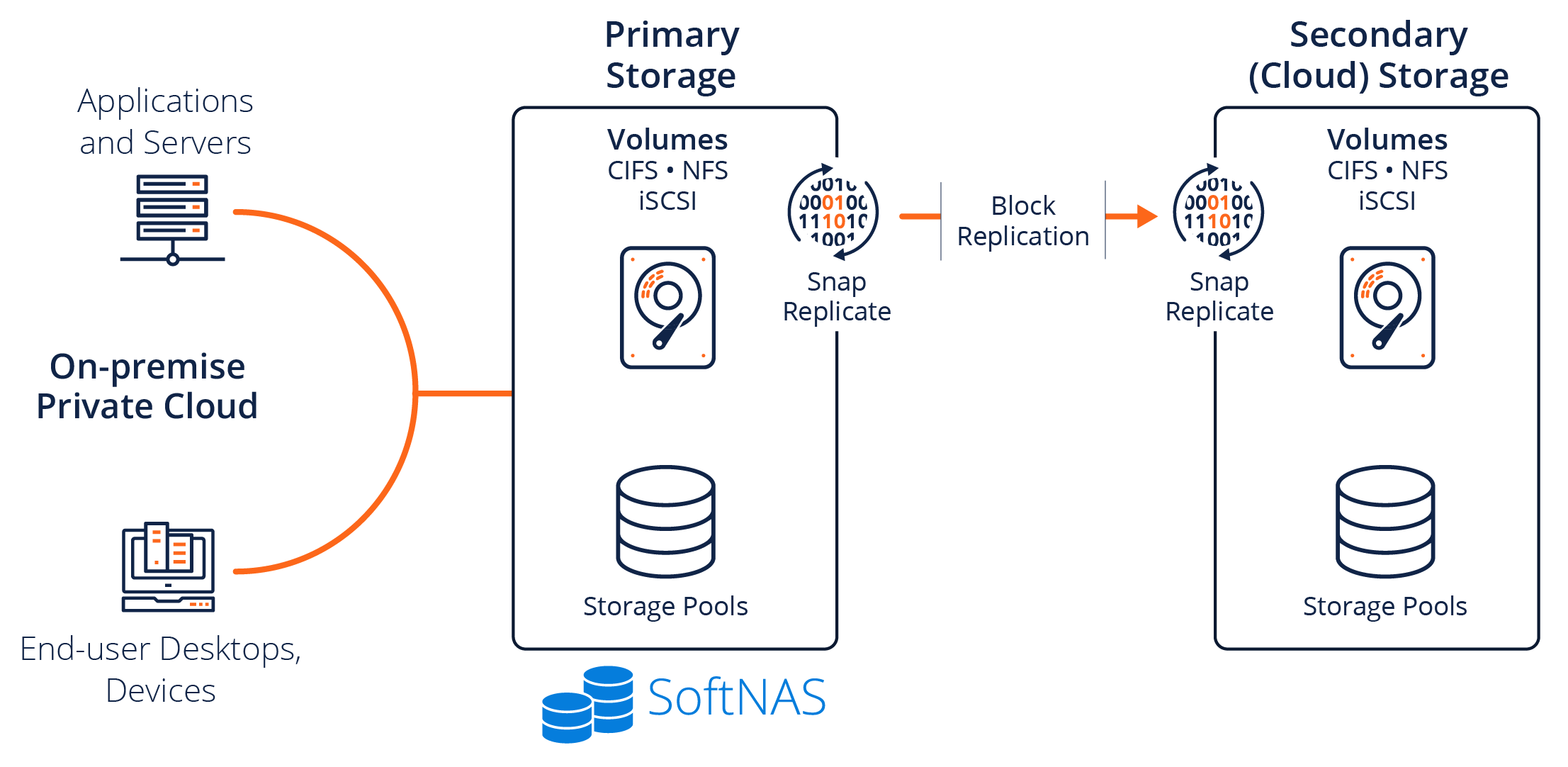

We also need to talk about replication. Where am I going to store my data? Where am I going to replicate my data to? How can I bring some of the data redundancy that I am used to in my on-prem environment to the cloud and have data resiliency?

I also need to think about how to protect myself from data corruption. How do I design my environment to ensure data integrity, so that the protocols and the underlying infrastructure protect me from the different scenarios that would cause my data to lose its integrity?

Also, you want to think about data efficiency. How can I minimize the amount of data while designing for cost savings? Am I thinking in this scenario about how I dedupe and how I compress that data? All of these things need to be taken into account as you go through the design process, because it’s easier to think about it and ask those questions now than to try to shoehorn it in after you’ve moved your data to the cloud.
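
To get a feel for what deduplication and compression can save, here is a small sketch that estimates both on a sample of data using only the Python standard library. It deduplicates fixed-size blocks by hash and then compresses the unique blocks with zlib. The 4 KiB block size and the synthetic sample are assumptions; real savings depend entirely on your data and on how your storage layer implements these features.

```python
import hashlib
import zlib


def estimate_savings(data, block_size=4096):
    """Return (raw, deduped, deduped_and_compressed) sizes in bytes."""
    unique_blocks = {}
    for offset in range(0, len(data), block_size):
        block = data[offset:offset + block_size]
        # Identical blocks share one digest, so each is stored only once.
        unique_blocks.setdefault(hashlib.sha256(block).digest(), block)
    deduped = sum(len(b) for b in unique_blocks.values())
    compressed = sum(len(zlib.compress(b)) for b in unique_blocks.values())
    return len(data), deduped, compressed


# Synthetic sample: 100 copies of one text block plus 10 copies of another.
block_a = (b"invoice,2024,paid\n" * 256)[:4096]
block_b = (b"invoice,2024,open\n" * 256)[:4096]
sample = block_a * 100 + block_b * 10

raw, deduped, compressed = estimate_savings(sample)
print(f"raw={raw} deduped={deduped} compressed={compressed}")
```

Running something like this against a representative sample before the migration gives you a number to plan capacity and cost around, rather than finding out afterwards.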

Performance

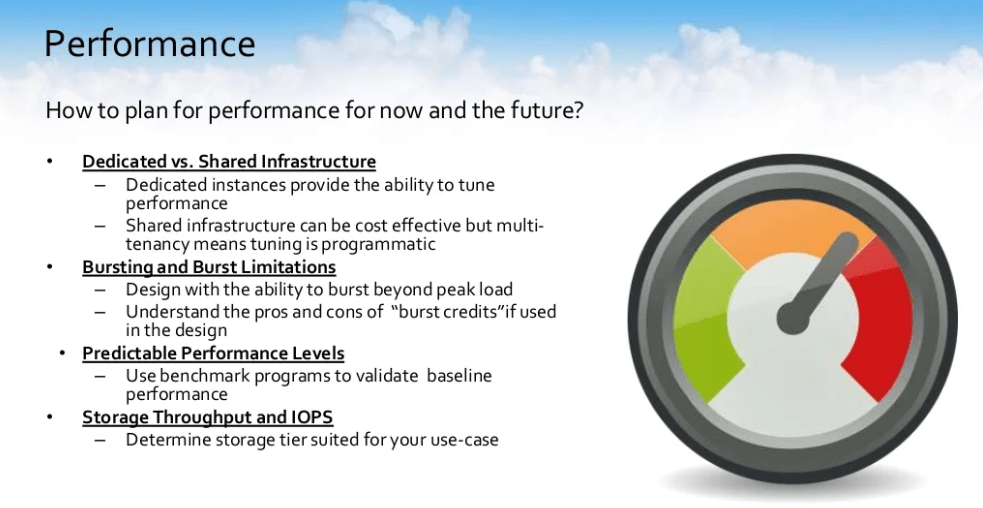

The next thing that we need to think about is performance. We get asked this all the time. How do I plan for performance? How do I design for performance, not just for now but for the future also? Yes, we could design a solution that is ideal for our current situation; but if it doesn’t scale with us for the next two years, for the next five years, it’s not beneficial. It gets archaic very quickly.

How do I structure it so that I am designing not just for now but for two years from now, for five years from now, for potentially ten years from now? There are different concerns that need to be taken into account at this point. We need to talk about dedicated versus shared infrastructure. Dedicated instances give you the ability to tune performance. That’s a big thing because as your use-case changes from what you do right now, you need to make sure that you can tune performance for it.

Shared infrastructure. Although shared infrastructure can be cost-effective, multi-tenancy means that tuning is programmatic. If I go ahead and I use a shared infrastructure, that means that if I have to tune for my specific use-case or multiple use-cases within that environment, I have to wait for a programmatic change because it’s not dedicated to me solely. It is used by many other users.

Those are different concerns that you need to think about when it actually comes to the point of, am I going to use dedicated or am I going to use a shared infrastructure?

We also need to think about bursting and bursting limitations. You always design with the ability to burst beyond peak load. That is rule number one, design 101: I know that my regular load is going to be X, but I need to make sure that I can burst beyond X. You also need to understand the pros and cons of burst credits. There are different infrastructures and different solutions out there that introduce burst credits.

If burst credits are used, what do I have the ability to burst to? Once that burst credit is used up, what happens? Does it bring me down to subpar or does it bring me back to par? These are questions that you need to ask as you go into the process of designing for storage and deciding what the look and feel of your storage is going to be while you’re moving to the public cloud.
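
As one worked example of those burst-credit questions, the sketch below models the credit bucket of an EBS gp2 volume. The constants (3 IOPS per GiB baseline with a 100 IOPS floor and 16,000 IOPS ceiling, bursting to 3,000 IOPS, a bucket of 5.4 million I/O credits) are the figures AWS has documented for gp2; treat them as assumptions and check the current EBS documentation before relying on them.

```python
GP2_IOPS_PER_GIB = 3
GP2_MIN_BASELINE = 100
GP2_MAX_BASELINE = 16_000
GP2_BURST_IOPS = 3_000
GP2_CREDIT_BUCKET = 5_400_000  # I/O credits in a full bucket


def gp2_baseline_iops(size_gib):
    """Baseline IOPS for a gp2 volume of the given size."""
    return min(GP2_MAX_BASELINE, max(GP2_MIN_BASELINE, GP2_IOPS_PER_GIB * size_gib))


def burst_seconds(size_gib):
    """Seconds a full bucket sustains burst IOPS; None if the baseline already meets it."""
    baseline = gp2_baseline_iops(size_gib)
    if baseline >= GP2_BURST_IOPS:
        return None
    # Credits drain at (burst - baseline) per second because the baseline keeps refilling the bucket.
    return GP2_CREDIT_BUCKET / (GP2_BURST_IOPS - baseline)


for size in (100, 500, 1000):
    print(size, gp2_baseline_iops(size), burst_seconds(size))
```

Under these figures, a small volume can burst for a limited window and then drops back to its baseline, which is exactly the “par or subpar” question you want answered before production depends on it.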

You also need to consider predictable performance levels. If I am running anything in production, I need to know that I have a baseline. That baseline should not fluctuate, even though I have the ability to burst beyond it and use more when I need more. When I am at the baseline, I need to be confident that it is predictable and that my performance levels are something I don’t have to worry about.

You should already be thinking about using benchmark programs to validate the baseline performance of your system. There are tons of freeware tools out there, and benchmarking is something that you definitely need to do while you’re going through the design process for performance within the environment.
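
For a first sanity check of baseline performance, a tiny script like the one below can measure sequential write throughput on a mounted volume. This is only a sketch: the function name, sizes, and the use of the system temp directory as a stand-in path are assumptions, and a dedicated benchmark tool such as fio will give far more complete results (random I/O, read paths, queue depths).

```python
import os
import tempfile
import time


def sequential_write_mib_s(directory, total_mib=16, block_kib=1024):
    """Write total_mib of data in block_kib chunks and return MiB/s including fsync."""
    block = os.urandom(block_kib * 1024)
    blocks = (total_mib * 1024) // block_kib
    with tempfile.NamedTemporaryFile(dir=directory) as handle:
        started = time.perf_counter()
        for _ in range(blocks):
            handle.write(block)
        handle.flush()
        # Force data to storage so the page cache does not flatter the result.
        os.fsync(handle.fileno())
        elapsed = time.perf_counter() - started
    return total_mib / elapsed


# Point this at the mounted volume you want to measure; the temp dir is only a stand-in.
result = sequential_write_mib_s(tempfile.gettempdir())
print(f"sequential write: {result:.1f} MiB/s")
```

Run it several times at different times of day; what you are looking for is not one big number but a baseline that stays put.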

Storage, throughput, and IOPS. What storage tier is best suited for my use case? In every environment, you’re going to have multiple use cases. Do I have the ability to design my application or the storage that’s going to support my application to be best suited for my use case?

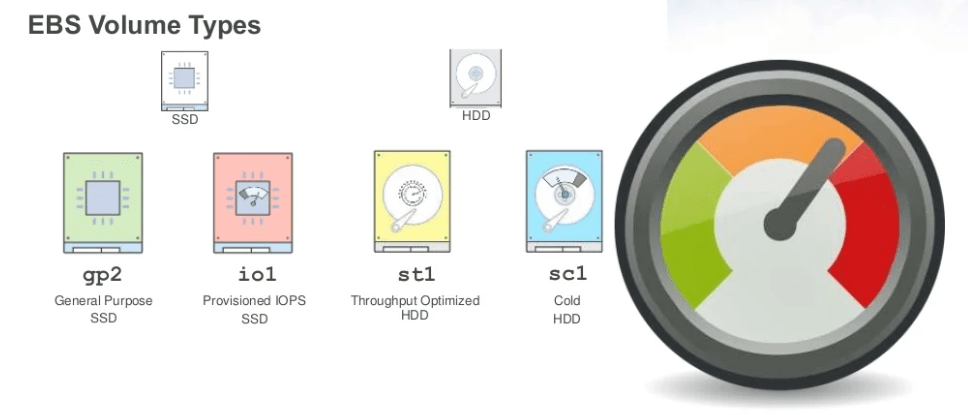

From a performance standpoint, you have multiple options for your storage tiers. You could go with gp2, the General Purpose SSD volumes. There’s Provisioned IOPS. There’s Throughput Optimized. There’s Cold HDD. All of these are options that you need to make a determination on.

A lot of times, you’ll think, “I need General Purpose IOPS for this application, but Throughput Optimized works well with that application.” Do I have the ability to use both? What’s my elasticity in using both? What’s the thought behind doing it?

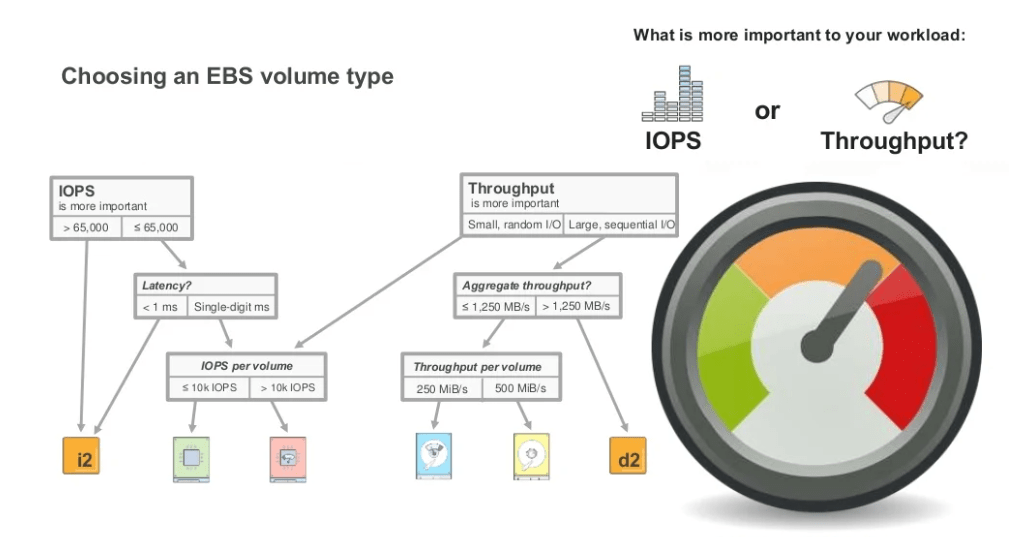

AWS gives you the ability to address multiple types of storage. The question that you need to ask yourself is, based on my use case, what is most important to my workload? Is it IOPS? Is it throughput?

The answer to that question gives you a better idea of what storage to choose. This slide breaks it down a little, starting from anything greater than 65,000 IOPS. Do you need higher throughput? What type of storage should you focus on?

These are things that we work through with our customers on a regular basis, steering them to the right choice so that it’s cost-effective and also effective for the users of their applications.
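
One way to reason about “is it IOPS or is it throughput” is that the two are tied together by the I/O size: throughput is IOPS multiplied by the size of each request. The sketch below just does that arithmetic. The workloads and I/O sizes are hypothetical examples, not measurements from the webinar.

```python
def throughput_mib_s(iops, io_size_kib):
    """Throughput implied by an IOPS rate at a given I/O size."""
    return iops * io_size_kib / 1024


def iops_needed(target_mib_s, io_size_kib):
    """IOPS required to sustain target_mib_s at a given I/O size."""
    return target_mib_s * 1024 / io_size_kib


# Hypothetical workloads: small random I/O versus large streaming requests.
small_io = throughput_mib_s(65_000, 16)   # 65,000 IOPS at 16 KiB per request
streaming = iops_needed(500, 1024)        # 500 MiB/s in 1 MiB requests
print(f"{small_io:.1f} MiB/s, {streaming:.0f} IOPS")
```

A workload that issues many small requests is bound by IOPS long before throughput matters, while a streaming workload can need high throughput at a very modest IOPS rate, which is why the two point to different storage tiers.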

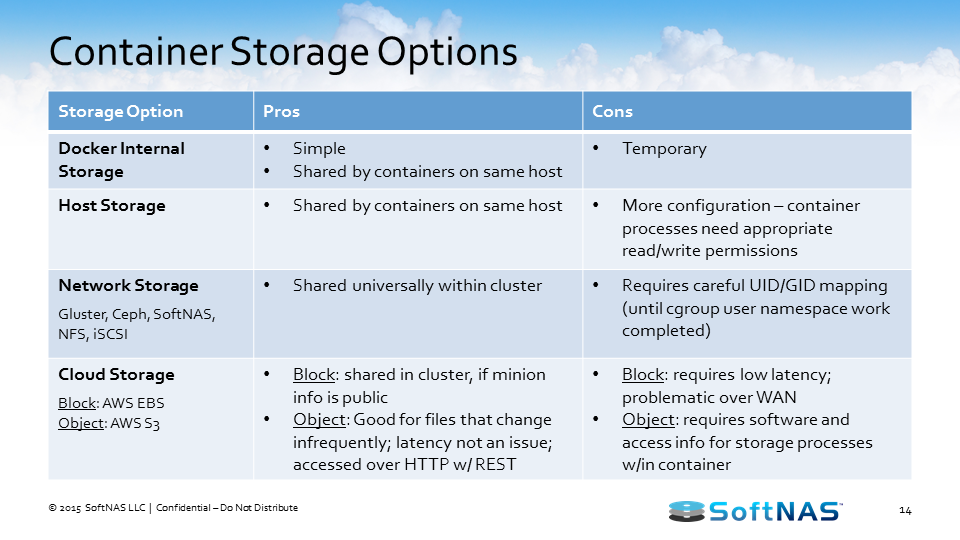

Then AWS S3. A cloud NAS should have the ability to address object storage because there are different use cases within your environment that would benefit from being able to use object storage.

We were at a meetup a couple of weeks ago that we did with AWS and there was an ask from the crowd. The ask came across. They said, “If I have a need to be able to store multiple or hundreds of petabytes of data, what should I use? I need to be able to access those files.”

The answer back was, you could definitely use S3, but you’ll need to write to the API to be able to use S3 correctly. With a cloud NAS, you should have the ability to use object storage without having to work with the API yourself.

How do you actually get to the point that you’re using APIs already built into the software to be able to use S3 storage or object storage the way that you would use any other file system?

Mobility and Elasticity

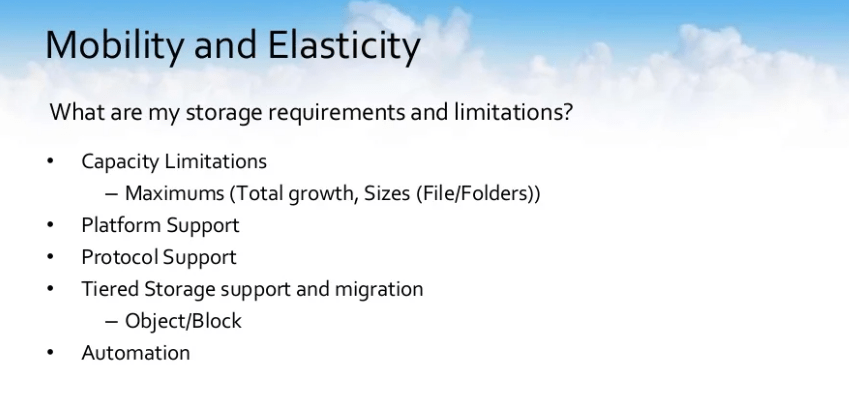

What are my storage requirements and limitations? You would think that these would be the first questions that get asked as companies come to us and we go through this design process, but a lot of times, they’re not. People are not aware of their capacity limitations, so they decide to use a certain platform or a certain type of storage while unaware of the maximums. What’s my total growth?

What is the largest size that this particular medium will support? What’s the largest file size? What’s the largest folder size? What’s the largest block size? These are all questions that need to be considered as you go through the process of designing your storage. You also need to think about platform support. What other platforms can I quickly migrate to if needs be? What protocols can I utilize?
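
To turn “what’s my total growth?” into a number, a quick compound-growth calculation shows how long you have before you hit a capacity maximum. The starting size, growth rate, and limit below are hypothetical; substitute the documented maximum of whatever storage medium you are evaluating.

```python
import math


def years_until_limit(current_tib, annual_growth, limit_tib):
    """Years until current_tib, compounding at annual_growth, reaches limit_tib."""
    if current_tib >= limit_tib:
        return 0.0
    if annual_growth <= 0:
        return math.inf
    return math.log(limit_tib / current_tib) / math.log(1 + annual_growth)


# Hypothetical: 4 TiB today, growing 40% a year, on a medium that tops out at 16 TiB.
years = years_until_limit(4, 0.40, 16)
print(f"limit reached in about {years:.1f} years")
```

If the answer is shorter than the lifetime you are designing for, that is the signal to ask about larger maximums or a migration path to another tier now rather than later.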

From tiered storage support and migration, if I start off with Provisioned IOPS disks, am I stuck with Provisioned IOPS disks? If I realize that my utilization is that of the need for S3, is there any way for me to migrate from Provisioned IOPS storage to S3 storage?

We need to think about that as we’re going with designing storage in the backend. How quickly can we make that data mobile? How quickly can we make it something that could be sitting on a different tier of storage, in this case, from object to block or vice versa, from block back to object?

And automation. Thinking about automation, what can I quickly do? What can I script? Is this something that I could spin up using CloudFormation? Are there any tools? Is there an API associated with it, or a CLI? What can I do to make a lot of the work that I regularly do quick, easy, and scriptable?
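
As a small illustration of scripting this kind of work, the sketch below generates a minimal CloudFormation template for a single encrypted EBS volume and prints it as JSON. The resource type `AWS::EC2::Volume` and its properties are standard CloudFormation; the logical name, size, volume type, and availability zone are made-up values, and this is not the template that any particular product ships.

```python
import json


def ebs_volume_template(name, size_gib, volume_type, availability_zone):
    """Return a CloudFormation template (as a dict) declaring one encrypted EBS volume."""
    return {
        "AWSTemplateFormatVersion": "2010-09-09",
        "Description": f"Data volume {name}",
        "Resources": {
            name: {
                "Type": "AWS::EC2::Volume",
                "Properties": {
                    "AvailabilityZone": availability_zone,
                    "Size": size_gib,
                    "VolumeType": volume_type,
                    "Encrypted": True,
                },
            }
        },
    }


# Hypothetical values; logical names must be alphanumeric.
template = ebs_volume_template("AppDataVolume", 500, "gp2", "us-east-1a")
print(json.dumps(template, indent=2))
```

Generating templates from code like this makes it easy to stamp out the same storage layout per environment and to keep the definition under version control.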

Application Modernization or Application Migration

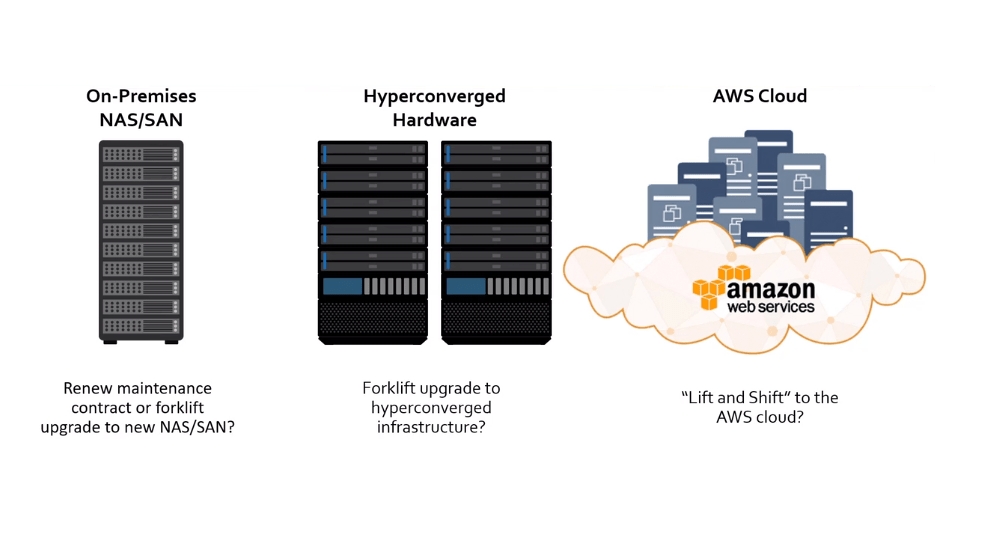

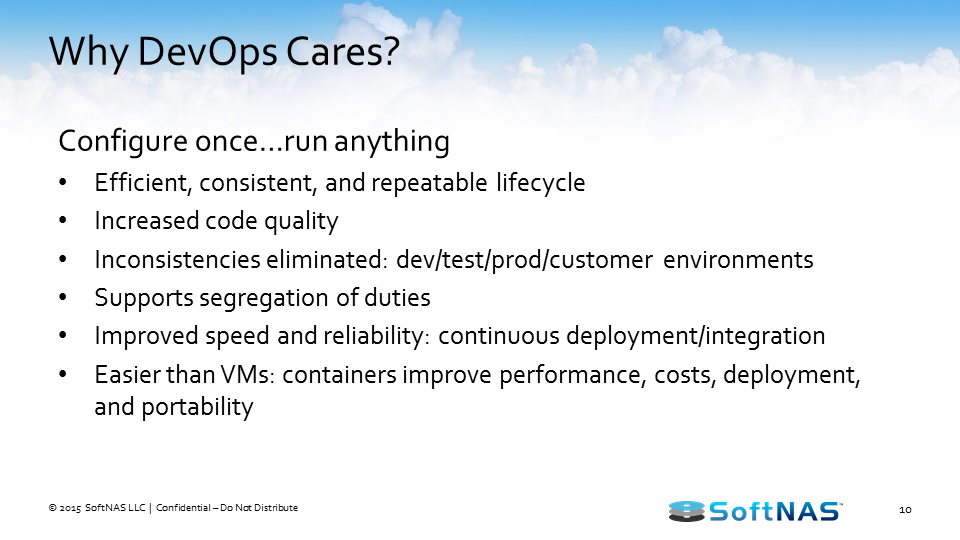

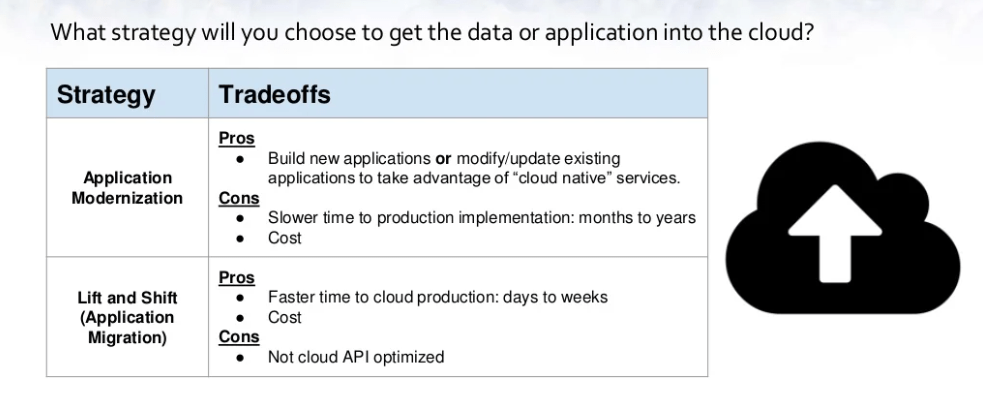

Application Modernization or Application Migration? We get asked this question also a lot. What strategy should I choose to get the data or application to the cloud? There are two strategies that are out there right now.

There is the application modernization strategy which comes with its pros and cons. Then there is also the Lift and Shift strategy which comes with its own pros and cons.

With modernization, the pro is that you build a new application. You can modify, delete, and update existing applications to take advantage of cloud-native services. It’s definitely a huge pro. It’s born in the cloud. It’s changed in the cloud. You have access to it.

However, the con associated with that is a slower time to production. More than likely, it’s going to have significant downtime as you try to migrate that data over. The timeline we’re looking at is months to years. Then there are also the costs associated with that: ensuring that it’s built correctly, tested correctly, and then implemented correctly.

Then there is the Lift and Shift migration. The pro of Lift and Shift is a faster time to cloud production. Instead of months to years, we’re looking at days to weeks or, depending on how aggressive you can be, hours to weeks. It totally depends.

Then there are the costs associated with it, which are lower. You’re not recreating that app. You’re not going back and rewriting code that is only going to be beneficial for your move. Instead, your developers are writing features and new things in your code that are actually going to benefit your customers and continue to support them.

The con associated with the Lift and Shift approach is that your application is not API optimized, but you can decide whether that is beneficial or needed for your app. Does your app need to speak the API, or does it just need to address the storage?

High Availability (HA)

The next thing that we want to discuss is high availability. This is key in any design process. How do I plan for failure or disruption of services? If anything happens, if anything goes wrong, how do I cover myself to make sure that my users don’t feel the pain? My failover needs to be non-disruptive.

How can I make sure that if a region fails, if an instance fails, my users come back and don’t even notice that a problem happened? I need to design for that. How do I ensure that during this process I am maintaining my existing connections? It shouldn’t be that failover happens and then I need to go back, recreate all my tiers, and repoint my pointers to different APIs and different locations.
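
The idea of failing over without repointing clients can be sketched as two storage nodes behind one virtual IP: clients mount the virtual IP, health checks watch the active node, and after a few consecutive failures the standby takes over while the address stays the same. Everything in this snippet (class name, node names, IP, threshold) is hypothetical and only simulates the logic; it is not how any specific product implements HA.

```python
class HaPair:
    """Two storage nodes behind one virtual IP that clients mount."""

    def __init__(self, primary, secondary, virtual_ip, max_failures=3):
        self.active = primary
        self.standby = secondary
        self.virtual_ip = virtual_ip
        self.max_failures = max_failures
        self.failures = 0

    def record_health_check(self, healthy):
        """Feed one health-check result; fail over after max_failures misses in a row."""
        if healthy:
            self.failures = 0
            return False
        self.failures += 1
        if self.failures >= self.max_failures:
            # Swap roles; the virtual IP is unchanged, so client mounts need no repointing.
            self.active, self.standby = self.standby, self.active
            self.failures = 0
            return True
        return False


pair = HaPair("node-a", "node-b", "10.0.1.100")
for result in (True, False, False, False):
    failed_over = pair.record_health_check(result)
print(pair.active, pair.virtual_ip, failed_over)
```

Requiring several consecutive failures before switching is a deliberate trade-off: it avoids flapping on a single missed check at the cost of a slightly longer detection time.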

How do I make sure that I can maintain my existing connections within a consistent RPO (recovery point objective)? How do I make sure that what I’ve designed fulfills my RPO needs?

Another question that generally comes in to our team is, is this HA per app, or is this HA for infrastructure? When you go through the process of app modernization, you’re actually doing HA per app.

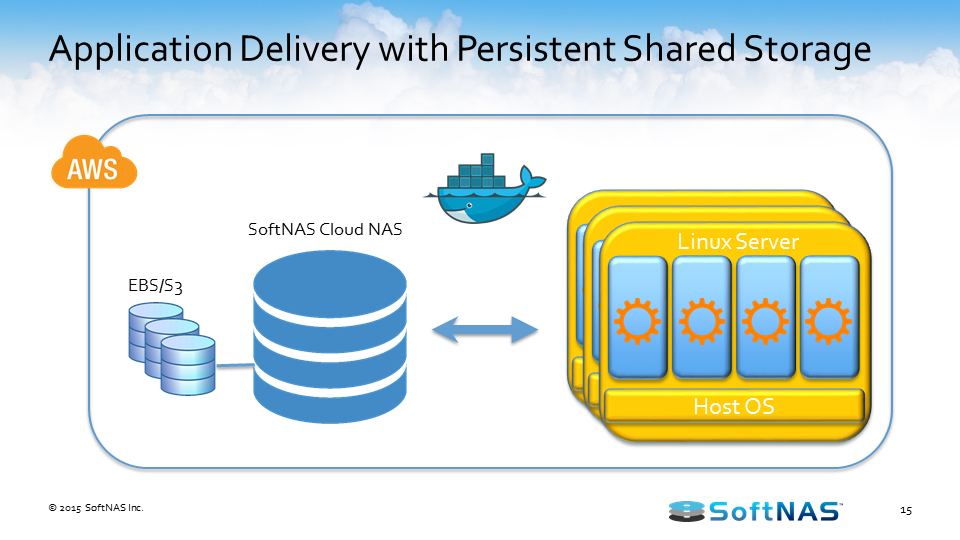

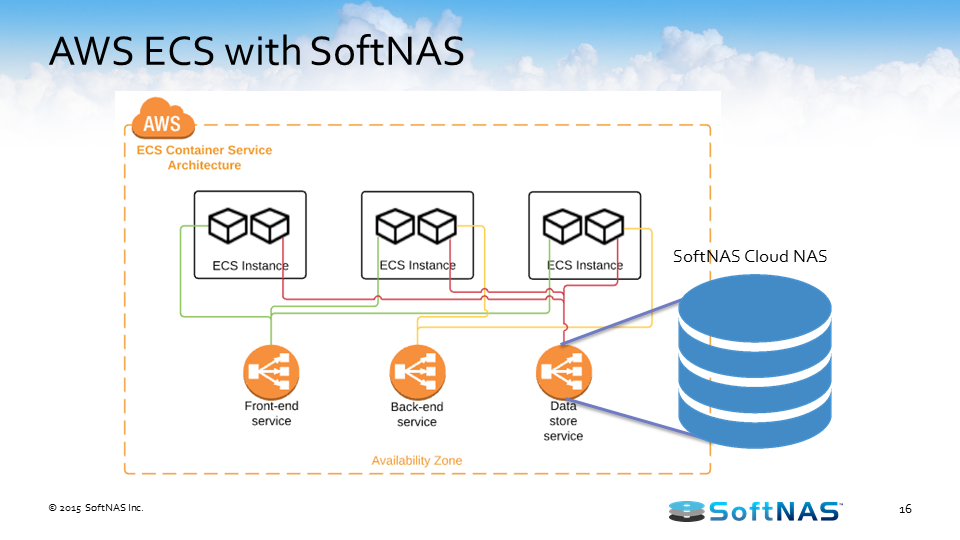

When you are looking at a more holistic solution, you need to think in advance. On your on-premises environment, you’re doing HA for infrastructure. How do you migrate that HA for infrastructure over to your cloud environment? And that’s where the cloud NAS comes in.

Cloud NAS solves many of the security and design concerns.

We have Keith Son, with whom we did a webinar a couple of weeks ago. It might have been last week; I don’t remember off the top of my head. Keith loves the software and constantly comes to us asking for different ways that he could tweak or use our software more.

Keith says, “Selecting SoftNAS has enabled us to quickly provision and manage storage, which has contributed to a hassle-free experience.” That’s what you want to hear when you come to design. It’s hassle-free. I don’t have to worry about it.

We also have a quote there from John Crites from Modus. John says that he’s found that SoftNAS is cost-effective and a secure solution for storing massive amounts of data in the AWS environment.

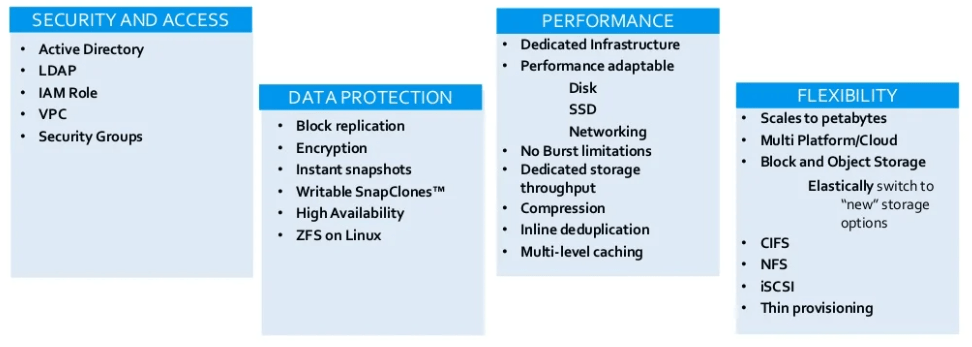

Cloud NAS addresses security and access concerns. You need to be able to tie into Active Directory. You need to be able to tie into LDAP. You need to be able to secure your environment using IAM roles. Why? Because I don’t want my secret keys to be visible to anybody. I want to be able to utilize the security that’s already provided by AWS and have it just flow through my app.

VPC security groups. We ensure that with your VPC and the security groups that you set up, only the users that you want have access to your infrastructure. From a data protection standpoint, there is block replication. How do we make sure that my data is somewhere else?

Data redundancy. We’ve been preaching that for the last 20 years. The only way I can make sure that my data is fully protected is that it’s redundant. In the cloud, although we’re given extended redundancy, how do I make sure that my data is faultlessly redundant? We’re talking about block replication. For data protection, we’re talking about encryption. How can you encrypt that data to make sure that even if someone did get access to it, they wouldn’t know what to do with it? It would be gobbledygook.

You need to have the ability to do instant snapshots. How can I go in, based on my scenario, and create an instant snapshot of my environment? Worst-case scenario, if anything happens, I have a point in time that I can quickly come back to. Writable snap clones. How do I stand up my data quickly? Worst-case scenario, if anything happens and I need to revert to a point before which I know I wasn’t compromised, how can I do that quickly?

High availability in ZFS and Linux. How do I protect my infrastructure underneath?

Then performance. A cloud NAS gives you dedicated infrastructure. That means that it is tunable. If my workload or use-case increases, I have the ability to tune my appliance to grow as my use-case grows. Performance and adaptability. From disk to SSD to networking, how can I make my environment or my storage adaptable to the performance that I would need? No burst limitations, dedicated throughput, compression, and deduplication are all things that we need to be considering as we go through this design process. The cloud NAS gives you the ability to do it.

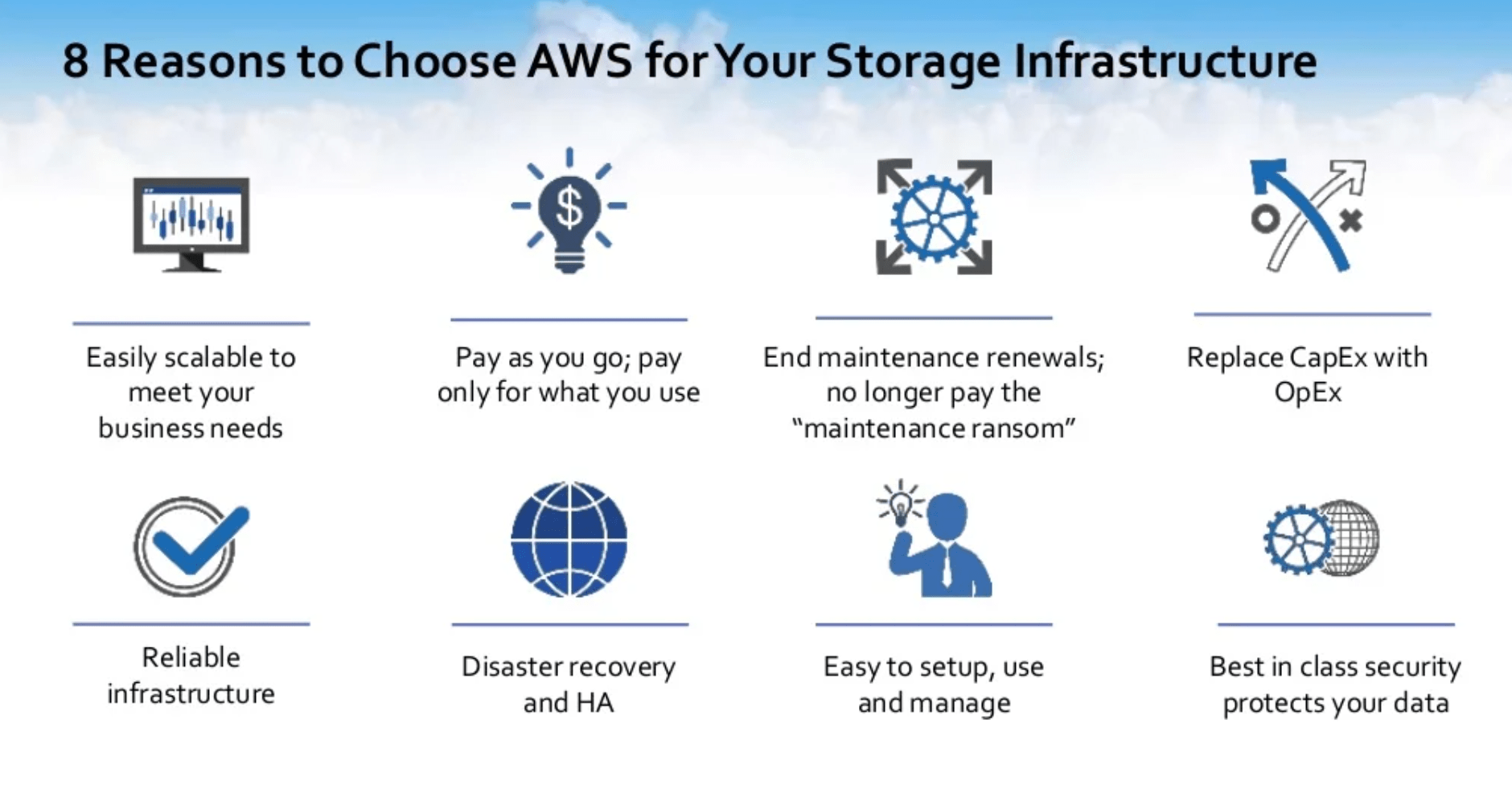

Then flexibility. What can I grow to? Can I scale from gigabyte to petabyte with the way that I have designed? Can I grow from petabytes to multiple petabytes? How do I make sure that I’ve designed with the thought of flexibility? The cloud NAS gives you the ability to do that.

We are also talking about multiplatform, multi-cloud, block, and object storage. Have I designed my environment so that I could switch to new storage options? Cloud NAS gives you the ability to do that.

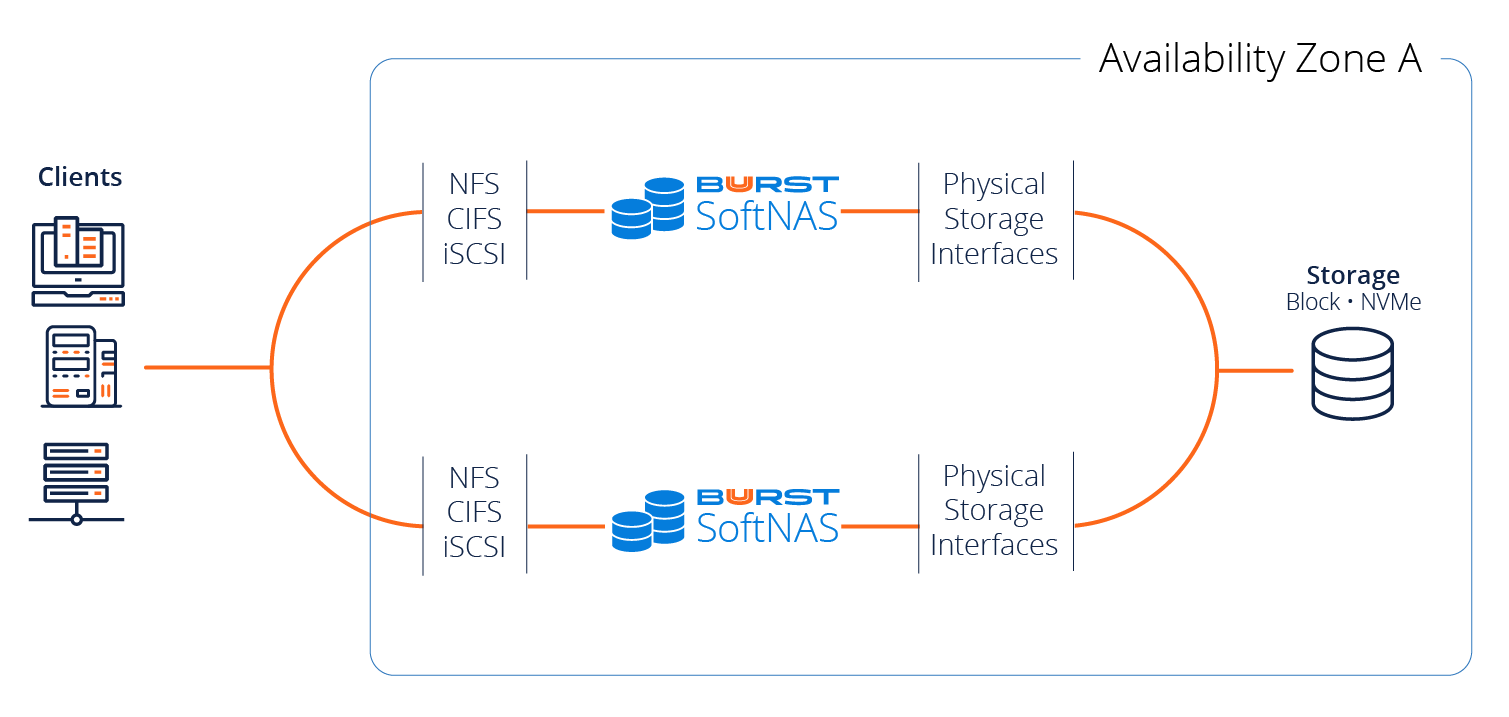

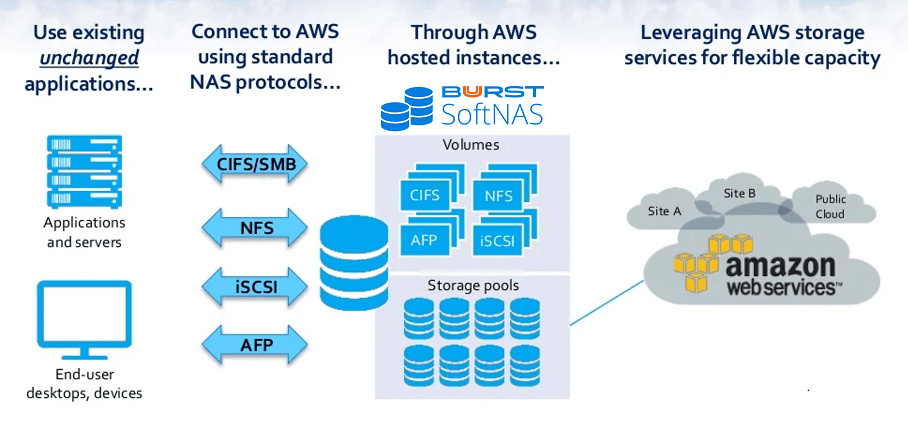

We also need to get to the point of the protocols. What protocols are supported, CIFS, NFS, and iSCSI? Can I then provision these systems? Yes. The cloud NAS gives you the ability to do that.

Easily Migrate Applications to AWS Cloud

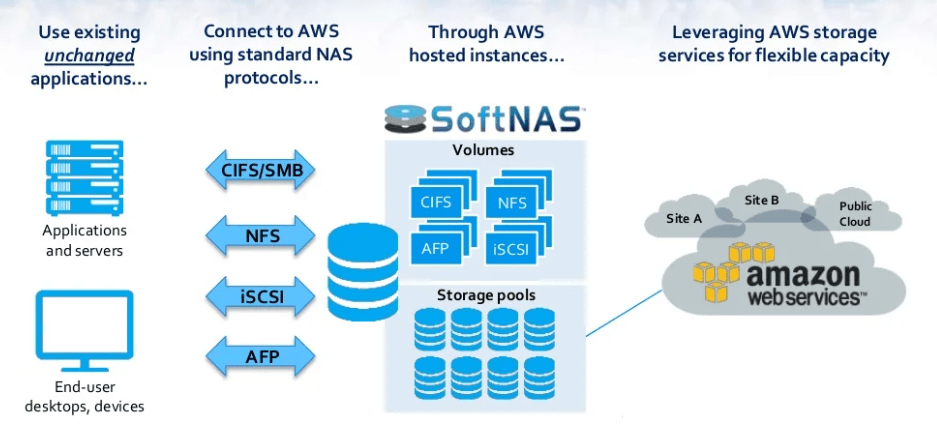

I just wanted to give a very quick overview of SoftNAS and what we do from a SoftNAS perspective. SoftNAS, as we said, is a cloud NAS. It’s the most downloaded and most utilized cloud NAS in the cloud.

We give you the ability to easily migrate those applications to AWS. You don’t need to change your applications at all. As long as your applications connect via CIFS or NFS or iSCSI or AFP, we are agnostic. Your applications would connect exactly the same way that they do on-premise. We give you the ability to address cloud storage, and this is in the form of S3. It’s in the form of Provisioned IOPS. It’s in the form of gp2.

Anything that Amazon has available as storage, SoftNAS gives you the ability to aggregate into a storage pool and then share it out via volumes that are CIFS, NFS, or iSCSI, giving you the ability to have your applications move seamlessly.

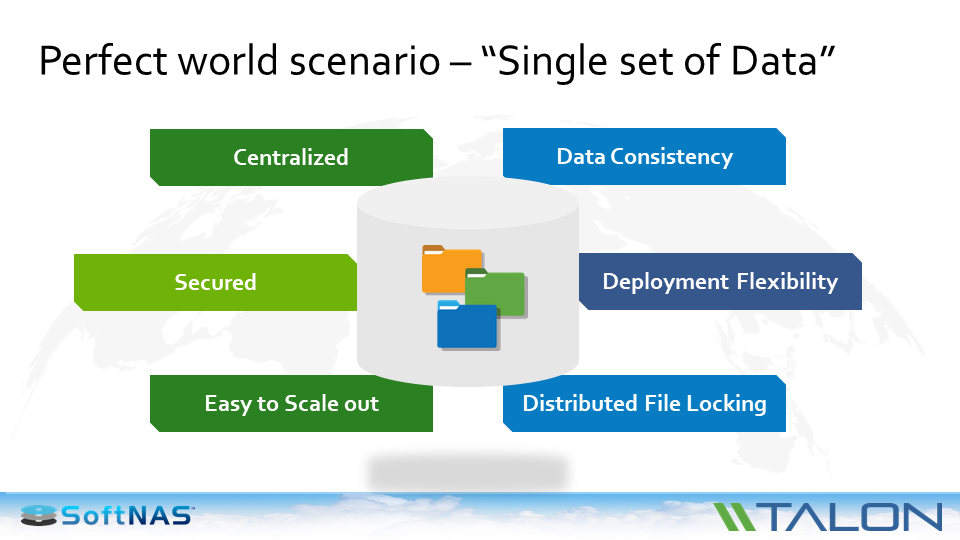

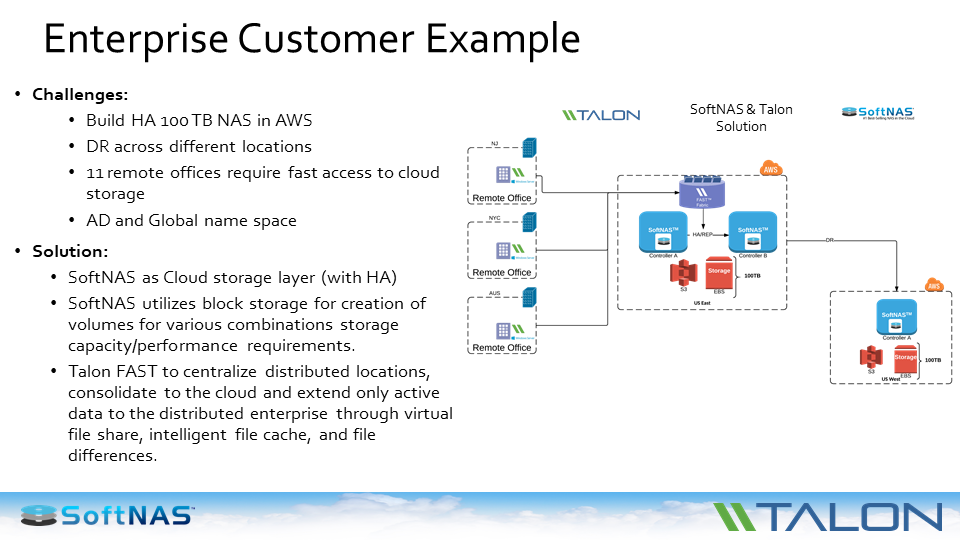

These are some of our technology partners. We work hand-in-hand with Talon, SanDisk, NetApp, and SwiftStack. All of these companies love our software, and we deliver solutions together.

We talked about some of the functions that we have that are native to SoftNAS. With the Cloud-Native IAM Role Integration, we have the ability to do that – to encrypt your data at rest or in transit.

Then there’s firewall security; we have the ability to utilize that too. From a data protection standpoint, it’s a copy-on-write file system, so it helps ensure the integrity of the data in your storage.

We’re talking about instant storage snapshots, whether manual or scheduled, and rapid snapshot rollback. We support RAID across the board with all AWS EBS storage, and also with S3-backed storage.

From a built-in snapshot scenario for your end-users, one of the things that our customers love is the Windows Previous Versions support. IT teams love this, because if they have a user that made a mistake, instead of having to go back in and recover a whole volume to get the data back, they just tell the user to go ahead and do it.

Go back in, right-click on the file, open Windows Previous Versions, and restore that previous version. This gives your users something that they are used to on-premise and that they have immediately within the cloud.

High performance: scaling up to gigabytes per second of throughput. For performance, we talked about no burst limits. We talked about protecting against split-brain on HA failover, and giving you the ability to migrate those applications without writing or rewriting a single piece of code.

We talked about automation and the ability to utilize our REST APIs. There is very robust REST API cloud integration using ARM or CloudFormation templates, available in every AWS region.