“10 Architectural Requirements for Protecting Business Data in the Cloud”. Download the full slide deck on SlideShare.

Designing a cloud data system architecture that protects your precious data when operating business-critical applications and workloads in the cloud is of paramount importance to cloud architects today. Ensuring the high availability of your company’s applications and protecting business data is challenging, and somewhat different from doing so in traditional on-premises data centers.

For most companies with hundreds to thousands of applications, it’s impractical to build all of these important capabilities into every application’s architecture. The cloud storage infrastructure typically provides only a subset of what’s required to properly protect business data and applications. So how do you ensure your business data and applications are architected correctly and protected in the cloud?

In this post, we cover best practices for protecting business data in the cloud, how to design a protected and highly available cloud system architecture, and lessons learned from architecting thousands of cloud system architectures.

10 Architectural Requirements for Protecting Business Data in the Cloud!

We’re going to be talking about 10 architectural requirements for protecting your data in the cloud. We will cover some best practices and some lessons learned from what we’ve done for our customers in the cloud. Finally, we will tell you a little bit about our product, SoftNAS Cloud NAS, and how it works.

1) High Availability (HA)

High Availability (HA) is one of the most important of these requirements. It’s important to understand that not all HA solutions are created equal. The key aspect of high availability is making sure that your data is always accessible, which requires some type of replication from point A to point B.

Depending on which public cloud infrastructure you run this on, you also need to ensure that your HA solution properly supports the redundancy available on that platform. Take Amazon Web Services, which offers multiple availability zones. You would want an HA solution that can run in different zones and provide availability across those zones, so that all of your data is stored in more than one zone.

You want greater uptime than a single compute instance can provide. The high availability that SoftNAS offers today, for example, allows you to deploy instances in separate availability zones on a public cloud infrastructure, choose different storage types, and replicate data between the two instances. If an incident requires a failover, your data should be available to you within 30 to 60 seconds.

You also want to ensure that whatever HA solution you choose avoids what we like to call the split-brain scenario, in which the nodes’ copies of the data diverge: data can end up on one node that is not on the other, or newer data can end up on the target node after an HA takeover. Whatever high-availability solution you find, make sure it meets the requirement of preventing split-brain between nodes.
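One common way to prevent split-brain is quorum voting, sketched here as a generic technique rather than a description of how SoftNAS or any particular product does it. A node may become the active node only if it collects a strict majority of votes, and each voter pledges its vote to at most one candidate. The three-voter layout (two storage nodes plus a tie-breaking witness) and all names below are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Voter:
    """One vote in the cluster: each storage node and the witness holds one."""
    name: str
    granted_to: Optional[str] = None  # candidate this vote is currently pledged to

    def request_vote(self, candidate: str) -> bool:
        # Pledge the vote to at most one candidate. A real system would attach a
        # lease that expires and persist the pledge; both are omitted in this sketch.
        if self.granted_to in (None, candidate):
            self.granted_to = candidate
            return True
        return False


def try_become_active(candidate: str, reachable: List[Voter], cluster_size: int) -> bool:
    """Return True only if the candidate collects a strict majority of all votes."""
    votes = sum(voter.request_vote(candidate) for voter in reachable)
    return votes > cluster_size // 2


if __name__ == "__main__":
    node_a, node_b, witness = Voter("node-a"), Voter("node-b"), Voter("witness")
    # Partition: the two nodes cannot see each other, but both can reach the witness.
    print(try_become_active("node-b", [node_b, witness], 3))  # wins 2 of 3 votes
    print(try_become_active("node-a", [node_a, witness], 3))  # witness already pledged
```

Because any two majorities of three voters share at least one voter, and that voter pledges only once, at most one node can become active at a time, which is exactly the property that rules out split-brain.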

2) Data Protection

The next piece that we want to cover is data protection. I want to stress that there are multiple ways to interpret this requirement.

We are looking at data protection from the storage architecture standpoint. You want to find a solution that supports snapshots and rollbacks. We look at snapshots as a form of insurance: you buy it, but you hope you never have to use it.

I also want to point out that snapshots do not take the place of a backup. You want to find a solution that can replicate your data, whether that means replicating from on-premises to a cloud environment, between regions within a public cloud, or from one public cloud platform to another so that you have a copy of the data in a second environment.
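Replication does not have to copy everything every time. A common approach, sketched below as a generic technique and not as SoftNAS’s actual replication engine, is to compare two point-in-time snapshots and ship only the blocks that changed or were deleted. Snapshots are modeled here as hypothetical dictionaries mapping block numbers to block contents.

```python
from typing import Dict, Set, Tuple

BlockMap = Dict[int, bytes]  # hypothetical snapshot model: block number -> contents


def snapshot_delta(old: BlockMap, new: BlockMap) -> Tuple[BlockMap, Set[int]]:
    """Return (changed_or_added_blocks, deleted_block_numbers) between two snapshots."""
    changed = {blk: data for blk, data in new.items() if old.get(blk) != data}
    deleted = set(old) - set(new)
    return changed, deleted


def apply_delta(replica: BlockMap, changed: BlockMap, deleted: Set[int]) -> None:
    """Bring a replica that matches the old snapshot up to date, in place."""
    replica.update(changed)
    for blk in deleted:
        replica.pop(blk, None)


if __name__ == "__main__":
    snap1 = {0: b"boot", 1: b"data-v1", 2: b"tmp"}
    snap2 = {0: b"boot", 1: b"data-v2", 3: b"new"}
    replica = dict(snap1)  # the remote site already holds snap1
    changed, deleted = snapshot_delta(snap1, snap2)
    apply_delta(replica, changed, deleted)
    print(changed, deleted, replica == snap2)
```

Only two blocks and one deletion cross the wire instead of the whole volume. Production systems usually get the changed-block list from file system metadata rather than comparing contents, but the replica ends up in the same state.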

You want a solution that provides RAID mirroring and data efficiency features such as compression and deduplication. A copy-on-write file system is key to avoiding data integrity risks, and support for features like Windows Previous Versions makes rollbacks easy. These are all key aspects of a solution that provides proper data protection.
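To show why copy-on-write makes snapshots cheap and rollbacks safe, here is a toy in-memory model. It is a hypothetical illustration of the idea, not how any real file system (or SoftNAS) stores data on disk: a snapshot copies only the block map, and writes repoint map entries instead of overwriting old blocks.

```python
from typing import Dict


class CowVolume:
    """Toy copy-on-write volume: snapshots share unchanged blocks with live data."""

    def __init__(self) -> None:
        self._live: Dict[int, bytes] = {}                 # block number -> contents
        self._snapshots: Dict[str, Dict[int, bytes]] = {}

    def write(self, block_no: int, data: bytes) -> None:
        # Repoint the live map entry at the new data instead of modifying the old
        # block in place, so any snapshot still referencing the old block keeps it.
        self._live[block_no] = data

    def read(self, block_no: int) -> bytes:
        return self._live.get(block_no, b"")

    def snapshot(self, name: str) -> None:
        # Copy only the block map (references), not the block data itself.
        self._snapshots[name] = dict(self._live)

    def rollback(self, name: str) -> None:
        # Repoint the live map at the blocks the snapshot references.
        self._live = dict(self._snapshots[name])


if __name__ == "__main__":
    vol = CowVolume()
    vol.write(0, b"v1")
    vol.snapshot("before-upgrade")
    vol.write(0, b"v2")
    vol.rollback("before-upgrade")
    print(vol.read(0))
```

Note that the snapshot and the live data share the same underlying storage, which is exactly why snapshots do not replace a backup: lose the storage and you lose both.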

3) Data Security and Access Control

Data security and access control are at the top of everyone’s mind these days, so you want a solution that supports encryption. Make sure the data is encrypted not only at rest but also in flight, during every stage of data transmission.

You also need proper authentication and authorization, whether that’s integration with LDAP for NFS permissions, for example, or leveraging Active Directory for Windows environments. Make sure that whatever solution you choose supports the native IAM roles or service principals available on the different public cloud platforms.

Use firewalls and restrict who can access which resources to limit your exposure.
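In practice you would express these rules with your platform’s own firewall features (for example, AWS security groups or Azure network security groups), but the underlying idea is a default-deny allow-list. The sketch below, using hypothetical subnets, checks whether a client may reach the NFS (TCP 2049) or SMB/CIFS (TCP 445) ports.

```python
import ipaddress

# Hypothetical allow-list: only these networks may reach the file-sharing ports.
ALLOWED_NETWORKS = [
    ipaddress.ip_network("10.0.1.0/24"),      # e.g. application subnet
    ipaddress.ip_network("192.168.50.0/28"),  # e.g. administrators' VPN range
]
ALLOWED_PORTS = {2049, 445}  # NFS and SMB/CIFS default TCP ports


def is_allowed(source_ip: str, port: int) -> bool:
    """Default deny: permit only allow-listed ports from allow-listed networks."""
    if port not in ALLOWED_PORTS:
        return False
    addr = ipaddress.ip_address(source_ip)
    # An IPv6 address simply never matches an IPv4 network here.
    return any(addr in net for net in ALLOWED_NETWORKS)


if __name__ == "__main__":
    for ip, port in [("10.0.1.25", 2049), ("10.0.2.25", 2049), ("10.0.1.25", 22)]:
        print(ip, port, is_allowed(ip, port))
```

Everything not explicitly listed is rejected, which keeps the exposure as small as the workload allows.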

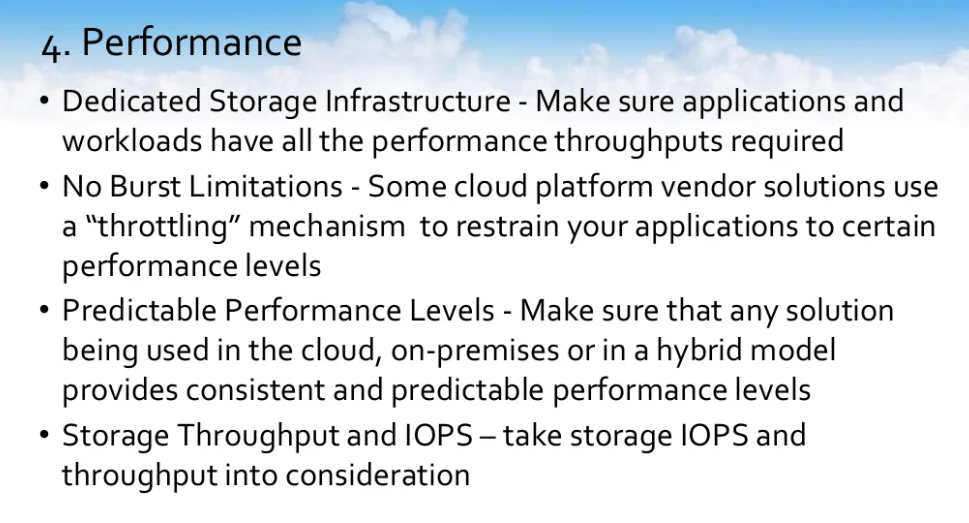

4) Performance

Performance is always a top concern. When evaluating a solution, make sure it uses dedicated storage infrastructure so that all applications get the throughput they require.

Look for a solution with no burst limitations. Some cloud platform vendor solutions use a throttling mechanism that gives you only a limited level of performance. You need a solution that gives you guaranteed, predictable performance.

You want a solution whose performance on day 300, with 5 million files, is the same as on day one with 100,000 files. Performance has to be predictable; it can’t change. Before you deploy a solution, make sure you understand your actual storage throughput and IOPS requirements. This is the key aspect.

A lot of people look to deploy a solution without really understanding their performance requirements. Sometimes we see people undersize the solution, but we frequently see people oversize it as well. Take the time to understand your requirements before you deploy.
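A quick way to sanity-check sizing is the relationship throughput = IOPS × I/O size. The sketch below uses that formula to compare a workload’s requirements with a candidate volume’s rated limits. The workload figures and volume ratings are hypothetical placeholders, not published specs for any particular cloud volume type.

```python
from dataclasses import dataclass


@dataclass
class SizingResult:
    required_throughput_mib_s: float
    iops_ok: bool
    throughput_ok: bool
    iops_headroom: float  # rated IOPS / required IOPS; far above 1.0 suggests oversizing


def required_throughput_mib_s(iops: int, io_size_kib: int) -> float:
    """Throughput (MiB/s) = IOPS x I/O size (KiB) / 1024."""
    return iops * io_size_kib / 1024


def check_volume(required_iops: int, io_size_kib: int,
                 rated_iops: int, rated_throughput_mib_s: float) -> SizingResult:
    need = required_throughput_mib_s(required_iops, io_size_kib)
    return SizingResult(
        required_throughput_mib_s=need,
        iops_ok=rated_iops >= required_iops,
        throughput_ok=rated_throughput_mib_s >= need,
        iops_headroom=rated_iops / required_iops,
    )


if __name__ == "__main__":
    # Hypothetical workload: 4,000 IOPS at 64 KiB per I/O needs 250 MiB/s.
    print(check_volume(4000, 64, rated_iops=3000, rated_throughput_mib_s=125))
    print(check_volume(4000, 64, rated_iops=16000, rated_throughput_mib_s=1000))
```

The first hypothetical volume is undersized on both IOPS and throughput; the second passes but with four times the required IOPS, which is the kind of headroom worth questioning before paying for it.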

5) Flexibility and Usability

You want a solution that’s very flexible from a usability standpoint: something that can run on multiple cloud platforms and strikes a good balance between cost and performance. It should offer broad support for protocols like CIFS, NFS, iSCSI, and AFP, some type of automation through cloud integration, and support for automation via scripts, APIs, and command-line tools.

You also want something that’s software-defined and that allows you to create clones of your data, so that you can test with real production data in a development environment. This is a key capability we have found: it lets you see what your real performance is going to look like before going into production.

6) Adaptability

You need a solution that’s very adaptable. It should support all of the instance and VM types available on different platforms, whether you want to use high-memory instances or your requirements mandate some type of ephemeral storage for your application.

Whatever the instance or VM type may be, you want a solution that can work with it. It should also support all the available storage types, from block storage, such as EBS on Amazon or Premium Storage on Microsoft Azure, to object storage.

You want to be able to leverage lower-cost object storage, like Azure Blob storage or Amazon S3, for data that doesn’t have the same throughput and IOPS requirements as your other data.

This goes back to my point about understanding your throughput and IOPS requirements, so that you can select the proper storage for your infrastructure. You want something that supports on-premises, cloud, and hybrid cloud environments, offers multiple-cloud support, and can adapt as your requirements change.
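One simple way to decide which data belongs on lower-cost object storage is an access-recency policy. The thresholds and tier names below are hypothetical; in practice you would derive them from your measured throughput and IOPS requirements and from how often each data set is actually read.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical policy thresholds; tune them to your own access patterns.
HOT_WINDOW = timedelta(days=30)
COOL_WINDOW = timedelta(days=180)


def choose_tier(last_access: datetime, now: datetime) -> str:
    """Pick a storage tier from how recently a file was accessed."""
    age = now - last_access
    if age <= HOT_WINDOW:
        return "block"        # e.g. EBS or Azure Premium Storage for active data
    if age <= COOL_WINDOW:
        return "object-hot"   # e.g. Amazon S3 or Azure hot Blob storage
    return "object-cool"      # e.g. Azure cool Blob storage for rarely read data


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    for days in (1, 90, 365):
        print(days, choose_tier(now - timedelta(days=days), now))
```

Keeping the policy in one small function makes it easy to revisit as requirements change, which is the adaptability this section is about.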

7) Data Expansion Capability

You need a solution that can expand as you grow. As your storage needs grow, you want to be able to extend capacity on the fly. This is one of the huge benefits of a software-defined solution running on cloud infrastructure: there is no more rip and replace to extend storage. You can just attach more disks and extend your usable data sets.

This also means dynamic storage capacity and support for very large numbers of files and directories. We’ve seen certain solutions whose performance starts to degrade once they reach a million files. You need something that can handle billions of files and petabytes of data, so that you know what you deploy today will meet your data needs five years from now.

8) Support, Testing, and Validation

You need a solution with a support safety net: 24/7/365 availability, different levels of support, and access through multiple channels.

You would probably want a solution that offers design support and a free trial or proof-of-concept version. Find out what guarantees, warranties, and SLAs different solutions can provide. Look for monitoring integration, such as with Amazon CloudWatch or Azure Monitor, along with uptime reporting and integration of all your audit logs and system logs.

Make sure that whatever solution you choose can handle all of your troubleshooting needs. How will a vendor stand behind its offering? What’s its guarantee? Get a documented guarantee from each vendor that spells out exactly what’s covered, what’s not covered, and how a failure is handled from the vendor’s side.

9) Enterprise Readiness

You need to make sure that whatever solution you choose to deploy is enterprise-ready. You need something that can scale to billions of files, because we’re long past millions of files. We are dealing with customers who have billions of files and petabytes of data.

It should be highly resilient and support a broad range of applications and workloads. It should help you meet your DR requirements in the cloud and give you reporting and analytics on the data you have deployed.

10) Cloud Native

Is the solution cloud-native? Was it built from the ground up to reside in the public cloud, or was it converted to run in a public cloud? How easy is it to move legacy applications onto the solution in the cloud?

You should outline your cloud platform requirements. Take the time to honestly outline your costs and your company’s requirements for the public cloud. Are you doing this to save money or to get better performance? Maybe you’re closing a data center. Maybe your existing hardware NAS is up for a maintenance renewal, or it requires a hardware upgrade because it is no longer supported. Whatever your reasons, they are very important to understand.

Look for a solution with positive product reviews. If you are searching AWS Marketplace for solutions, Amazon is a great place to find reviews.

Whether you’re deploying a software solution from the marketplace or buying a novel on Amazon.com, read the existing reviews. Look at third-party testing and benchmark results, and I would encourage you to run your own tests and benchmarks. Look at industry analysts’ reports and customer and partner testimonials to find out whether the vendor is trustworthy.

About SoftNAS Cloud NAS

I’d like to take just a few seconds now to talk about SoftNAS Cloud NAS. SoftNAS NAS Filer is a fully featured enterprise cloud NAS for primary data storage.

It allows you to take existing applications residing on-premises that need legacy protocol support, like NFS, CIFS, or iSCSI, and move them to a public cloud environment. The solution lets you leverage all of the underlying storage of the public cloud infrastructure, whether object storage or block storage; you can use both and mix and match.

Our solution runs not only on VMware on-premises but also in public cloud environments such as AWS and Microsoft Azure. We offer full cross-zone high availability on Amazon and full cross-network high availability on Microsoft Azure.

We support all types of storage on all platforms. Whether that’s hot or cool Blob storage on Azure or magnetic EBS on AWS, we allow you to create pools on top of it, essentially giving you file server-like access to these storage media.