Meeting Cloud File Storage Cost and Performance Goals – Harder than You Think

According to Gartner, 80% of enterprises will have shut down their traditional data centers by 2025, and 10% already have. We have seen this shift firsthand: since 2013 we have helped thousands of these businesses migrate workloads and business-critical data from on-premises data centers into the cloud. Most of those workloads have been running 24x7 for more than five years. Some of them have been digitally transformed (code for “rewritten to run natively in the cloud”).

The biggest challenge in adopting the cloud isn’t the technology shift – it’s finding the right balance of storage cost vs performance and availability that justifies moving data to the cloud. We all have a learning curve as we migrate major workloads into the cloud. That’s to be expected as there are many choices to make – some more critical than others.

Applications in the cloud

Many of our largest customers operate mission-critical, revenue-generating applications in the cloud today. These businesses rely on the applications and their underlying data for revenue growth, customer satisfaction, and retention. Such systems cannot tolerate unplanned downtime. They must perform at expected levels consistently, even under increasingly heavy loads, unpredictable interference from noisy cloud neighbors, occasional cloud hardware failures, sporadic cloud network glitches, and other anomalies that just come with the territory of large-scale data center operations.

In order to meet customer and business SLAs, cloud-based workloads must be carefully designed. At the core of these designs is how data will be handled. Choosing the right file service component is one of the critical decisions a cloud architect must make.

Application performance, costs, and availability

For customers to remain happy, application performance must be maintained. Easier said than done when you no longer control the IT infrastructure in the cloud.

So how do you balance these competing objectives around cost, performance, and availability when you no longer control the hardware or virtualization layers in your own data center? And how can these variables be controlled and adapted over time to keep things in balance? In a word – control. You must correctly choose where to give up control and where to maintain control over key aspects of the infrastructure stack supporting each workload.

One allure of the cloud is that it’s (supposedly) going to simplify everything into easily managed services, eliminating the worry about IT infrastructure forever. For non-critical use cases, managed services can, in fact, be a great solution. But what about when you need to control costs, performance, and availability?

Unfortunately, managed services must be designed and delivered for the “masses”, which means tradeoffs and compromises must be made. And to make these managed services profitable, significant margins must be built into the pricing models to ensure the cloud provider can grow and maintain them.

In the case of shared file services in the public cloud, such as AWS Elastic File System (EFS) and Azure NetApp Files (ANF), performance throttling is required to prevent thousands of customer tenants from overrunning the limited resources that are actually available. To get more performance, you must purchase and maintain more storage capacity, whether you actually need that extra storage or not. As your storage capacity inevitably grows, so do the costs. To make matters worse, much of that data is inactive most of the time, so every month you pay to store data that you rarely, if ever, access. The cloud vendors have no incentive to help you reduce these excessive storage costs, which keep rising as your data grows each day.
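To make the capacity-for-performance coupling concrete, here is a minimal sketch. It assumes a simplified model in which throughput scales linearly with provisioned capacity. The rate of 16 MiB/s per TiB, the 2 TiB dataset, and the 256 MiB/s target are illustrative placeholders, not figures from any provider's price sheet, and the function names are hypothetical.

```python
def capacity_needed_tib(target_mibps: float, mibps_per_tib: float) -> float:
    """Capacity that must be provisioned to reach a throughput target,
    assuming throughput scales linearly with provisioned capacity."""
    if target_mibps < 0 or mibps_per_tib <= 0:
        raise ValueError("target must be >= 0 and rate must be > 0")
    return target_mibps / mibps_per_tib


def idle_capacity_tib(data_tib: float, target_mibps: float, mibps_per_tib: float) -> float:
    """Capacity bought only for performance, beyond what the data needs."""
    needed = capacity_needed_tib(target_mibps, mibps_per_tib)
    return max(0.0, needed - data_tib)


# Illustrative placeholder values (assumptions, not vendor figures).
DATA_TIB = 2.0          # actual data to store
TARGET_MIBPS = 256.0    # throughput the application needs
MIBPS_PER_TIB = 16.0    # assumed throughput granted per provisioned TiB

provisioned = max(DATA_TIB, capacity_needed_tib(TARGET_MIBPS, MIBPS_PER_TIB))
idle = idle_capacity_tib(DATA_TIB, TARGET_MIBPS, MIBPS_PER_TIB)
print(f"Provision {provisioned:.1f} TiB to store {DATA_TIB:.1f} TiB; "
      f"{idle:.1f} TiB is paid for but unused.")
```

Under these assumed numbers, most of the provisioned capacity exists only to unlock throughput. Real services use their own throttling rules, so treat this as a way to reason about the tradeoff rather than as a pricing calculator.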

After watching this movie play out with customers for many years and working closely with businesses of every size across 39 countries, we at Buurst™ decided to address these issues head-on. Instead of charging customers what is effectively a “storage tax” for their growing cloud storage capacity, we changed everything by offering Unlimited Capacity. That is, with SoftNAS® you can store an unlimited amount of file data in the cloud at no extra cost (aside from the underlying cloud block and object storage itself).

SoftNAS has always offered both data compression and deduplication, which together typically reduce cloud storage by 50% or more. Then we added automatic data tiering, which recognizes inactive and stale data and transparently archives it to less expensive storage, saving up to an additional 67% on monthly cloud storage costs.
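As a rough illustration of how these two savings could combine, the sketch below applies a data-reduction ratio first and then a tiering discount to what remains. This is an assumed model: the text does not state how the two percentages compose. The 100 TB dataset and the price of 100 per TB per month are hypothetical placeholders, and the 50% and 67% inputs are the figures quoted above, with 67% being an upper bound.

```python
def monthly_storage_cost(raw_tb: float, price_per_tb: float,
                         data_reduction: float = 0.0,
                         tiering_savings: float = 0.0) -> float:
    """Estimate a monthly storage bill.

    data_reduction:  fraction of capacity removed by compression + dedup.
    tiering_savings: fraction of the remaining bill saved by tiering
                     (assumed to apply after data reduction).
    """
    for name, value in (("data_reduction", data_reduction),
                        ("tiering_savings", tiering_savings)):
        if not 0.0 <= value < 1.0:
            raise ValueError(f"{name} must be in [0, 1)")
    stored_tb = raw_tb * (1.0 - data_reduction)
    return stored_tb * price_per_tb * (1.0 - tiering_savings)


# Hypothetical inputs: 100 TB of raw data at 100 per TB per month.
RAW_TB, PRICE = 100.0, 100.0

baseline = monthly_storage_cost(RAW_TB, PRICE)
reduced = monthly_storage_cost(RAW_TB, PRICE, data_reduction=0.50)
tiered = monthly_storage_cost(RAW_TB, PRICE, data_reduction=0.50,
                              tiering_savings=0.67)

for label, cost in (("baseline", baseline), ("after reduction", reduced),
                    ("after reduction + tiering", tiered)):
    print(f"{label:>26}: {cost:,.0f} per month")
```

Actual results depend on how compressible the data is and how much of it is inactive, so the inputs are worth replacing with measurements from your own workload.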

Just like when you managed your file storage in your own data center, SoftNAS keeps you in control of your data and application performance. Instead of turning control over to the cloud vendors, you maintain total control over the file storage infrastructure. This gives you the flexibility to keep costs and performance in balance over time.

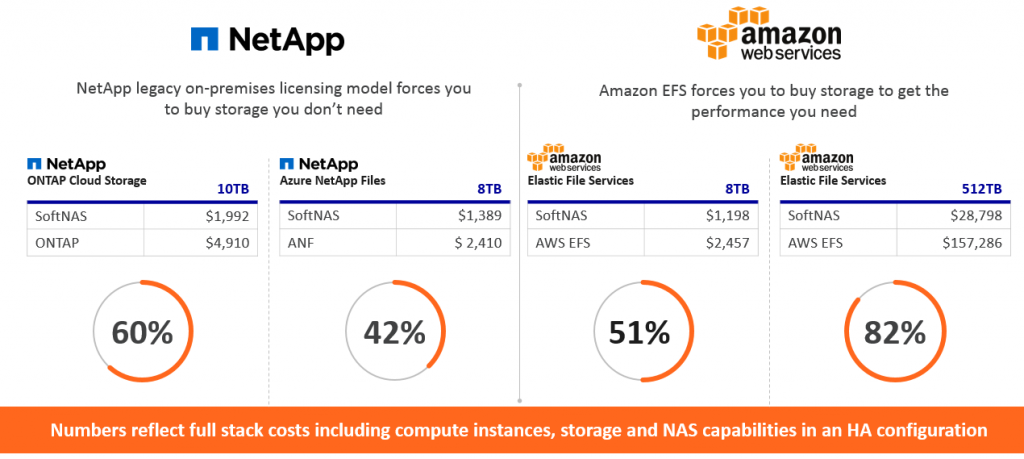

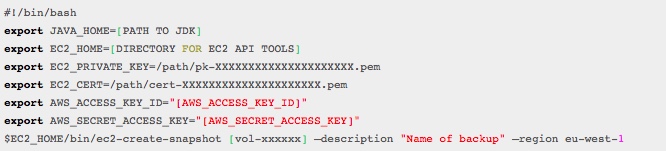

To put this in perspective, without taking data compression and deduplication into account yet, look at how Buurst SoftNAS costs compare:

SoftNAS vs NetApp ONTAP, Azure NetApp Files, and AWS EFS

These monthly savings really add up. And if your data is compressible and/or contains duplicates, you will save up to 50% more on cloud storage because the data is compressed and deduplicated automatically for you.

Fortunately, customers have alternatives to choose from today:

- GIVE UP CONTROL – use cloud file services like EFS or ANF, pay for both performance and capacity growth, and give up control over your data and your ability to deliver on SLAs consistently.

- KEEP CONTROL – keep control of your data and business with Buurst SoftNAS, and balance storage costs and performance to meet your SLAs and grow more profitably.

Sometimes cloud migration projects are so complex and daunting that it’s advantageous to take shortcuts just to get everything up and running as a first step. We commonly see customers choose cloud file services as an easy first stepping stone in a migration. These same customers then proceed to the next step: optimizing costs and performance to operate the business profitably in the cloud. That is when they contact Buurst to take back control, reduce costs, and meet SLAs.

As you contemplate how to reduce cloud operating costs while meeting the needs of the business, keep in mind that you face a pivotal decision: either keep control of your data, its costs, and its performance, or give that control up. For some use cases, the data capacity is small enough and the performance demands low enough that the convenience of files-as-a-service makes it the best choice. As you move business-critical workloads where costs, performance, and control matter, or where the datasets are large (tens to hundreds of terabytes or more), remember that Buurst never charges you a storage tax on your data and keeps you in control of your business destiny in the cloud.

Next steps:

Learn more about how SoftNAS can help you maintain control and balance storage costs and performance in the cloud.