On-Premise vs AWS Cloud NAS Storage – Which is Best for Your Business? Download the full slide deck on SlideShare.

The maintenance bill is due for your on-premises SAN/NAS, or it just increased. It’s hundreds of thousands or millions of dollars just to keep your existing storage gear under maintenance. And you know you will need to purchase more storage capacity for this aging hardware.

- Do you renew and commit another 3-5 years by paying the storage bill and further commit to a data center architecture?

- Do you make a forklift upgrade and buy new SAN/NAS gear or move to hyper-converged infrastructure?

- Do you move to the AWS cloud for greater flexibility and agility?

- Will you give up security and data protection?

Difference between on-premise vs Hyper-converged vs AWS

We’re going to talk about the on-premises-to-cloud conversation. We’ll show you the differences between on-premise, hyper-converged, and AWS, and tell you why you should choose AWS over the other two. Then we’ll tell you a little bit about SoftNAS and how it helps with your cloud migrations.

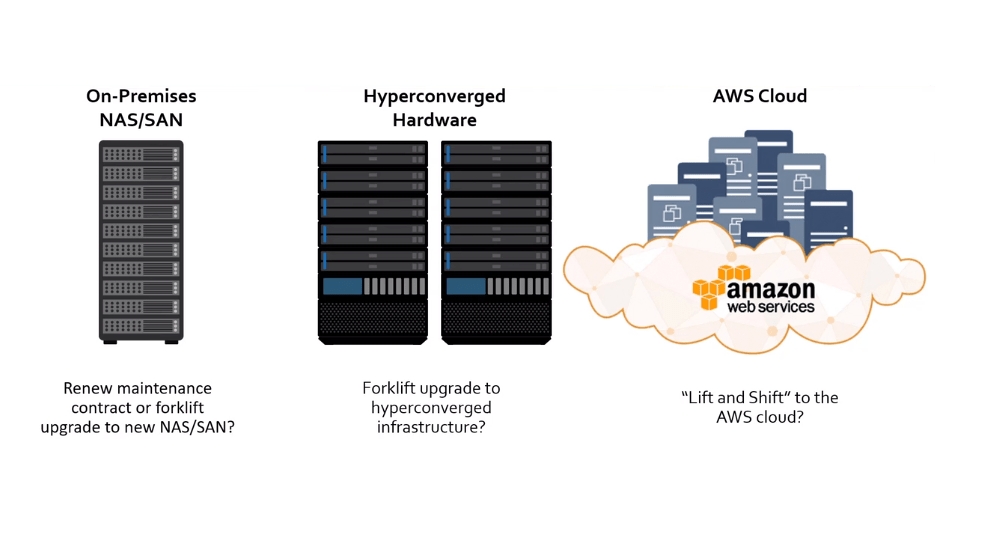

On-premise vs AWS: upgrade, paid maintenance, or public cloud?

Let’s focus on a storage dilemma facing IT teams and organizations all across the world.

The looming question is what do I do when my maintenance renewal comes up?

Teams are left with three options. First, you can stay on-premise and pay the renewal fee on your maintenance bill, which is a continuously increasing expense. Second, you can consider a forklift upgrade, where you buy a new NAS or SAN or move to a hyper-converged platform.

The drawback with the second option is that you still haven’t solved all your problems: you’re still on-prem, you’re still using hardware, and the next maintenance renewal is only about 12 to 24 months away. Third, you can lift and shift your data to AWS, where hardware is no longer required and data centers can be unplugged.

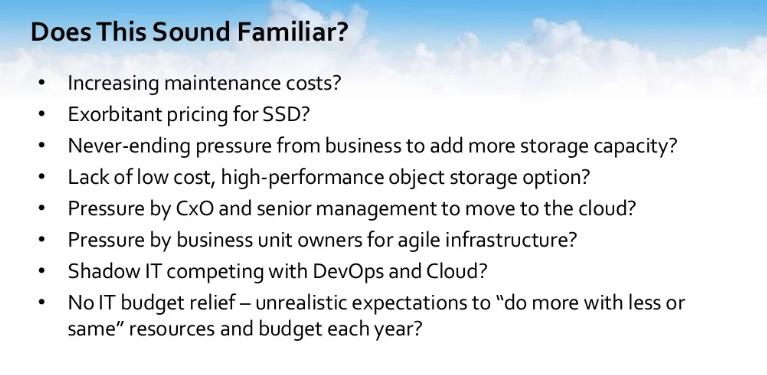

Maintenance costs keep increasing for support, for swapping disks, and for downtime. Pricing for SSD drives and SSD storage is exorbitant, and you pay an arm and a leg to ensure that your environment works as advertised. You have never-ending pressure from the business to add more storage capacity: we need it, we need it now, and we need more of it. There is a lack of low-cost, high-performance object storage, and business owners are pressuring you for agile infrastructure.

On-Premise vs Hyper-Converged vs AWS Cloud

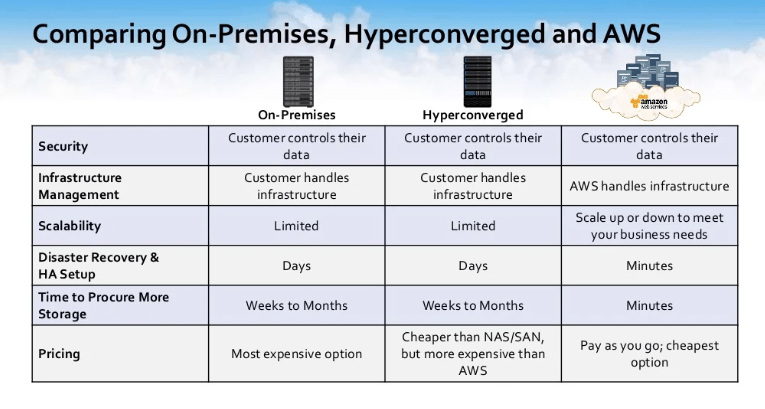

The business is growing; data is growing. You need to be way ahead of the curve just to keep up. Let’s do a stare-and-compare of all three options: On-Premise, Hyper-Converged, and AWS Cloud.

From a security standpoint, all three options deliver a secure environment. The rules and policies that you’ve already designed to protect your environment travel with you. From an infrastructure and management standpoint, on-premise and hyper-converged still require your current staff to maintain and update the underlying infrastructure.

That means your disk swaps, your networking, your racking and un-racking. AWS can help you limit this IT burden with its managed infrastructure.

From a scalability standpoint, I dare you to call your NAS or SAN provider and tell them that you think you bought too much storage last year and you want to give some back. In AWS, you get just that option. You can scale up or scale down, allowing you to grow as needed and not as estimated.

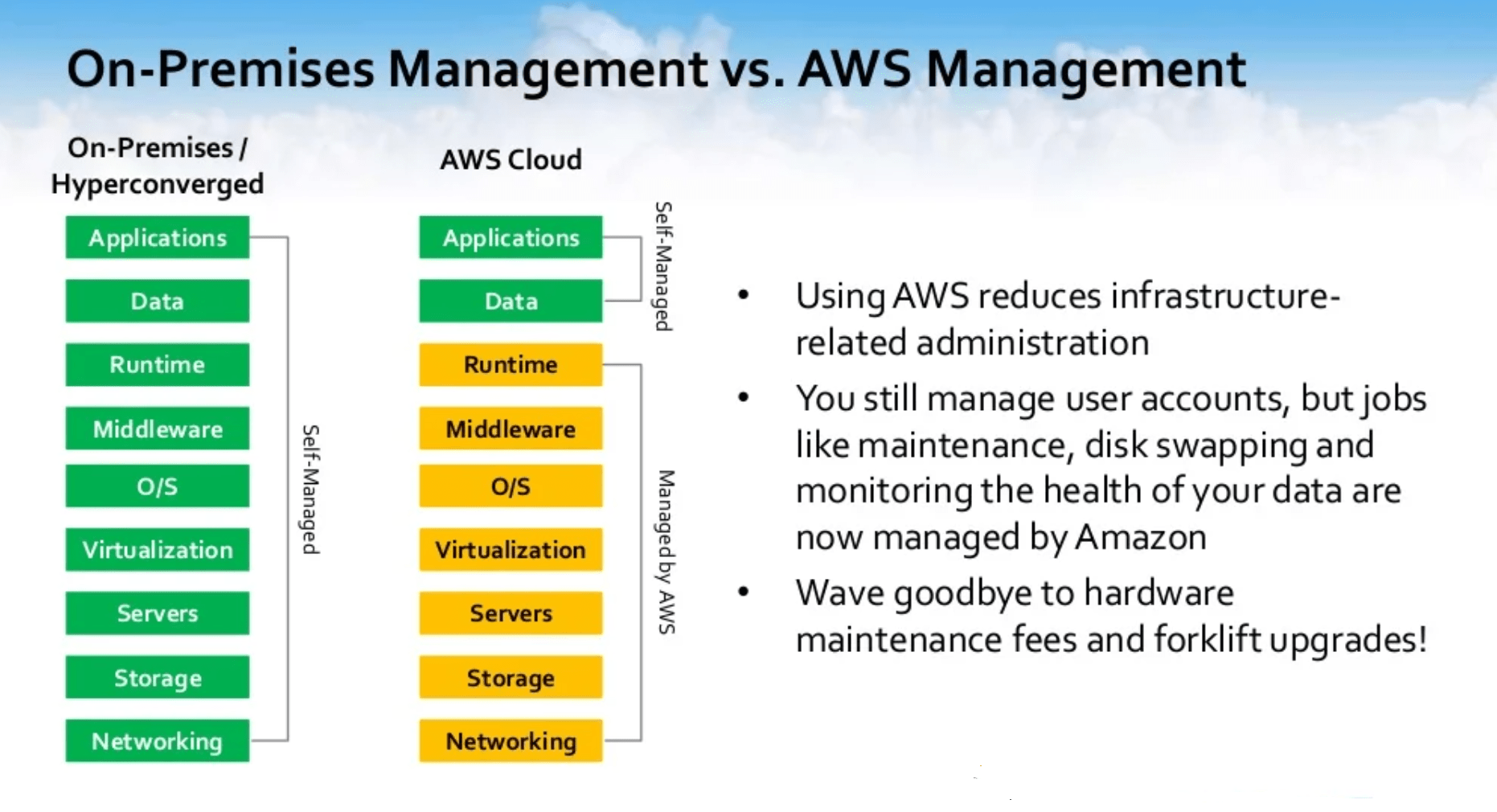

On-premise vs AWS Management

For your on-premise and hyper-converged systems, you control and manage everything from layer one all the way up to layer seven. In an AWS model, you can hand jobs like maintenance, disk swapping, and monitoring the health of your system over to AWS. You’re still in control of managing user accounts and access to your applications, but you can wave goodbye to hardware, maintenance fees, and forklift upgrades.

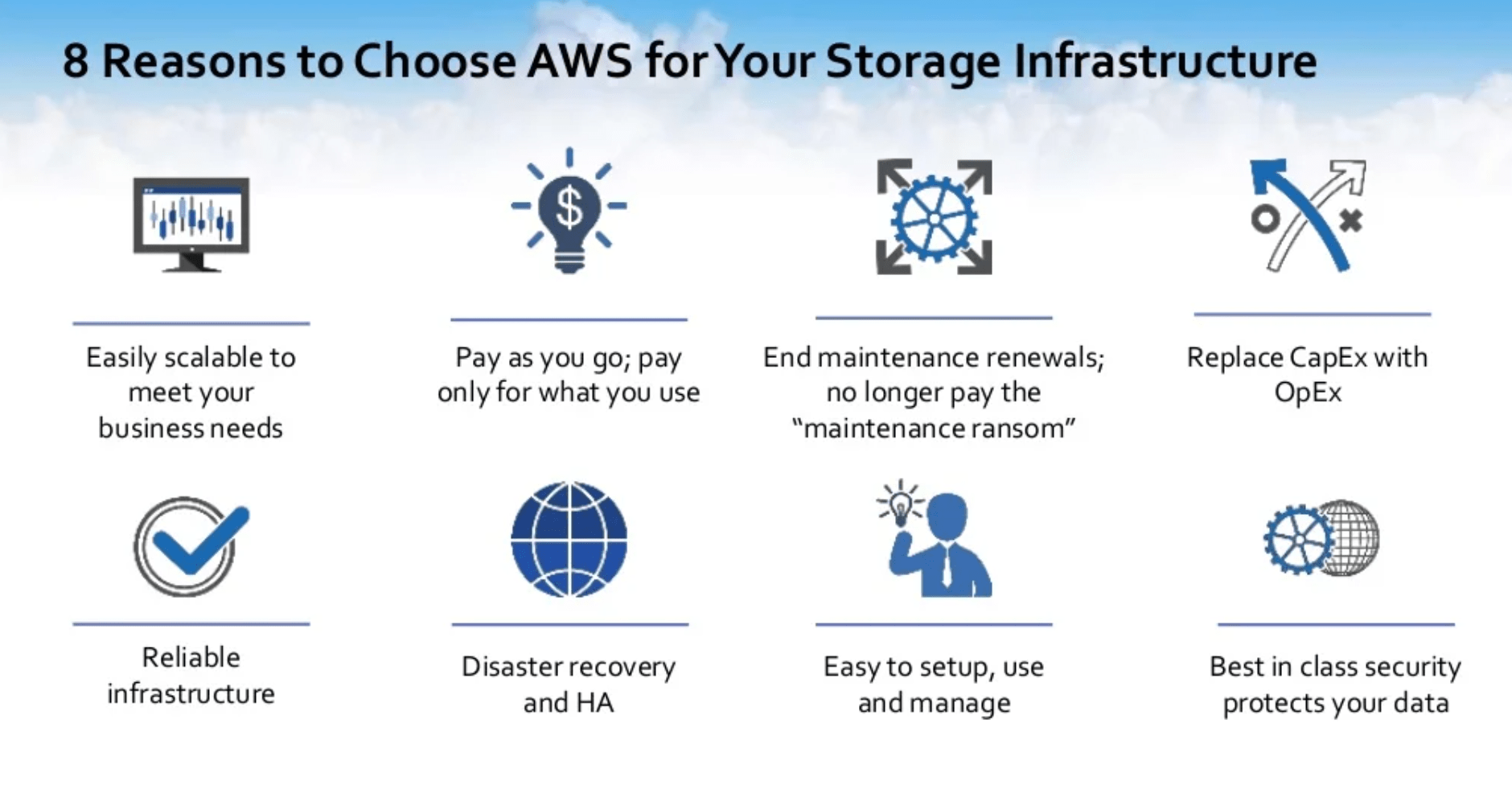

8 reasons to choose AWS for your storage infrastructure.

First, scalability. We talked about this earlier: AWS gives you the ability to grow your storage as needed, not as estimated. For the storage gurus, you know exactly what that means.

I’ve definitely sat in rooms with people doing predictive modeling on how much our data will grow over the next quarter or the next year. I can tell you for a fact that I have never been in a room where we came up with an accurate number. It’s always been an idea, a hope, a dream, a guess.

With AWS and the scalability it provides, you can grow your storage, pay for it as you go, and pay only for what you use. That in itself is worth its weight in gold. Not only that, you get a chance to end your maintenance renewals and stop paying the maintenance ransom, where the vendor holds access to your data until the ransom is paid.
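To make “pay only for what you use” concrete, here is a minimal sketch that compares buying for peak capacity up front with paying monthly for what is actually used. Every number in it (the starting capacity, the growth rate, the price per TB-month, and the use of the same price for both models) is a made-up assumption for illustration, not an AWS or vendor quote.

```python
from typing import Sequence

# All figures below are hypothetical, chosen only to illustrate the arithmetic.


def provisioned_cost(capacity_tb: float, price_per_tb_month: float, months: int) -> float:
    """Cost of buying peak capacity up front and carrying it every month."""
    return capacity_tb * price_per_tb_month * months


def pay_as_you_go_cost(monthly_usage_tb: Sequence[float], price_per_tb_month: float) -> float:
    """Cost when each month is billed on the capacity actually used."""
    return sum(usage * price_per_tb_month for usage in monthly_usage_tb)


# Assumed usage: starts at 100 TB and grows by 5 TB each month for a year.
usage = [100 + 5 * month for month in range(12)]
price = 25.0  # assumed dollars per TB-month, identical for both models

upfront = provisioned_cost(capacity_tb=max(usage), price_per_tb_month=price, months=12)
metered = pay_as_you_go_cost(usage, price)

print(f"provisioned for peak: ${upfront:,.0f}")
print(f"pay as you go:        ${metered:,.0f}")
```

In a real comparison the two unit prices would differ, and the on-premise side would also carry maintenance, power, and staff costs, so treat this only as a way to see where the gap between “estimated” and “needed” comes from.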

There are also huge benefits in trading the CAPEX model for the OPEX model. There is no more long-term commitment. When you’re finished with a resource, you send it back to the provider. When you’re done using your S3 storage, you turn it off and hand it back to Amazon.

You also gain freedom from having to make a significant long-term investment in equipment that you and I know will eventually break down or become outdated. You also get a reliable infrastructure. We’re talking about S3 and its 11 nines of durability, and EC2, which gives you four nines of availability for your multi-AZ deployments. You have functions like disaster recovery, ease of use, and management, and you’re using best-in-class security to protect your data.
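For a feel of what those nines mean in practice, here is a small back-of-the-envelope sketch. The function names are ours, and it assumes the simplest reading of the figures: availability as the fraction of a year a service is up, and durability as the annual chance of losing any single object.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600, ignoring leap years


def downtime_minutes_per_year(nines: int) -> float:
    """Minutes of downtime per year allowed by an availability of `nines` nines."""
    return 10 ** -nines * MINUTES_PER_YEAR


def expected_objects_lost_per_year(object_count: int, nines: int) -> float:
    """Expected objects lost per year at a durability of `nines` nines."""
    return object_count * 10 ** -nines


# Four nines of availability (99.99%).
print(f"{downtime_minutes_per_year(4):.2f} minutes of downtime per year")

# Eleven nines of durability across ten million stored objects.
print(f"{expected_objects_lost_per_year(10_000_000, 11):.4f} objects lost per year")
```

Under those assumptions, four nines works out to a little under an hour of downtime a year, and eleven nines across ten million objects works out to roughly one lost object every 10,000 years.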

If you’re currently using an on-premises NAS system and it’s coming up for maintenance renewal, what do you intend to do?

- Are you going to do an in-place upgrade, where you keep your existing hardware but update the software?

- Are you going to do a forklift upgrade, where you buy new hardware and software?

- Are you going to move to a hyper-converged system?

- Or are you considering the public cloud, whether it’s AWS or another option?

I think most of you are intending to move to the public cloud, whether it’s AWS or others. It looks like a lot of you are also interested in in-place NAS and SAN upgrades, which is interesting.

A lot of you are also considering moving to hyper-converged. For those of you who answered “other,” we would be curious to learn more about what your plans are. You’re more than welcome to write what you’re intending to do in the questions pane.

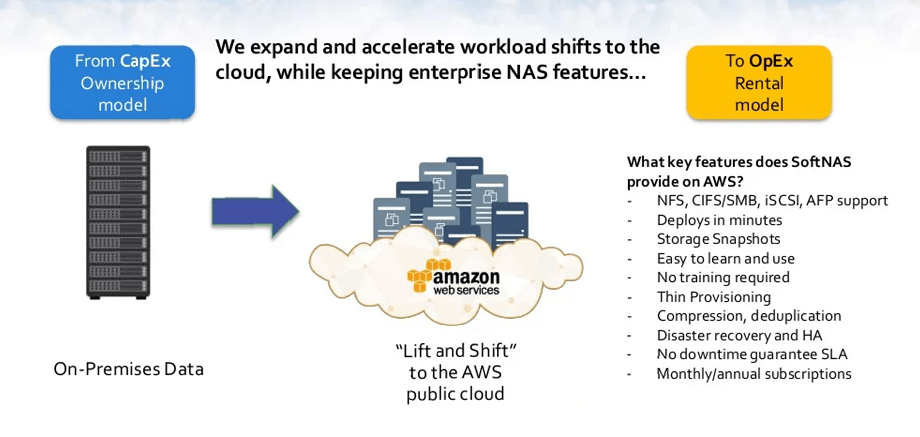

Lift and Shift Data Migration while keeping enterprise NAS features

Lifting and shifting to the cloud can be done in multiple ways. At petabyte scale, you can import your data using AWS Snowball. You can connect directly using AWS Direct Connect, or you can use open-source tools or other programs like rsync, lsyncd, and Robocopy.

Once your data is in the cloud, the question is how you’re going to maintain the same enterprise-level experience that you’re used to. With SoftNAS Cloud NAS, you have that ability. We offer a no-downtime SLA, and we’re the only company that gives that guarantee.
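Which route makes sense depends largely on how long the data would take to move over the wire. Here is a rough sketch of that estimate. The function name, the link speeds, and the 80% sustained-utilization figure are assumptions for illustration; real throughput depends on protocol overhead, file sizes, and what else shares the link.

```python
def transfer_days(data_tb: float, link_gbps: float, utilization: float = 0.8) -> float:
    """Days needed to push `data_tb` decimal terabytes over a `link_gbps` link.

    `utilization` is the assumed fraction of the link's rated speed that the
    transfer actually sustains.
    """
    if not 0 < utilization <= 1:
        raise ValueError("utilization must be in (0, 1]")
    bits = data_tb * 1e12 * 8                       # terabytes -> bits
    seconds = bits / (link_gbps * 1e9 * utilization)
    return seconds / 86_400                         # seconds -> days


# One petabyte (1,000 TB) over two hypothetical link speeds.
for gbps in (1, 10):
    print(f"1 PB over {gbps} Gbps: about {transfer_days(1000, gbps):.0f} days")
```

When the estimate runs into months, shipping the data on a physical appliance starts to look attractive; when it runs into days, a network copy with a tool like rsync is usually simpler.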

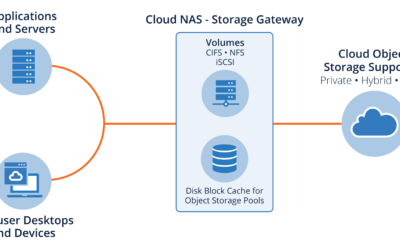

SoftNAS allows you to lift and shift while maintaining the same enterprise level of service and experience that you are used to. We are the only company that gives a no-downtime guarantee SLA. We will give you the same enterprise feel in the cloud that you are used to on-premise. Whether that means serving your data over NFS, CIFS, or SMB for the apps that need it, we can do that.

We deploy within minutes. We give you the ability, as we demonstrated, to take storage snapshots. The GUI is easy to learn and easy to use, with no training required. You don’t need to send your teams off for training to be able to use the SoftNAS software.

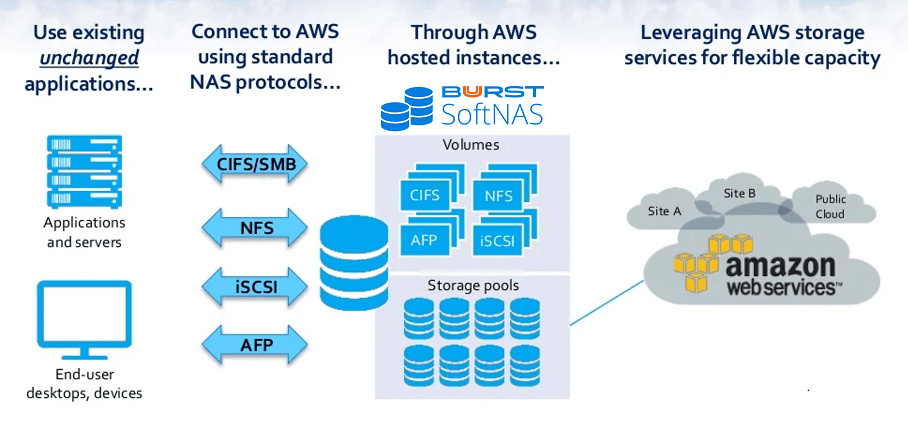

We allow you to use the standard protocols. We leverage AWS’s storage elasticity. SoftNAS enables the existing applications to migrate unchanged. Our software provides all the Enterprise NAS capabilities — whether it’s CIFS, NFS, or iSCSI — and it allows you to make the move to the cloud and preserve your budget for the innovation and adaptations that translate to improved business outcomes.

SoftNAS can also run on-premise via a virtual machine and create a virtual NAS from a storage system and connect to AWS for cloud-hosted storage.

What storage workloads do you intend to move to the AWS Cloud?

For those of you who are interested in moving to AWS, is it going to be NFS, CIFS, iSCSI, or AFP, or are you not intending to move to AWS at all? It looks like over 40% of you are intending to move NFS workloads to the AWS Cloud, which is consistent with what we’ve seen.

Interest in CIFS and iSCSI is fairly balanced as well. A couple of you just have no interest in moving to the AWS Cloud. For those of you who don’t, again, please let us know why in the questions pane.

Easily Migrate Existing Applications to AWS Cloud

SoftNAS in a nutshell is an enterprise NAS filer that runs as a Linux appliance backed by ZFS, in the cloud or on-premise.

We have a robust API, CLI, and cloud base that integrate with AWS S3, EBS, on-premises storage, and VMware. This allows us to provide data services like block replication, which allows you to access cloud disks. We give you storage enhancements such as compression, in-line deduplication, and multi-level caching, along with the ability to produce writable snap clones and encrypt your data at rest or in flight.
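If deduplication is a new idea, the general technique can be sketched in a few lines. This is a generic illustration of fixed-size block deduplication using content hashes; it is not how SoftNAS or ZFS implements it, and the function names and the 4 KB block size are assumptions.

```python
import hashlib
from typing import Dict, List, Tuple


def dedup_blocks(data: bytes, block_size: int = 4096) -> Tuple[Dict[str, bytes], List[str]]:
    """Split data into fixed-size blocks and keep each unique block only once."""
    store: Dict[str, bytes] = {}   # digest -> block contents, stored once
    recipe: List[str] = []         # ordered digests needed to rebuild the data
    for offset in range(0, len(data), block_size):
        block = data[offset:offset + block_size]
        digest = hashlib.sha256(block).hexdigest()
        store.setdefault(digest, block)   # only the first copy is kept
        recipe.append(digest)
    return store, recipe


def rebuild(store: Dict[str, bytes], recipe: List[str]) -> bytes:
    """Reassemble the original data from the block store and the recipe."""
    return b"".join(store[digest] for digest in recipe)


# Three identical blocks plus one different block: four written, two stored.
payload = b"A" * 4096 * 3 + b"B" * 4096
store, recipe = dedup_blocks(payload)
print(f"blocks written: {len(recipe)}, blocks stored: {len(store)}")
```

The savings depend entirely on how repetitive the data is, which is why deduplication tends to pay off for things like VM images and backups.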

We continue to deliver best-of-breed services by working with our industry-leading partners. You might know some of them: Amazon, Microsoft, and VMware. We continue to partner with them to enhance both our offerings and theirs.

We recommend that you have your technical leads try out SoftNAS Virtual NAS Appliance. Tell them to visit our website and they’ll be able to go and try out SoftNAS.

For some of the more technical people in our audience, we invite you to go to our AWS page where you can learn a little bit more about the details of how SoftNAS AWS NAS works.

Why choose SoftNAS over the AWS storage points?

From a SoftNAS standpoint, we give you the ability to encrypt your data. As we said in the webinar, we’re the only appliance that gives a no-downtime SLA. We stand by that SLA because we have designed our software to address and take care of your storage needs. We can also connect to blob storage, and we are available on platforms other than AWS, including CenturyLink and Azure, among others.

Say you have an application that you need to move to the cloud, but rewriting it to support S3 or any kind of block storage is going to take you six months to a year. We give you the ability to migrate that data by setting up an NFS or CIFS share, whichever that application already uses in your enterprise.