Type of AWS Storage for your Use-Case: Object Storage

When choosing data storage, what do you look for?

AWS offers several storage types and options, each better suited to some purposes than others. For instance, if your business only needs to retain data for compliance and rarely accesses it, an Amazon S3 archival storage class such as S3 Glacier is a good bet. For enterprise applications, Amazon EBS SSD-backed volumes offer a Provisioned IOPS option to meet performance requirements.

Then there is cost. Savings usually come at the price of performance. However, the range of storage options on the AWS platform means there is usually a type that strikes the balance of performance and cost your business needs.

In this series of posts, we look at the defining features of each AWS storage type. By the end, you should be able to tell which type of AWS storage is the right fit for your business's storage requirements. This post focuses on the features of AWS object storage, that is, Amazon S3. You may also want to read our post that explains AWS block storage.

Amazon S3 storage

Amazon S3 object storage is designed to be the most durable storage layer, with all offerings stated to provide 99.999999999% (11 nines) durability of objects over a given year. This durability equates to an average annual expected loss of 0.000000001% of objects. Said more practically, if you store 10,000,000 objects, you can expect to lose a single object once every 10,000 years on average. Given the advantages that object storage brings to the table, why wouldn't you want to use it for every scenario that involves data? This is a question put to solution architects at SoftNAS almost every day. Object storage excels in durability, but its design makes it a poor fit for some use cases.
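
The durability arithmetic can be checked with a few lines of Python. This is only a sketch of the calculation: the function names are made up for illustration, and the 10,000,000-object count is the figure commonly used to illustrate 11 nines, not a limit of the service.

```python
from fractions import Fraction

# Designed durability quoted in the text: 11 nines per object per year.
DURABILITY = Fraction(99_999_999_999, 100_000_000_000)
ANNUAL_LOSS_RATE = 1 - DURABILITY  # 0.000000001% expressed as a fraction


def expected_annual_loss(object_count: int) -> Fraction:
    """Average number of objects expected to be lost per year."""
    return object_count * ANNUAL_LOSS_RATE


def years_per_lost_object(object_count: int) -> Fraction:
    """Average number of years between single-object losses."""
    return 1 / expected_annual_loss(object_count)


if __name__ == "__main__":
    stored = 10_000_000  # illustrative object count
    print("objects lost per year:", float(expected_annual_loss(stored)))
    print("years per lost object:", int(years_per_lost_object(stored)))
```

Exact fractions are used instead of floats so that the eleven nines are not blurred by rounding.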

Before adopting AWS object storage, you need answers to the following questions:

1. What is the data life cycle?

2. How frequently is the data accessed?

3. How latency-sensitive is your application?

4. What are the service limitations?

The hallmark of S3 storage has always been high throughput, but with high latency. AWS has since refined its offering by adding several S3 storage classes that address different needs. These include:

- S3 Standard

- S3 Intelligent-Tiering

- S3 Standard-IA (Infrequent Access)

- S3 One Zone-IA

- S3 Glacier

- S3 Glacier Deep Archive

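These classes are listed roughly in order of decreasing storage cost per GB. The first four return data within milliseconds, while the two Glacier classes trade retrieval times of minutes to hours for the lowest storage prices.

Across all of these S3 storage classes, access time therefore ranges from milliseconds to hours.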
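
As a rough illustration of how access frequency and latency tolerance narrow the choice, the selection can be sketched as a small decision function. Everything here is an assumption made for the example: the `Workload` fields, the cut-off values, and the order of the checks are hypothetical and are not AWS guidance.

```python
from dataclasses import dataclass

HOUR = 3600.0

# Hypothetical cut-offs for illustration only; tune them to your own workload
# and to current AWS pricing and retrieval times.
FREQUENT_READS = 1.0            # reads per object per month
GLACIER_WAIT = 5 * HOUR         # tolerated delay that makes S3 Glacier viable
DEEP_ARCHIVE_WAIT = 12 * HOUR   # tolerated delay that makes Deep Archive viable


@dataclass
class Workload:
    reads_per_month: float       # how often a typical object is read
    max_wait_seconds: float      # longest retrieval delay the application tolerates
    pattern_known: bool = True   # False if access frequency is unpredictable
    reproducible: bool = False   # True if the data can be recreated after a loss


def suggest_storage_class(w: Workload) -> str:
    """Return one of the storage class names listed in this post."""
    rarely_read = w.reads_per_month < FREQUENT_READS
    if rarely_read and w.max_wait_seconds >= DEEP_ARCHIVE_WAIT:
        return "S3 Glacier Deep Archive"
    if rarely_read and w.max_wait_seconds >= GLACIER_WAIT:
        return "S3 Glacier"
    if not w.pattern_known:
        return "S3 Intelligent-Tiering"
    if not rarely_read:
        return "S3 Standard"
    # Infrequently read, but still needed within milliseconds.
    return "S3 One Zone-IA" if w.reproducible else "S3 Standard-IA"


if __name__ == "__main__":
    print(suggest_storage_class(Workload(reads_per_month=0.01, max_wait_seconds=24 * HOUR)))
    print(suggest_storage_class(Workload(reads_per_month=30, max_wait_seconds=0.1)))
```

A real decision would also weigh minimum storage durations and retrieval fees, which this sketch ignores.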

How frequently you need to access your data dictates which S3 storage class you should choose for your backend. The cost of each S3 class is determined not only by the amount of data stored but also by how that data is accessed. Billing incorporates storage used, network data transferred out, data retrieval, and the number of requests (for example PUT and GET); data transferred in from the internet is generally not charged. Workloads with random read and write operations, or with low-latency and high-IOPS requirements, are not suitable for S3 storage. Workloads that are not latency-sensitive and require high throughput are good candidates.
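
To see how access patterns change the bill, the billing components above can be combined into a simple monthly estimate. This is a minimal sketch: the `PriceSheet` and `MonthlyUsage` types are invented for the example, and every price in it is a placeholder rather than an AWS list price. Inbound transfer is left out because AWS does not generally charge for data transferred in from the internet.

```python
from dataclasses import dataclass


@dataclass
class PriceSheet:
    """Per-unit prices in USD for one storage class (placeholder values only)."""
    storage_per_gb_month: float
    retrieval_per_gb: float
    transfer_out_per_gb: float
    per_1000_put: float
    per_1000_get: float


@dataclass
class MonthlyUsage:
    stored_gb: float
    retrieved_gb: float
    transferred_out_gb: float
    put_requests: int
    get_requests: int


def monthly_cost(p: PriceSheet, u: MonthlyUsage) -> float:
    """Sum the storage, retrieval, transfer-out, and request charges."""
    return (
        u.stored_gb * p.storage_per_gb_month
        + u.retrieved_gb * p.retrieval_per_gb
        + u.transferred_out_gb * p.transfer_out_per_gb
        + u.put_requests / 1000 * p.per_1000_put
        + u.get_requests / 1000 * p.per_1000_get
    )


if __name__ == "__main__":
    # Hypothetical price sheets: "cool" stores cheaply but charges for retrieval.
    hot = PriceSheet(0.02, 0.0, 0.09, 0.005, 0.0004)
    cool = PriceSheet(0.01, 0.01, 0.09, 0.01, 0.001)
    rarely_read = MonthlyUsage(1000, 10, 10, 1000, 10_000)
    heavily_read = MonthlyUsage(1000, 5000, 10, 1000, 10_000_000)
    for name, usage in [("rarely read", rarely_read), ("heavily read", heavily_read)]:
        print(name, round(monthly_cost(hot, usage), 2), round(monthly_cost(cool, usage), 2))
```

With these placeholder prices, the cheaper-per-GB class wins when data is rarely read and loses once retrieval and request charges dominate, which is why access frequency should drive the choice.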

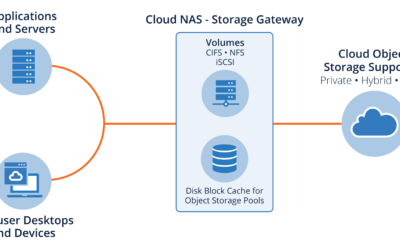

AWS S3 is object storage, so remember that all data is stored as objects in their native format, without the hierarchy that a file system provides. In exchange, objects may be stored across several machines and can be accessed from anywhere.
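
The flat namespace can be illustrated without touching AWS at all. The sketch below models a bucket as a plain dictionary of keys and mimics the way a listing with a prefix and a delimiter makes keys look like folders. The `list_keys` helper and the sample keys are hypothetical; the real service exposes comparable prefix and delimiter parameters on its list operations.

```python
def list_keys(keys, prefix="", delimiter="/"):
    """Mimic an S3-style listing: return (objects, common_prefixes) under a prefix."""
    objects, common = [], set()
    for key in sorted(keys):
        if not key.startswith(prefix):
            continue
        rest = key[len(prefix):]
        if delimiter and delimiter in rest:
            # Keys sharing the next path-like segment are rolled up into one
            # entry, which is what a console shows as a "folder".
            common.add(prefix + rest.split(delimiter, 1)[0] + delimiter)
        else:
            objects.append(key)
    return objects, sorted(common)


if __name__ == "__main__":
    # A bucket is a flat mapping of key -> object; the slashes are just characters.
    bucket = {
        "index.html": b"<html></html>",
        "logs/readme.txt": b"notes",
        "logs/2023/app.log": b"old",
        "logs/2024/app.log": b"new",
    }
    print(list_keys(bucket))
    print(list_keys(bucket, prefix="logs/"))
```

Nothing in the dictionary is nested: the apparent directories exist only in how the keys are filtered and grouped at listing time.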

Read our post all about block storage; it also includes tips on designing your AWS storage for optimum performance.

Need More Help or Information?

Even with all the above information, identifying the right data storage type and instance sizes, and setting up custom architectures to suit your business performance requirements, can be tricky. SoftNAS has assisted thousands of businesses with their AWS VPC configurations, and our in-house experts are available to answer queries and provide guidance free of charge.